We recently hosted a mathematics lecture titled “Probabilistic Parrots and Bitter Lessons: A Tale of Machines and Language.” Shavindra Jayasekera is an Old Wilsonian (class of 2018) and is currently a third year PhD student at Imperial College London, specializing in statistics and machine learning. During his talk, Shavindra considered important questions about artificial intelligence, including how machine learning works, whether machines can match human performance, and how large-scale language models (LLMs) work through tokenization.

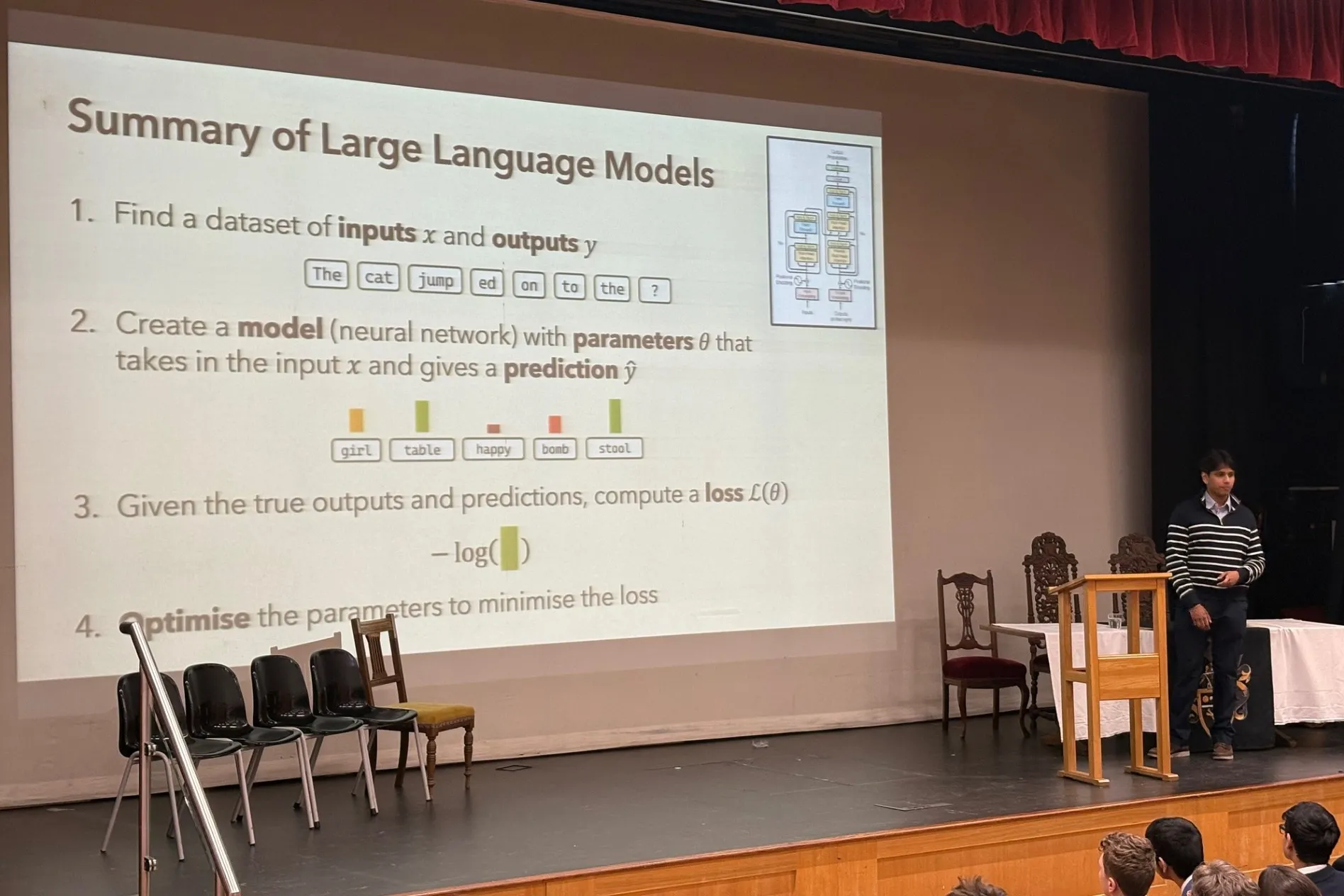

The lecture introduced the concept of deep neural networks, which are made up of multiple layers that data passes through, and each layer performs its own calculations. At every layer, the network has a number of parameters called weights and biases that can be adjusted to make the model better fit the data. By fine-tuning these parameters, the AI system learns to recognize and reproduce patterns and trends in the data used for training. Shavindra explained that applying functions to matrices to adjust these weights and biases allows the model to capture complex relationships and improve predictions over time.

To make these ideas more concrete, Shavindra shared an example of an early language translation algorithm. This case study highlighted both the strengths and limitations of AI and showed that when trained on enough data and when that data is used effectively, AI can outperform humans on certain tasks.

The lecture then moved on to large-scale language models and the role of tokenization. Shavindra explained that sentences are divided into words or subwords and each segment is assigned a unique token. The model then predicts which tokens are most likely to come next in the response based on the patterns learned from the training data and matches them to the tokens in the prompt. To add an element of randomness and creativity to the output, these probabilities are expressed on a logarithmic scale, allowing you to introduce some variation into the model while still favoring the most likely responses. This process of analyzing probabilities and specific words allows the LLM to give the impression that it understands what is being said and is able to generate appropriate responses.

Overall, the lecture was engaging and thought-provoking, introducing students to the mathematical complexity of AI algorithms and providing insight into recent developments in the field. Shavindra’s examples and detailed explanations make this topic more understandable and give us a deeper understanding of how the AI around us works. The session sparked curiosity and discussion, and students gained a new appreciation for the role of mathematics in technology. We hope Shavindra enjoyed the session as much as we did and will come back in the future.

Article written by Soham (12th year)