Testing deep learning models has become a major challenge as these systems are increasingly integrated into critical applications. Researchers Xingcheng Chen, Oliver Weissl, and Andrea Stocco, of the Technical University of Munich and Fortiss GmbH, are tackling this problem with Detect, a new feature-aware test generation framework. Unlike existing methods, which often lack semantic understanding or precise control, Detect systematically generates inputs by manipulating disentangled semantic attributes within a generative model's latent space, effectively probing what the model under test has learned. This allows Detect to pinpoint the specific features driving the model's behavior, uncovering both generalization strengths and hidden vulnerabilities, including bugs that standard accuracy metrics miss. Their findings, demonstrated on image classification and detection tasks, show that Detect outperforms the current state of the art and expose differences in the cues that fully fine-tuned convolutional models and weakly supervised models rely on, paving the way for more reliable deep learning systems.

Delivering robust AI testing with latent space perturbations

Scientists have developed Detect, a new feature-aware test generation framework designed to rigorously assess the quality of deep learning models before deployment. It addresses a critical gap in current testing methods, which often lack semantic insight into failures and fine-grained control over generated inputs. The research team systematically generates inputs by subtly perturbing disentangled semantic attributes within the latent space of a generative model, allowing precise control over which feature is manipulated when testing a vision-based deep learning model. Detect does more than identify faults: it pinpoints the specific features causing those failures, an important step toward more reliable artificial intelligence systems.
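To make the idea of latent perturbation concrete, here is a minimal Python sketch, assuming a latent code is a NumPy vector whose coordinates each control one semantic attribute. The `generate` function is a hypothetical stand-in (a fixed random linear map followed by `tanh`) for a style-based generator such as StyleGAN; it is not itself disentangled and only illustrates the data flow. `perturb_feature` is likewise a hypothetical helper, not Detect's API.

```python
import numpy as np


def perturb_feature(w: np.ndarray, dim: int, delta: float) -> np.ndarray:
    """Return a copy of latent code `w` with only coordinate `dim` shifted by `delta`."""
    w_new = w.copy()
    w_new[dim] += delta
    return w_new


# Hypothetical stand-in for a style-based generator: a fixed random linear map
# from a 4-D latent code to a flattened 8-pixel "image", squashed by tanh.
rng = np.random.default_rng(0)
G = rng.normal(size=(8, 4))


def generate(w: np.ndarray) -> np.ndarray:
    return np.tanh(G @ w)


w = rng.normal(size=4)                         # original latent code
w_test = perturb_feature(w, dim=2, delta=1.5)  # edit a single feature
x, x_test = generate(w), generate(w_test)      # original and test inputs
```

Only coordinate 2 of the latent code changes; with a real disentangled generator, that edit would ideally alter a single attribute of the generated image while leaving the rest intact.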

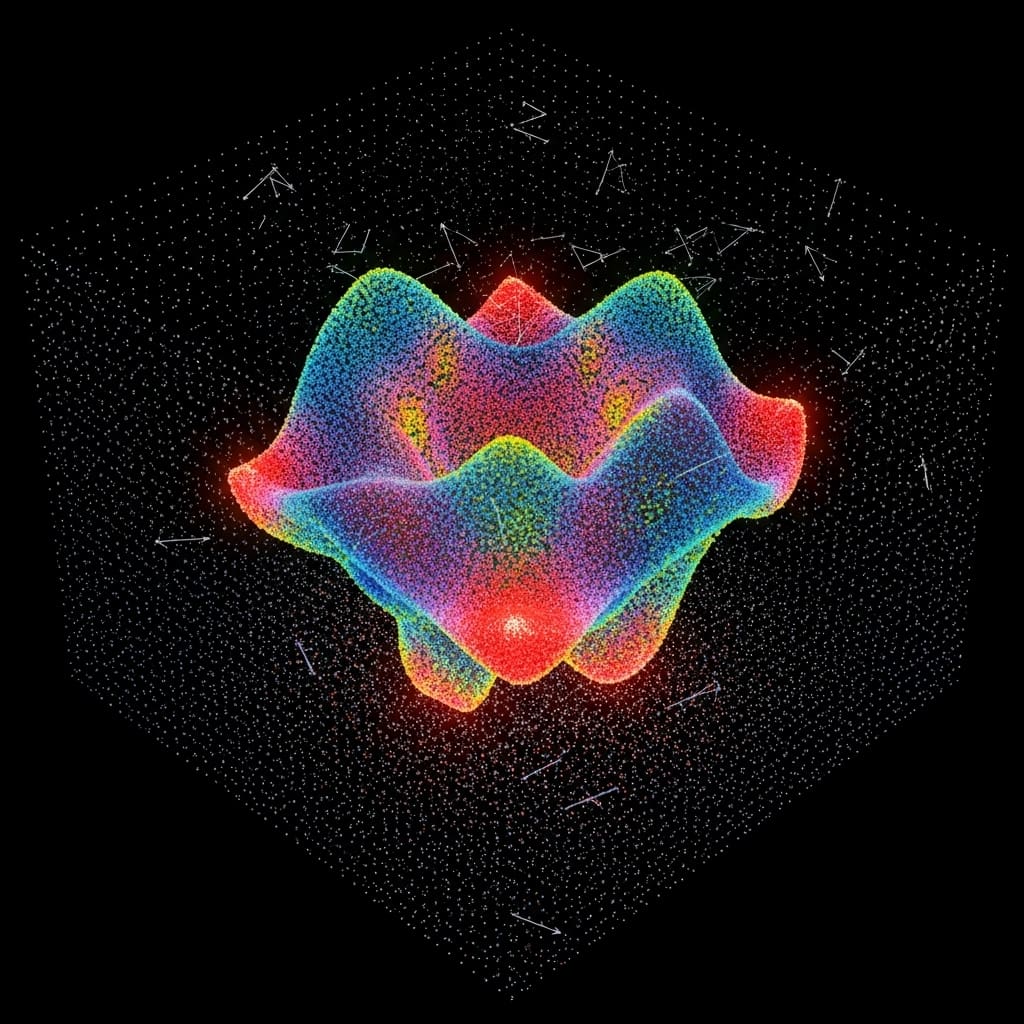

The core of Detect lies in its ability to perturb individual latent features in a controlled manner and observe the resulting effects on the model's output. By modifying these features individually, the framework identifies those that cause behavioral changes and employs vision-language models to provide semantic attribution, linking input changes to model responses. The perturbations are targeted to distinguish task-relevant from task-irrelevant features and address both generalization (the model's ability to handle unseen but valid inputs) and robustness against spurious correlations. Empirical results on image classification and detection tasks show that Detect generates high-quality test cases with fine-grained control and reveals distinct shortcut behaviors across model architectures.
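The perturb-and-observe loop can be sketched as a per-dimension sensitivity scan. This is a simplified illustration under stated assumptions, not the authors' algorithm: each latent dimension is shifted by ±`delta` while the others stay fixed, and the largest absolute change in the classifier's logits is recorded. The identity generator and linear classifier below are hypothetical toys; in Detect, the generator would be StyleGAN and the classifier the system under test, and semantic labels for the top-ranked dimensions would come from a vision-language model (not shown).

```python
import numpy as np


def feature_sensitivity(w, generate, classify, delta=1.0):
    """Score each latent dimension by the largest absolute logit change it
    causes when shifted by +/- delta, with all other dimensions held fixed."""
    base = classify(generate(w))
    scores = np.zeros(len(w))
    for d in range(len(w)):
        for sign in (-1.0, 1.0):
            w_p = w.copy()
            w_p[d] += sign * delta
            shift = np.abs(classify(generate(w_p)) - base).max()
            scores[d] = max(scores[d], shift)
    return scores


# Hypothetical toy system under test: an identity "generator" and a linear
# 3-class classifier that only reads latent dimensions 0 and 1.
W_CLS = np.array([[2.0, 0.0, 0.0, 0.0],
                  [0.0, 1.0, 0.0, 0.0],
                  [0.0, 0.0, 0.0, 0.0]])


def generate(w):
    return w


def classify(x):
    return W_CLS @ x


scores = feature_sensitivity(np.zeros(4), generate, classify)  # [2.0, 1.0, 0.0, 0.0]
ranking = np.argsort(scores)[::-1]  # most influential dimension first
```

Dimensions 2 and 3 never affect the logits, so a scan like this would leave them out of further analysis, while dimensions 0 and 1 would be passed on for semantic attribution.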

Specifically, Detect outperforms state-of-the-art test generators in mapping decision boundaries and outperforms leading spurious feature localization methods in identifying robustness failures. The study reveals that fully fine-tuned convolutional models tend to overfit to local cues, such as co-occurring visual features, while weakly supervised transformer models tend to rely on more global features, such as environmental differences. These findings highlight the importance of interpretable, feature-aware testing for increasing confidence in deep learning models, going beyond simple accuracy metrics to understand how models reach their conclusions. The study establishes a new paradigm for testing deep learning systems, coupling input synthesis with feature diagnosis through semantically controlled perturbations in latent space.

Detect leverages style-based generative models to access a disentangled latent space, allowing precise manipulation of individual semantic features and assessment of both generalization and robustness. The framework incorporates a feature-aware test oracle that uses logit-based criteria to quantify the model's response to perturbations, flag robustness failures, and test generalization. As a result, Detect not only uncovers hidden vulnerabilities but also explains them by identifying the features responsible.
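A logit-based oracle of this kind might look like the following sketch. The specific criterion is an assumption for illustration: it uses the logit margin (true-class logit minus the largest competing logit) and flags a robustness failure for a perturbation of a task-irrelevant feature when the predicted class flips or the margin shrinks by more than a hypothetical threshold `tau`. The exact criteria Detect uses, and its generalization check, may differ.

```python
import numpy as np


def margin(logits: np.ndarray, label: int) -> float:
    """Logit margin: true-class logit minus the largest competing logit."""
    others = np.delete(logits, label)
    return float(logits[label] - others.max())


def robustness_oracle(orig_logits, pert_logits, label, tau=1.0):
    """Judge a perturbation of a task-irrelevant feature.

    Returns (failed, margin_drop). `failed` is True if the predicted class
    flips or the margin shrinks by more than the (hypothetical) threshold tau.
    """
    flipped = int(np.argmax(pert_logits)) != int(np.argmax(orig_logits))
    drop = margin(orig_logits, label) - margin(pert_logits, label)
    failed = flipped or drop > tau
    return failed, drop


orig = np.array([3.0, 1.0, 0.5])
pert_ok = np.array([2.8, 1.1, 0.4])      # small margin change: passes
pert_shrink = np.array([2.0, 1.8, 0.0])  # same class, margin collapses: fails
pert_flip = np.array([1.2, 1.5, 0.3])    # predicted class flips: fails

results = {name: robustness_oracle(orig, p, label=0)
           for name, p in [("ok", pert_ok), ("shrink", pert_shrink), ("flip", pert_flip)]}
```

Using the margin rather than only the argmax lets the oracle catch the `pert_shrink` case, where the label survives but the model's confidence in it has largely collapsed after an irrelevant edit.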

Experiments on image classification and detection tasks demonstrate that Detect generates high-quality test cases with fine-grained control and reveals clear shortcut behaviors in both convolutional and transformer-based architectures. Detect outperforms state-of-the-art test generators at finding decision boundaries, and leading spurious feature localization methods at identifying robustness failures. The measurements confirm that fully fine-tuned convolutional models tend to overfit to local cues such as co-occurring visual features, while weakly supervised transformers tend to rely on global features such as environmental variations. Because its perturbations act on individual semantic features in the latent space, Detect supports a detailed evaluation of model behavior.
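One common way to locate a decision boundary along a single latent direction is bisection; the sketch below uses it as an assumed stand-in, since the section does not specify Detect's search procedure. It assumes the predicted label changes at most once along the segment, and `predict` is a hypothetical toy classifier whose boundary lies at 2.5 on latent dimension 0.

```python
import numpy as np


def find_boundary(w, direction, predict, max_step=10.0, tol=1e-4):
    """Bisect along w + t * direction for the step t in [0, max_step] where the
    predicted label changes. Assumes at most one label change on the segment;
    returns None if the label at max_step equals the starting label."""
    label0 = predict(w)
    if predict(w + max_step * direction) == label0:
        return None
    lo, hi = 0.0, max_step  # label0 holds at lo, a different label at hi
    while hi - lo > tol:
        mid = 0.5 * (lo + hi)
        if predict(w + mid * direction) == label0:
            lo = mid
        else:
            hi = mid
    return hi  # smallest sampled step (within tol) yielding a different label


def predict(w):
    # Hypothetical toy classifier: label 1 once latent dimension 0 exceeds 2.5.
    return int(w[0] > 2.5)


w0 = np.zeros(3)
direction = np.array([1.0, 0.0, 0.0])
t_star = find_boundary(w0, direction, predict)
```

The latent codes `w0 + lo * direction` and `w0 + hi * direction` from the final iteration form a pair of test inputs that straddle the decision boundary within `tol`.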

The results show that Detect uncovers bugs not captured by standard accuracy metrics, highlighting the value of interpretable, feature-aware testing for improving the reliability of DL models. The authors use a logit-based criterion to measure the model's response to controlled perturbations, flagging a robustness failure when the prediction changes significantly after a semantically unrelated feature is modified. Detect's architecture consists of a StyleGAN generator and the system under test, and it operates across three spaces: the latent space, where perturbations are applied; the image space, where test inputs are synthesized; and the output space, where the model's predictions are observed. This design allows flexible application across model types and data domains, and makes Detect an effective general-purpose test generator for fine-grained tasks.

This work provides a way to systematically test the behavior of DNNs under semantically controlled perturbations, addressing both generalization and robustness through feature-aware modifications. The experiments show that Detect's ability to distinguish relevant from irrelevant features gives a deeper understanding of model vulnerabilities and strengths. Its performance in finding decision boundaries and locating spurious features represents a significant advance in DL model testing and paves the way for more reliable AI systems.
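The relevant/irrelevant distinction can be combined with sensitivity scores to localize candidate spurious features, as in this hedged sketch. The feature names, sensitivity values, relevance labels, and `threshold` are all hypothetical; in Detect, semantic labels are attributed with vision-language models, whereas here they are hard-coded. A feature is reported when the model reacts strongly to it even though it is marked as irrelevant to the task.

```python
def localize_spurious(sensitivity: dict[str, float],
                      relevance: dict[str, bool],
                      threshold: float = 0.5) -> list[str]:
    """Return features the model is sensitive to even though they are labeled
    task-irrelevant (candidate spurious cues), most sensitive first."""
    spurious = [f for f, s in sensitivity.items()
                if not relevance[f] and s > threshold]
    return sorted(spurious, key=lambda f: sensitivity[f], reverse=True)


# Hypothetical scores for a "cat" classifier: logit sensitivity per feature and
# whether each feature is relevant to the task (hard-coded here).
sensitivity = {"ear_shape": 1.8, "fur_texture": 2.1,
               "background_grass": 1.4, "lighting": 0.2}
relevance = {"ear_shape": True, "fur_texture": True,
             "background_grass": False, "lighting": False}

spurious = localize_spurious(sensitivity, relevance)  # ['background_grass']
```

Here `background_grass` is the only irrelevant feature above the threshold, the kind of environmental cue the study associates with shortcut behavior; `lighting` is also irrelevant but barely moves the logits, so it is not reported.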

Detect reveals overfitting through latent space perturbations

Scientists have developed Detect, a novel feature-aware test generation framework that evaluates deep learning models by systematically perturbing disentangled semantic attributes in latent space. The approach enables controlled input generation, identifies the features that cause changes in model behavior, and uses vision-language models for semantic attribution. The researchers demonstrated that Detect outperforms existing state-of-the-art test generators in decision boundary detection and leading spurious feature localization methods in identifying robustness failures. Empirical results across image classification and detection tasks reveal that fully fine-tuned convolutional models are prone to overfitting on local cues, whereas weakly supervised models tend to rely on broader, global features such as environmental variations.

The authors acknowledge that the framework currently supports a limited range of generative architectures, focusing primarily on StyleGAN. Future work will extend it to other generative models and to modalities such as natural language and speech, broadening its applicability to more deep learning testing scenarios. These findings highlight the importance of interpretable, feature-aware testing in increasing the reliability of deep learning models. By bridging the gap between test generation and spurious feature diagnosis, Detect provides valuable insight into model vulnerabilities and into how different architectures rely on different types of features. This is an important step toward building more robust and reliable artificial intelligence systems.