CondensNet DL architecture and GCM model

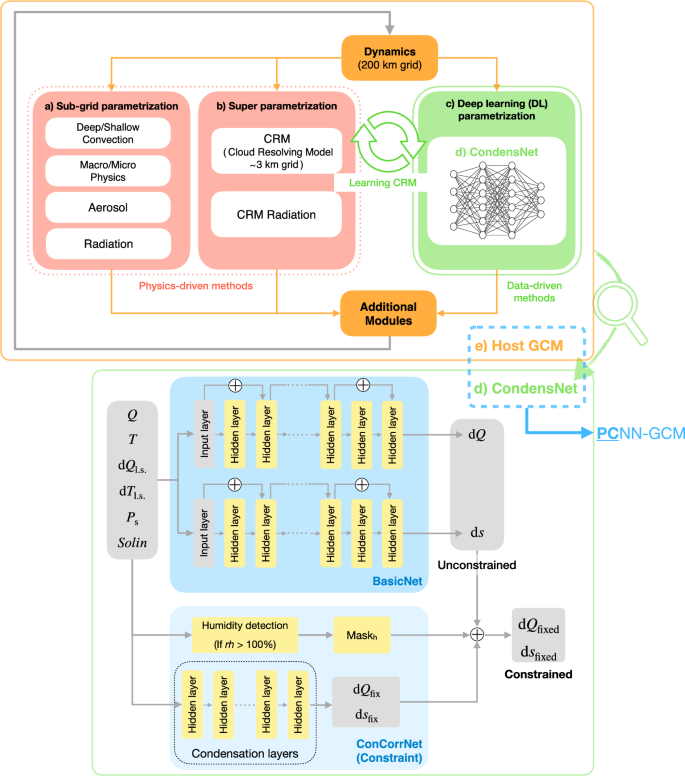

CondensNet (Fig. 5d) is a novel DL parametrization that learns to emulate the high-resolution CRM of SPCAM’s super parametrization8 (Fig. 5b). In SPCAM, the atmospheric dynamics are driven by the Community Atmosphere Model version 5.2 (CAM5.2)41 (Fig. 5e), which runs at a horizontal resolution of 1.9° × 2.5° with 30 vertical pressure levels extending up to approximately 2 hPa and a simulation time step of 30 min. CAM5.2 is further coupled with the Community Land Model version 4.0 (CLM4.0)28 and uses prescribed sea surface temperatures and sea ice concentrations following the AMIP protocol.

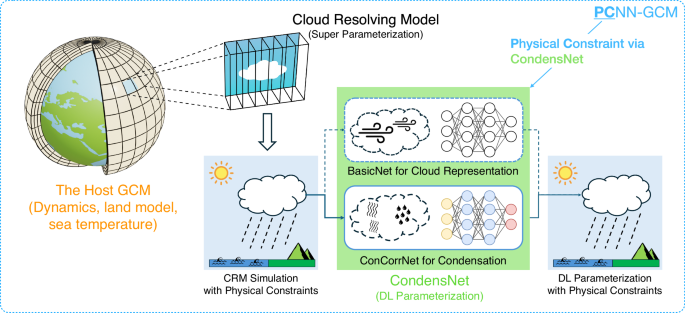

a, b Show the conventional subgrid parametrization and super parametrization approaches, respectively; c highlights the DL parametrization concept; d details the internal architecture of CondensNet; and e is the host GCM. Together, the host GCM and CondensNet form the PCNN-GCM framework.

Traditional GCMs such as CAM represent cloud and convective processes with subgrid parametrizations based on empirical models (Fig. 5a), which can introduce significant uncertainties. GCMs that use super parametrization, such as SPCAM8, mitigate this issue by embedding a high-resolution cloud-resolving model (CRM) within each coarse grid cell (Fig. 5b). In our study, the two-dimensional CRM of SPCAM has 32 grid points in the zonal direction and shares the 30 vertical levels of the host model dynamics driven by CAM5.2. The host GCM comprises all model components except the parametrizations, namely the dynamical core, the land model (CLM4.0), and the prescribed sea surface temperatures. Consequently, SPCAM, CAM5.2, and the hybrid modeling framework share identical host GCM components and simulation data-coupling workflows.

The host GCM provides the input variables, including large-scale state variables such as specific humidity Q, temperature T, surface pressure Ps, and top-of-atmosphere solar insolation Solin. In addition, large-scale forcing variables such as the water vapor forcing dQl.s. and the temperature forcing dTl.s. are supplied to further enhance the model’s predictive capability. CondensNet, our DL parametrization, takes these input variables and returns predictions of the water vapor tendency dQ and the dry-static-energy tendency ds at each vertical level, while an independent ResMLP model from ref. 21 predicts the downwelling solar radiation fluxes that drive the coupled land surface model.

The complete list of inputs and outputs is provided in Table 2.

CondensNet consists of two neural networks with different tasks, integrated as depicted in Fig. 5d:

- BasicNet: tasked with predicting the basic tendencies of water vapor (dQ) and dry-static-energy (ds), capturing fundamental cloud physics. Here, we use the ResMLP model from ref. 21 as the basic model to explore the impact of ConCorrNet on stability in a more intuitive and controllable way.
- Condensation Correction Network (ConCorrNet): designed to predict physically constrained corrections to BasicNet’s outputs. It is also a neural network, operating as an independent predictive module that is activated adaptively, only in regions with unphysical atmospheric conditions (mainly oversaturation), to prevent model instabilities.

CondensNet predicts physically constrained tendencies of water vapor (dQ) and dry-static-energy (ds) that comply with the saturation adjustment mechanism, while the prediction of radiative fluxes remains untouched (i.e., not corrected), as depicted in Fig. 5d.

In particular, we identify oversaturated grid points by comparing the prognostic specific humidity (Q) with the saturation specific humidity (Q*). A grid point is flagged for the subsequent adaptive physical constraint if Q > Q*, which is equivalent to a relative humidity exceeding 100%. This yields a humidity mask, Maskh:

$${{\text{Mask}}}_{{\text{h}}}({\text{lon}},\,{\text{lat}},\,{\text{lev}})=\left\{\begin{array}{l}\begin{array}{cc}1, & {\text{if}}\,Q > {Q}^{* }(rh > 100 \% )\end{array}\\ \begin{array}{cc}0, & {\text{otherwise}}\end{array}\end{array}\right.$$

(1)

where lon, lat, and lev are the longitude, latitude, and vertical-level indices of the grid points. The saturation specific humidity (Q*) is the maximum mass of water vapor that a unit mass of moist air can hold at a given temperature (T) and pressure (p). Its exact definition and its common approximation are:

$${Q}^{* }=\frac{\epsilon \cdot {e}^{* }}{p-(1-\epsilon ){e}^{* }}\approx \frac{0.622{e}^{* }}{p}$$

(2)

where p is the local atmospheric pressure, e* is the saturation vapor pressure at temperature T (computed with a formulation such as the Goff-Gratch equation42), and ϵ = Rd/Rv ≈ 0.622 is the ratio of the specific gas constants for dry air (Rd) and water vapor (Rv). The approximation holds because e* ≪ p in the troposphere.
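The mask test of Eq. (1) and the saturation humidity of Eq. (2) can be sketched in a few lines of Python. This is a minimal illustration, not the CondensNet code: it assumes one common form of the Goff-Gratch formula over liquid water (steam-point reference of 373.15 K and 1013.25 hPa), pressures in hPa, and a single hypothetical three-level column; the helper names e_sat_goff_gratch, q_sat, and humidity_mask are hypothetical.

```python
import math

# Constants for one common form of the Goff-Gratch formula over liquid water (assumed).
T_ST = 373.15    # steam-point temperature in K (some references use 373.16 K)
E_ST = 1013.25   # saturation vapour pressure at T_ST in hPa
EPSILON = 0.622  # Rd / Rv


def e_sat_goff_gratch(T):
    """Saturation vapour pressure over liquid water (hPa) for temperature T (K)."""
    r = T_ST / T
    log10_e = (
        -7.90298 * (r - 1.0)
        + 5.02808 * math.log10(r)
        - 1.3816e-7 * (10.0 ** (11.344 * (1.0 - 1.0 / r)) - 1.0)
        + 8.1328e-3 * (10.0 ** (-3.49149 * (r - 1.0)) - 1.0)
        + math.log10(E_ST)
    )
    return 10.0 ** log10_e


def q_sat(T, p, exact=True):
    """Saturation specific humidity Q* (kg/kg) from Eq. (2); p in hPa."""
    e = e_sat_goff_gratch(T)
    if exact:
        return EPSILON * e / (p - (1.0 - EPSILON) * e)
    return EPSILON * e / p  # approximation, valid when e* << p


def humidity_mask(Q, T, p):
    """Eq. (1) for one column: 1 where Q > Q*(T, p), i.e. RH > 100%, else 0."""
    return [1 if q > q_sat(t, pk) else 0 for q, t, pk in zip(Q, T, p)]


# Hypothetical three-level column (near surface to mid-troposphere).
mask = humidity_mask(
    Q=[0.010, 0.012, 0.0005],
    T=[300.0, 280.0, 250.0],
    p=[1000.0, 850.0, 500.0],
)
print(mask)  # [0, 1, 0]: only the 850 hPa level is oversaturated
```

The exact form of Eq. (2) gives a slightly larger Q* than the approximation, by a relative amount of roughly (1 − ϵ)e*/p: around 1% for warm near-surface air and much less aloft.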

We use Maskh to mark the regions where unphysical oversaturation is likely to occur; this marking directs ConCorrNet’s attention to these sensitive regions but does not automatically enforce a correction. Instead, the correction is learned: ConCorrNet consists of two neural networks that predict the corrective tendencies dQfix and dsfix, respectively. This adaptive approach allows the model to learn the required physical corrections for a given atmospheric state directly from the SPCAM labels, enabling it to reproduce the complex condensation physics of the reference simulation.

The correction terms are then applied to the initial BasicNet tendencies through the humidity mask Maskh:

$${\text{d}}{Q}_{{\text{fixed}}}={\text{d}}Q-{{\text{Mask}}}_{\text{h}}\odot {\text{d}}{Q}_{{\text{fix}}}$$

(3)

$${\text{d}}{s}_{{\text{fixed}}}={\text{d}}s+{\text{Mask}}_{{\text{h}}}\odot {\text{d}}{s}_{{\text{fix}}},$$

(4)

where ⊙ denotes element-wise multiplication. Through this mechanism, the physical constraints learned by ConCorrNet are integrated with the predictions from BasicNet. This ensures that the final outputs of the CondensNet model, the tendencies dQfixed and dsfixed, are physically consistent. Following the methodology of Wang et al.21, the surface precipitation rate is then derived by vertically integrating CondensNet’s final prediction for the water vapor tendency, dQfixed. This process provides the necessary moisture source for the land and ocean components of the host GCM, thereby closing the water cycle.
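Eqs. (3) and (4), followed by a column integration that closes the water budget, can be sketched as follows. The sketch rests on stated assumptions: tendencies are plain per-level lists for one column, the correction terms are already given, and the hypothetical precip_rate helper assumes P = −(1/(g ρw)) Σk dQfixed,k Δpk with layer thicknesses Δpk in Pa, neglecting surface evaporation and condensate storage; the exact integration used by Wang et al.21 may differ. All names and the value of g are assumptions.

```python
G = 9.80616     # gravitational acceleration (m s^-2); assumed value
RHO_W = 1000.0  # density of liquid water (kg m^-3)


def apply_correction(dQ, ds, dQ_fix, ds_fix, mask):
    """Eqs. (3)-(4): subtract the dQ correction and add the ds correction where mask == 1."""
    dQ_fixed = [q - m * f for q, f, m in zip(dQ, dQ_fix, mask)]
    ds_fixed = [s + m * f for s, f, m in zip(ds, ds_fix, mask)]
    return dQ_fixed, ds_fixed


def precip_rate(dQ_fixed, dp):
    """Hypothetical column budget: P = -(1 / (g * rho_w)) * sum_k dQ_k * dp_k.

    dQ_fixed in kg/kg/s and layer thicknesses dp in Pa; returns P in m/s.
    Surface evaporation and condensate storage are ignored in this sketch.
    """
    return -sum(q * d for q, d in zip(dQ_fixed, dp)) / (G * RHO_W)


# Toy values: the first level is unmasked, so its correction is ignored.
dQ_fixed, ds_fixed = apply_correction(
    dQ=[1.0, 2.0, 3.0], ds=[1.0, 1.0, 1.0],
    dQ_fix=[10.0, 0.5, 1.0], ds_fix=[5.0, 2.0, 0.25],
    mask=[0, 1, 1],
)
print(dQ_fixed, ds_fixed)  # [1.0, 1.5, 2.0] [1.0, 3.0, 1.25]
```

Under this sign convention, a correction that condenses excess vapor would typically have both dQfix > 0 and dsfix > 0, since condensation removes vapor and releases latent heat.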

To validate the ability of CondensNet, and of its ConCorrNet module in particular, to enforce physical constraints and stabilize simulations, we used six ResMLP models from Wang et al.21 that were recorded as causing unstable simulations. We froze the weights of these unstable ResMLP models, used them as BasicNet, and trained only the ConCorrNet module. This end-to-end training was guided by a single loss function on the final, corrected tendencies (dQfixed, dsfixed); because BasicNet was frozen, the backpropagated gradients updated only ConCorrNet’s parameters. This experimental design isolates and demonstrates the corrective effect of our module (see the six corrected cases in section “Long-term stability”). Further training specifications are provided in the subsection “Dataset and training details”.

Ablation studies presented in Supplementary Information Section C.2 further validate that correcting both dQ and ds is crucial for simulation stability.

Dataset and training details

CondensNet is trained on SPCAM simulation data. The inputs, listed in Table 2, are the specific humidity Q, temperature T, large-scale water vapor tendency dQl.s., and large-scale temperature tendency dTl.s. on 30 vertical levels, together with the single-level surface pressure Ps and incoming solar radiation Solin. The spatial dimensions of the original SPCAM training data are also given in Table 2. The original data were generated with a 30-min time step, the same as CAM5.2. Note that CondensNet is trained in a time-independent manner, with individual samples drawn directly from the SPCAM dataset.

The output variables are the corresponding tendencies of water vapor dQ and dry-static-energy ds at each of the 30 vertical levels, as well as the four radiation fluxes that reach the surface (SOLS, SOLL, SOLSD, and SOLLD).
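As a concrete picture of a single training sample, the per-column input and output vectors can be laid out as below. The ordering of variables and the helper names are assumptions for illustration; only the variable list and the 30 levels come from the text. With four 30-level profiles plus two scalars, each column has 4 × 30 + 2 = 122 input features, and the outputs comprise 2 × 30 = 60 tendencies plus 4 surface fluxes.

```python
N_LEV = 30  # vertical levels shared with CAM5.2

# Assumed feature ordering; Table 2 lists the variables, not this layout.
INPUT_LAYOUT = [("Q", N_LEV), ("T", N_LEV), ("dQ_ls", N_LEV), ("dT_ls", N_LEV),
                ("Ps", 1), ("Solin", 1)]
OUTPUT_LAYOUT = [("dQ", N_LEV), ("ds", N_LEV),
                 ("SOLS", 1), ("SOLL", 1), ("SOLSD", 1), ("SOLLD", 1)]


def feature_slices(layout):
    """Map each variable name to its slice in the flat per-column vector."""
    slices, start = {}, 0
    for name, size in layout:
        slices[name] = slice(start, start + size)
        start += size
    return slices, start


def pack_column(sample, layout):
    """Flatten a dict of per-column variables (lists for profiles, floats for scalars)."""
    vec = []
    for name, size in layout:
        values = sample[name] if size > 1 else [sample[name]]
        if len(values) != size:
            raise ValueError(f"{name}: expected {size} values, got {len(values)}")
        vec.extend(values)
    return vec


in_slices, n_in = feature_slices(INPUT_LAYOUT)
_, n_out = feature_slices(OUTPUT_LAYOUT)
print(n_in, n_out)  # 122 64
```

In the framework described above, the dQ and ds slices would come from BasicNet (corrected by ConCorrNet), whereas the four flux entries come from the independent radiation ResMLP.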

Notably, in CondensNet, following the traditional column-based parametrization design in GCMs, each neural network instance processes a single atmospheric column independently. During training, column samples from different spatial locations are randomly shuffled, as the network only needs to learn the vertical physical processes within individual columns. When coupled to the host GCM, CondensNet instances operate independently on each column and physics time step. The exchange of mass, momentum, and energy between columns is mediated by the model dynamics, represented through the large-scale tendencies (input variables dQl.s. and dTl.s.). This design maintains the intrinsic parallel efficiency of parametrizations while retaining the essential horizontal coupling provided by the dynamics.
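The column-wise sampling described above can be sketched as follows: gridded fields are reshaped into independent vertical columns, and one epoch visits the columns in a random order. This is a minimal sketch assuming a nested (time, lev, lat, lon) layout; to_columns and shuffled_batches are hypothetical helpers, and a real pipeline would operate on arrays rather than Python lists.

```python
import random


def to_columns(field):
    """Turn field[t][k][j][i] (time, lev, lat, lon) into a list of vertical columns.

    Each column is [field[t][k][j][i] for every level k]; columns are ordered by (t, j, i).
    """
    n_t, n_lev = len(field), len(field[0])
    n_lat, n_lon = len(field[0][0]), len(field[0][0][0])
    return [
        [field[t][k][j][i] for k in range(n_lev)]
        for t in range(n_t)
        for j in range(n_lat)
        for i in range(n_lon)
    ]


def shuffled_batches(n_samples, batch_size, seed=0):
    """Yield lists of column indices in a random order (one epoch).

    Index the inputs and the targets with the same indices so they stay paired.
    """
    order = list(range(n_samples))
    random.Random(seed).shuffle(order)
    for start in range(0, n_samples, batch_size):
        yield order[start:start + batch_size]


# Hypothetical field with 2 times, 3 levels, 2 latitudes and 4 longitudes.
field = [[[[1000 * t + 100 * k + 10 * j + i for i in range(4)]
           for j in range(2)] for k in range(3)] for t in range(2)]
columns = to_columns(field)
print(len(columns), columns[0], columns[-1])  # 16 [0, 100, 200] [1013, 1113, 1213]
```

Because shuffled_batches returns indices rather than data, the same batch can index both the input columns and the target columns, keeping them paired.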

The inputs consist of vertical profiles of atmospheric state variables (Q, T), large-scale tendencies (dQl.s., dTl.s.), and surface conditions (Ps, Solin). The outputs include physical tendencies of water vapor (dQ) and dry-static-energy (ds) at each vertical level, along with surface radiation fluxes (summarized in Table 2).

The basic neural network, BasicNet, is a pre-trained residual multilayer perceptron (ResMLP) that predicts the basic tendencies of water vapor (dQ) and dry-static-energy (ds). It consists of two separate ResMLP networks, one predicting dQ and one predicting ds; each has seven residual blocks (14 hidden layers in total), 512 neurons per hidden layer, and ReLU activation functions.

The condensation correction network (ConCorrNet) is also a ResMLP, designed to adjust BasicNet’s predictions so that they satisfy the physical constraints related to water vapor saturation. Its architecture includes six residual blocks, each containing two fully connected layers with 512 neurons, for a total depth of 12 hidden layers. We selected the sigmoid activation function because it showed superior convergence in preliminary experiments.
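The residual-block structure shared by BasicNet and ConCorrNet can be sketched in plain Python. This is one common ResMLP variant, not the authors’ implementation: the input projection, the placement of the activation after the skip connection, the Gaussian initialization, and the input size of 122 (from an assumed feature layout) are all assumptions, and a width of 16 replaces the 512 neurons used in the text. What the text does fix is two fully connected layers per residual block, seven blocks with ReLU for BasicNet, and six blocks with sigmoid for ConCorrNet.

```python
import math
import random


def relu(x):
    return x if x > 0.0 else 0.0


def sigmoid(x):
    # Numerically stable logistic function.
    if x >= 0.0:
        return 1.0 / (1.0 + math.exp(-x))
    z = math.exp(x)
    return z / (1.0 + z)


def init_linear(n_in, n_out, rng, scale=0.1):
    weights = [[rng.gauss(0.0, scale) for _ in range(n_in)] for _ in range(n_out)]
    return weights, [0.0] * n_out


def linear(layer, x):
    weights, bias = layer
    return [sum(w * xi for w, xi in zip(row, x)) + b for row, b in zip(weights, bias)]


class ResMLP:
    """Residual MLP: input projection, n_blocks two-layer residual blocks, linear output."""

    def __init__(self, n_in, n_out, width, n_blocks, act, seed=0):
        rng = random.Random(seed)
        self.act = act
        self.inp = init_linear(n_in, width, rng)
        self.blocks = [(init_linear(width, width, rng), init_linear(width, width, rng))
                       for _ in range(n_blocks)]
        self.out = init_linear(width, n_out, rng)

    def n_hidden_layers(self):
        return 2 * len(self.blocks)  # two fully connected layers per residual block

    def __call__(self, x):
        h = [self.act(v) for v in linear(self.inp, x)]
        for first, second in self.blocks:
            z = [self.act(v) for v in linear(first, h)]
            z = linear(second, z)
            h = [self.act(hi + zi) for hi, zi in zip(h, z)]  # skip connection
        return linear(self.out, h)


# Hypothetical sizes: 122 inputs (assumed layout), 30 output levels, width 16.
basic_dq = ResMLP(n_in=122, n_out=30, width=16, n_blocks=7, act=relu, seed=1)
concorr_dq = ResMLP(n_in=122, n_out=30, width=16, n_blocks=6, act=sigmoid, seed=2)
print(basic_dq.n_hidden_layers(), concorr_dq.n_hidden_layers())  # 14 12
```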

Regarding training, we focus here on the definition and interpretation of the loss functions used to train CondensNet. BasicNet and ConCorrNet can be optimized either jointly or in a two-stage scheme in which BasicNet is pretrained and then frozen while ConCorrNet is trained. In this work, we adopt the latter for faster convergence; the mathematical objectives below apply to both training protocols.

We minimize a single supervised loss on the final, mask-corrected tendencies—dQfixed and dsfixed—as defined in Eqs. (3) and (4), namely:

$${L}_{{\text{CondensNet}}}=\frac{1}{N}\mathop{\sum }\limits_{i=1}^{N}[{({\text{d}}{Q}_{{\text{fixed}},i}-{\text{d}}{Q}_{{\text{label}},i})}^{2}+{({\text{d}}{s}_{{\text{fixed}},i}-{\text{d}}{s}_{{\text{label}},i})}^{2}].$$

(5)

Here, N denotes the number of training samples (grid columns), and dQfixed and dsfixed are the masked, physically corrected outputs of CondensNet. This formulation allows gradients to be backpropagated to both BasicNet and ConCorrNet; the humidity mask determines when and where ConCorrNet is active and must learn to correct BasicNet’s unphysical predictions.
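The two-stage scheme, with a frozen BasicNet and a ConCorrNet trained through the loss of Eq. (5), can be illustrated on a toy problem. This sketch is a simplification rather than the paper’s training code: the BasicNet outputs are fixed numbers, each correction head is a hypothetical linear function of a single scalar feature x, and the gradients of Eq. (5) are written out by hand, whereas a real implementation would use automatic differentiation (e.g., in PyTorch) on the neural networks described above.

```python
def condensnet_loss(dQ, ds, dQ_fix, ds_fix, mask, dQ_label, ds_label):
    """Eq. (5), with the corrections of Eqs. (3)-(4) applied inside the loss."""
    total = 0.0
    for q, s, qf, sf, m, ql, sl in zip(dQ, ds, dQ_fix, ds_fix, mask, dQ_label, ds_label):
        total += (q - m * qf - ql) ** 2 + (s + m * sf - sl) ** 2
    return total / len(dQ)


def train_correction(x, dQ, ds, mask, dQ_label, ds_label, lr=0.1, steps=3000):
    """Gradient descent on Eq. (5) with BasicNet frozen.

    dQ and ds are fixed BasicNet outputs (nothing upstream is updated).
    The hypothetical correction heads are linear in a scalar feature x:
    dQ_fix = aq * x + bq and ds_fix = a_s * x + bs.
    """
    aq = bq = a_s = bs = 0.0
    n = len(x)
    for _ in range(steps):
        g_aq = g_bq = g_as = g_bs = 0.0
        for xi, q, s, m, ql, sl in zip(x, dQ, ds, mask, dQ_label, ds_label):
            rq = q - m * (aq * xi + bq) - ql   # dQ_fixed - dQ_label
            rs = s + m * (a_s * xi + bs) - sl  # ds_fixed - ds_label
            # Every gradient carries the factor m: unmasked points contribute nothing.
            g_aq += -2.0 * rq * m * xi / n
            g_bq += -2.0 * rq * m / n
            g_as += 2.0 * rs * m * xi / n
            g_bs += 2.0 * rs * m / n
        aq, bq = aq - lr * g_aq, bq - lr * g_bq
        a_s, bs = a_s - lr * g_as, bs - lr * g_bs
    return aq, bq, a_s, bs


# Toy data: BasicNet overestimates dQ by x and underestimates ds by 0.5 * x,
# but only at the masked (oversaturated) points.
x = [0.0, 0.5, 1.0, 1.5, 2.0]
mask = [0, 0, 1, 1, 1]
dQ, ds = [1.0] * 5, [0.0] * 5
dQ_label = [q - m * xi for q, m, xi in zip(dQ, mask, x)]
ds_label = [s + m * 0.5 * xi for s, m, xi in zip(ds, mask, x)]

aq, bq, a_s, bs = train_correction(x, dQ, ds, mask, dQ_label, ds_label)
```

On this toy data the heads converge to dQfix ≈ x and dsfix ≈ 0.5x, cancelling the errors injected at the masked points, while the two unmasked samples never influence the correction heads.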

To better understand the adopted training strategy, it is useful to relate the minimization problem to two interpretable terms. First, the standard supervised loss for BasicNet:

$$\begin{array}{l}{L}_{{\text{BasicNet}}}=\frac{1}{N}{\sum }_{i=1}^{N}[{({\text{d}}{Q}_{i}-{\text{d}}{Q}_{{\text{label}},i})}^{2}\,+{({\text{d}}{s}_{i}-{\text{d}}{s}_{{\text{label}},i})}^{2}].\end{array}$$

(6)

Second, the residual-regression objective for ConCorrNet, restricted to the oversaturated region identified by the binary mask Maskh (with cardinality Nm):

$$\begin{array}{rcl}{L}_{{\rm{ConCorrNet}}} &=& \frac{1}{{N}_{m}}\mathop{\sum}\limits_{i\in {\rm{Mask}}_{h}}\left[({\text{d}}{Q}_{{\rm{fix}},i}-({\text{d}}{Q}_{i}-{\text{d}}{Q}_{{\rm{label}},i}))^{2}\right.\\ && +\left.{({\text{d}}{s}_{{\text{fix}},i}-({\text{d}}{s}_{{\text{label}},i}-{\text{d}}{s}_{i}))}^{2}\right].\end{array}$$

(7)

Intuitively, the humidity mask acts as a binary gate: where Maskh = 1, ConCorrNet learns to correct the BasicNet predictions, and where Maskh = 0, ConCorrNet is inactive. Accordingly, the implemented loss in Eq. (5) backpropagates gradients to both networks wherever Maskh = 1 and to BasicNet everywhere. Details are provided in Supplementary Information Section C.3.
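The relation between Eq. (5) and Eqs. (6) and (7) can be made explicit with a short derivation. It is added here as a sketch and assumes that the mask and all three sums run over the same entries (for example, column-level pairs), with Nm counting the masked entries. For masked entries, Eqs. (3) and (4) give dQfixed − dQlabel = −[dQfix − (dQ − dQlabel)] and dsfixed − dslabel = dsfix − (dslabel − ds), while unmasked entries keep the BasicNet tendencies. Splitting the sum in Eq. (5) accordingly yields

$${L}_{{\text{CondensNet}}}=\frac{1}{N}\sum_{i\notin {\text{Mask}}_{\text{h}}}\left[{({\text{d}}{Q}_{i}-{\text{d}}{Q}_{{\text{label}},i})}^{2}+{({\text{d}}{s}_{i}-{\text{d}}{s}_{{\text{label}},i})}^{2}\right]+\frac{{N}_{m}}{N}{L}_{{\text{ConCorrNet}}}$$

so Eq. (5) combines the BasicNet error of Eq. (6), restricted to the unmasked entries, with the ConCorrNet objective of Eq. (7), weighted by the masked fraction Nm/N. When BasicNet is frozen, the first term is constant and only the second depends on trainable parameters.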

The hyperparameters used to train BasicNet and ConCorrNet for the results presented in this work are listed in Table 3.

The model was implemented using PyTorch and trained on multiple GPUs to accelerate computation. We used standard techniques such as data normalization and weight initialization to enhance training stability. Early stopping and model checkpointing were employed to prevent overfitting. The code is freely available at https://github.com/MathEXLab/PCNN-GCM.

Climatological processing

For variables with vertical distribution (temperature, wind speed, specific humidity), the zonal mean at each pressure level is given by

$${\bar{X}}_{{\text{zonal}}}(\phi ,p)\,=\,\frac{1}{{N}_{\lambda }}\mathop{\sum }\limits_{i=1}^{{N}_{\lambda }}X({\lambda }_{i},\phi ,p),$$

(8)

where Nλ is the number of longitudinal grid points, λi is the longitude of grid point i, ϕ is the latitude, and p is the pressure level. For surface or near-surface variables (precipitation, 10-m wind speed), the horizontal mean is given by

$${\bar{Y}}_{{\text{horizontal}}}\,=\,\frac{\mathop{\sum }\limits_{j=1}^{{N}_{\phi }}\mathop{\sum }\limits_{i=1}^{{N}_{\lambda }}Y({\lambda }_{i},{\phi }_{j})w({\phi }_{j})}{{N}_{\lambda }\mathop{\sum }\limits_{j=1}^{{N}_{\phi }}w({\phi }_{j})},$$

(9)

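where w(ϕj) is the latitudinal weight factor (e.g., proportional to cos ϕj, which accounts for the decrease of grid-cell area toward the poles). The climatological means are then obtained by averaging these spatial means over the analysis period: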

$${\bar{X}}_{{\text{clim}},m}\,=\,\frac{1}{Y}\mathop{\sum }\limits_{y=1}^{Y}{X}_{m,y},$$

(10)

where Y is the total number of years in the analysis period (not to be confused with the surface variable Y in Eq. (9)) and Xm,y is the monthly mean for month m in year y.
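Eqs. (8)–(10) translate directly into code. The sketch below uses plain nested lists indexed as (lev, lat, lon) or (lat, lon) and assumes cos ϕ latitude weights; the horizontal mean divides by Nλ Σj w(ϕj), so that a spatially uniform field returns its own value. All function names are hypothetical.

```python
import math


def zonal_mean(field):
    """Eq. (8): field[k][j][i] (lev, lat, lon) -> zonal mean [k][j]."""
    return [[sum(row) / len(row) for row in level] for level in field]


def lat_weights(lats_deg):
    """Assumed weights w(phi) = cos(phi), proportional to cell area on a regular grid."""
    return [math.cos(math.radians(phi)) for phi in lats_deg]


def horizontal_mean(field2d, weights):
    """Area-weighted mean of field2d[j][i] (lat, lon), normalized by N_lambda * sum_j w_j."""
    n_lon = len(field2d[0])
    weighted_sum = sum(w * sum(row) for row, w in zip(field2d, weights))
    return weighted_sum / (n_lon * sum(weights))


def monthly_climatology(series):
    """Eq. (10): series[y][m] (year, month) -> climatological mean of each month m."""
    n_years = len(series)
    return [sum(year[m] for year in series) / n_years for m in range(len(series[0]))]


print(zonal_mean([[[1.0, 2.0, 3.0], [4.0, 5.0, 6.0]]]))  # [[2.0, 5.0]]
print(monthly_climatology([[1.0, 2.0], [3.0, 4.0]]))     # [2.0, 3.0]
```

Applying monthly_climatology to a series of horizontal means yields the climatological value of each calendar month.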

Error metrics

Once the means introduced in section “Climatological processing” are obtained, we use several error metrics to assess the performance of PCNN-GCM against NN-GCM and CAM5, using SPCAM as the reference (i.e., ground truth). In particular, we use the pattern difference

$${\text{diff}}(\phi ,\lambda ,p)={X}_{{\text{model}}}(\phi ,\lambda ,p)-{X}_{{\text{SPCAM}}}(\phi ,\lambda ,p)$$

(11)

where Xmodel and XSPCAM are the climatological means from a given model and SPCAM, respectively; the latitude-weighted root-mean-square error (RMSE) for variables with vertical distribution

$${\text{RMSE}}(p)\,=\,\sqrt{\frac{{\sum }_{j=1}^{{N}_{\phi }}{\sum }_{i=1}^{{N}_{\lambda }}{[{X}_{1}({\lambda }_{i},{\phi }_{j},p)-{X}_{2}({\lambda }_{i},{\phi }_{j},p)]}^{2}w({\phi }_{j})}{{N}_{\lambda }{\sum }_{j=1}^{{N}_{\phi }}w({\phi }_{j})}}$$

(12)

where X1 and X2 represent the climatological means from two different models (for surface variables, the same formula applies without the pressure level dependency), and the coefficient of determination

$${R}^{2}=1-\frac{{\sum }_{i=1}^{N}{({X}_{i}^{{\text{model}}}-{X}_{i}^{{\text{SPCAM}}})}^{2}}{{\sum }_{i=1}^{N}{({X}_{i}^{{\text{SPCAM}}}-{\bar{X}}_{{\text{SPCAM}}})}^{2}}$$

(13)

where N is the total number of samples, \({X}_{i}^{{\text{model}}}\) and \({X}_{i}^{{\text{SPCAM}}}\) are the values at sample point i for a given model and SPCAM respectively, and \({\bar{X}}_{{\text{SPCAM}}}\) is the mean of SPCAM values over all samples.

In Eqs. (11–13), Xmodel corresponds to the model being evaluated (i.e., PCNN-GCM, NN-GCM, and CAM5), while XSPCAM corresponds to the SPCAM reference (i.e., ground truth).
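The three metrics of Eqs. (11)–(13) can be sketched for a single level on a (lat, lon) grid. This is a minimal illustration with hypothetical function names and caller-supplied latitude weights; the weighted RMSE divides by Nλ Σj w(ϕj), the standard area-weighted form in which a uniform difference c gives an RMSE of |c|, and R² is computed over flattened samples with SPCAM as the reference.

```python
import math


def pattern_diff(x_model, x_ref):
    """Eq. (11): point-wise difference of two (lat, lon) fields."""
    return [[a - b for a, b in zip(row_m, row_r)] for row_m, row_r in zip(x_model, x_ref)]


def weighted_rmse(x1, x2, weights):
    """Latitude-weighted RMSE of two (lat, lon) fields on one level."""
    n_lon = len(x1[0])
    weighted_sq = sum(
        w * sum((a - b) ** 2 for a, b in zip(row1, row2))
        for row1, row2, w in zip(x1, x2, weights)
    )
    return math.sqrt(weighted_sq / (n_lon * sum(weights)))


def r_squared(x_model, x_ref):
    """Eq. (13): coefficient of determination over flattened samples."""
    mean_ref = sum(x_ref) / len(x_ref)
    ss_res = sum((m - r) ** 2 for m, r in zip(x_model, x_ref))
    ss_tot = sum((r - mean_ref) ** 2 for r in x_ref)
    return 1.0 - ss_res / ss_tot


print(weighted_rmse([[3.0, 3.0], [3.0, 3.0]], [[1.0, 1.0], [1.0, 1.0]], [1.0, 0.5]))  # 2.0
print(r_squared([2.0, 2.0, 2.0], [1.0, 2.0, 3.0]))  # 0.0
```

An R² of 1 indicates a perfect match to SPCAM, while 0 means the model does no better than the SPCAM mean.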