Federated learning is a promising technique for collaborative machine learning, but its performance often suffers when it is deployed across real-world networks with varying device capabilities and unreliable connections. Shanika Iroshi Nanayakkara and Shiva Raj Pokhrel of Deakin University tackle this challenge with a new approach called A2G-QFL (Adaptive Aggregation with Dual Gains in Quantum Federated Learning). Their framework dynamically adjusts how individual contributions are combined, taking into account both the geometric relationship between local and global models and the quality of service (QoS) provided by each participating device. By jointly controlling these two factors, A2G-QFL improves stability and accuracy under difficult, heterogeneous network conditions. It also recovers existing methods such as FedAvg as special cases of its more general update rule.

Geometry- and quality-aware aggregation

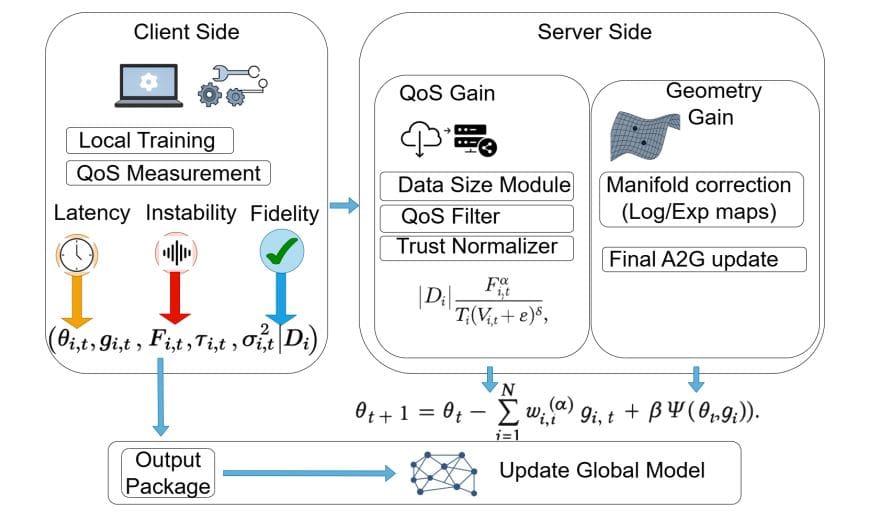

The research team has developed a new aggregation framework, A2G, to support federated learning across diverse and potentially unstable communication networks, including networks that combine quantum and classical links. The method targets the performance degradation caused by variations in client quality, communication reliability, and the geometric mismatch between local and global models. A2G jointly controls two quantities: “geometry gains”, which govern how local and global models are blended, and “QoS gains”, which adjust each client's importance based on teleportation fidelity, delay, and channel instability. In each round, a client receives the global model parameters, runs a local optimizer to produce an updated local model (optionally also producing stochastic gradient estimates), and measures key physical-layer QoS metrics: teleportation fidelity, communication delay, and the variance of channel instability.
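To make the client side of a round concrete, here is a minimal Python sketch. It assumes a toy one-dimensional linear model trained with plain SGD and a hypothetical `ClientReport` record that bundles the updated parameters, the dataset size, and the three QoS metrics. In a real deployment the metrics would be measured on the quantum or classical link; here they are simply passed in. The names `local_update`, `client_round`, and `ClientReport` are illustrative and not taken from the A2G-QFL paper.

```python
from dataclasses import dataclass


@dataclass
class ClientReport:
    """Hypothetical payload a client returns to the server after one round."""
    params: list          # locally updated model parameters [w, b]
    num_samples: int      # local dataset size n_k
    fidelity: float       # measured teleportation fidelity F_k in [0, 1]
    delay: float          # measured communication delay D_k
    instability: float    # measured variance of channel instability


def local_update(global_params, data, lr=0.1, epochs=5):
    """Plain SGD on a toy 1-D linear model y ~ w * x + b (squared-error loss)."""
    w, b = global_params
    for _ in range(epochs):
        for x, y in data:
            err = (w * x + b) - y
            # Gradient of 0.5 * err**2 with respect to w is err * x, and to b is err.
            w -= lr * err * x
            b -= lr * err
    return [w, b]


def client_round(global_params, data, link_metrics):
    """Train locally from the global model, then attach this client's QoS metrics.

    link_metrics = (fidelity, delay, instability) is passed in here; a real
    client would measure these on its communication link (assumption).
    """
    params = local_update(global_params, data)
    fidelity, delay, instability = link_metrics
    return ClientReport(params, len(data), fidelity, delay, instability)


# Usage: three points on y = 2x + 1 and illustrative (made-up) link metrics.
data = [(x, 2.0 * x + 1.0) for x in (0.0, 0.5, 1.0)]
report = client_round([0.0, 0.0], data, link_metrics=(0.92, 0.03, 0.01))
print(report.num_samples, [round(p, 3) for p in report.params])
```

The optimizer is deliberately trivial; the point is the shape of what travels back to the server: model parameters plus dataset size plus link-quality measurements.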

These measurements are sent to the server together with the local model updates and dataset sizes. The researchers implemented a procedure to ensure that these metrics are collected consistently across the network, and the server uses them to weight each client's contribution through a QoS-dependent trust factor. A QoS factor is first calculated from fidelity, delay, and instability, with tunable parameters controlling the sensitivity to each metric; this factor is then scaled by the client's share of the total data to give a trust score. Normalizing the trust scores across all clients yields aggregation weights that favor reliable, high-performing nodes. The aggregation step itself is geometry-controlled: it combines the QoS-weighted gradient terms with the geometry gains so as to interpolate between standard Euclidean aggregation and geometry-aware averaging on the underlying parameter manifold. By tuning the geometry gains, the researchers can adapt the aggregation to the characteristics of the model and the communication network, and existing techniques such as FedAvg and QoS-aware averaging are recovered as special cases.
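The weighting and aggregation steps can be sketched as follows. The article does not give the exact formulas, so this is an illustration under stated assumptions: the QoS factor is taken to be `F**alpha * exp(-beta * D) * exp(-gamma * var)`, the trust score multiplies it by the client's data share, and the "geometry-aware" average is a simple stand-in that averages parameter directions and norms separately, blended with the Euclidean mean by a single `geometry_gain`. All function names and the parameters `alpha`, `beta`, `gamma`, and `geometry_gain` are hypothetical.

```python
import math


def qos_factor(fidelity, delay, instability, alpha=1.0, beta=1.0, gamma=1.0):
    """Assumed QoS factor: rewards fidelity, penalizes delay and instability.

    alpha, beta, gamma tune the sensitivity to each metric; 0 switches a metric off.
    """
    return fidelity ** alpha * math.exp(-beta * delay) * math.exp(-gamma * instability)


def trust_weights(reports, alpha=1.0, beta=1.0, gamma=1.0):
    """Trust score = (n_k / N) * QoS factor, normalized so the weights sum to 1."""
    total = sum(r["n"] for r in reports)
    scores = [
        (r["n"] / total)
        * qos_factor(r["fidelity"], r["delay"], r["instability"], alpha, beta, gamma)
        for r in reports
    ]
    z = sum(scores)
    return [s / z for s in scores]


def norm(v):
    return math.sqrt(sum(x * x for x in v))


def aggregate(reports, geometry_gain=0.0, **qos_params):
    """Blend a Euclidean weighted mean with an illustrative norm/direction mean.

    Assumes every client's parameter vector is nonzero and that the weighted
    directions do not cancel out exactly.
    """
    w = trust_weights(reports, **qos_params)
    dim = len(reports[0]["params"])
    # Euclidean aggregate: sum_k w_k * theta_k.
    euclid = [sum(wk * r["params"][i] for wk, r in zip(w, reports)) for i in range(dim)]
    # Stand-in geometry-aware aggregate: weighted mean direction on the unit
    # sphere, rescaled to the weighted mean norm.
    direction = [
        sum(wk * r["params"][i] / norm(r["params"]) for wk, r in zip(w, reports))
        for i in range(dim)
    ]
    radius = sum(wk * norm(r["params"]) for wk, r in zip(w, reports))
    d = norm(direction)
    geo = [radius * c / d for c in direction]
    # geometry_gain = 0 gives pure (QoS-weighted) Euclidean averaging.
    return [(1 - geometry_gain) * e + geometry_gain * g for e, g in zip(euclid, geo)]


reports = [
    {"params": [1.0, 0.0], "n": 100, "fidelity": 0.95, "delay": 0.02, "instability": 0.01},
    {"params": [0.0, 1.0], "n": 300, "fidelity": 0.70, "delay": 0.20, "instability": 0.10},
]
fedavg = aggregate(reports, geometry_gain=0.0, alpha=0.0, beta=0.0, gamma=0.0)
qos_avg = aggregate(reports, geometry_gain=0.0)
blended = aggregate(reports, geometry_gain=0.05)
```

Setting `alpha = beta = gamma = 0` makes every QoS factor equal to 1, so the weights reduce to data shares and `geometry_gain=0` reproduces FedAvg; nonzero QoS parameters with `geometry_gain=0` give QoS-aware averaging, in which the more reliable first client receives more than its 25 percent data share. The norm/direction split is only one possible geometry-aware average; it keeps the aggregate's norm from shrinking when client updates point in different directions, which a plain Euclidean mean does not.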

Adaptive federated learning using quantum links

The work introduces A2G, an adaptive aggregation framework designed to improve federated learning systems that operate across networks with varying client quality and, potentially, quantum communication links. It addresses performance degradation caused by factors such as unreliable connections and differences in client capabilities. A2G combines quality of service (QoS) gains, derived from metrics such as teleportation fidelity, latency, and instability, with geometry gains that control how local and global models are blended. Experiments on a hybrid testbed using the Breast-Lesions-USG dataset show that A2G improves both stability and accuracy.

With a geometry gain of 0.05, the system reached a peak accuracy of 68.25 percent and a final accuracy of 63.49 percent on the Breast-Lesions-USG dataset, consistently outperforming the standard FedAvg baseline. Moderate geometry gains consistently gave strong results: a gain of 0.10 achieved the highest final accuracy, 65.08 percent, while larger values led to a systematic drop in performance.

Further experiments on a noisy communication link, modeled as a bit-flip channel, show that A2G is robust: it maintains strong performance under moderate noise and still outperforms the FedAvg baseline as the noise level increases.

By regulating geometry blending and client importance jointly through geometry gains and QoS gains, A2G tackles the challenges posed by varying client quality and communication constraints. Its update rules improve stability and accuracy in difficult settings while generalizing existing techniques such as FedAvg, QoS-aware averaging, and manifold-based aggregation. Experiments on the hybrid testbed confirm that the framework mitigates both client drift and the bias introduced by variations in service quality. A2G's modular structure and compatibility with different optimizers and model classes make it a promising foundation for advanced distributed and quantum-enabled learning systems; future work will focus on establishing convergence guarantees and addressing the smoothness and finite-variance assumptions.
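The bit-flip noise model used in the robustness experiments can be illustrated with a short Python sketch, assuming a single-qubit pure state sent through the channel rho -> (1 - p) rho + p X rho X. It computes the fidelity <psi|rho'|psi> between the sent and received states, showing how the flip probability p lowers the fidelity of basis states such as |0> while leaving X-eigenstates such as |+> untouched. How A2G-QFL maps channel noise onto its QoS metrics is not specified here, so treat this as a generic illustration of the channel, not the paper's experimental setup; the helper names are hypothetical.

```python
import math


def density(psi):
    """rho = |psi><psi| for a single-qubit pure state psi = (a, b)."""
    return [[psi[i] * psi[j].conjugate() for j in range(2)] for i in range(2)]


def bit_flip(rho, p):
    """Bit-flip channel: rho -> (1 - p) * rho + p * X rho X."""
    # Conjugating by Pauli X swaps both the rows and the columns of a 2x2 matrix.
    x_rho_x = [[rho[1 - i][1 - j] for j in range(2)] for i in range(2)]
    return [[(1 - p) * rho[i][j] + p * x_rho_x[i][j] for j in range(2)] for i in range(2)]


def fidelity(psi, rho):
    """Fidelity <psi| rho |psi> between pure reference state psi and state rho."""
    value = sum(psi[i].conjugate() * rho[i][j] * psi[j] for i in range(2) for j in range(2))
    return value.real


zero = (1.0 + 0j, 0j)                                    # |0>
plus = (1 / math.sqrt(2) + 0j, 1 / math.sqrt(2) + 0j)    # |+> = (|0> + |1>) / sqrt(2)
for p in (0.0, 0.1, 0.3):
    print(p,
          round(fidelity(zero, bit_flip(density(zero), p)), 3),
          round(fidelity(plus, bit_flip(density(plus), p)), 3))
```

For |0> the fidelity works out to exactly 1 - p, while |+> is an eigenstate of X and keeps fidelity 1, which is why the effect of bit-flip noise on a link depends on the states being transmitted.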