Robots are becoming smarter, more capable, and more pervasive, setting the stage for a whole new round of growth that will touch nearly every part of the semiconductor and software industries for decades to come.

Robots are at the core of physical AI, a broad segment of edge AI systems that interact with the world through artificial intelligence and sensors. This includes everything from humanoid robots to faceless industrial robotics, robo-dogs, drones, and increasingly autonomous vehicles. In the future, robots will work alongside humans, whether at work, at home, or in a health care setting as a companion or assistant.

Until recently, industrial robots had large safety zones around them so they could not accidentally harm humans. That’s starting to change. Better AI models, machine learning, wireless protocols, and sensor fusion mean robots are now capable of working in close proximity to people. But mistakes can still happen, just as autonomous vehicles occasionally misread traffic conditions and cause accidents.

“The potential for accidents exists if physical AI systems go completely unchecked,” said Matthew Graham, senior group director, verification software product management at Cadence. “This is one of the reasons I don’t think we’re on the precipice of AI completely replacing the human in the loop. AI is where the value is today and where the productivity gains will be made. But at the end of the day, in terms of autonomous vehicles, autonomous robots, and autonomous industrial — including robots, drones, and so on — to achieve the required certifications, you have to be able to prove to the assessor, whether it’s TÜV SÜD, CSA or UL, that you have suitably verified and certified the system is safe and that it still has a human signing off on it.”

Tesla’s Robotaxi and Waymo’s self-driving cars are evidence that autonomous physical AI is possible. “However, drones and robots will have different aspects, such as degrees of freedom, failure modes, and power budgets, as compared to automotive systems,” said Rajesh Velegalati, principal security analyst at Keysight EDA. “The regulatory maturity between automotive and drones/robots is different. It is more defined for cars and not as much for robots. This needs to be addressed.”

Engineers must determine where the physical AI system sits on the spectrum from vehicle to robot to vacuum cleaner and adopt the matching safety standards.

“It’s been chartered by us to go through the industry to find out and answer exactly the question, ‘Where is the partitioning happening for functional safety?’” said Adam White, division president for power and sensor systems at Infineon Technologies. “Is it needed? And if it is needed, which elements of the robot/humanoid will need to meet functional safety regulations? Is it just the microcontroller? Does it go all the way into the power stage, for example? These are the topics that we’re now working through openly. What I see in Asia is more speed. They’re not waiting for functional safety. They’re just building what they can get and getting it out there to demonstrate or to prove that they can do [this technology]. I agree it’s a no-brainer, because if these robots or humanoids have any interaction against humans, then you’re going to have to have functional safety. The questions for us are, ‘How much? And where will you need functional safety?’ It’s a little bit like with vehicles today. There are elements that adhere to functional safety. But do you need functional safety for your radio or your cockpit, for the speedometer, your display?”

As robots increasingly interact with humans, functional safety starts with the worst-case scenario that can happen. “You peel back from there and develop the components and systems based on where you see the safety threshold is,” said Paula Jones, segment director, mobility, ADAS, strategy, marketing at Infineon. “In the past, there were robots in factories, and where they were working was either closed off to humans or extreme safety measures were put in place. Now we see that there are more robot/human interactions, functional safety standards are migrating to the robotics industry, and the same with cybersecurity.”

Automotive security and safety organizations (SSOs) are at the forefront of standardizing tamper-resistant security features of automotive microelectronics. “Everyone, including a large portion of U.S. defense microelectronic providers, is following their lead,” said Scott Best, senior director for silicon security products at Rambus. “The reasons are varied, but they boil down to human safety, as a security flaw in an automotive safety system presents an immediate and obvious risk of physical harm. Importantly, the progress being made by automotive SSOs translates directly to robotic or physical AI systems. A self-driving car is a robot with wheels, after all. And a drone with a self-piloting expert system is just a robot that flies.”

Automotive and robotics standards

Robotics has much in common with adjacent sectors in terms of functional safety and the use of AI. “You can have standards paralysis, as in, which standard should you look at?” said Andrew Johnston, engineering and technology leader, systems and functional safety engineering specialist at Imagination Technologies. “They’re all saying similar but different things. Robotics standards are evolving generally, underpinned by the de facto functional safety standard IEC 61508, but there are adjacent standards for machinery and all other autonomous systems, including vehicles.”

Today, some standards explicitly exclude the use of AI for high-integrity functions. “Emergent standards are now trying to find a way to, with credibility, use AI as part of a safety assurance case, whether that’s in the design and development life cycle or as the technology within the product itself, or both,” Johnston noted. “As technology is evolving concerning ML, and there is a growing understanding of the benefits of ML for certain applications, the standards have to evolve, too. Sometimes it’s a little bit of a chicken-and-egg scenario.”

The need for redundancy, functional safety, and conformance to standards like ISO 26262 is shaping the design of physical AI. “Our customers are driving us to ensure that our tooling is certified, that we can provide certified flows, and they can use those flows to certify their devices,” Cadence’s Graham said.

Whether in a drone, vehicle, or robot, physical AI can face harsh conditions such as heat, cold, weather, moisture, and environmental toxins. This is where optional functional safety and reliability requirements will cross over. “Either ASIL will be for safety, or AEC-Q100 for a Grade 2 (to operate reliably in an ambient temperature range of -40°C to +105°C),” said Hezi Saar, executive director, product management, mobile, automotive and consumer IP at Synopsys. “These are things that some of the robotics developers are going after. Some of them are in factories. Some of them are going after markets that are no-brainers, that the market will adopt immediately, like helping elderly people, and dangerous jobs such as cleaning the windows outside [a skyscraper].”
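To make the temperature-grade point concrete, the following is a minimal Python sketch of how a developer might pick an AEC-Q100 grade for a required ambient range. Only the Grade 2 range comes from the quote above. The other grade ranges are the commonly cited AEC-Q100 values and should be checked against the current revision of the specification, and the function name is hypothetical.

```python
# Ambient operating temperature ranges per AEC-Q100 grade, in degrees Celsius.
# Grade 2 matches the range quoted in the article; the other rows are the
# commonly cited values and are an assumption to verify against the spec.
AEC_Q100_GRADES = {
    0: (-40, 150),
    1: (-40, 125),
    2: (-40, 105),
    3: (-40, 85),
}


def least_stringent_grade(min_ambient_c, max_ambient_c):
    """Return the highest-numbered (least stringent) grade whose range
    covers the required ambient range, or None if no grade covers it."""
    for grade in sorted(AEC_Q100_GRADES, reverse=True):
        low, high = AEC_Q100_GRADES[grade]
        if low <= min_ambient_c and max_ambient_c <= high:
            return grade
    return None


if __name__ == "__main__":
    # Hypothetical outdoor robot that must run from -20 C to +100 C.
    print(least_stringent_grade(-20, 100))
```

A lookup like this only addresses reliability qualification. It says nothing about the ASIL level, which comes from a separate hazard analysis.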

Examples of standards and global progress for robotics:

- ISO 13482: Personal Care Robots;

- ISO 13849: Robot Safety for Machinery;

- ISO 10218: Industrial Robots, including autonomous mobile robots (AMRs);

- ANSI/RIA R15.08: Autonomous Mobile Robots;

- Regional derivatives like JIS B 8445 in Japan and CSA Z434 in Canada; and

- IEEE Robotics & Automation Society (RAS) Technical Committee on Safety, Security, and Rescue Robotics (SSRR)

Emerging standards and research:

- UL 4600: A key framework for autonomous product safety that emphasizes system-level hazard assessment.

- IEC 61508: Focuses on functional safety of electrical and electronic systems, offering insights for humanoid sensor and control systems.

- BS 8611: A British Standards Institution (BSI) standard that provides ethical guidelines for robot safety in public spaces, which is critical for humanoid robots operating outside industrial environments.

These standards show the robotics industry is considering safety, but it’s unclear how widely they will be adopted globally. “The standardization and taking care of the system level and application are being discussed, at least,” Saar said. “I don’t know about the implementation itself, but the concern I have is more as a consumer, as a human being, that we’re rushing with AI innovation, while regulations are not there worldwide. Sometimes professors A, B, and C are warning, ‘Hey, what are you going to do? You know, guys, we have to make sure everybody’s following the same rules, and for this paper, we are advancing technology and assuming everybody’s behaving well.’”

Fig. 1: A mix of robotics and automotive standards. Source: Synopsys

Physical AI simulation and real-world safety measures

For software-defined vehicles and physical AI systems, safety needs to be considered from simulation through to prototype. “For the software-defined methodology to work, you start with something that’s purely virtual,” said David Fritz, vice president of hybrid-physical and virtual systems, automotive and mil-aero at Siemens EDA. “It has to be valuable. You’ve got to get hours, if not minutes, where the software teams and hardware teams are churning quickly. They’re trying all these different things. They’re trying to iterate to get to the point where they can prove success in passing some of these hardware requirements. That’s pure virtual, and that’s the lowest level of fidelity, but it’s enough fidelity to reach that first gate. Then, we have a higher level of fidelity, where we start to say, ‘We’re not modeling the behavior of the hardware. We’re modeling the hardware itself, down at the gate level or at the signal level, and yet we’re still running proposed production software.’ Hybrid is halfway between virtual and physical.”

At the end of that process comes something truly physical — a prototype. “It could be the plane or the rocket ship or the car,” said Fritz. “The reason you need that is you’ve done millions and millions of simulation runs virtually. You’ve done millions of runs in hybrid. You’re passing all the requirements, but you still need to verify that you get the same results in the physical vehicle, and at least the best case, worst case, and nominal case of all of those simulations to gain confidence that you’re not being fooled by some simulation glitch. That’s the whole spectrum of fidelity that’s needed to be successful in these projects.”
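The final step Fritz describes, checking that the physical prototype agrees with the best-case, worst-case, and nominal simulation results, can be sketched roughly as follows. This is an illustrative Python sketch, not Siemens EDA's method: the function names, the use of the median as the nominal case, and the 5% tolerance are all assumptions.

```python
from statistics import median


def simulation_envelope(sim_results):
    """Summarize simulated values of one metric as (lowest, nominal, highest).
    Using the median as the nominal case is an assumption of this sketch."""
    return min(sim_results), median(sim_results), max(sim_results)


def prototype_within_envelope(measured, sim_results, tolerance=0.05):
    """Return True if the physical measurement falls inside the simulated
    range, widened on each side by `tolerance` times the range width."""
    low, _, high = simulation_envelope(sim_results)
    margin = tolerance * (high - low)
    return low - margin <= measured <= high + margin


if __name__ == "__main__":
    # Hypothetical stopping distances in meters from virtual and hybrid runs.
    sims = [10.0, 12.0, 11.0, 13.0, 14.0]
    print(simulation_envelope(sims))
    print(prototype_within_envelope(13.5, sims))
```

A measurement outside the widened envelope would suggest either a simulation glitch or a modeling gap, which is the case the physical prototype exists to catch.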

Nvidia applies its Halos safety initiative for autonomous vehicles to robotics, which includes simulation. “The safety element of this is something that has to be done across the whole stack, from top to bottom, from all of the software down to the chip and system level,” said Rev Lebaredian, vice president of Omniverse and simulation technology at Nvidia. “There’s a lot of overlap between both the hardware and software, between what we do in AV and the robotics world, so we’re applying it there. This is a very, very large effort that has many facets. Functional safety has many best practices, guidelines, and safeguards in terms of evaluating and testing these AIs. A big portion of that is testing extensively in simulation before deploying into the real world. That’s why it’s so critical that we build physically-accurate simulators that match the world as closely as possible. We can test these robots and fleets of robots for millions and millions of hours of operation in diverse environments before we put them out in the physical world and give them a physical body.”

Whether in automotive or robotics applications of physical AI, situational environmental awareness can be built through training, but there are limits. “You can train a vehicle controller with lots of, at face value, good data about roads, road conditions, road signage, and temperature variation,” said Imagination’s Johnston. “You can use a number of sensory inputs to do that. But as a human, when we are old enough to get behind the wheel of a car, we have been brought up in a certain environment with certain values, with certain inherent moral and ethical understanding, and social responsibilities. That’s a really hard thing to train a computer in. By the time you drive, you’ve spent [16 or] 18 years on this planet, and you’ve been in lots of interesting social environments to learn about how people interact, learn about general risk management and social responsibility. Some of that’s good, and some of it’s bad. How do you train a machine learning model to do that on top of just operational or environmental situational awareness? If you try to model a human, your input data, if you could even quantify it, would be far broader and far more detailed. That’s why I prefer to use the term machine learning, because a lot of these models are not intelligent.”

As robots leave fenced-off industrial settings and enter the world, more types of safety control are emerging. “The safety requirements as we are inventing new technology are high, but not as high as they will be eventually, when we get to a billion or billions of humanoids that are going to be amongst us, working with us in a collaborative manner,” said Deepu Talla, vice president and general manager of robotics and edge AI at Nvidia, speaking at OktoberTech. “There’s a lot of work that needs to be done in safety, but the other cool thing is that we can apply safety, not only inside the robot, on the robot, but we have an air traffic control scenario. Every plane is autonomous with a pilot or using a pilot, but they can’t do the job themselves without an air traffic controller.”

For example, in China, one company is navigating drone deliveries. “They’ve got the added complexity of altitude, which flight paths they are delivering in urban areas,” said Infineon’s White. “Order something by drone, and it drops it into a location, and they have to figure out which of the flight paths takes off. They get approval from local agencies that the drone could fly this flight path. This is the next level.” Eventually, vehicles will be able to detect if the road is sand, snow, mud, or another type of surface and adjust their behavior accordingly, he noted.

Boosting physical AI safety

Precise GPS and protocols like ultra-wideband (UWB) wireless can help boost safety for physical AI on the move. “In our own facilities, and back-end facilities and tests, there is very precise GPS notation within the facility to know exactly at any moment where that robot is,” said White. “We have that ability to track everything with very close accuracy, within spectrums that we’re going to be setting up some kind of base station. It’s a bit like air traffic control in that we know exactly where everything’s going.”
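The air-traffic-control analogy amounts to a supervisor that knows every tracked position and flags unsafe proximity. A minimal Python sketch of that idea follows, assuming 2D positions in meters from a facility tracking system. The identifiers, the function name, and the 1.5 m threshold are hypothetical and do not come from any standard or from Infineon.

```python
from math import hypot


def separation_violations(robots, humans, min_separation_m=1.5):
    """Return (robot_id, human_id, distance_m) for every robot-human pair
    closer than min_separation_m. Positions are (x, y) tuples in meters."""
    violations = []
    for robot_id, (rx, ry) in robots.items():
        for human_id, (hx, hy) in humans.items():
            distance = hypot(rx - hx, ry - hy)
            if distance < min_separation_m:
                violations.append((robot_id, human_id, distance))
    return violations


if __name__ == "__main__":
    # Hypothetical positions reported by the facility tracking system.
    robots = {"amr-1": (0.0, 0.0), "amr-2": (10.0, 10.0)}
    humans = {"op-1": (1.0, 0.0)}
    for robot_id, human_id, distance in separation_violations(robots, humans):
        print(f"{robot_id} is {distance:.2f} m from {human_id}")
```

A real deployment would also have to account for position uncertainty, latency, and robot velocity. The pairwise check here only illustrates the supervisory role that sits outside the robot.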

Companies can deploy safety by leveraging all the sensors in a factory or a manufacturing facility and using that information. “You can see what’s happening on a factory floor with every autonomous, or semi-autonomous robot, and even humanoids at that point,” said Talla. “There’s a lot of work that must be done in the area of safety, both in the robot and outside.”

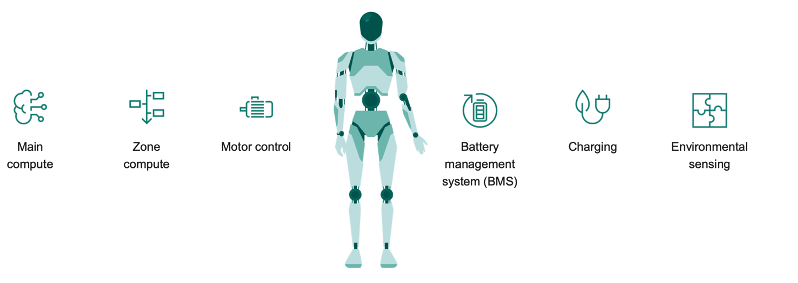

Fig. 2: Silicon needed for humanoids. Source: TSMC

Architectural crossover between automotive, physical AI

From an architectural perspective, the automotive world is moving from a distributed architecture with ECUs all over to a centralized system, and physical AI looks set to follow. “There are many benefits to that,” said Infineon’s Jones. “One of the key benefits is that the automotive OEMs or tiered automotive suppliers can do software updates over the air. They can reduce the size of the modules and enable a lot of other features, including AI. We see the same kind of activity in robotics, where there’s a centralized computer, then there’s smaller zones in the robot, like the arm zone.”

Fig. 3: Zonal compute in robot development. Source: Infineon

Others agree that physical AI will follow similar trends to automotive. “You will see zonal-like architectures in physical AI, because at the end of the day, what’s the difference between a robot and a car? They both move,” said Michal Siwinski, chief marketing officer at Arteris. “They both need to be mission-critical systems. They just move differently. We’re thinking that the whole Industry 4.0 with physical AI and that evolution has a lot more to do with autonomous and autonomy that is actually coming from and driven primarily by the car, so the automotive industry is probably the best proxy. There’s communications, there’s enterprise, there’s other functions, but the car is the proxy industry in that regard.”

Further, vehicles, robotics, and physical AI systems can deploy edge AI processors designed for real-time functions and reliability with built-in security. “You can have vision-based systems on a manufacturing line, or robotics or other things where you need a highly reliable execution path, but you still want to be able to execute today’s modern AI algorithms or models,” said John Weil, vice president and general manager for IoT and edge AI processor business at Synaptics. “The cherry on top is hitting it with a high degree of security.”

For physical AI connected to LLMs, the system might be connected to the internet or a data center, which brings its own risks. “Add a model that is no longer a tightly coupled embedded model, where you put in an input and you get an output, and it’s now a model that makes a decision on the fly,” said Weil. “Security is paramount.”

China speed

So far, China is leading the way when it comes to robot adoption. “The trains have left the station in China when it comes to robotics,” Infineon’s White noted.

Further, China’s automotive trends could signal what comes next in physical AI development.

“The automotive markets growing the fastest right now are in Asia, specifically in China. China’s automotive market is a lot more consumerized than the rest of the world, which is struggling a bit or slowing,” said Satish Ganesan, senior vice president and chief strategy officer at Synaptics. “There’s going to be a convergence of the consumerization of automotive. There’s a lot more traction on EVs and autonomous driving vehicles, and you’ll see that things can come to pass faster. It’s not going to be the same as before, where they were bogged down by regulations. There will be two aspects of it. Running the vehicle, the autonomous driving — those things will be regulated. The software aspect of it will be less so. For implementing all of this, I do know that automotive companies are using AI for development.”

Conclusion

Overall, it is a big moment for physical AI, enabled by large and small language models, multi-modal sensors and simulation technology developments, along with a labor shortage and massive re-industrialization of regions such as the United States and Europe. “If you combine all three things, we have entered the golden age of robotics and physical AI in the next 10-year trajectory of how we can solve some of these problems,” Nvidia’s Talla said. “Almost nothing has been solved today, even though automation has existed for 50-plus years. In the last 10 years, even with AI in the form of deep learning and some transformers, and vision transformers, much of the AI that’s been deployed today is extremely brittle and very specific-purpose. We need to get to a general-purpose.”
