
According to the report, the use of smaller, specialized AI models provides a significant reduction in the power needed to generate responses [File]

| Photo credit: Reuters

The potential of artificial intelligence is immense, but its equally vast energy consumption needs to be curbed, and asking shorter questions is one way of achieving this, according to a UNESCO study published Tuesday.

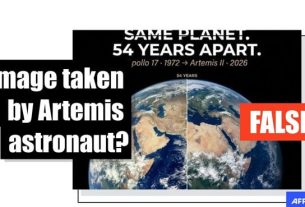

In a report published to mark the AI for Good Global Summit in Geneva, UNESCO said that using shorter queries and more specific models could reduce AI energy consumption by up to 90%.

OpenAI CEO Sam Altman recently revealed that each request sent to ChatGPT, its popular AI app, consumes an average of 0.34 Wh of electricity, 10 to 70 times as much as a Google search.

ChatGPT receives approximately 1 billion requests per day, which amounts to 310 GWh per year, equivalent to the annual electricity consumption of 3 million people in Ethiopia.
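To make the unit conversion behind these figures explicit, here is a minimal sketch that scales a per-request energy figure up to a yearly total. The function names and the assumption of a constant daily volume over a 365-day year are illustrative and do not come from the report. Under those assumptions, 0.34 Wh per request at 1 billion requests a day works out to roughly 124 GWh a year, so the 310 GWh quoted above presumably rests on inputs the article does not state. The second function shows the per-request figure that 310 GWh would imply.

```python
# Figures quoted in the article.
WH_PER_REQUEST = 0.34              # average energy per ChatGPT request, in Wh
REQUESTS_PER_DAY = 1_000_000_000   # approximately 1 billion requests per day
REPORTED_ANNUAL_GWH = 310          # annual total quoted in the article

# Assumption (not from the report): constant daily volume, 365-day year.
DAYS_PER_YEAR = 365
WH_PER_GWH = 1e9                   # 1 GWh = 1,000,000,000 Wh


def annual_energy_gwh(wh_per_request, requests_per_day, days=DAYS_PER_YEAR):
    """Scale a per-request energy figure to a yearly total in GWh."""
    return wh_per_request * requests_per_day * days / WH_PER_GWH


def implied_wh_per_request(annual_gwh, requests_per_day, days=DAYS_PER_YEAR):
    """Work backwards from a yearly total to the per-request energy in Wh."""
    return annual_gwh * WH_PER_GWH / (requests_per_day * days)


if __name__ == "__main__":
    direct = annual_energy_gwh(WH_PER_REQUEST, REQUESTS_PER_DAY)
    implied = implied_wh_per_request(REPORTED_ANNUAL_GWH, REQUESTS_PER_DAY)
    print(f"Direct product: {direct:.1f} GWh per year")
    print(f"Per-request energy implied by 310 GWh: {implied:.2f} Wh")
```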

Furthermore, UNESCO calculated that AI energy demand doubles every 100 days as generative AI tools are incorporated into everyday life.
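For a sense of scale, a 100-day doubling time can be turned into a growth multiple over any period. The sketch below assumes smooth exponential growth at a constant doubling rate, which is a simplification and not something the report specifies, and the function name is illustrative. On that assumption, demand would grow by a factor of roughly 12.5 over one year.

```python
DOUBLING_PERIOD_DAYS = 100  # doubling time cited by UNESCO


def growth_factor(days, doubling_period=DOUBLING_PERIOD_DAYS):
    """Multiple by which demand grows after `days`, assuming smooth
    exponential growth with a constant doubling period (an assumption)."""
    return 2 ** (days / doubling_period)


if __name__ == "__main__":
    for days in (100, 200, 365):
        print(f"After {days} days: x{growth_factor(days):.2f}")
```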

“The exponential growth of the computational power required to run these models increases the burden on global energy systems, water resources and critical minerals, raising concerns about environmental sustainability, fair access and competition over limited resources,” the UNESCO report warned.

However, UNESCO was able to cut power usage by almost 90% by reducing the length of the query, or prompt, and using a smaller AI model, without degrading performance.

Many AI models, like ChatGPT, are general-purpose models designed to respond to a wide variety of topics. This means they must sift through a huge amount of information to formulate and evaluate responses.

Using smaller, specialized AI models provides a significant reduction in the power required to generate responses.

So does cutting prompts from 300 words to 150 words.

Already aware of the energy issue, the tech giants all now offer miniature versions of their large language models with fewer parameters.

For example, Google sells Gemma, Microsoft has Phi-3, and OpenAI has GPT-4o mini. French AI companies have done likewise, with Mistral AI introducing its Ministral model.

Published – July 9, 2025 09:19 AM IST