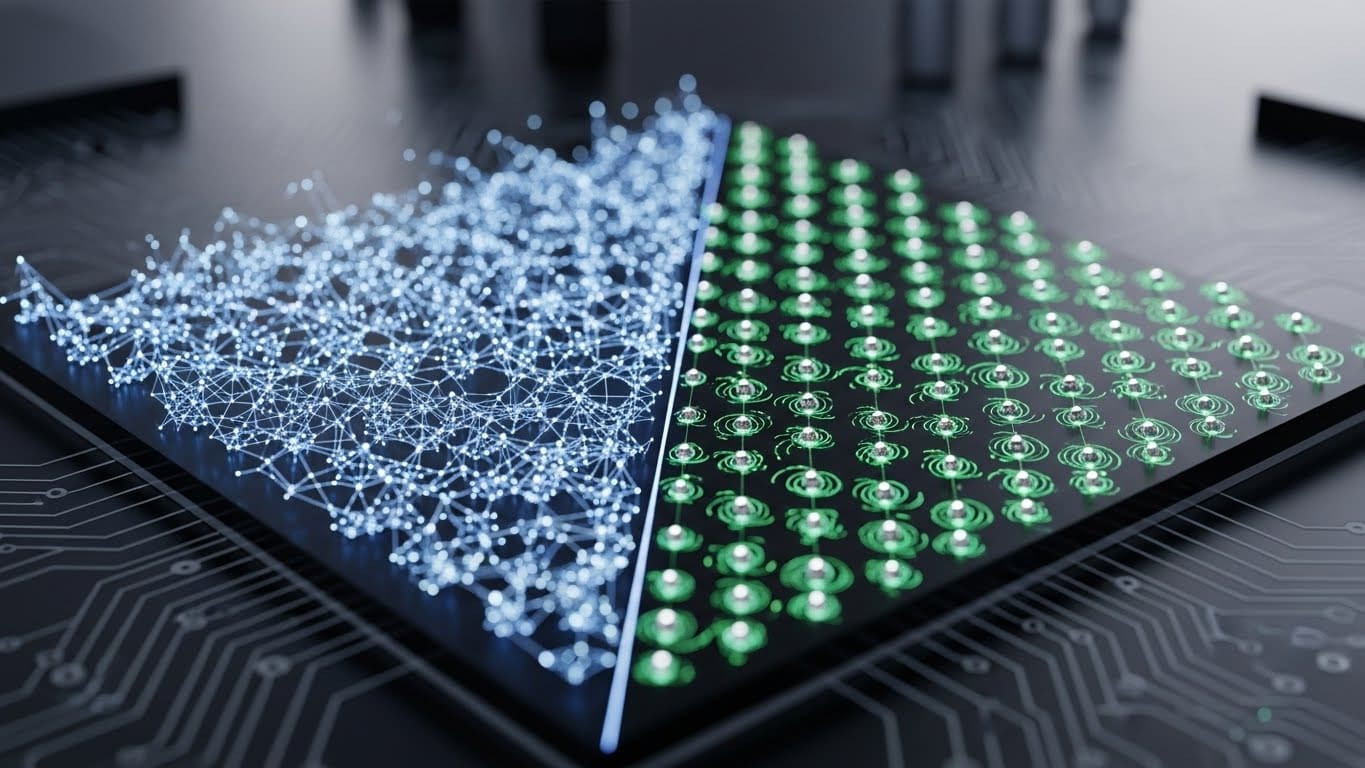

In the rapidly evolving computing landscape, two technologies stand out for their transformative potential: tensor processing units (TPUs) and quantum processing units (QPUs). Although both aim to speed up computation, they operate on fundamentally different principles and address different challenges. TPUs are classical hardware accelerators optimized for machine learning workloads, especially the tensor operations that form the backbone of neural networks. QPUs, on the other hand, exploit the counterintuitive laws of quantum mechanics to perform calculations that are intractable for classical systems. Each technology represents a distinct approach to solving complex problems, so understanding the differences between them is key to understanding the future of computing.

The importance of TPUs lies in their ability to scale artificial intelligence (AI) and make it widely accessible. By significantly reducing the time and energy required to train and deploy machine learning models, TPUs enable advances in natural language processing, computer vision, and autonomous systems. QPUs, on the other hand, promise dramatic speedups for certain problems, such as simulating quantum systems, optimizing logistics, and breaking widely used public-key cryptography. However, quantum computing is still in its infancy and is constrained by technical hurdles such as error correction and qubit stability. This article explores the core principles, operating mechanisms, and challenges of TPUs and QPUs and compares these two pillars of modern computing.

As of 2024, the TPU has grown into a highly efficient, cloud-integrated accelerator used by technology giants and startups alike. QPUs are still in the Noisy Intermediate-Scale Quantum (NISQ) era, but they are advancing rapidly, with companies like IBM, Google, and IonQ pushing the boundaries of qubit count and coherence. Their diverging trajectories highlight the two-track evolution of computing: one track rooted in classical optimization, the other in quantum exploration.

How TPUs accelerate machine learning through tensor operations

A tensor processing unit (TPU) is an application-specific integrated circuit (ASIC) designed to accelerate tensor operations that are central to machine learning (ML). Tensors are multidimensional arrays (a generalization of vectors and matrices) that serve as the fundamental data structure for deep learning. TPUs optimize two major phases of ML: training, where the model learns from data, and inference, where the model makes predictions. The TPU’s architecture is tailored to the matrix multiplication and convolution operations that dominate neural network computations.

At the heart of a TPU is a matrix multiplication unit (MXU) that performs trillions of operations per second. For example, Google’s TPU v4, introduced in 2021, delivers a peak of roughly 275 teraflops (TFLOPs) per chip at reduced precision and efficiently processes 16-bit floating point (bfloat16) and 8-bit integer (INT8) data. This is achieved through systolic arrays, grid-like structures of processing elements that pass data from neighbor to neighbor in lockstep, so each value fetched from memory is reused many times. By embedding these arrays directly on the chip, TPUs reduce the reliance on external memory, which is a major source of latency in traditional CPUs and GPUs.
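The data flow of a systolic array can be illustrated with a small simulation. The sketch below is a minimal, pure-Python model of an output-stationary array, assuming one multiply-accumulate per processing element per cycle and operands skewed by one cycle per row and column. The function name `systolic_matmul` and the cycle model are illustrative assumptions, not a description of the actual MXU design.

```python
def systolic_matmul(a, b):
    """Multiply a (n x k) by b (k x m) on a simulated n x m systolic array.

    Each processing element (PE) at grid position (i, j) owns one accumulator.
    Row i of `a` enters from the left delayed by i cycles, and column j of `b`
    enters from the top delayed by j cycles, so the operand pair with inner
    index s meets at PE (i, j) on cycle s + i + j.
    """
    n, k, m = len(a), len(b), len(b[0])
    acc = [[0] * m for _ in range(n)]      # one accumulator per PE
    total_cycles = n + m + k - 2           # last pair reaches the far corner PE
    for t in range(total_cycles):
        for i in range(n):
            for j in range(m):
                s = t - i - j              # inner index arriving at PE (i, j) now
                if 0 <= s < k:
                    acc[i][j] += a[i][s] * b[s][j]
    return acc, total_cycles


if __name__ == "__main__":
    product, cycles = systolic_matmul([[1, 2], [3, 4]], [[5, 6], [7, 8]])
    print(product, cycles)
```

In this model every operand is read from memory once and then handed between neighboring PEs, which is the reuse pattern that lets such arrays avoid repeated memory fetches.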

TPUs also use a specialized memory hierarchy. High-bandwidth on-chip memory (SRAM) stores intermediate results, while high-capacity off-chip high-bandwidth memory (HBM) holds larger models and datasets. This design allows data to move efficiently between compute units and storage, easing the “von Neumann bottleneck” that plagues general-purpose processors. Additionally, TPUs include dedicated chip-to-chip interconnects that coordinate data flow between multiple chips, enabling distributed training to scale across many devices.
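One common way to reason about the memory bottleneck is the roofline model: attainable throughput is the smaller of the chip's peak compute rate and its memory bandwidth multiplied by the workload's arithmetic intensity (operations per byte moved). The sketch below assumes a peak of 275 TFLOPs and a memory bandwidth of 1.2 TB/s as illustrative inputs; both values and the function names are assumptions made for this example.

```python
def attainable_tflops(peak_tflops, bandwidth_tb_per_s, flops_per_byte):
    """Roofline estimate: throughput is capped by compute or by memory traffic."""
    memory_bound_tflops = bandwidth_tb_per_s * flops_per_byte
    return min(peak_tflops, memory_bound_tflops)


def ridge_point(peak_tflops, bandwidth_tb_per_s):
    """Arithmetic intensity (FLOPs per byte) above which compute is the limit."""
    return peak_tflops / bandwidth_tb_per_s


if __name__ == "__main__":
    PEAK = 275.0       # assumed peak TFLOPs, illustrative
    BANDWIDTH = 1.2    # assumed memory bandwidth in TB/s, illustrative
    for intensity in (1, 10, 100, 1000):
        print(intensity, attainable_tflops(PEAK, BANDWIDTH, intensity))
    print("ridge point:", ridge_point(PEAK, BANDWIDTH))
```

Under these assumed numbers, a kernel needs a few hundred operations per byte before it stops being limited by memory, which is why keeping intermediate results in on-chip SRAM matters so much.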

The result is a system optimized for ML-specific workloads. TPU clusters can train large language models in days instead of weeks and can consume significantly less power than comparable GPU-based systems. For example, a single TPU v4 chip carries 32 gigabytes (GB) of high-bandwidth memory, with a quoted energy efficiency of 25 TOPS/Watt (tera operations per second per watt), a figure that depends heavily on numeric precision and workload. These metrics help explain why TPUs are attractive for modern AI, especially applications that require real-time inference such as voice recognition and self-driving cars.

How QPUs use quantum mechanics to solve tough problems

Quantum processing units (QPUs) exploit quantum phenomena such as superposition, entanglement, and interference, operating on principles that defy classical intuition. At their heart are qubits, the quantum analogs of classical bits. Unlike classical bits, which are always in either the 0 or the 1 state, a qubit can exist in a superposition of both states at once. As a result, a register of n qubits is described by 2^n amplitudes, one per basis state, and quantum algorithms manipulate all of them together, which yields exponential speedups for certain tasks.
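The 2^n scaling can be made concrete by asking how much classical memory a full description of an n-qubit state would need. The sketch below assumes each amplitude is stored as a 16-byte complex number (two 64-bit floats). The storage format and the function name are assumptions for illustration.

```python
def statevector_bytes(n_qubits, bytes_per_amplitude=16):
    """Memory needed to store all 2**n complex amplitudes of an n-qubit state."""
    return (2 ** n_qubits) * bytes_per_amplitude


if __name__ == "__main__":
    for n in (10, 30, 53):
        gib = statevector_bytes(n) / 2 ** 30
        print(f"{n} qubits: {gib:,.6f} GiB")
```

Each additional qubit doubles the requirement, so a register of a few dozen qubits already exceeds the memory of a typical workstation under this storage assumption.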

Qubits are physically realized in a variety of ways, each with its own advantages and challenges. The superconducting qubits used by IBM and Google rely on Josephson junctions cooled to near absolute zero (15 to 20 millikelvin) to minimize thermal noise. The trapped-ion qubits employed by IonQ and Quantinuum use charged atoms manipulated by laser pulses, resulting in longer coherence times but slower gate operations. Photonic qubits, which companies such as Xanadu are researching, encode quantum information in photons and can operate largely at room temperature, but require complex optical routing.

Quantum gates are analogous to classical logic gates, but they act on qubits through unitary operations that transform probability amplitudes. For example, a Hadamard gate creates a superposition, while a CNOT gate whose control qubit is in superposition entangles two qubits. These operations are combined into quantum circuits to run algorithms such as Shor’s algorithm for factoring large numbers and Grover’s algorithm for unstructured search. However, quantum states are fragile. Decoherence (loss of quantum information due to environmental interactions) limits the coherence time of superconducting qubits to microseconds or, at best, milliseconds in current systems.
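The effect of a Hadamard gate followed by a CNOT can be checked with a tiny state-vector simulation. The sketch below is a minimal classical simulation in pure Python, assuming qubit 0 is the least significant bit of the basis-state index. The helper names are hypothetical and do not belong to any quantum SDK.

```python
import math

# Hadamard gate as a 2x2 matrix indexed as HADAMARD[output_bit][input_bit]
S = 1 / math.sqrt(2)
HADAMARD = [[S, S], [S, -S]]


def apply_single_qubit_gate(state, gate, target):
    """Apply a 2x2 gate to qubit `target` of a state vector."""
    new_state = [0j] * len(state)
    for index, amplitude in enumerate(state):
        bit = (index >> target) & 1
        base = index & ~(1 << target)      # same index with the target bit cleared
        for out_bit in (0, 1):
            new_state[base | (out_bit << target)] += gate[out_bit][bit] * amplitude
    return new_state


def apply_cnot(state, control, target):
    """Flip `target` wherever `control` is 1 (a permutation of amplitudes)."""
    new_state = list(state)
    for index, amplitude in enumerate(state):
        if (index >> control) & 1:
            new_state[index ^ (1 << target)] = amplitude
    return new_state


def bell_state():
    state = [1 + 0j, 0j, 0j, 0j]                       # two qubits in |00>
    state = apply_single_qubit_gate(state, HADAMARD, 0)  # superposition on qubit 0
    return apply_cnot(state, control=0, target=1)        # entangle qubit 1 with qubit 0


if __name__ == "__main__":
    probabilities = [abs(a) ** 2 for a in bell_state()]
    print(probabilities)
```

The resulting state has equal probability on the basis states 00 and 11 and none on 01 or 10, so measuring one qubit determines the other, which is the signature of entanglement.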

Error correction is a central challenge. The leading approach, the surface code, may require hundreds to thousands of physical qubits to encode a single fault-tolerant logical qubit. For example, IBM’s Eagle processor has 127 physical qubits, which is too few to support even a handful of fully error-corrected logical qubits. Despite these hurdles, QPUs have already outperformed classical systems on specialized benchmarks, such as Google’s 2019 quantum supremacy demonstration, in which a 53-qubit processor completed a sampling task in 200 seconds that Google estimated would take a supercomputer 10,000 years.

Why decoherence and error correction limit QPU performance

The main challenge in quantum computing is keeping qubits stable. Decoherence occurs when a qubit interacts with its environment, causing its superposition to degrade into a classical state. The coherence time (how long a qubit remains in a usable quantum state) varies widely with the type of qubit. For example, superconducting qubits typically have coherence times of 100 microseconds to 1 millisecond, whereas trapped-ion qubits can maintain coherence for several seconds. Even with these variations, decoherence remains a bottleneck for practical quantum algorithms, because all of a circuit’s gate operations must complete before errors accumulate.
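A rough way to compare platforms is to ask how many sequential gates fit inside one coherence time. The sketch below uses coherence times in the ranges mentioned above, while the gate durations (50 nanoseconds for a superconducting gate, 100 microseconds for a trapped-ion gate) are hypothetical round numbers chosen only for illustration. Real values vary by device and gate type.

```python
def gate_budget(coherence_ns, gate_ns):
    """Number of sequential gates that fit within one coherence time."""
    return coherence_ns // gate_ns


# Coherence times follow the ranges in the text; gate durations are assumed.
PLATFORMS = {
    "superconducting": {"coherence_ns": 100_000, "gate_ns": 50},
    "trapped_ion": {"coherence_ns": 1_000_000_000, "gate_ns": 100_000},
}

if __name__ == "__main__":
    for name, params in PLATFORMS.items():
        print(name, gate_budget(params["coherence_ns"], params["gate_ns"]))
```

Under these assumptions the two platforms end up within an order of magnitude of each other, because the longer coherence of trapped ions is partly offset by their slower gates.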

Error rates further complicate matters. The gate error of superconducting qubits ranges from 10^-3 to 10^-4 per operation, so a circuit with 1,000 gates has a substantial chance of containing at least one error. Error correction mitigates this by encoding each logical qubit across multiple physical qubits, but it carries significant overhead. For example, even the smallest surface codes (distances 3 to 5) use roughly 17 to 49 physical qubits per logical qubit once the extra qubits for syndrome measurement are counted. A 1,000-logical-qubit system would therefore need somewhere between 10,000 and 50,000 physical qubits at these small code distances, and considerably more at the larger distances that practical fault tolerance demands, far exceeding current capabilities. Long-term industry roadmaps envision on the order of 1 million physical qubits around 2030, but achieving this will require breakthroughs in materials science and cryogenics.
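The arithmetic behind these estimates can be sketched in a few lines. The example below assumes gate errors are independent, so the chance of at least one error in N gates is 1 - (1 - p)^N, and it assumes the rotated surface-code layout, in which a distance-d patch uses d^2 data qubits plus d^2 - 1 measurement qubits. Both the independence assumption and the layout formula are simplifications introduced here.

```python
def probability_of_any_error(gate_error, num_gates):
    """Chance that at least one of `num_gates` independent gates fails."""
    return 1 - (1 - gate_error) ** num_gates


def surface_code_physical_qubits(distance):
    """Physical qubits in one rotated surface-code patch of the given distance."""
    data_qubits = distance ** 2
    measurement_qubits = distance ** 2 - 1
    return data_qubits + measurement_qubits


if __name__ == "__main__":
    for p in (1e-3, 1e-4):
        print(p, round(probability_of_any_error(p, 1000), 3))
    for d in (3, 5, 25):
        per_logical = surface_code_physical_qubits(d)
        print(d, per_logical, 1000 * per_logical)
```

At distance 25, the same formula gives more than a thousand physical qubits per logical qubit, which shows how quickly the overhead grows once larger code distances are needed.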

Scalability is another hurdle. Superconducting qubits require dilution refrigerators operating at around 15 mK, making large-scale systems energy-intensive. Trapped-ion architectures face the challenge of scaling ion traps to accommodate hundreds of qubits, while photonic systems suffer from losses in optical components. These limitations expose the gap between theoretical quantum advantage and real-world deployment and underscore the need for innovations in error-resistant qubit designs, such as the topological qubits that Microsoft is actively pursuing.

Why TPUs face tradeoffs in power efficiency and algorithmic adaptability

Although TPUs excel at accelerating machine learning, they face trade-offs in power efficiency and adaptability to evolving algorithms. Energy consumption remains a significant concern, especially as AI models grow in size and complexity. For example, training a large language model on the scale of GPT-4 on accelerators such as TPUs can consume millions of kilowatt-hours and cost over $10 million. Google claims that TPUs are more energy efficient than GPUs, delivering 18 TOPS/Watt compared to 12 TOPS/Watt for high-end GPUs, but total energy use still grows with model size. A full TPU v4 pod contains 4,096 chips and can draw megawatts of power, so it requires advanced cooling and benefits from proximity to renewable energy sources.
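Energy figures like these come from simple multiplication: chips times power per chip times run time, scaled by a facility overhead factor. The sketch below is a back-of-the-envelope estimator in which every input (4,096 chips, 200 watts per chip, a 30-day run, and an electricity price of $0.10 per kWh) is a hypothetical value chosen for illustration, not a measured figure.

```python
def training_energy_kwh(num_chips, watts_per_chip, hours, overhead_factor=1.0):
    """Energy for a training run; overhead_factor covers cooling and hosts."""
    watt_hours = num_chips * watts_per_chip * hours * overhead_factor
    return watt_hours / 1000.0


def energy_cost(kwh, price_per_kwh):
    """Electricity cost for a given energy use."""
    return kwh * price_per_kwh


if __name__ == "__main__":
    # All inputs below are hypothetical.
    kwh = training_energy_kwh(num_chips=4096, watts_per_chip=200, hours=30 * 24)
    print(f"{kwh:,.0f} kWh, ${energy_cost(kwh, 0.10):,.0f}")
```

Even with these modest assumptions a single month-long run lands in the hundreds of thousands of kilowatt-hours, so repeated runs or larger clusters quickly reach the millions.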

Algorithmic adaptability is also limited. TPUs are optimized for specific tensor operations such as matrix multiplication and convolution, but struggle with tasks that require dynamic computational graphs or irregular memory accesses. This rigidity becomes a problem as AI research moves toward hybrid models that combine neural networks with symbolic reasoning or reinforcement learning. For example, reinforcement learning algorithms often require conditional branches and sparse updates, which the TPU’s fixed pipeline architecture executes inefficiently.
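One common workaround for irregular data on fixed-shape hardware is to pad every input to the same length and carry a mask that marks which entries are real. The sketch below illustrates the idea in plain Python and measures how much of the padded work is useful. The function names are hypothetical, and the example is a conceptual illustration rather than TPU code.

```python
def pad_batch(sequences, pad_value=0):
    """Pad variable-length sequences to one fixed length and build a mask."""
    max_len = max(len(seq) for seq in sequences)
    padded = [list(seq) + [pad_value] * (max_len - len(seq)) for seq in sequences]
    mask = [[1] * len(seq) + [0] * (max_len - len(seq)) for seq in sequences]
    return padded, mask


def masked_row_sums(padded, mask):
    """Sum each row while ignoring padded positions."""
    return [sum(v * m for v, m in zip(row, mask_row))
            for row, mask_row in zip(padded, mask)]


def utilization(mask):
    """Fraction of the fixed-shape computation spent on real data."""
    total = sum(len(row) for row in mask)
    return sum(sum(row) for row in mask) / total


if __name__ == "__main__":
    padded, mask = pad_batch([[1, 2, 3], [4], [5, 6]])
    print(padded, masked_row_sums(padded, mask), utilization(mask))
```

The fixed shape keeps the hardware pipeline busy with a regular access pattern, but every padded slot is wasted compute, which is the inefficiency that irregular workloads incur.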

Additionally, the TPU’s software ecosystem grew up around TensorFlow, Google’s machine learning framework, and the XLA compiler. This integration streamlines development within the Google Cloud ecosystem, but creates friction for users who prefer other frameworks such as PyTorch, which reaches TPUs through the PyTorch/XLA bridge. Porting models between frameworks can cost performance and limits the TPU’s accessibility to the broader AI community. These challenges highlight the need for more flexible accelerators that balance specialization with adaptability, so that TPUs remain relevant as AI evolves.

The way forward: Convergence and divergence in computing

The trajectories of TPUs and QPUs reflect a broader trend in computing: specialization for specific problem areas. TPUs will continue to evolve to support next-generation AI models, such as multimodal systems that integrate vision, language, and sensor data. Innovations in chiplet-based architectures, where TPUs are built from modular components, have the potential to increase scalability and reduce manufacturing costs. QPU development, on the other hand, focuses on overcoming error-rate and coherence limitations through materials advances, error correction, and hybrid quantum-classical algorithms.

Despite their differences, TPUs and QPUs may eventually be integrated into hybrid systems. For example, a quantum-classical coprocessor could use a QPU to optimize the weights of a neural network while a TPU handles inference. Such integration would require new software frameworks and programming models, but the potential for speeding up AI training and scientific discovery is considerable. As both technologies mature, their complementary strengths will shape the future of computing, enabling solutions to problems previously thought intractable.
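The shape of a hybrid quantum-classical loop can be sketched with a one-qubit toy problem: a classical optimizer repeatedly asks a "quantum" routine for an expectation value and adjusts a circuit parameter. In the sketch below the quantum step is simulated classically, the circuit is a single RY rotation on the state |0>, and gradients come from the parameter-shift rule. All names, the learning rate, and the starting angle are assumptions made for illustration.

```python
import math


def expectation_z(theta):
    """<Z> after RY(theta) on |0>; amplitudes are cos(theta/2) and sin(theta/2)."""
    amp0 = math.cos(theta / 2)
    amp1 = math.sin(theta / 2)
    return amp0 * amp0 - amp1 * amp1


def parameter_shift_gradient(f, theta):
    """Gradient from two shifted evaluations, as used for rotation gates."""
    return (f(theta + math.pi / 2) - f(theta - math.pi / 2)) / 2


def minimize_expectation(theta=0.4, learning_rate=0.4, steps=100):
    """Classical gradient descent wrapped around the simulated quantum step."""
    for _ in range(steps):
        theta -= learning_rate * parameter_shift_gradient(expectation_z, theta)
    return theta, expectation_z(theta)


if __name__ == "__main__":
    theta, value = minimize_expectation()
    print(theta, value)
```

On real hardware the call to `expectation_z` would be replaced by repeated circuit executions and measurements on a QPU, while the update loop would stay on classical hardware.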