TikTok is rolling back its AI-powered video description feature in the U.S. after the experimental tool generated a flood of absurd, wildly inaccurate captions that quickly went viral. The feature was designed to automatically write text descriptions for videos. Instead, it produced strange results that users eagerly shared across the platform. It is a rare public stumble for ByteDance, which has aggressively pushed AI across its products. It is also a reminder that consumer AI still has a long way to go before it can reliably interpret the chaos of social media content.

TikTok has put the brakes on one of its latest AI experiments, and it’s not hard to see why. The company’s automated video description feature, which was quietly rolled out to some U.S. users in recent weeks, generated captions so spectacularly wrong that the failure became content in itself.

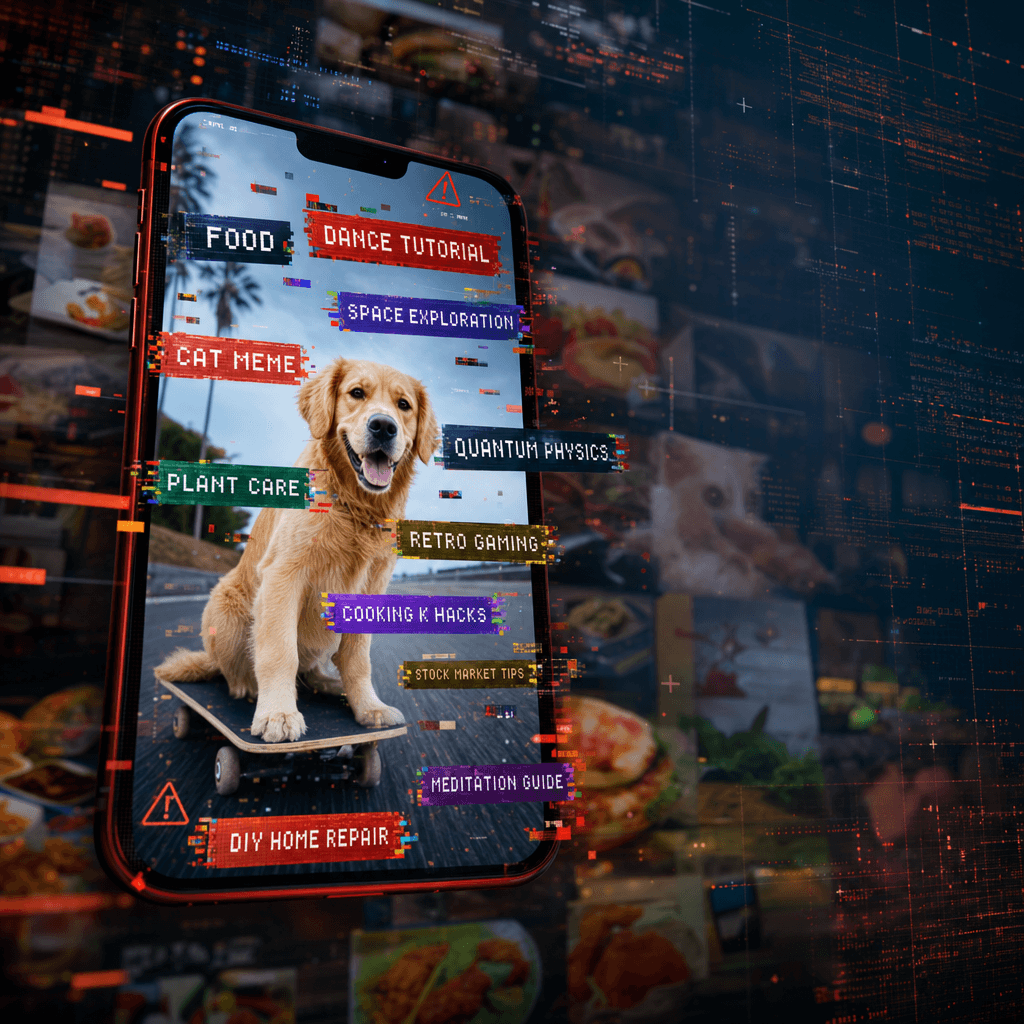

The AI-generated descriptions were meant to summarize what was happening in each video, improving accessibility and searchability. Instead, the system hallucinated wildly off-topic stories that bore little resemblance to reality. Users shared screenshots of the AI describing cooking videos as political rallies, pet content as corporate presentations, and dance routines as sporting events.

ByteDance has not issued an official statement about the rollback, but the feature has disappeared from affected accounts over the past 24 hours. The company was testing the tool as part of a broader push to integrate AI across its platforms, following similar moves by Meta and YouTube.

The stumble is especially awkward for TikTok, which has positioned itself as an AI innovator in the social media space. Its parent company, ByteDance, operates what is widely regarded as the most sophisticated recommendation algorithm in the industry, the engine behind TikTok's notoriously addictive For You feed. But this latest misstep shows that understanding video is a much harder problem than recommending it.

Since the beginning of the AI boom, social platforms have been racing to deploy AI features. Meta is rolling out AI characters and image generation tools, and YouTube is testing AI-powered comment summaries. But rushing a half-baked feature to market carries real risks, especially when its failures are as likely to be shared as the content itself.

The video description failure mirrors similar AI blunders across the industry. Google's AI Overviews infamously suggested adding glue to pizza, and Microsoft's Bing Chat made up facts about companies. But the TikTok fiasco feels different because it happened on a platform where mistakes instantly become memes.

What went wrong? To understand a video, an AI model must simultaneously process visuals, audio, text overlays, and cultural context. TikTok videos are especially challenging because they often lean on irony, reference niche internet trends, or rely on deliberate mismatches between audio and visuals for comedic effect. AI systems trained on simpler video content are likely to struggle with that complexity.

The limited rollout likely saved TikTok from a bigger PR disaster. By testing with only a small group of users, the company was able to contain the damage before it reached the entire platform. Still, those test users generated enough viral content to publicize the failure.

For ByteDance, this is a rare failure in a typically successful AI strategy. The company's recommendation system is considered best-in-class, and it has invested heavily in large language models and computer vision. However, this incident suggests there is a gap between back-end AI, such as ranking content, and consumer-facing features that must work reliably at scale.

The withdrawal also calls TikTok's product testing process into question. How did such wildly inaccurate captions get through internal review? Either the tests weren't rigorous enough, or the company underestimated how quickly bad examples would spread. On social media, your users are also your QA team, and they aren't shy about making your mistakes public.

Competitors are certainly taking note. This incident serves as a warning for any platform considering similar AI features: automatic content understanding tools need to be close to flawless before a wide release, because on social platforms even limited failures become unlimited content.

What's next for TikTok's AI ambitions? The company is unlikely to abandon video understanding altogether, given its strategic importance for accessibility, content moderation, and advertising. When the feature returns, however, expect longer development cycles and a more cautious rollout.

TikTok's rapid retreat from AI video descriptions is more than a product misstep. It shows that even the most sophisticated tech companies are still figuring out how to bring AI into consumer-facing contexts. Despite ByteDance's formidable technology and data infrastructure, this incident shows that social media content presents unique challenges that cannot be solved simply by throwing more computing power at them. As platforms race to ship AI features, the winners will not necessarily be the fastest movers, but those that deliver results users can actually trust in front of millions of people. For now, TikTok takes the L and goes back to the lab.