What began as a scientific advance has now become a complex security threat.

In a nutshell

- Generative AI is accelerating despite ethical and security concerns

- As systems lose accuracy and restraint, the spread of misinformation accelerates

- Military and surveillance applications blur the line between defense and control

In May 2023, artificial intelligence pioneer Geoffrey Hinton parted ways with tech giant Google after more than a decade. Hinton, who went on to win the 2024 Nobel Prize in Physics, warned about the dangers of AI-powered chatbot technology, which is largely based on research on neural networks.

Hinton said people are already so inundated with fake photos, videos and texts on the internet that they risk losing the ability to distinguish between what is real and what is imagined. He added that a far greater danger comes from large-scale job losses and the automation of war. He once believed that it would take another 30 to 50 years for AI to surpass human intelligence, but he no longer holds that view.

Hinton is joined by prominent figures such as Elon Musk, Stuart Russell and Steve Wozniak, along with thousands of other researchers and executives, who are calling for a moratorium of at least six months on training the most powerful AI systems. “Powerful AI systems should be developed only once we are confident that their effects will be positive and their risks will be manageable,” their open letter reads. “If such a pause cannot be enacted quickly, governments should step in and institute a moratorium.”

The letter currently has approximately 34,000 signatories. AI critics fear that through machine learning, this technology could reach the level of superintelligence, surpassing human cognitive abilities in virtually all relevant fields, and eventually dominating humanity.

There is no broad consensus on whether thinking machines will ever be able to define not only their methods but also their ends, a capacity that is unique to human intelligence. The debate is often shaped by two basic assumptions: that non-human systems operate in ways comparable to the human mind, and that intelligence exists independently of the social, cultural and historical conditions that give it meaning.

Spreading disinformation through AI

Despite numerous warnings, big technology companies are not slowing down in their pursuit of superintelligence. On the contrary, competition only spurs them on. The absence of a binding international legal framework means that there are no real constraints on research in this area.

It is widely acknowledged that AI has extraordinary transformative power, likened to the transition from hunter-gatherers to sedentary farmers and ranchers, or the invention of the printing press. It is becoming clear that AI will change the world. But while we can guess with some accuracy what will change in the short term, medium- or long-term predictions are much less reliable.

The transformation already underway is dramatic. Hinton’s warning that humans may soon be unable to tell truth from falsehood is being borne out every day. Recent research contradicts the idea that generative AI systems can reliably identify and correct misinformation on social media. In fact, the likelihood that these tools spread false claims about current events has nearly doubled in one year. In one study, 35 percent of analyzed AI-generated answers contained falsehoods, while the share of questions left unanswered fell from 31 percent in August 2024 to zero. In other words, even when the data needed to support an answer is lacking, the AI will still provide one.

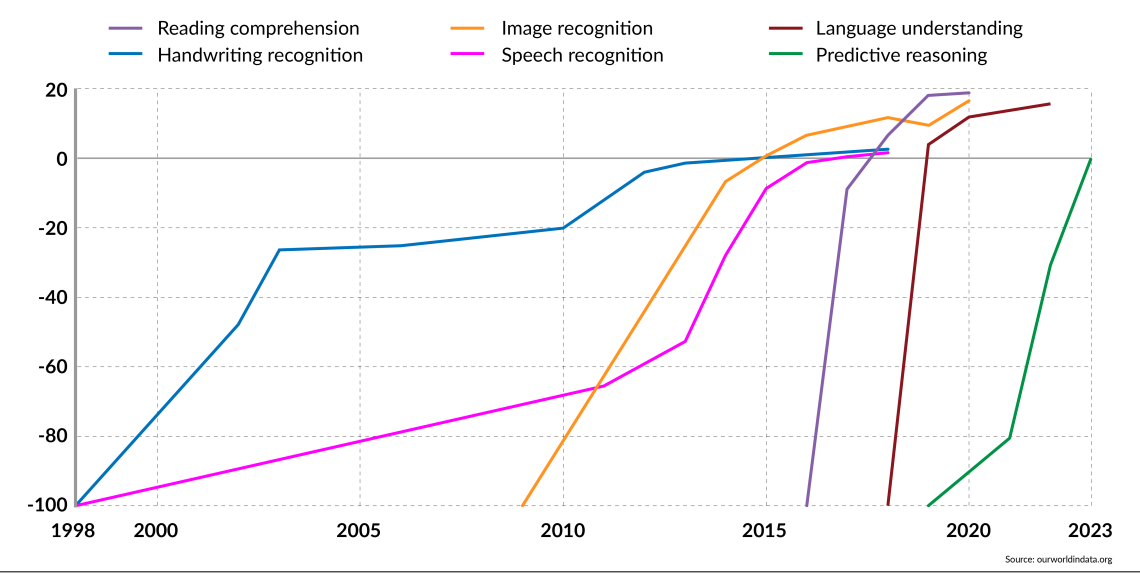

Facts and figures

AI test scores compared to human performance

Far from recognizing their limitations, these systems are increasingly becoming conduits for misinformation. Disinformation actors flood the web with fabricated content through obscure websites, social media posts and AI-generated content farms, which chatbots cannot distinguish from trusted outlets. Ironically, efforts to make these systems more current and useful have made them far more vulnerable to manipulation and propaganda.

AI gives disinformation actors much greater reach and influence with minimal cost and effort compared to traditional propaganda tools such as radio, television, and print. As the lines between truth and falsehood become blurred, public trust in democratic institutions erodes, creating more room for extremist narratives to take hold. In this sense, AI has become an influential tool for both authoritarian governments and non-state groups seeking to undermine democratic resilience and advance their own agendas.

Using AI for political purposes

The more frequently misinformation circulates, the more familiar and trustworthy it seems. The term “disinformation,” first used during the Stalin era, itself derives from the context of asymmetric warfare. The Soviet Union sought to offset its economic and military disadvantages relative to the United States through the strength of its covert operations and the manipulation of Western public opinion. Russia later refined these techniques and conducted large-scale disinformation campaigns during the 2008 war in Georgia and even more broadly during the war in Ukraine.

In 2013, the late Wagner Group founder Yevgeny Prigozhin established the Internet Research Agency in St. Petersburg and began flooding social networks with bots, trolls, fake websites and purported experts to spread the Russian narrative about the threat that NATO and Ukraine pose to Russia.

A report to the U.S. Congress in January said the Russian government aims to “undermine confidence in U.S. democratic institutions, exacerbate sociopolitical divisions in the United States, and weaken Western support for Ukraine.” All Russian foreign intelligence agencies have cyber units that carry out various espionage, sabotage and disinformation operations. This shadow war is part of Russia’s hybrid warfare, which aims to attack the West through conventional and unconventional tactics without risking all-out war.

With AI, some influence operations can be run from a standard office computer, reducing the need for a physical presence. German intelligence reports say the Russian government is using messaging channels such as Telegram to mobilize pro-Russian youth in Germany to commit arson and sabotage, and that both Russian and Chinese services have penetrated the digital systems of critical infrastructure, which could allow them to disrupt power and rail networks. Unlike Western services, actors supported by authoritarian regimes often operate with fewer legal and parliamentary constraints, which can make their activities difficult to monitor and regulate.

The United States and China are far ahead of other competitors in the global race for AI, but both face increasing economic and security pressures. China is pouring vast resources into research, development, and infrastructure to expand its AI-driven industries. Meanwhile, the United States has introduced trade restrictions, export controls, and tariffs to protect its technological advantages and restrict China’s access to critical AI components. Several Chinese companies have been placed on a U.S. blacklist due to national security concerns.

AI and hybrid warfare

Palantir co-founder Alex Karp has criticized Silicon Valley for focusing on consumer software rather than reliable deterrence tools and accused Washington of failing to understand the security implications of rapid technological change. In 2024, the U.S. Department of Defense allocated $1.8 billion for AI research and development, just 0.2 percent of its $886 billion budget.

It has become clear that NATO, despite its state-of-the-art weaponry, is not in a position to adequately repel drone attacks. On the night of September 10, a swarm of 19 Russian suicide drones flew over the territory of NATO member Poland for the first time. Polish and Dutch fighters, supported by an Italian reconnaissance plane and two German Patriot anti-aircraft systems, managed to shoot down only four of them. This was a massive and costly effort to counter a threat that Ukrainians have faced daily since the Russian invasion began in February 2022.

In a single night, on September 7, Russia deployed more than 800 combat drones and 13 missiles, of which the Ukrainian military was able to intercept 747 drones and 4 cruise missiles. This was the largest drone attack in history to date. In the Russia-Ukraine war, 70 to 80 percent of daily combat losses on both sides are caused by drones. NATO has slept through the beginning of a new era in warfare.

Killer robots were used for the first time in March 2020, during the Libyan civil war. The weapon was a Turkish-made Kargu-2, a drone that can navigate to and kill targets autonomously and that can be operated either manually or automatically. African countries are currently using such drones against rebel groups. Turkiye, India and a majority of the world’s other states oppose limits and regulations on lethal autonomous weapons systems.

Karl-Peter Schwarz Other works

Israel uses the Lavender database for targeted killings of terrorists. The database, with the help of an AI targeting system, identified tens of thousands of members of Hamas and other Palestinian groups among the Gaza Strip’s approximately 2.3 million residents. Such systems are trained on data such as gender, age, physical appearance, movement patterns and social network activity.

While the EU intensely debates data sovereignty and individual control over personal information, the reality is that people’s data is already widely collected, analyzed and traded. All apps leave digital traces and many users willingly share sensitive information. Beyond that, data from smartphone sensors such as accelerometers, gyroscopes and magnetometers can be analyzed to identify individuals with high accuracy.

Scenarios

Possible: A widening technological gap

AI technology continues to evolve. Countries with know-how and sufficient computing power, such as the United States and China, will expand their economic and military advantages over smaller countries, and over most of the world’s nations in particular. This will increase global inequality and spark new conflicts.

Unlikely: A global ban on autonomous weapons

As things stand, it is unlikely that research and production of autonomous weapons systems can be halted by international agreement. This could change if the international community became fully aware of the dangers, for example if lethal autonomous weapons systems programmed to select targets by genetic criteria were used for ethnic cleansing in war.

Likely: Violation of privacy and democratic freedoms

For reasons of external and internal security, no state, not even a democracy, can permanently forgo the possibility of surveillance by AI systems. Citizens will be expected to accept significant restrictions on their freedoms within the framework of a technocratic dictatorship.
