SVM algorithm

SVM is a supervised learning algorithm widely used for classification and regression analysis23. Based on statistics and machine learning theories, SVM aims to find an optimal hyperplane that accurately separates data points from different categories to achieve classification.

Given a training dataset containing two classes of data points, SVM seeks a hyperplane that separates them. This hyperplane can be described by the classification function \(\:f\left(x\right)={w}^{T}x+b\). The distance between a data point \(\:x\) and the hyperplane is \(\:\left|f\left(x\right)\right|/||w||\), where \(\:||w||\) is the norm of the weight vector \(\:w\)24. For data points \(\:{x}_{1}\) and \(\:{x}_{2}\) belonging to different categories, the sum of their distances from the hyperplane is \(\:\left(\left|f\left({x}_{1}\right)\right|+\left|f\left({x}_{2}\right)\right|\right)/||w||\). When \(\:{x}_{1}\) and \(\:{x}_{2}\) are the points of each class closest to the hyperplane, this sum is called the margin. The core optimization goal in SVM is to maximize the margin by adjusting the weight vector \(\:w\) and the bias term \(\:b\)25.
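As a minimal sketch of the point-to-hyperplane distance described above, the following NumPy snippet evaluates \(f(x)\) and \(|f(x)|/||w||\). The function names and the numerical values of `w`, `b`, and `x` are illustrative assumptions, not values from the study.

```python
import numpy as np

def decision_value(w, b, x):
    """Evaluate the classification function f(x) = w^T x + b."""
    return float(np.dot(w, x) + b)

def distance_to_hyperplane(w, b, x):
    """Geometric distance |f(x)| / ||w|| from x to the hyperplane."""
    return abs(decision_value(w, b, x)) / float(np.linalg.norm(w))

# Illustrative values only (assumed, not taken from the dataset)
w = np.array([3.0, 4.0])
b = -5.0
x = np.array([3.0, 4.0])

dist = distance_to_hyperplane(w, b, x)  # f(x) = 20 and ||w|| = 5
```

Dividing by \(||w||\) makes the distance independent of how \(w\) and \(b\) are scaled, which is why the margin can later be normalized.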

Given a training sample set \(\:\left\{({x}_{1},{y}_{1}),({x}_{2},{y}_{2}),\dots,({x}_{n},{y}_{n})\right\}\), where \(\:{x}_{i}\) is the feature vector and \(\:{y}_{i}\in\{-1,+1\}\) is the category label, the goal is to maximize the margin \(\:M\) under the normalization in Eq. (1):

$$\:\underset{i=1,\dots,n}{\min}\:{y}_{i}\left({w}^{T}{x}_{i}+b\right)=1.$$

(1)

Here, \(\:(w,b)\) are the parameters of the hyperplane. The maximum margin \(\:M\) is related to \(\:w\) through its norm \(\:||w||\) by the formula \(\:M=\frac{2}{||w||}\).

Maximizing \(\:M=\frac{2}{||w||}\) is equivalent to minimizing \(\:\frac{1}{2}{||w||}^{2}\), so the objective function can be rewritten as Eq. (2):

$$\:{min}_{w,b}\frac{1}{2}{||w||}^{2}.$$

(2)

The constraints in Eq. (3) must be satisfied:

$$\:{y}_{i}({w}^{T}{x}_{i}+b)\ge\:1.$$

(3)

$$\:i=\text{1,2},\dots\:,n.$$

The SVM optimization problem is thus a convex quadratic program that can be solved using Lagrange multipliers26,27. Solving it yields the optimal weight vector \(\:w\) and bias \(\:b\), which determine the hyperplane that maximizes the margin28. In the Lagrange multiplier method, a non-negative multiplier \(\:{\alpha}_{i}\ge 0\) is introduced for each constraint (the sums below run over the \(\:m\) training samples, i.e., \(\:m=n\) in the notation above), resulting in the Lagrangian function:

$$\:L(w,b,\alpha)=\frac{1}{2}{||w||}^{2}-\sum_{i=1}^{m}{\alpha}_{i}\left({y}_{i}\left({w}^{T}{x}_{i}+b\right)-1\right).$$

(4)

Setting the partial derivatives of \(\:L(w,b,\alpha)\) with respect to \(\:w\) and \(\:b\) to zero gives:

$$\:w=\sum_{i=1}^{m}{\alpha}_{i}{y}_{i}{x}_{i},\quad\sum_{i=1}^{m}{\alpha}_{i}{y}_{i}=0.$$

(5)

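Substituting these conditions back into the Lagrangian eliminates \(\:w\) and \(\:b\) and leaves a problem in the multipliers \(\:{\alpha}_{i}\) alone: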

$$\:\left\{\begin{array}{c}\min\limits_{\alpha}\:\frac{1}{2}\sum_{i=1}^{m}\sum_{j=1}^{m}{\alpha}_{i}{\alpha}_{j}{y}_{i}{y}_{j}{x}_{i}^{T}{x}_{j}-\sum_{i=1}^{m}{\alpha}_{i}\\\:\text{s.t.}\:\sum_{i=1}^{m}{\alpha}_{i}{y}_{i}=0,\:{\alpha}_{i}\ge 0,\:i=1,2,\dots,m\end{array}.\right.$$

(6)

Negating the objective turns this minimization into an equivalent maximization, which gives the dual form shown in Eq. (7).

$$\:\left\{\begin{array}{c}\max\limits_{\alpha}\:\sum_{i=1}^{m}{\alpha}_{i}-\frac{1}{2}\sum_{i=1}^{m}\sum_{j=1}^{m}{\alpha}_{i}{\alpha}_{j}{y}_{i}{y}_{j}{x}_{i}^{T}{x}_{j}\\\:\text{s.t.}\:\sum_{i=1}^{m}{\alpha}_{i}{y}_{i}=0,\:{\alpha}_{i}\ge 0,\:i=1,2,\dots,m\end{array}.\right.$$

(7)

The final model is given by Eq. (8).

$$\:f\left(x\right)={w}^{T}x+b=\sum\:_{i=1}^{m}\:{\alpha\:}_{i}{y}_{i}{x}_{i}^{T}x+b.$$

(8)
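To illustrate how a dual solution determines the final model, the sketch below recovers \(w\) and \(b\) from given multipliers and evaluates Eq. (8). The two-point dataset and the multipliers \(\alpha=(0.5,0.5)\) are a hypothetical toy case chosen because its dual optimum can be verified by hand; no QP solver is shown, and the helper names are assumptions.

```python
import numpy as np

# Hypothetical toy problem: one point per class on the x-axis
X = np.array([[1.0, 0.0], [-1.0, 0.0]])
y = np.array([1.0, -1.0])
alpha = np.array([0.5, 0.5])  # dual optimum for this toy case (maximizes 2a - 2a^2)

def recover_primal(alpha, X, y):
    """w = sum_i alpha_i y_i x_i; b from a support vector (alpha_i > 0)."""
    w = (alpha * y) @ X
    s = int(np.argmax(alpha))   # index of one support vector
    b = y[s] - X[s] @ w         # from y_s (w^T x_s + b) = 1 with y_s in {-1, +1}
    return w, float(b)

def decision_function(alpha, X, y, b, x_new):
    """f(x) = sum_i alpha_i y_i x_i^T x + b, as in Eq. (8)."""
    return float((alpha * y) @ (X @ x_new) + b)

w, b = recover_primal(alpha, X, y)
margin = 2.0 / np.linalg.norm(w)
f_new = decision_function(alpha, X, y, b, np.array([2.0, 3.0]))
```

Only samples with \(\alpha_i>0\) (the support vectors) contribute to the sums, so prediction cost depends on the number of support vectors rather than on the full training set.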

The introduction of polynomial kernel functions allows for a polynomial mapping of the original features. This significantly improves SVM’s ability to handle nonlinear problems, as expressed in Eq. (9):

$$\:K({x}_{i},{x}_{j})={\left({x}_{i}^{T}{x}_{j}+c\right)}^{d},$$

(9)

where \(\:{x}_{i}\) and \(\:{x}_{j}\) are the feature vectors of input samples, \(\:c\) is a constant offset that balances the influence of lower-order and higher-order terms, and \(\:d\) is the polynomial degree, which sets the highest order of feature combinations29,30.
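A short sketch of the polynomial kernel of Eq. (9), computed for all sample pairs at once. The default values `c=1.0` and `d=2` and the sample matrix are assumptions made for illustration; the study does not state the values it used.

```python
import numpy as np

def polynomial_kernel(A, B, c=1.0, d=2):
    """Gram matrix K[i, j] = (a_i^T b_j + c) ** d for rows a_i of A and b_j of B."""
    return (A @ B.T + c) ** d

# Two illustrative feature vectors (assumed values)
X = np.array([[1.0, 2.0],
              [3.0, 4.0]])
K = polynomial_kernel(X, X, c=1.0, d=2)
```

In the dual problem and in Eq. (8), replacing every inner product \(x_i^{T}x_j\) with \(K(x_i,x_j)\) yields a nonlinear decision boundary without constructing the polynomial features explicitly.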

CNN

2D-CNNs

CNNs excel at extracting high-level features and can easily learn semantic clues from data. In the field of computer vision, CNNs offer three key advantages over other traditional neural networks: First, CNNs implement weight sharing across the network, reducing the number of parameters to train, enhancing generalization, and preventing overfitting. Second, CNNs perform both feature extraction and classification simultaneously, making the output organized and highly dependent on the extracted features. Third, CNNs are easier to scale and implement on large networks31.

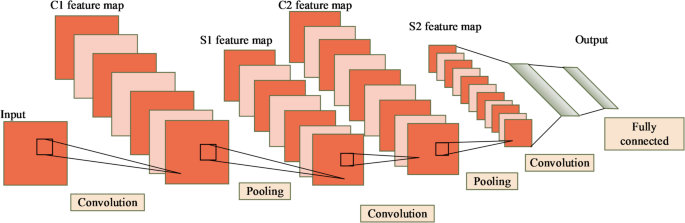

2D-CNNs are an important class of deep learning models for processing image data. They extract spatial features through local connections and weight sharing32. A 2D-CNN consists of several types of layers: the input layer, convolutional layers, pooling layers, and fully connected layers. Its core operation is convolution. The architecture of the 2D-CNN is shown in Fig. 1.

For an input image \(\:X\in\:{\mathbb{R}}^{H\times\:W\times\:C}\), where \(\:H\) and \(\:W\) represent the height and width of the image and \(\:C\) represents the number of channels, the output feature map of the convolutional layer, \(\:Y\in\:{\mathbb{R}}^{{H}^{{\prime\:}}\times\:{W}^{{\prime\:}}\times\:K}\), can be computed using Eq. (10):

$$\:{Y}_{i,j,k}=\sigma\:\left(\sum\:_{c=1}^{C}\:\sum\:_{p=1}^{P}\:\sum\:_{q=1}^{Q}\:{X}_{i+p-1,j+q-1,c}\cdot\:{W}_{p,q,c,k}+{b}_{k}\right).$$

(10)

Here, \(\:{W}_{p,q,c,k}\) is the weight of the convolutional kernel (filter), with dimensions \(\:P\times\:Q\times\:C\times\:K\), where \(\:P\) and \(\:Q\) are the height and width of the kernel, respectively, and \(\:K\) is the number of kernels. \(\:{b}_{k}\) is the bias term, and σ(⋅) is the activation function, typically the Rectified Linear Unit (ReLU): \(\:\sigma\left(x\right)=\max(0,x)\).
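The convolution of Eq. (10) can be sketched directly in NumPy. This is a loop-based illustration, not an efficient implementation; it assumes stride 1 and no padding (which is what the index \(i+p-1\) implies), and the input and kernel values are made up for the example.

```python
import numpy as np

def conv2d_relu(X, W, b):
    """Stride-1, unpadded 2D convolution followed by ReLU.

    X: (H, W_in, C) input, W: (P, Q, C, K) kernels, b: (K,) biases.
    Returns Y with shape (H - P + 1, W_in - Q + 1, K).
    """
    H, W_in, C = X.shape
    P, Q, _, K = W.shape
    Y = np.zeros((H - P + 1, W_in - Q + 1, K))
    for i in range(Y.shape[0]):
        for j in range(Y.shape[1]):
            patch = X[i:i + P, j:j + Q, :]                   # (P, Q, C) window
            Y[i, j, :] = np.tensordot(patch, W, axes=3) + b  # sum over p, q, c
    return np.maximum(Y, 0.0)                                # ReLU

# Illustrative 4 x 4 single-channel input with two 2 x 2 kernels
X = np.arange(16, dtype=float).reshape(4, 4, 1)
W = np.ones((2, 2, 1, 2))
W[..., 1] = -1.0          # second kernel has negative weights
b = np.zeros(2)
Y = conv2d_relu(X, W, b)
```

The same kernel weights are applied at every position \((i,j)\), which is the weight sharing mentioned above; the second kernel produces only negative sums here, so ReLU sets its whole feature map to zero.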

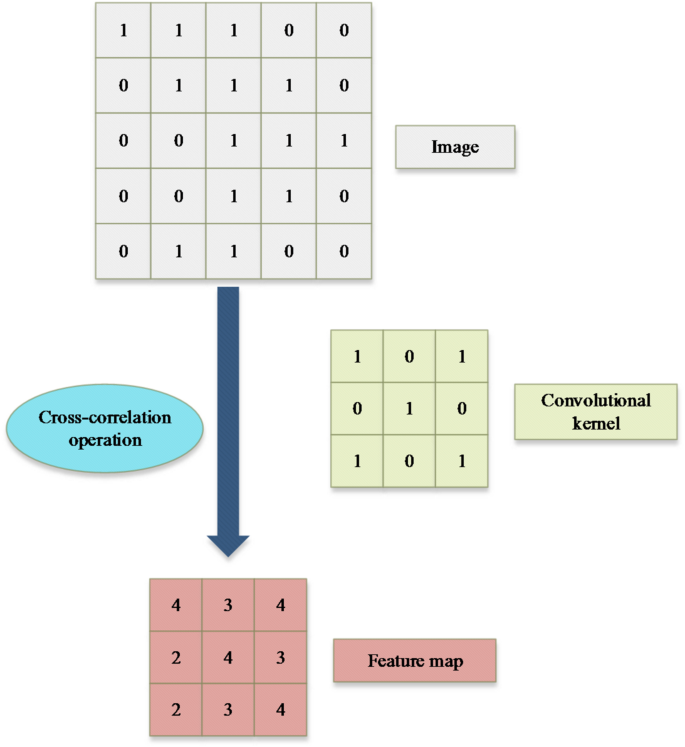

The convolution process is shown in Fig. 2.

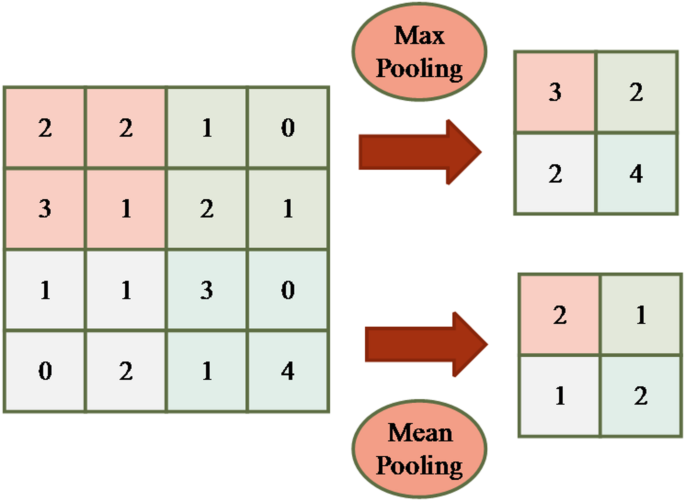

Pooling operations are typically used to reduce the spatial size of the feature map, thereby decreasing the computational load and mitigating overfitting. In 2D image tasks, the target object does not always appear in a fixed position but may be offset from it. Pooling operations were introduced to alleviate this positional sensitivity of the convolutional layer33. By shrinking the feature maps, pooling also reduces the number of parameters in subsequent layers and discards redundant information while retaining the salient content of the image. Common pooling methods include Max Pooling and Mean Pooling. Max Pooling selects the maximum value within the pooling window, which helps preserve edge details, while Mean Pooling computes the average value within the window, emphasizing background information. Diagrams of both pooling computations are shown in Fig. 3.

Diagrams of two pooling computations.

In Fig. 3, a 2 × 2 pooling window slides over an image of size 4 × 4 with a stride of 2. The window therefore moves two pixels at a time and covers four non-overlapping regions, producing a 2 × 2 output. For Max Pooling, the output for each region is the maximum value within the window; for Mean Pooling, it is the average value within the window.

Assuming the pooling kernel is \(\:R\times\:S\), the pooled output \(\:Z\in\:{\mathbb{R}}^{{H}^{{\prime\:}{\prime\:}}\times\:{W}^{{\prime\:}{\prime\:}}\times\:K}\) can be defined, taking Max Pooling as an example, by Eq. (11):

$$\:{Z}_{i,j,k}=\underset{p=1,\dots\:,R}{max}\:\underset{q=1,\dots\:,S}{max}\:{Y}_{(i-1)R+p,(j-1)S+q,k}.$$

(11)
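The pooling example above (a 4 × 4 input, a 2 × 2 window, stride 2) can be reproduced with a few lines of NumPy. This sketch assumes non-overlapping windows, as in Eq. (11), and input sizes divisible by the window size; the input values are illustrative.

```python
import numpy as np

def pool2d(Y, R, S, mode="max"):
    """Non-overlapping R x S pooling (stride equal to the window size).

    Y: (H, W, K) feature map with H divisible by R and W divisible by S.
    """
    H, W, K = Y.shape
    # blocks[a, r, b, s, k] == Y[a * R + r, b * S + s, k]
    blocks = Y.reshape(H // R, R, W // S, S, K)
    if mode == "max":
        return blocks.max(axis=(1, 3))
    if mode == "mean":
        return blocks.mean(axis=(1, 3))
    raise ValueError("mode must be 'max' or 'mean'")

Y = np.arange(16, dtype=float).reshape(4, 4, 1)   # values 0..15, row by row
max_out = pool2d(Y, 2, 2, "max")[..., 0]
mean_out = pool2d(Y, 2, 2, "mean")[..., 0]
```

For this input, the top-left window contains 0, 1, 4, and 5, so Max Pooling returns 5 and Mean Pooling returns 2.5 for that position.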

The fully connected layer is used to map the extracted features to the target output space34. Suppose the features output by the pooling layer are flattened into a vector \(\:\text{z}\in\:{\mathbb{R}}^{d}\), then the output of the fully connected layer \(\:\mathbf{o}\in\:{\mathbb{R}}^{n}\) can be calculated using the Eq. (12):

$$\:\text{o}=\sigma\:(\text{W}\text{z}+\text{b}).$$

(12)

In the optimization process, the 2D-CNNs update the network parameters through the backpropagation algorithm to minimize the loss function L. For example, the cross-entropy loss is calculated as shown in Eq. (13):

$$\:L=-\frac{1}{N}\sum_{i=1}^{N}\sum_{j=1}^{M}{y}_{i,j}\log{\widehat{y}}_{i,j}.$$

(13)

Here, \(\:N\) is the number of samples; \(\:M\) is the number of classes; \(\:{y}_{i,j}\) is the true label (1 if sample \(\:i\) belongs to class \(\:j\) and 0 otherwise); and \(\:{\widehat{y}}_{i,j}\) is the probability that the model predicts for class \(\:j\)35. Through multilayer stacking and parameter optimization, 2D-CNNs can effectively extract spatial features in learning behavior pattern recognition tasks, achieving efficient classification and prediction.
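As a small worked instance of the cross-entropy loss in Eq. (13), the following sketch computes the loss for two samples and three classes. The clipping constant `eps` is an implementation detail added here to avoid \(\log 0\), and the label and probability values are illustrative assumptions.

```python
import numpy as np

def cross_entropy(y_true, y_pred, eps=1e-12):
    """Mean cross-entropy. y_true: (N, M) one-hot labels; y_pred: (N, M) probabilities."""
    y_pred = np.clip(y_pred, eps, 1.0)   # guard against log(0)
    return float(-np.mean(np.sum(y_true * np.log(y_pred), axis=1)))

y_true = np.array([[1, 0, 0],
                   [0, 1, 0]], dtype=float)
y_pred = np.array([[0.50, 0.25, 0.25],
                   [0.25, 0.50, 0.25]])
loss = cross_entropy(y_true, y_pred)     # both samples assign 0.5 to the true class
```

Because each one-hot row selects a single term, the loss here equals \(-\log 0.5=\log 2\); it approaches zero as the predicted probability of the true class approaches 1.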

3D-CNNs

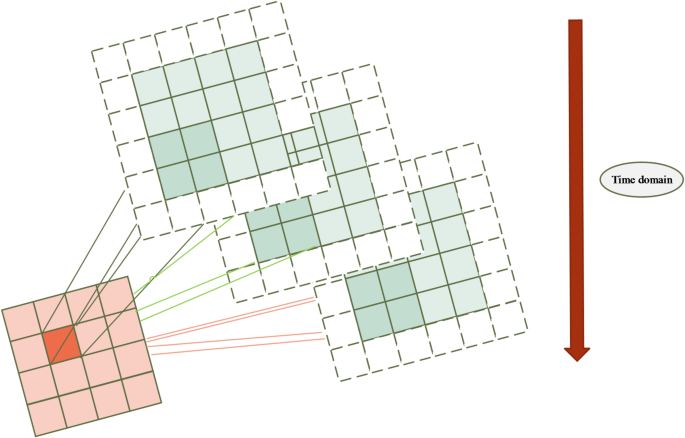

3D-CNNs are an important class of deep learning models for processing spatiotemporal information, especially for feature extraction from video or three-dimensional volumetric data36,37. A diagram of 3D convolution is shown in Fig. 4.

Diagram of 3D convolution.

For the input data \(\:X\in\:{\mathbb{R}}^{T\times\:H\times\:W\times\:C}\), where \(\:T\) represents the temporal dimension, \(\:H\) and \(\:W\) are the height and width of the image, and \(\:C\) is the number of channels, the output feature map of the 3D convolution operation \(\:{Y\in\:\mathbb{R}}^{{T}^{{\prime\:}}\times\:{H}^{{\prime\:}}\times\:{W}^{{\prime\:}}\times\:K}\) can be computed using Eq. (14):

$$\:{Y}_{t,i,j,k}=\sigma\:\left(\sum\:_{c=1}^{C}\:\sum\:_{p=1}^{P}\:\sum\:_{q=1}^{Q}\:\sum\:_{r=1}^{R}\:{X}_{t+r-1,i+p-1,j+q-1,c}\cdot\:{W}_{r,p,q,c,k}+{b}_{k}\right).$$

(14)

\(\:{W}_{r,p,q,c,k}\) is the weight of the 3D convolution kernel, with size \(\:R\times\:P\times\:Q\times\:C\times\:K\), where \(\:R\) represents the temporal dimension of the convolution kernel38.
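Eq. (14) extends the 2D case by one temporal index, which the following loop-based sketch makes explicit. It assumes stride 1 and no padding, and it uses a hypothetical temporal-difference kernel on a made-up three-frame clip to show how a 3D kernel can respond to change between frames.

```python
import numpy as np

def conv3d_relu(X, W, b):
    """Stride-1, unpadded 3D convolution followed by ReLU.

    X: (T, H, W_in, C) clip, W: (R, P, Q, C, K) kernels, b: (K,) biases.
    """
    T, H, W_in, C = X.shape
    R, P, Q, _, K = W.shape
    Y = np.zeros((T - R + 1, H - P + 1, W_in - Q + 1, K))
    for t in range(Y.shape[0]):
        for i in range(Y.shape[1]):
            for j in range(Y.shape[2]):
                patch = X[t:t + R, i:i + P, j:j + Q, :]             # (R, P, Q, C) window
                Y[t, i, j, :] = np.tensordot(patch, W, axes=4) + b  # sum over r, p, q, c
    return np.maximum(Y, 0.0)

# Hypothetical clip: three 2 x 2 frames whose intensity grows by 1 per frame
X = np.stack([np.full((2, 2, 1), float(t)) for t in range(3)])
# Kernel spanning two frames that computes (next frame) - (current frame)
W = np.zeros((2, 1, 1, 1, 1))
W[0, 0, 0, 0, 0] = -1.0
W[1, 0, 0, 0, 0] = 1.0
Y = conv3d_relu(X, W, np.zeros(1))
```

Every output value equals the frame-to-frame increase of 1, which a purely spatial 2D kernel applied to a single frame could not capture.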

Like 2D-CNNs, 3D-CNNs include pooling layers to reduce the dimensionality of the feature maps, but the pooling operation is extended to the temporal dimension. Assuming the pooling kernel size is \(\:{S}_{T}\times\:{S}_{H}\times\:{S}_{W}\), the pooled output \(\:Z\in\:{\mathbb{R}}^{{T}^{{\prime\:}{\prime\:}}\times\:{H}^{{\prime\:}{\prime\:}}\times\:{W}^{{\prime\:}{\prime\:}}\times\:K}\) can be defined by Eq. (15):

$$\:{Z}_{t,i,j,k}=\underset{r=1,\dots\:,{S}_{T}}{max}\:\underset{p=1,\dots\:,{S}_{H}}{max}\:\underset{q=1,\dots\:,{S}_{W}}{max}\:{Y}_{(t-1){S}_{T}+r,(i-1){S}_{H}+p,(j-1){S}_{W}+q,k}.$$

(15)
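The spatiotemporal max pooling of Eq. (15) can be sketched with a reshape, assuming non-overlapping windows and dimensions divisible by the pooling sizes. The function name and the input values are illustrative.

```python
import numpy as np

def max_pool3d(Y, s_t, s_h, s_w):
    """Non-overlapping max pooling over time, height, and width.

    Y: (T, H, W, K) feature map; each of T, H, W must be divisible by its pool size.
    """
    T, H, W, K = Y.shape
    blocks = Y.reshape(T // s_t, s_t, H // s_h, s_h, W // s_w, s_w, K)
    return blocks.max(axis=(1, 3, 5))

# Illustrative input: Y[t, i, j, 0] = 16 * t + 4 * i + j
Y = np.arange(2 * 4 * 4, dtype=float).reshape(2, 4, 4, 1)
Z = max_pool3d(Y, 2, 2, 2)
```

Each output value summarizes a 2 × 2 × 2 block, so two frames are merged into one along the temporal axis while the spatial size is halved.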

The 3D-CNNs can extract temporal features for behavior pattern analysis39. For example, in behavior recognition, motion changes between frames are key features, and 3D convolution jointly models spatial and temporal features by stacking temporal information.

Quantum convolutional neural network

The hybrid Quantum Convolutional Neural Network (QCNN) consists mainly of a quantum convolutional layer, pooling layers, and fully connected layers. In this architecture, the quantum convolutional layer uses quantum convolutional kernels instead of classical convolutional kernels, with the aim of exploiting the parallelism and exponentially large state space of quantum computing to accelerate the convolution process40. The pooling layer combines classical and quantum computing, introducing three types of pooling methods (Max Pool, Avg Pool, and Quantum Pool) to accommodate the quantum convolutional kernels and extract features efficiently and accurately41. The fully connected layer, implemented via a classical feedforward neural network, is used to complete the action recognition task. The formalization of the hybrid QCNN is shown in Eq. (16):

$$\:y=\left\{{F}_{m}\left(\theta\:\right)\cdot\:\cdots\:\cdot\:{F}_{2}\left(\theta\:\right)\cdot\:{F}_{1}\left(\theta\:\right)\right\}\cdot\:\left\{{P}_{n}\left(\theta\:\right){U}_{n}\left(\theta\:\right)\cdot\:\cdots\:\cdot\:{P}_{2}\left(\theta\:\right){U}_{2}\left(\theta\:\right)\cdot\:{P}_{1}\left(\theta\:\right){U}_{1}\left(\theta\:\right)\right\}\cdot\:{U}_{0}\left(\text{x}\right).$$

(16)

Here, \(\:{U}_{i}\left(\theta\right)\) represents the quantum convolutional layer, \(\:{U}_{0}\left(\text{x}\right)\) represents quantum state encoding, \(\:{P}_{i}\left(\theta\right)\) represents the pooling layer, and \(\:{F}_{i}\left(\theta\right)\) represents the classical fully connected layer. The quantum convolutional layer is primarily composed of quantum convolutional kernels42. These kernels are constructed from low-depth, strongly entangling, lightweight parameterized quantum circuits. The circuits act on two qubits and perform the convolution through a one-dimensional convolution approach. The quantum convolutional kernel is formed by sequentially arranging quantum convolutional blocks (QCB), which enhances its expressiveness and scalability. The quantum convolutional layer replaces the classical computation with quantum computation to exploit quantum entanglement and parallelism, while retaining the two key features of classical CNNs: local connectivity and weight sharing. This not only reduces the complexity of the network model but also improves its computational efficiency43.
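The text does not specify the gates inside a quantum convolutional block, so the following is only a hypothetical two-qubit kernel simulated classically with NumPy state vectors. It assumes angle encoding with RY gates for \(U_0(x)\), one trainable RY rotation per qubit plus a CNOT as the parameterized block, and a Pauli-Z expectation on the second qubit as the output; all of these choices and all function names are assumptions for illustration.

```python
import numpy as np

def ry(theta):
    """Single-qubit rotation about the Y axis."""
    c, s = np.cos(theta / 2), np.sin(theta / 2)
    return np.array([[c, -s], [s, c]])

# CNOT with qubit 0 as control and qubit 1 as target (basis order |q0 q1>)
CNOT = np.array([[1, 0, 0, 0],
                 [0, 1, 0, 0],
                 [0, 0, 0, 1],
                 [0, 0, 1, 0]], dtype=float)
Z1 = np.kron(np.eye(2), np.diag([1.0, -1.0]))   # Pauli-Z on qubit 1

def quantum_kernel(x, theta):
    """Hypothetical block: encode x, apply trainable RY gates and a CNOT, return <Z> on qubit 1."""
    state = np.zeros(4)
    state[0] = 1.0                                        # start in |00>
    state = np.kron(ry(x[0]), ry(x[1])) @ state           # angle encoding (assumed form of U_0)
    state = np.kron(ry(theta[0]), ry(theta[1])) @ state   # trainable rotations
    state = CNOT @ state                                  # entangling gate
    return float(state @ Z1 @ state)                      # amplitudes are real for RY and CNOT

def quantum_conv1d(signal, theta):
    """Slide the same two-qubit kernel over adjacent pairs of a 1D signal."""
    return np.array([quantum_kernel(signal[i:i + 2], theta)
                     for i in range(len(signal) - 1)])

theta = np.array([0.2, -0.4])                             # illustrative parameters
out = quantum_conv1d(np.array([0.3, 0.7, 1.1]), theta)
```

Reusing the same `theta` at every window position mirrors weight sharing, and each output depends on only two neighbouring inputs, mirroring local connectivity. For this particular circuit the output has the closed form \(\cos(x_0+\theta_0)\cos(x_1+\theta_1)\), which is useful for checking the simulation.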

The pooling layer enhances the prominent features of the data through downsampling operations, achieving dimensionality reduction and, to some extent, reducing the risk of network overfitting.

The fully connected layer is implemented through a classical feedforward neural network in machine learning. It uses the features extracted by the quantum convolutional layer and pooling layer. These features are then used to predict the output of the classification task. The computation is shown in Eq. (17):

$$\:y=\sigma\:\left(\sum\:_{i=1}^{n}\:{\theta\:}_{i}^{T}{x}_{i}+b\right).$$

(17)

Here, σ(⋅) represents the activation function, \(\:{\theta}_{i}^{T}\) denotes the weights, and \(\:b\) represents the bias parameter. First, the output from the last pooling layer is flattened into a one-dimensional vector, which is used as input for the fully connected layer. Next, the weighted sum of this vector plus the bias is passed through the activation function, which introduces nonlinearity and produces the output used to complete the action recognition task. Additionally, this process helps the model’s training convergence.

Data preprocessing

In the study of badminton player motion classification, data preprocessing is a crucial step to ensure the model’s training effectiveness and improve accuracy. The following preprocessing steps were applied in this study: data cleaning, feature extraction, data normalization, data augmentation, and data partitioning.

Data collection and cleaning

The raw data used in this study were obtained from video recordings of broadcast and television programs featuring four different badminton matches. The dataset includes information on athletes’ joint positions, velocities, and accelerations under various stroke techniques. Data cleaning is a crucial initial step. Samples with excessive missing values were removed, and abnormal data points were corrected. The remaining missing data were imputed using interpolation methods to ensure data completeness and continuity. Outliers were detected primarily using statistical methods, such as box plots, and data points that deviated significantly from the rest of the distribution were eliminated.
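The cleaning steps above can be sketched as follows. The study does not state which interpolation scheme or outlier threshold it used, so this example assumes linear interpolation and the conventional box-plot rule with whiskers at 1.5 times the interquartile range; the sample values are made up.

```python
import numpy as np

def interpolate_missing(series):
    """Linearly interpolate NaN entries of a 1D time series."""
    series = np.asarray(series, dtype=float)
    idx = np.arange(series.size)
    known = ~np.isnan(series)
    return np.interp(idx, idx[known], series[known])

def iqr_outlier_mask(values, k=1.5):
    """Box-plot rule: flag values outside [Q1 - k * IQR, Q3 + k * IQR]."""
    values = np.asarray(values, dtype=float)
    q1, q3 = np.percentile(values, [25, 75])
    iqr = q3 - q1
    return (values < q1 - k * iqr) | (values > q3 + k * iqr)

# Illustrative joint-angle series with two missing frames
angles = [10.0, np.nan, 14.0, 16.0, np.nan, 20.0]
filled = interpolate_missing(angles)

# Illustrative joint speeds with one implausible spike
speeds = np.array([1.0, 1.2, 0.9, 1.1, 1.0, 9.0])
mask = iqr_outlier_mask(speeds)
clean_speeds = speeds[~mask]
```

The box-plot rule is based on quartiles rather than the mean, so a single extreme value does not shift the thresholds used to detect it.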

Feature selection

Feature selection is a key step in improving model performance. To better understand the importance of different features in classifying badminton strokes, this study employs a model-based feature selection method to analyze each feature’s contribution to classification accuracy.

Arm angle

The arm angle is a critical feature, particularly in badminton strokes, where the movement pattern and angular variation of the arm directly influence shot accuracy and power. This feature is typically extracted from video frames using human pose estimation techniques, such as OpenPose. By analyzing the relative angular changes between the arm and other body parts, this approach accurately captures the detailed motion of athletes during strokes. In classification tasks, arm angle serves as a key factor in distinguishing different stroke types (e.g., forehand, backhand, and volley). Notably, in the QCNN model, arm angle is identified as the most significant feature, highlighting its essential role in stroke recognition.

Twist angle

The twist angle reflects the degree of upper body rotation during a stroke. In badminton, body rotation—including trunk and upper limb torsion—plays a crucial role in executing serves, forehand, and backhand strokes. The twist angle is typically extracted through pose estimation by analyzing angular changes in the shoulders, elbows, and spine to assess upper body rotation. In the QCNN model, the high significance of the twist angle indicates its importance in differentiating between high-intensity and low-intensity strokes. This feature is particularly indispensable for identifying complex movements, such as backhand volleys.

Footwork position

Footwork position reflects an athlete’s stance and foot movement during a stroke. As a high-intensity and dynamic sport, badminton requires precise footwork for players to reach the optimal hitting position in time. This feature helps determine whether an athlete is positioned correctly for a stroke and aids in distinguishing different shot types. For example, rapid footwork adjustments are often associated with serves or volleys, whereas slower adjustments may correspond to forehand or backhand strokes.

Grip type

Grip type is a key feature closely related to stroke efficiency and execution. It determines the power and angle of a shot, influencing the athlete’s force application mechanism. By analyzing the relative position between the player’s hand and the racket handle, grip type characteristics can be extracted. The significance of this feature lies in the fact that different grips directly affect shot performance. For instance, forehand and backhand strokes require distinct grip techniques, and this variation is crucial for improving the classification performance of the model.

Step length

Step length describes the stride distance an athlete covers when executing a stroke. It reflects agility and explosiveness in movement. This feature is typically extracted using sensors or video-based motion tracking, with stride length calculated based on the time intervals and positional changes between foot placements. In badminton, step length plays a critical role in rapid shot preparation. This is especially true for fast-reaction strokes, such as volleys, where an athlete’s ability to adjust quickly can determine successful execution. Although step length has a relatively lower feature importance score, it still provides valuable supplementary information, helping the model better understand overall movement coordination.

Feature extraction

The goal of this step is to extract useful features from the motion data of athletes. Time-series features were first extracted from the raw data, including joint angles, angular velocity, and acceleration. These features describe the biomechanical performance of the athlete during different strokes. The specific extraction steps are as follows.

Joint angle calculation

Based on three-dimensional coordinate data, joint angles were computed using the angle formula between vectors. These angles reflect the relative position changes of the athlete’s arm, shoulder, and torso during the stroke.
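A minimal sketch of the vector angle formula applied to three 3D joint positions is given below. The joint names and coordinates are illustrative assumptions; the angle is measured at the middle joint.

```python
import numpy as np

def joint_angle(a, b, c):
    """Angle in degrees at joint b formed by the segments b->a and b->c."""
    u = np.asarray(a, dtype=float) - np.asarray(b, dtype=float)
    v = np.asarray(c, dtype=float) - np.asarray(b, dtype=float)
    cos_theta = np.dot(u, v) / (np.linalg.norm(u) * np.linalg.norm(v))
    # Clip to [-1, 1] so rounding errors cannot push arccos out of its domain
    return float(np.degrees(np.arccos(np.clip(cos_theta, -1.0, 1.0))))

# Hypothetical coordinates for a bent arm
shoulder = [0.0, 0.0, 0.0]
elbow = [1.0, 0.0, 0.0]
wrist = [1.0, 1.0, 0.0]
elbow_angle = joint_angle(shoulder, elbow, wrist)
```

With these coordinates the upper arm and forearm are perpendicular, so the elbow angle is 90 degrees; a fully extended arm would give 180 degrees.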

Angular velocity and acceleration

The angular velocity and acceleration of the joints were obtained by performing time-differencing on the joint angle data, capturing the dynamic features of the athlete’s movements.
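Time-differencing of a joint-angle series can be sketched with finite differences. The frame rate of 30 frames per second is an assumption for the example (the study does not state it), and `np.gradient` is one of several reasonable differencing schemes.

```python
import numpy as np

def angular_derivatives(angles, fps):
    """Finite-difference angular velocity and acceleration of a joint-angle series."""
    dt = 1.0 / fps
    velocity = np.gradient(np.asarray(angles, dtype=float), dt)   # degrees per second
    acceleration = np.gradient(velocity, dt)                      # degrees per second squared
    return velocity, acceleration

fps = 30.0                              # assumed frame rate
t = np.arange(5) / fps
angles = 90.0 * t                       # illustrative joint rotating at a constant 90 deg/s
vel, acc = angular_derivatives(angles, fps)
```

For this constant-rate motion the velocity is 90 degrees per second in every frame and the acceleration is zero; on real pose data, smoothing before differencing is often needed because differencing amplifies noise.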

Time-domain features

These include the start time and duration of each motion, as basic time-domain information.

Data normalization

To eliminate the influence of varying feature scales, all feature data were normalized. This study used Z-score normalization, which rescales each feature to zero mean and unit variance. This step places different features on a comparable scale during training and prevents features with larger ranges from dominating the training process.

The normalization calculation is shown in Eq. (18):

$$\:{X}_{\text{new}}=\frac{X-\mu}{\sigma}.$$

(18)

Let X represent the original feature values, μ denote the mean of the feature, and σ represent the standard deviation of the feature.
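Eq. (18) can be applied per feature column as in the sketch below. Estimating \(\mu\) and \(\sigma\) on the training data only and reusing them for other splits is a common practice assumed here, not something the text specifies; the guard for constant features and the sample values are likewise illustrative.

```python
import numpy as np

def zscore_fit(X_train):
    """Per-feature mean and standard deviation estimated from the training data."""
    mu = X_train.mean(axis=0)
    sigma = X_train.std(axis=0)
    sigma = np.where(sigma == 0, 1.0, sigma)   # avoid division by zero for constant features
    return mu, sigma

def zscore_apply(X, mu, sigma):
    """X_new = (X - mu) / sigma."""
    return (X - mu) / sigma

# Two illustrative features on very different scales
X_train = np.array([[1.0, 10.0],
                    [2.0, 20.0],
                    [3.0, 30.0]])
mu, sigma = zscore_fit(X_train)
Z = zscore_apply(X_train, mu, sigma)
```

After the transform both columns have zero mean and unit variance, even though the second feature was originally ten times larger than the first.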

Data augmentation

To enhance the model’s generalization ability, data augmentation techniques were employed. Due to the diversity and complexity of badminton actions, data augmentation effectively improves the model’s robustness, especially when the dataset is small. The specific augmentation methods used are as follows.

Time shifting

The original data is shifted along the time dimension to simulate variations in the athlete’s actions across different time periods.

Rotation and scaling

Joint position data was subjected to rotation and scaling operations, simulating movements at different angles and postures.

Noise addition

To improve the model’s adaptability to noise, slight Gaussian noise was added to the original data to simulate the imperfections typically found in real-world environments.
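The three augmentations above can be sketched for a joint-position sequence of shape (frames, joints, 3). The shift size, rotation axis (taken here as the z axis), scale factor, and noise level are all assumed values, since the text does not report the settings used.

```python
import numpy as np

def time_shift(seq, shift):
    """Shift a (T, J, 3) sequence along time, repeating the edge frame to keep its length."""
    T = seq.shape[0]
    idx = np.clip(np.arange(T) - shift, 0, T - 1)
    return seq[idx]

def rotate_and_scale(seq, angle_deg, scale):
    """Rotate joint coordinates about the z axis (assumed vertical) and scale them uniformly."""
    a = np.radians(angle_deg)
    Rz = np.array([[np.cos(a), -np.sin(a), 0.0],
                   [np.sin(a),  np.cos(a), 0.0],
                   [0.0,        0.0,       1.0]])
    return scale * (seq @ Rz.T)

def add_gaussian_noise(seq, sigma, rng):
    """Add zero-mean Gaussian noise with standard deviation sigma to every coordinate."""
    return seq + rng.normal(0.0, sigma, size=seq.shape)

# Illustrative sequence: one joint moving along the x axis over four frames
seq = np.zeros((4, 1, 3))
seq[:, 0, 0] = [1.0, 2.0, 3.0, 4.0]

shifted = time_shift(seq, 1)
rotated = rotate_and_scale(seq, 90.0, 2.0)
noisy = add_gaussian_noise(seq, 0.01, np.random.default_rng(0))
```

Augmentations are usually applied to the training split only, so that validation and test results reflect performance on unmodified data.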

Data partitioning

To evaluate the model’s performance, the dataset was divided into training, validation, and test sets. The specific partitioning approach is as follows.

Training set

Used for model training, accounting for 70% of the dataset. The training set includes various types of strokes from the athletes, allowing the model to learn how to distinguish between different action categories.

Validation set

Used to adjust model parameters during training, accounting for 15% of the dataset. The validation set helps to tune hyperparameters such as learning rate, regularization parameters, etc., to optimize model performance.

Test set

Used for final model evaluation, accounting for 15% of the dataset. The test set is not involved in the training process and is used solely to assess the model’s generalization ability and performance.

During the data partitioning process, care was taken to ensure the distribution of each action category remained balanced. This approach helped prevent the model from becoming biased towards certain categories due to class imbalance. Through these steps, the raw data was cleaned, standardized, and augmented, making it suitable for subsequent model training and analysis. These preprocessing operations not only improved the quality of the data but also significantly enhanced the model’s training efficiency and classification accuracy.
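A 70/15/15 partition that keeps the class distribution balanced can be sketched as a stratified split over sample indices. The number of classes, the samples per class, and the random seed are hypothetical values chosen for the example.

```python
import numpy as np

def stratified_split(labels, train=0.70, val=0.15, seed=0):
    """Return index arrays (train, val, test) that preserve per-class proportions."""
    rng = np.random.default_rng(seed)
    labels = np.asarray(labels)
    train_idx, val_idx, test_idx = [], [], []
    for cls in np.unique(labels):
        idx = rng.permutation(np.flatnonzero(labels == cls))  # shuffle within the class
        n_train = int(round(train * idx.size))
        n_val = int(round(val * idx.size))
        train_idx.extend(idx[:n_train])
        val_idx.extend(idx[n_train:n_train + n_val])
        test_idx.extend(idx[n_train + n_val:])                # remainder goes to the test set
    return np.array(train_idx), np.array(val_idx), np.array(test_idx)

# Hypothetical dataset: 3 stroke classes with 20 samples each
labels = np.repeat([0, 1, 2], 20)
tr, va, te = stratified_split(labels)
```

Splitting within each class guarantees that every stroke type appears in all three subsets in the same proportion, which a single global shuffle does not ensure for small datasets.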