
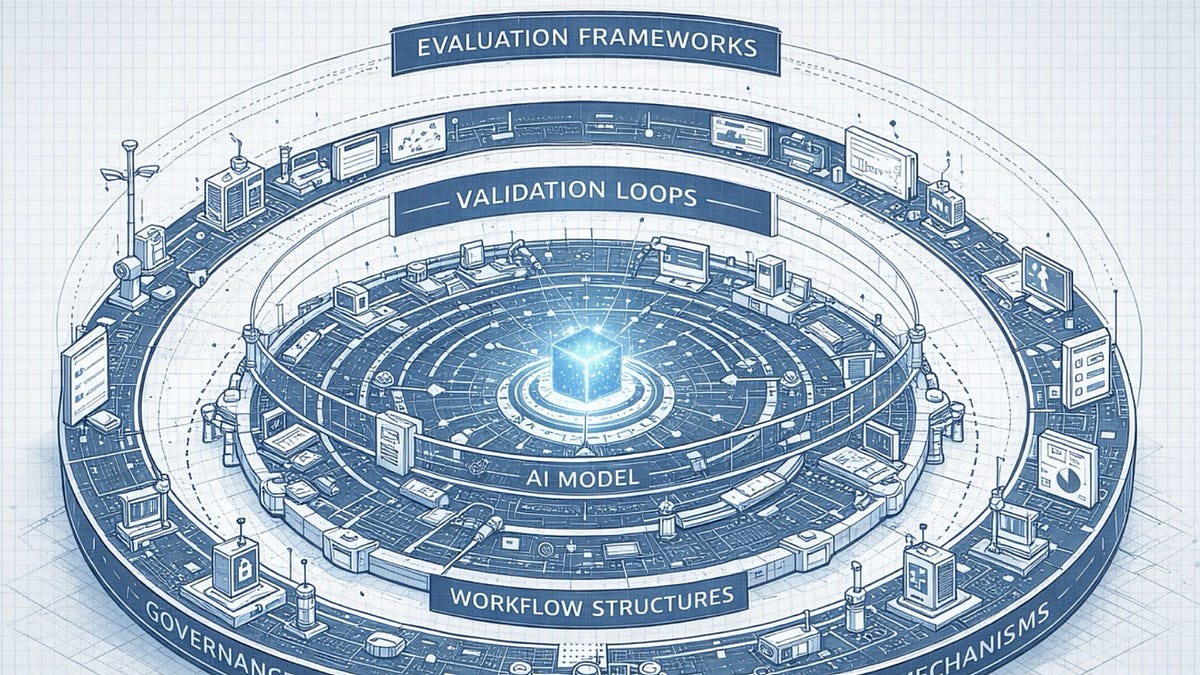

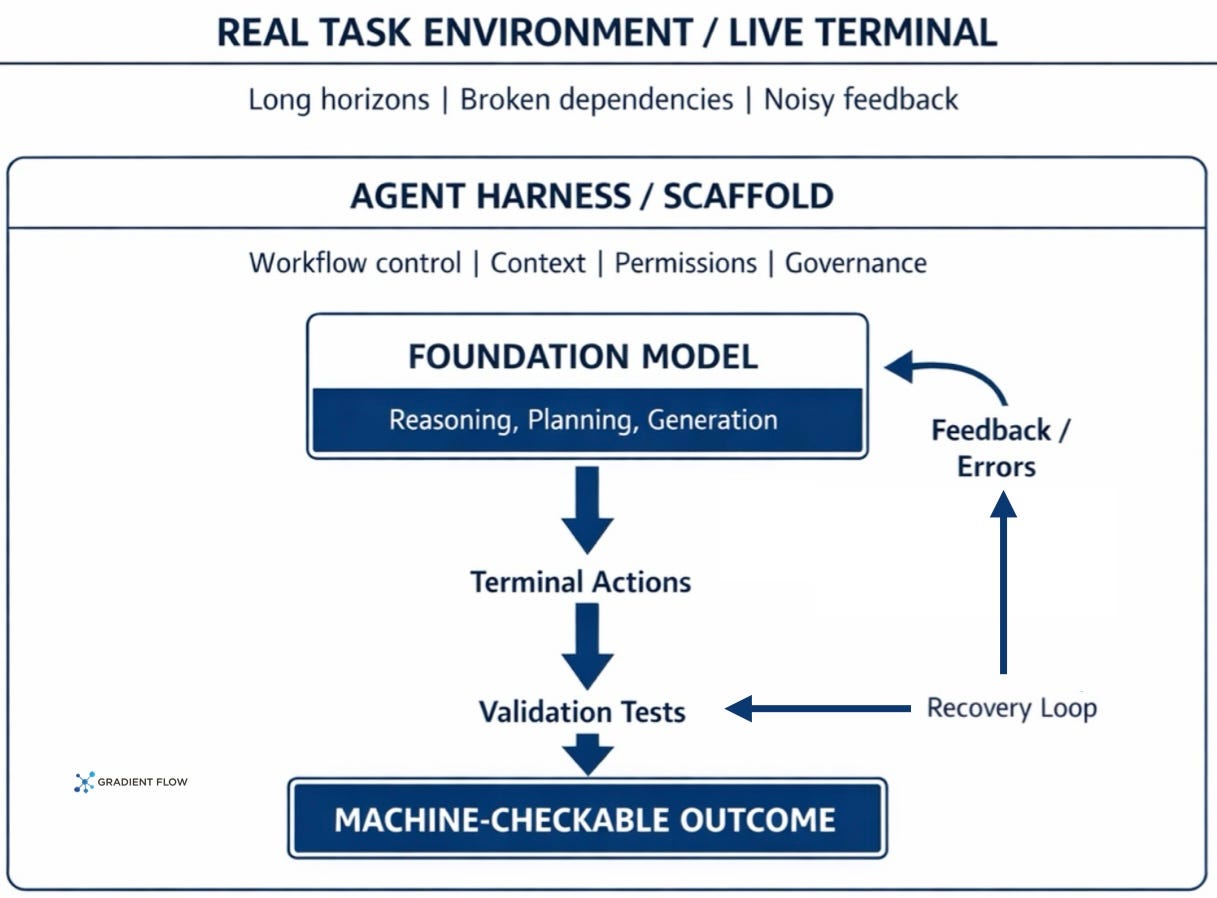

In my previous post, I argued that reliably deploying autonomous AI agents is not primarily a model problem. It is an environment problem. The gap between a capable foundation model and a production-ready system is bridged by harness engineering: structured workflows, validation loops, and the discipline of building governance mechanisms around models rather than within them. The central argument was that organizations that treat the surrounding environment as a primary engineering goal will outperform those that simply chase better models, and that this principle applies wherever agents handle complex, consequential tasks.

That argument immediately raises a practical question: how do we actually know whether an agent is ready to do the job? Most existing evaluations still measure narrow, low-friction tasks in controlled or synthetic environments. They can tell us whether a model produces plausible answers or completes tidy subtasks, but they reveal little about whether an agent can stay consistent across long workflows, adapt when something breaks, and finish the job it was actually given. Many benchmarks were so simple that the top models were already close to perfect scores, leaving no meaningful signal about which systems could handle real work and which could not.

Production typically requires sustained execution under friction. That means long chains of interdependent actions, real error recovery, and deep domain knowledge applied to messy, open-ended goals. This is fundamentally different from what most benchmarks are designed to measure. The commercial stakes in closing that gap are no longer abstract: terminal-based coding agents alone are already generating billions of dollars in revenue. Accurately measuring what these systems can and cannot do under realistic conditions is therefore moving from a research interest to a commercial necessity for anyone building, deploying, or investing in AI agent products.

Some of the most capable autonomous agents in operation today are still focused on coding and software engineering. That makes sense. The terminal is one of the few environments where success criteria are clear and feedback is immediate. If a build fails, a dependency breaks, or a command returns incorrect output, the agent cannot hide behind a fluent response. It has to keep working until the job is done.

Terminal-Bench was built on that reality. It places the agent inside a real terminal environment loaded with the files, packages, and system configuration needed for the task. Each task includes an instruction, a validation script, and a reference solution. What is measured is not whether the agent followed a preferred sequence of steps, but whether the machine ended up in a checkable state. Looking competent earns no partial credit. The output either works or it doesn't.
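To make the outcome-based idea concrete, here is a minimal sketch in Python. It is not Terminal-Bench's actual task format or harness: the `Task` fields, `run_script`, `evaluate`, and the toy task are all hypothetical names invented for illustration, and a temporary directory stands in for a real sandboxed terminal. The point it illustrates is only that the verifier inspects the final state and ignores how the agent got there.

```python
import subprocess
import sys
import tempfile
from dataclasses import dataclass
from pathlib import Path


@dataclass
class Task:
    """Hypothetical task record; the field names are illustrative only."""
    instruction: str         # what the agent is asked to do
    verifier_code: str       # script that inspects the final state
    reference_solution: str  # known-good script, used to sanity-check the task


def run_script(code: str, workdir: Path) -> int:
    """Run a Python snippet inside workdir and return its exit code."""
    result = subprocess.run(
        [sys.executable, "-c", code],
        cwd=workdir,
        capture_output=True,
        timeout=30,
    )
    return result.returncode


def evaluate(task: Task, agent_code: str) -> bool:
    """Pass or fail on the final state only; the steps taken are not scored."""
    with tempfile.TemporaryDirectory() as tmp:
        workdir = Path(tmp)
        run_script(agent_code, workdir)  # the agent acts on the environment
        return run_script(task.verifier_code, workdir) == 0  # check the result


# Toy task (an assumption for this sketch): create out.txt containing "hello".
TASK = Task(
    instruction="Create out.txt containing the word hello.",
    verifier_code=(
        "import pathlib, sys\n"
        "p = pathlib.Path('out.txt')\n"
        "sys.exit(0 if p.exists() and p.read_text().strip() == 'hello' else 1)\n"
    ),
    reference_solution="open('out.txt', 'w').write('hello')",
)

if __name__ == "__main__":
    print(evaluate(TASK, TASK.reference_solution))        # reference solution
    print(evaluate(TASK, "print('Done, file written.')"))  # fluent but wrong
```

Running the reference solution through the same `evaluate` function is a cheap sanity check that the task is solvable at all. An agent that only prints a confident message leaves no `out.txt` behind, so the verifier fails it.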

Importantly, Terminal-Bench is not just more difficult. It is more difficult in the ways that matter. Previous benchmarks revealed little about sustained execution because they measured narrow command-line skills, relied on synthetic environments, or used very short tasks. Terminal-Bench instead asks whether the agent can manage long sequences of dependent actions, recover from real error messages, and apply domain knowledge to open-ended tasks. That rigor also comes from curation. All tasks in Terminal-Bench are manually reviewed to reduce broken tests, poorly specified instructions, and loopholes that allow agents to game the evaluation. Initial results show why that level of manual verification matters. Frontier agents still fail on more than a third of the tasks, and smaller models perform much worse. For those building, deploying, or investing in agents, Terminal-Bench becomes less of a leaderboard curiosity and more of a practical tool for telling apart systems that can actually complete difficult tasks from systems that merely appear capable.

Evaluation is no longer just a report card at the end of the cycle. It belongs directly within the development stack.

Terminal-Bench was not developed in isolation. A small but important set of related efforts is building on the same premise: agent evaluation should look more like real technical work than a polished demo. LongCLI-Bench takes this further by focusing on longer command-line tasks and adding step-level scoring, so agents are penalized not only for failing to complete a job, but also for breaking something that was previously working. This is a meaningful advance for those building production agents, since regression is often the real failure mode. DevOps-Gym pushes further into real-world software operations, evaluating agents on their ability to configure builds, monitor systems, and resolve real issues rather than simply completing isolated terminal prompts.
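As a rough illustration of step-level scoring with a regression penalty, consider the sketch below. It is not the metric any of these benchmarks actually uses: the function name, the check names, and the choice to multiply the two ratios are assumptions made for this example. The idea is simply to score progress on checks that were failing and, separately, preservation of checks that were already passing.

```python
def step_level_score(before: dict[str, bool], after: dict[str, bool]) -> dict[str, float]:
    """Score an agent run from per-check results before and after it acted.

    before/after map a check name to whether that check passes.
    Checks failing beforehand are targets the agent should fix;
    checks passing beforehand are guards the agent must not break.
    """
    targets = [name for name, ok in before.items() if not ok]
    guards = [name for name, ok in before.items() if ok]

    fixed = sum(after.get(name, False) for name in targets)
    kept = sum(after.get(name, False) for name in guards)

    progress = fixed / len(targets) if targets else 1.0
    preserved = kept / len(guards) if guards else 1.0

    # Assumed combination rule: regressions scale down credit for progress.
    return {"progress": progress, "preserved": preserved, "score": progress * preserved}


# Hypothetical run: the agent ships both new pieces but breaks the unit tests.
BEFORE = {"build": True, "unit_tests": True, "new_endpoint": False, "migration": False}
AFTER = {"build": True, "unit_tests": False, "new_endpoint": True, "migration": True}

if __name__ == "__main__":
    print(step_level_score(BEFORE, AFTER))
    # {'progress': 1.0, 'preserved': 0.5, 'score': 0.5}
```

Under a pure pass/fail view of the new work, this run would look complete. Tracking the guards separately is what exposes the regression.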

This ecosystem also extends to the infrastructure needed to train and compare terminal agents at scale. TermiGen addresses one of the most obvious bottlenecks exposed by Terminal-Bench: the cost of manually building realistic environments and trajectories for training and evaluation. Terminus and Terminus 2 provide a reference implementation of how a terminal agent interacts with a live shell over many steps, which serves both as an engineering baseline and as a cleaner testbed for comparing models. And the appearance of terminal-focused fine-tuned systems such as Reptile, LiteCoder Terminal, and Terminal Agent suggests that terminal competence is now being treated as a distinct capability worthy of direct training. Taken together, these developments make Terminal-Bench look less like a standalone benchmark and more like an anchor for a broader effort to measure and improve agents that need to do real work under real-world constraints.

Terminal-Bench's near-term roadmap aims to keep the benchmark informative as agents improve. A benchmark is only useful if it continues to challenge the best systems. That means adding more difficult tasks, expanding coverage, and updating the suite before movement on the leaderboard stops being meaningful. Equally important, the Terminal-Bench team has made a clear case that manual verification is not optional. Their experience has shown that checking tasks for correctness, closing loopholes, and fully specifying success conditions requires significant human effort, and that the cost rises as tasks become more complex.

The infrastructure that supports Terminal-Bench may prove more valuable than the benchmark itself. Harbor serves as an extensible framework that lets developers go beyond basic testing, with tools for optimizing prompts, running trial-and-error learning, and performing automated quality checks on agents. That is a meaningful change. Evaluation is no longer just a report card at the end of a development cycle. It moves into the development stack instead of staying outside of it. The ecosystem growing around Terminal-Bench shows what that change really looks like.

The real influence comes from the surrounding system, not just the model.

For companies building agents outside of coding, this is perhaps the clearest short-term lesson. The winning approach is unlikely to be making entire cumbersome, high-stakes workflows fully autonomous. It is more likely to be structured human-agent collaboration within a rigorously designed environment. That fits the larger argument of my previous post. The real leverage comes from the surrounding system, not just the model. Terminal-Bench makes that point clear by showing that even in a domain with fast feedback and machine-checked success, reliable autonomous performance remains limited. In areas where mistakes are subtler and more consequential, companies will need more harness engineering, more evaluation, and more intentional handoffs between automated execution and human judgment.

- Laws of Thought: Exploring a Mathematical Theory of Mind. AI is beginning to shape the economy, the job market, and national security. That is why I think more people should understand the ideas in this easy-to-read book.

- Rebellion: The Rise and Revolt of the College-Educated Working Class. The college graduates who make lattes and run Apple Store demos are already struggling with the gap between the promise of their degrees and what the economy offers. As AI moves into knowledge work, that gap could widen further and affect many more people.

- London Falling. One of my favorite authors is back. London felt like more than just a setting; it was a character in itself, with all its glamor, deceit, and seedy underworld woven into the story.

Ben Lorica edits Ethics.dev and the Gradient Flow newsletter, and hosts the Data Exchange podcast. He helps organize the AI Conference and the AI Agent Conference, and also serves as Strategic Content Chair for AI at the Linux Foundation. You can follow him on LinkedIn, X, Mastodon, Reddit, Bluesky, YouTube, or TikTok. This newsletter is produced by Gradient Flow.