AI powers the future by enabling rapid innovation at unprecedented scale and gaining competitive advantage. Organizations are already moving from “AI curiosity” to “AI forward.” However, this speed comes with the infamous “AI black box” problem. How can we successfully build and deploy products based on AI models whose risks are based on deeply embedded logic and non-deterministic behavior?

Trust is a key catalyst for increasing speed. The ability to act with speed and absolute trust requires a robust and continuous security strategy.

Detect, assess, and protect as part of the AI security lifecycle

Success in the AI race will depend on proactively addressing security challenges. We stand behind a simple three-step framework for AI security, designed to help our customers adopt AI bravely. Discover, Assess, and Protect.

- Discover: You can’t assess the risk of what you can’t see. Understanding the entire attack surface requires a comprehensive real-time inventory of all AI models, inference datasets, applications, and AI agents across the enterprise.

- evaluation: You cannot protect yourself from risks that you have not identified. Trust in the world of AI begins with a scalable, contextual, and thorough assessment of both model artifacts and their runtime behavior.

- protect: Finally, these insights should be used to deploy actionable security controls that neutralize threats and help maintain compliance across the ecosystem.

prisma air 2.0 is an integrated platform that delivers a critical second pillar, the typical comprehensive assessment capabilities needed to move forward, along with thorough detection and robust runtime protection.

Two challenges for AI risk assessment

A central challenge in AI security is the infamous “black box” problem. Traditional security works by looking for known signatures and predictable execution paths, such as specific file hashes or buffer overflow attempts. AI systems are fundamentally different. The risks are based on hidden logic vulnerabilities (flaws in training data or architecture) and response behavior (unintended harmful outputs).

Traditional security treats AI models as static assets, ignoring the fact that the greatest risk lies within their complex embedded logic and, in the case of generative models, their non-deterministic behavior. This is why dedicated AI security assessment capabilities are essential.

Therefore, an effective AI assessment must address two distinct but interrelated layers of risk:

- AI model security: Ensure the integrity of the model artifacts themselves that make up the entire AI supply chain, from training data to compiled weights.

- AI Red Teaming: Ensuring Security action Reduce the impact of your model or app on the deployed context and address immediate exposure to adversarial inputs at runtime.

prisma airs®offers these capabilities and more as an integrated solution, giving CIOs a practical overview of their AI risk posture, from core artifacts to actual application behavior.

AI Model Security secures your AI supply chain, one model at a time

AI models are now some of the most valuable intellectual property, often representing millions of dollars of investment and years of unique data curation. However, modern MLOps workflows often rely heavily on third-party and open source models, which is the speed multiplier required to simultaneously create supply chain vulnerabilities on an unprecedented scale.

Risks of malicious AI models

Models imported from public repositories or partners may contain hidden threats that can lead to serious security incidents. This can range from embedded malware or Trojan horse models to hidden backdoors that facilitate IP disclosure, allowing attackers to execute arbitrary code once the model is loaded into a sensitive environment. When a model’s integrity is compromised, everything built on top of it becomes fundamentally flawed.

Prisma AIRS AI model security

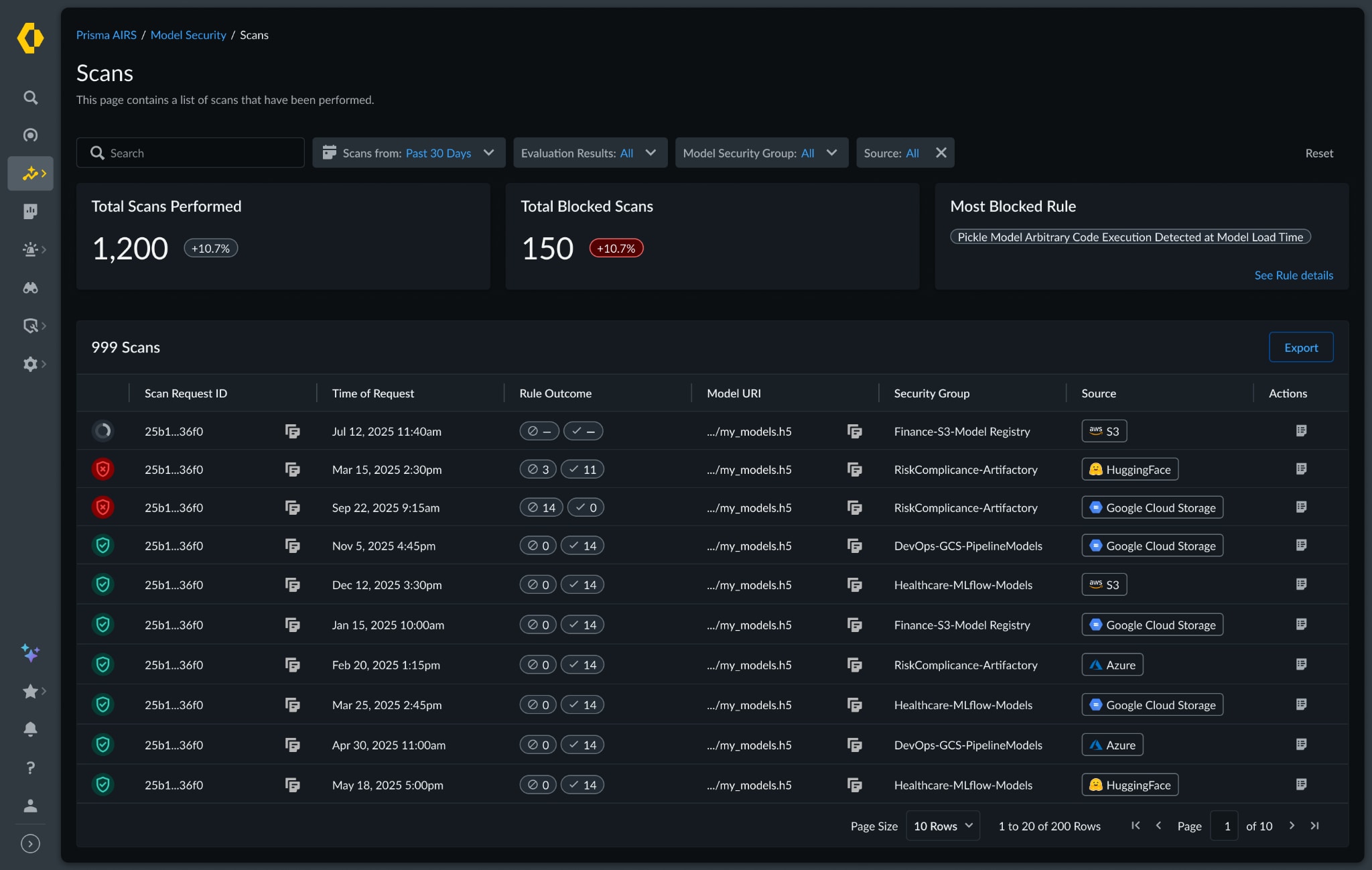

Prisma AIRS provides AI model security by moving inspection to the left side of the CI/CD pipeline and model registry. It is purpose-built to look deep inside model artifacts, examining the complex structure of model weights, metadata, and dependencies for over 35 model formats (including PyTorch, TensorFlow, Keras, and more) for architectural weaknesses or explicit threats.

Verified provenance and threat intelligence: Prisma AIRS AI Model Security leverages the combined power of Unit 42 and the 19,000+ members of the Huntr community to deliver curated critical intelligence. Prisma AIRS goes beyond file scanning to check the origin and integrity of complex data formats that often travel with open source components, dependencies, and models, and flags components associated with known AI-specific vulnerabilities.

With this tight integration and pre-deployment inspection, Prisma AIRS AI Model Security is designed to ensure the integrity of core model assets before they reach production, thereby neutralizing threats that traditional code and vulnerability scanners would otherwise miss.

Don’t fall into the guardrail trap. Watch our latest webinars Learn about the risks you may face when AI models in your AI ecosystem have hidden vulnerabilities.

AI Red Teaming turns behavioral risk into actionable governance

Even completely clean and validated model artifacts can be exploited once deployed through prompt injection or behavioral manipulation. Speeding up AI development and deployment requires continuous, scalable testing. Unfortunately, manual red teaming exercises are time-consuming, expensive, and quickly become obsolete against the ever-evolving threats targeting AI systems. Human testers cannot generate the amount and type of adversarial samples needed to secure a continuous delivery pipeline.

Risk of malicious AI behavior

The behavioral risk that AI produces harmful, illegal, or harmful output is one of the most significant impacts that threat managers must manage. These failures can be caused by advanced jailbreaks or unsafe output handling that return malicious code or denial-of-service vectors. Large language models are nondeterministic, so even under normal usage, rare edge cases can occur. Left unchecked, organizations are exposed to financial losses, data breaches, regulatory fines, and serious reputational damage. Therefore, continuous and systematic red teaming (testing both intended workflows and adversarial edge cases) is essential.

Autonomous, context-aware AI red teaming

Prisma AIRS automates this critical function and provides continuous security testing that scales with the speed of deployment. This is security through the speed of AI.

- Dynamic attack simulation: Prisma AIRS AI Red Teaming deploys dynamic, conversational AI Red Teaming agents that mimic real-world attacker behavior. The agent combines advanced techniques such as obfuscation, role-playing, and attempts to extract system prompts to tailor attacks based on application responses to truly stress test system defensibility.

- Comprehensive and contextual coverage: The platform executes over 500 different real-world attack scenarios, including exfiltrating data, generating harmful content, and manipulating system prompts. Importantly, these scenarios can be customized to the business context of your application to provide meaningful, high-fidelity insights.

- Governance and viability: Prisma AIRS AI Red Teaming provides CIOs with aggregated risk scores and findings reporting across their entire AI application portfolio. This is a single quantifiable metric for the board. Additionally, all discovered vulnerabilities are directly mapped to leading governance frameworks such as LLM’s OWASP Top 10 and the NIST AI Risk Management Framework. This mapping is critical because it allows security teams to demonstrate auditable compliance.

AI Red Teaming and Prisma AIRS provide an auditable, prioritized list of vulnerabilities that AI teams can focus on. Faster, more proactive identification enables faster remediation, which helps organizations demonstrate confidence to regulators and customers.

Red team the system before the attacker does. Watch the webinar Find out why red teaming AI is an essential part of securing your AI deployment.

Convert uncertainty into measurable confidence

Executives across all industries are encouraging their teams to move from curiosity to full-scale adoption, and the winners in this AI race will be those that act with speed and absolute trust. But pursuing AI innovation without a foundational security strategy is simply accepting unacceptable financial and reputational debt. Piecing together point solutions is only a short-term solution.

Prisma AIRS is designed to be an effective solution to the AI black box problem by providing AI model security and AI red teaming in a single integrated platform, turning uncertainty into measurable trust at scale.

Protect your AI infrastructure. Let’s protect your future. Deploy bravely.

Contact us now Learn more about how Prisma AIRS can help you solve AI black box problems.