Scientists increasingly rely on neural emulators to approximate solutions of complex physical systems, but ensuring that these models reliably capture the underlying symmetries remains a major challenge. Los Alamos National Laboratory’s James Amarel, Robyn Miller, and Nicolas Hegartner, along with colleagues Migliori, Castleton, and Skurikhin, have developed a diagnostic that goes beyond simple tests of forward-pass behavior to assess how well neural networks internalize physical symmetries. Their work introduces an influence-based method that measures how parameter updates propagate between symmetry-related states, effectively probing the local geometry of the loss landscape. By applying this technique to fluid emulators, the team shows that “gradient coherence” is important for learning to generalize across symmetry transformations and for identifying basins compatible with those symmetries, offering a new way to evaluate surrogate models and check whether they have truly learned the physics.

This approach goes beyond a simple test of forward-pass equivariance and directly examines learning dynamics to determine whether a model is learning the underlying physics or just memorizing patterns in the training data. The research focuses on how neural networks represent physical systems governed by equations such as the Navier-Stokes equations, which exhibit symmetries such as translation, rotation, and reflection. These symmetries define equivalence classes of solutions, or “orbits,” which means that a well-trained emulator should treat physically equivalent states consistently.

The researchers hypothesized that a model that has truly internalized the solution operator would propagate information across these orbits, as evidenced by the alignment of loss gradients evaluated at symmetry-related inputs. This coherence indicates that training updates reinforce one another rather than pulling physically equivalent configurations apart, an important indicator of robust generalization. Measuring this mutual influence therefore provides a diagnostic that goes beyond standard forward-pass checks and reveals how physically consistent the training updates are. The study contributes to three areas of machine learning research: interpretability, generalization theory, and scientific machine learning.
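The gradient alignment described above can be illustrated with a toy example. The following is a minimal sketch, not the authors' setup: it assumes a 1D periodic signal, cyclic shifts as the symmetry, a hypothetical shift-equivariant target operator (`target`), and two hypothetical linear models, one weight-tied (a circular convolution kernel) and one dense. It compares the cosine similarity of the loss gradients at an input and at a shifted copy of that input.

```python
import math
import random

def shift(v, s):
    """Cyclically shift a list by s positions (the toy symmetry)."""
    n = len(v)
    return [v[(i - s) % n] for i in range(n)]

def target(v):
    """Hypothetical shift-equivariant operator: mean of each cell and its right neighbour."""
    n = len(v)
    return [0.5 * (v[i] + v[(i + 1) % n]) for i in range(n)]

def conv_grad(k, x):
    """Gradient of 0.5*||conv(k, x) - target(x)||^2 with respect to the circular kernel k."""
    n = len(x)
    y = target(x)
    r = [sum(k[j] * x[(i + j) % n] for j in range(n)) - y[i] for i in range(n)]
    return [sum(r[i] * x[(i + j) % n] for i in range(n)) for j in range(n)]

def dense_grad(W, x):
    """Gradient of 0.5*||W x - target(x)||^2 with respect to the dense matrix W (flattened)."""
    n = len(x)
    y = target(x)
    r = [sum(W[i][j] * x[j] for j in range(n)) - y[i] for i in range(n)]
    return [r[i] * x[j] for i in range(n) for j in range(n)]

def cosine(a, b):
    """Cosine similarity between two flat gradient vectors."""
    dot = sum(p * q for p, q in zip(a, b))
    return dot / math.sqrt(sum(p * p for p in a) * sum(q * q for q in b))

random.seed(0)
n = 8
x = [random.gauss(0, 1) for _ in range(n)]
k = [random.gauss(0, 1) for _ in range(n)]
W = [[random.gauss(0, 1) for _ in range(n)] for _ in range(n)]
x_shifted = shift(x, 3)  # a symmetry-related input on the same orbit

coh_conv = cosine(conv_grad(k, x), conv_grad(k, x_shifted))
coh_dense = cosine(dense_grad(W, x), dense_grad(W, x_shifted))
print(f"weight-tied model: {coh_conv:.3f}")
print(f"dense model:       {coh_dense:.3f}")
```

In this toy setting the weight-tied model produces the same gradient for every point on the orbit, so its coherence is 1 up to rounding. The dense model has no such guarantee, so its gradients at symmetry-related inputs are generally misaligned.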

By leveraging influence functions, the team extends interpretability techniques to probe training dynamics rather than model outputs alone. The study also frames symmetry learning as a basin selection problem within a loss landscape governed by orbit-wise gradient coherence, which informs generalization theory. Within scientific machine learning, it offers a principled diagnostic for assessing whether a neural emulator has learned the symmetries inherent in the solution operator. The resulting framework assesses symmetry-consistent behavior through both forward-pass checks and probes of the learning dynamics, providing a tool for evaluating and improving the generalization of neural emulators.

Gradient coherence reveals symmetry learning in emulators

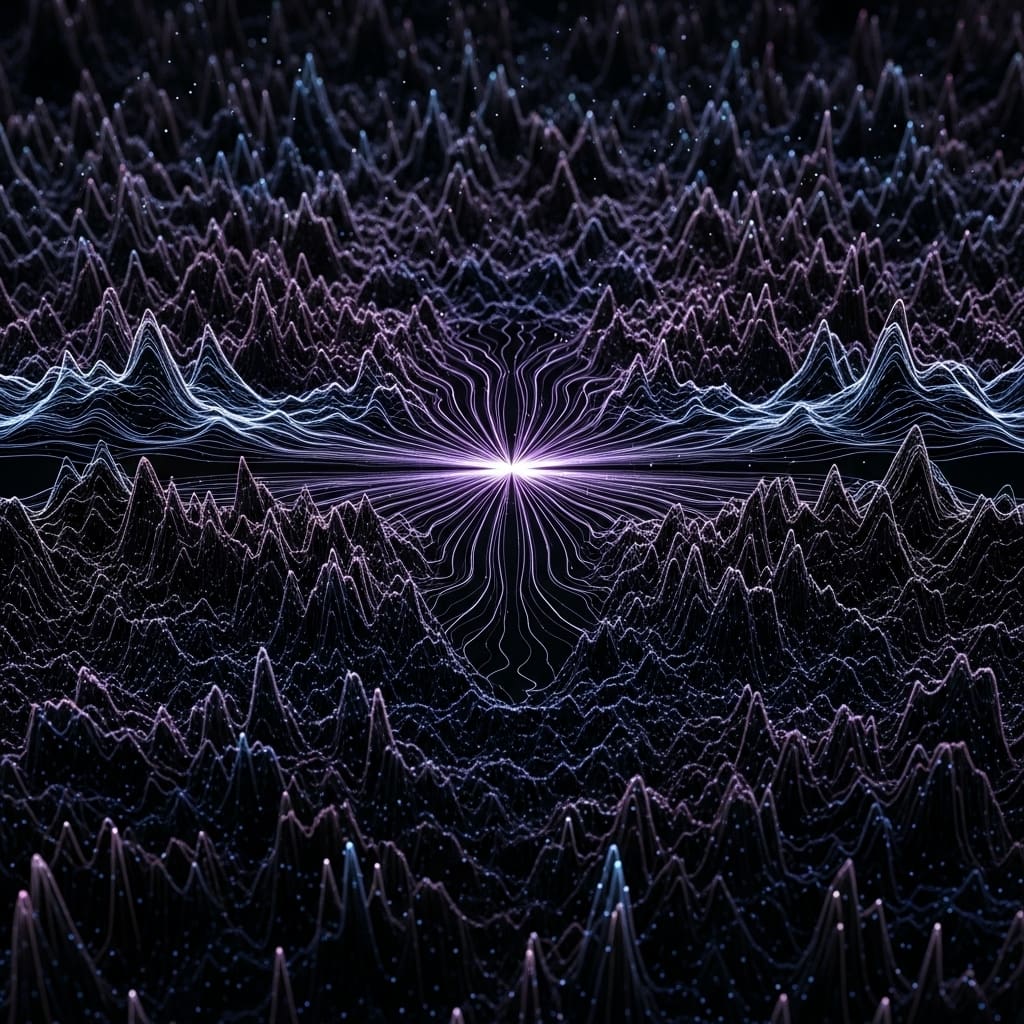

This work introduces a method to quantify a model’s ability to generalize across symmetry orbits by framing symmetry learning as a basin selection problem within a loss landscape. The researchers designed a symmetry-aware gradient diagnostic that leverages influence functions to investigate training dynamics directly, extending previous explainability frameworks beyond the analysis of forward-pass behavior. The approach uses the Lie derivative of the cost function along the gradient direction induced by each individual test example, expressed as $V^\mu = -\chi^{\mu\nu}\,\partial_\nu C_x$, to define a vector field representing the contribution of each example to the loss. To broaden the scope, the analysis was extended to models trained on velocity fields generated by the Navier-Stokes equations using NS-BB, NS-Gauss, and NS-Sines initial conditions, which represent qualitatively different feature spaces. The models were trained in distributed mode on two 40GB A100 GPUs using Lux.jl and Zygote.jl, with three seeds controlling initialization and dataset partitioning, and results are reported with quantile range bars to capture variation.
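As a reading aid, the influence of one example on another can be written out from the vector field mentioned above. This is a sketch under stated assumptions, not the paper's derivation: $\theta$ denotes the model parameters, $C_x$ and $C_y$ are the costs on examples $x$ and $y$, $\chi^{\mu\nu}$ is read as a preconditioner (for plain gradient descent, the learning rate times the identity), and the symbol $\mathcal{I}(x \to y)$ is introduced here for illustration only.

$$
V_x^{\mu} = -\chi^{\mu\nu}\,\partial_\nu C_x,
\qquad
\mathcal{I}(x \to y) \;=\; \mathcal{L}_{V_x} C_y \;=\; V_x^{\mu}\,\partial_\mu C_y \;=\; -\chi^{\mu\nu}\,\partial_\nu C_x\,\partial_\mu C_y .
$$

To first order, moving the parameters by $\epsilon V_x$ changes $C_y$ by $\epsilon\,\mathcal{I}(x \to y)$. A negative value therefore means that an update driven by $x$ lowers the loss on $y$, and a positive value means it raises it. If $\chi$ is positive definite, the self-influence $\mathcal{I}(x \to x)$ is never positive.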

Gradient coherence reveals symmetry internalization in emulators

The team has developed a new method for evaluating symmetry internalization in neural emulators of the solution operators of partial differential equations. Experiments show that the proposed orbit-wise gradient coherence is a local property of the trained model’s loss landscape, providing a way to determine whether a surrogate model has internalized the symmetry properties of a known solution operator. The results show that neglect of a symmetry can manifest not only in the representation space but also in the local geometry of the loss landscape, where it can prevent a consistent update structure across symmetry-related inputs. Each state snapshot consisted of a 128 × 128 grid of mass density, Cartesian momentum density, and energy density.

The data show that, despite having fewer parameters, the ViT consistently outperforms the UNets on test metrics, suggesting a more efficient learning process. The influence function, expressed as the Lie derivative of the cost along the gradient direction, quantifies whether a gradient update induced by one example reduces or increases the loss on another example. When applied to symmetry-related inputs, the neural tangent kernel acts as an analog of Fisher information and measures whether the learning signal propagates coherently along the symmetry orbit. The researchers evaluated the influence function across six test mini-batches and standardized the resulting influence matrix by normalizing with respect to the empirical variance, so that uniformity serves as the baseline for unstructured stochastic variation and deviations indicate influence beyond random noise.
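The pairwise influence described above can be sketched on a toy problem. This is a minimal illustration, not the authors' implementation: it assumes a hypothetical two-parameter linear model with squared-error loss, plain gradient descent (so the influence of example `a` on example `b` is the learning rate times the negative dot product of their gradients), and a simple standardization that divides by the empirical standard deviation of the matrix entries. The paper's exact normalization may differ.

```python
import statistics

# Hypothetical toy data: three examples, two parameters.
xs = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
ys = [1.0, 2.0, 3.0]
theta = [0.5, 0.5]
lr = 1e-3  # learning rate, assumed to play the role of the preconditioner

def loss(th, x, y):
    """Squared-error loss of the linear model th . x on one example."""
    return 0.5 * (sum(t * xi for t, xi in zip(th, x)) - y) ** 2

def grad(th, x, y):
    """Gradient of the loss with respect to th."""
    r = sum(t * xi for t, xi in zip(th, x)) - y
    return [r * xi for xi in x]

grads = [grad(theta, x, y) for x, y in zip(xs, ys)]

# influence[a][b]: first-order change in the loss on b after one step on a.
influence = [[-lr * sum(p * q for p, q in zip(ga, gb)) for gb in grads]
             for ga in grads]

def actual_change(a, b):
    """Exact change in the loss on b after one gradient step on a."""
    stepped = [t - lr * g for t, g in zip(theta, grads[a])]
    return loss(stepped, xs[b], ys[b]) - loss(theta, xs[b], ys[b])

# Largest gap between the first-order estimate and the exact change.
max_err = max(abs(influence[a][b] - actual_change(a, b))
              for a in range(3) for b in range(3))

# Assumed standardization: rescale entries to unit empirical standard deviation.
flat = [v for row in influence for v in row]
scale = statistics.pstdev(flat)
standardized = [[v / scale for v in row] for row in influence]

for row in standardized:
    print(["{:+.2f}".format(v) for v in row])
```

Examples 0 and 1 have orthogonal gradients here, so neither influences the other, while a step on either of them lowers the loss on example 2. After standardization, entries can be compared against a unit-variance baseline.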

Symmetry learning linked to gradient coherence improves generalization

These findings indicate that equivariance error at test time is related to how training dynamics distribute influence across symmetry orbits, and they distinguish models that truly learn shared structure from those that simply assemble local estimators. Although symmetry-agnostic architectures can achieve low errors, they may allocate influence unevenly and interpolate accurately without internalizing the underlying physics, so high prediction accuracy does not guarantee robustness under symmetry transformations. Conversely, explicitly equivariant layers, such as those in UNets, promote data efficiency and generalization through uniform gradient coupling, but this can slow down optimization. The authors acknowledge that their diagnostic characterizes symmetry learning relative to the training distribution, meaning that biases in the data representation can influence the measured coherence.

They also note that while transformers are scalable, they can converge rapidly using specialized gradients that sacrifice faithful symmetry representations. Future research should focus on developing approximate or relaxed symmetry mechanisms that balance the need for generalization with the flexibility required for efficient optimization, potentially combining the strengths of transformer and equivariant modeling. The study provides a useful diagnostic for building confidence in scientific machine learning systems, especially when robustness under symmetry is paramount.

👉 More information

🗞 Loss landscape geometry and learning symmetries: Or what influence functions reveal about robust generalization.

🧠ArXiv: https://arxiv.org/abs/2601.20172