To evaluate the effectiveness of the proposed SADE-KAN model, a simulation environment was developed in Python, following the architectural specifications outlined in Sections “Kolmogorov arnold network” and “Optimization of KAN with SADE”. All experiments were executed using Python version 3.12.0 on a Windows 11 platform, running on a 2.60 GHz 64-bit Intel Core i5-4210U processor with 12 GB RAM and a 500 GB hard drive. The hourly load data from ISO-NE, preprocessed as described in Section “Dataset and preprocessing”, served as the input to the forecasting model. Data partitioning details are provided in Table 6. The performance of SADE-KAN was benchmarked against conventional MLP, the original KAN, and several recently proposed state-of-the-art models. Evaluation was based on key performance indicators, including mean absolute error (MAE), root mean squared error (RMSE), mean absolute percentage error (MAPE), training time, and model complexity (measured by the number of parameters), across multiple forecasting horizons.

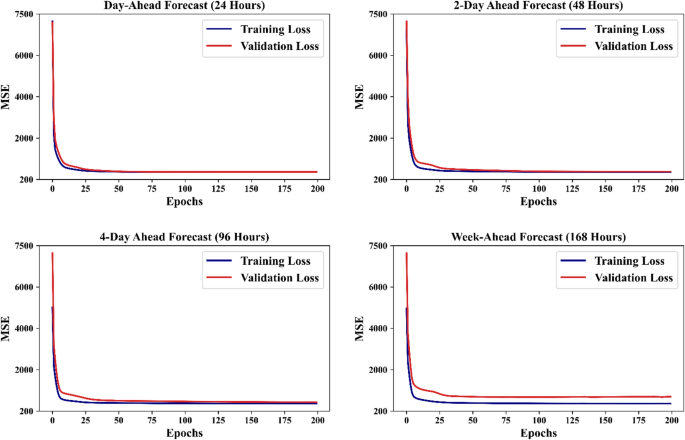

The experiments were structured to compare models with differing architectures systematically, in order to assess the impact of architecture on forecasting accuracy, training efficiency, and model complexity. Each model was trained for 200 epochs using the Adam optimizer with a learning rate of 0.001, with mean squared error (MSE) as the training and validation loss function, calculated as 49

$$MSE = \frac{1}{N}\sum_{i = 1}^{N} \left( \hat{Y} - Y \right)^{2}$$

(14)
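The training configuration described above (200 epochs, Adam, learning rate 0.001, MSE loss as in Eq. (14)) can be illustrated with a minimal pure-Python sketch. This is not the authors' implementation: the one-feature linear model, the synthetic data, and the name `adam_fit` are hypothetical, and `beta1`, `beta2`, and `eps` are set to commonly used Adam defaults that the text does not specify.

```python
import math
import random


def adam_fit(xs, ys, epochs=200, lr=0.001, beta1=0.9, beta2=0.999, eps=1e-8):
    """Fit y ~ w * x + b with full-batch Adam on the MSE loss (illustrative only)."""
    params = [0.0, 0.0]   # [w, b]
    m = [0.0, 0.0]        # first-moment estimates
    v = [0.0, 0.0]        # second-moment estimates
    n = len(xs)
    history = []          # MSE recorded before each update
    for t in range(1, epochs + 1):
        residuals = [params[0] * x + params[1] - y for x, y in zip(xs, ys)]
        history.append(sum(r * r for r in residuals) / n)
        grads = [
            (2.0 / n) * sum(r * x for r, x in zip(residuals, xs)),  # dMSE/dw
            (2.0 / n) * sum(residuals),                             # dMSE/db
        ]
        for k in range(2):
            m[k] = beta1 * m[k] + (1 - beta1) * grads[k]
            v[k] = beta2 * v[k] + (1 - beta2) * grads[k] ** 2
            m_hat = m[k] / (1 - beta1 ** t)   # bias-corrected first moment
            v_hat = v[k] / (1 - beta2 ** t)   # bias-corrected second moment
            params[k] -= lr * m_hat / (math.sqrt(v_hat) + eps)
    return params, history


# Synthetic data (assumption): a noiseless line y = 2x + 0.5.
random.seed(0)
xs = [random.uniform(-1.0, 1.0) for _ in range(200)]
ys = [2.0 * x + 0.5 for x in xs]
params, history = adam_fit(xs, ys)
print(f"MSE at epoch 1: {history[0]:.4f}; at epoch 200: {history[-1]:.4f}")
```

With a learning rate this small, 200 full-batch updates move each parameter only a short distance, so the sketch shows the loss decreasing rather than converging.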

The evaluation metrics used in this experiment to assess the performance of these networks are MAE, RMSE, and MAPE, which are calculated as 49

$$MAE = \frac{1}{N}\sum_{i = 1}^{N} \left| \hat{Y} - Y \right|$$

(15)

$$RMSE = \sqrt{\frac{1}{N}\sum_{i = 1}^{N} \left( \hat{Y} - Y \right)^{2}}$$

(16)

$$MAPE = \frac{1}{N}\sum_{i = 1}^{N} \frac{\left| \hat{Y} - Y \right|}{Y} \times 100\%$$

(17)

where N denotes the number of samples, and \(\hat{Y}\) and \(Y\) represent the predicted and corresponding actual load, respectively. The MSE averages the squared differences between predicted and actual values; because squaring penalizes larger errors more heavily, the metric is sensitive to outliers and significant deviations. The MAPE quantifies the average deviation between the predicted and actual values, expressed as a percentage of the actual load. The MAE is the average of the absolute differences between predicted and actual values, providing a direct and interpretable measure of the average forecasting error in the units of the load (MW). The RMSE is the square root of the MSE; it is also expressed in MW and, like the MSE, gives greater weight to large errors. Lower values of MAE, MAPE and RMSE indicate more accurate and reliable forecasts.
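The four quantities described above can be sketched in a few lines of Python. The function names and the three-sample load values are hypothetical, chosen only to make the arithmetic easy to follow; MAE is computed as the mean absolute difference, as described in the text, and MAPE assumes that no actual value is zero.

```python
import math


def mse(actual, predicted):
    """Mean of the squared differences."""
    return sum((p - a) ** 2 for a, p in zip(actual, predicted)) / len(actual)


def mae(actual, predicted):
    """Mean of the absolute differences."""
    return sum(abs(p - a) for a, p in zip(actual, predicted)) / len(actual)


def rmse(actual, predicted):
    """Square root of the MSE, in the same units as the data."""
    return math.sqrt(mse(actual, predicted))


def mape(actual, predicted):
    """Mean absolute percentage error; assumes every actual value is non-zero."""
    return sum(abs(p - a) / abs(a) for a, p in zip(actual, predicted)) / len(actual) * 100.0


# Hypothetical loads in MW, not taken from the ISO-NE data.
actual = [100.0, 200.0, 400.0]
predicted = [110.0, 190.0, 420.0]

print(f"MSE  = {mse(actual, predicted):.2f}")
print(f"MAE  = {mae(actual, predicted):.2f} MW")
print(f"RMSE = {rmse(actual, predicted):.2f} MW")
print(f"MAPE = {mape(actual, predicted):.2f} %")
```

In this toy case the single 20 MW error pulls the RMSE (about 14.14 MW) above the MAE (about 13.33 MW), which illustrates the heavier weighting of large errors.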

Results and comparative assessment

The evaluation results show how SADE-KAN performs in comparison to the traditional MLP, the original KAN and recently published advanced models. The predictions obtained using the proposed model approximate the actual system load more closely across all forecasting horizons. Fig. 6 depicts the training and validation loss curves across all forecast horizons. The curves show rapid convergence without signs of overfitting: the training loss decreases steadily with each epoch, and the validation loss follows a similar trajectory, which indicates robust generalization to unseen data. This stability in the learning process supports the robustness and reliability of the proposed SADE-KAN.

Fig. 6. KANs learning evaluation on ISO-NE-CA hourly data.

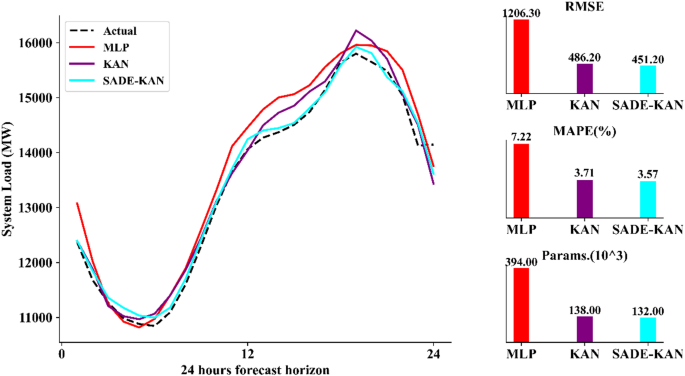

Additionally, Fig. 7 illustrates the 24-h forecasting performance, where SADE-KAN captures rapid changes in system load more effectively than both the KAN and MLP models. While the original KAN and MLP show occasional over- or under-prediction, especially in the middle hours of the forecast horizon, SADE-KAN adapts more quickly to sudden shifts in load demand. This enhanced adaptability demonstrates the suitability of SADE-KAN for dynamic load forecasting, which is a critical aspect of effective load management.

Fig. 7. One-day-ahead forecasted load with hourly resolution using MLP, KAN and SADE-KAN.

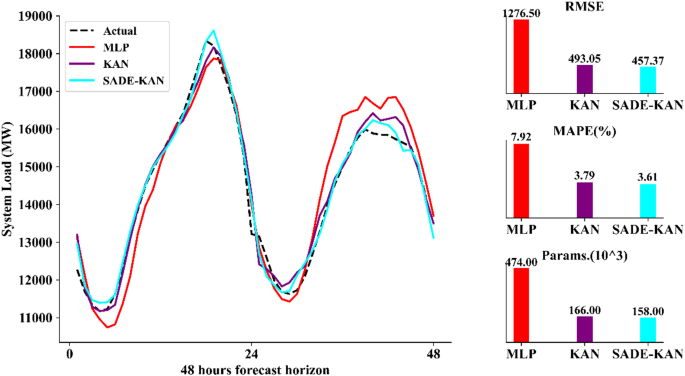

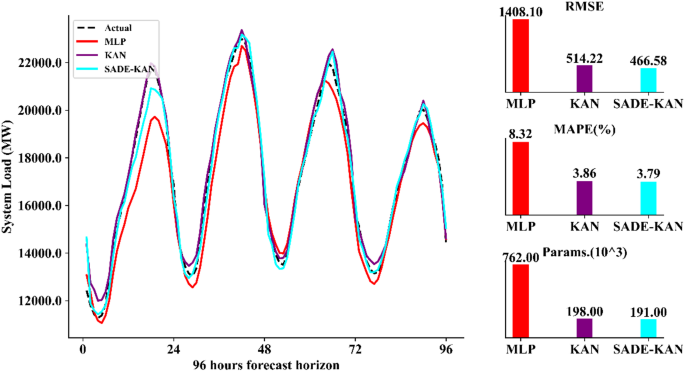

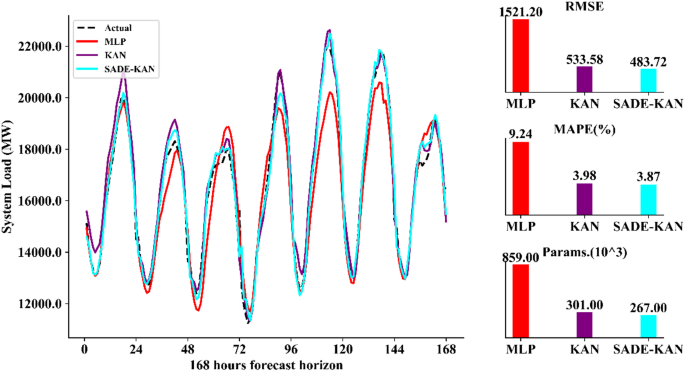

Further, the capability of SADE-KAN to capture dynamic load variations is evident in the load forecasts for the next 48 h, 96 h and 168 h as illustrated in Figs. 8, 9, and 10 respectively. SADE-KAN consistently outperforms both the original KAN and MLP models in capturing rapid load fluctuations, especially at longer forecast horizons. While KAN demonstrates a robust capacity to model load variations, it shows some limitations in tracking abrupt changes compared to the enhanced adaptability of SADE-KAN. In contrast, the MLP model exhibits a noticeable lag in responding to these variations, particularly over the longer horizons, where it struggles to capture immediate shifts in load. The ability of SADE-KAN to adjust quickly and accurately to these dynamic changes across longer forecasting horizons not only demonstrates its robustness but also makes it a more reliable and effective model for STLF.

Fig. 8. Two-days-ahead forecasted load with hourly resolution using MLP, KAN and SADE-KAN.

Fig. 9. Four-days-ahead forecasted load with hourly resolution using MLP, KAN and SADE-KAN.

Fig. 10. Week-ahead forecasted load with hourly resolution using MLP, KAN and SADE-KAN.

To quantify the performance of the proposed model, a comparison with MLP and KAN is presented in Table 7. The table reports MAPE, MAE, RMSE, training time, and the number of trainable parameters across four forecasting horizons: 24, 48, 96, and 168 h. Across all horizons, SADE-KAN consistently achieves the lowest error values, with a MAPE ranging from 3.57% (24-h horizon) to 3.87% (168-h horizon), compared to 3.71–3.98% for KAN and a significantly higher 7.22–9.24% for MLP. MAE and RMSE follow the same trend. For instance, at the 168-h horizon, SADE-KAN achieves an RMSE of 483.72 MW and an MAE of 397.16 MW, outperforming KAN (533.58 MW RMSE, 435.75 MW MAE) and substantially surpassing MLP (1521.2 MW RMSE, 1176.2 MW MAE). This consistent accuracy across increasing horizons underlines the robustness and generalization capability of SADE-KAN. Notably, while MLP suffers a sharp degradation in performance as the horizon lengthens, SADE-KAN maintains stable accuracy with only marginal increases in error metrics.
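To put the 168-h figures quoted above on a common scale, the following sketch computes the percentage by which SADE-KAN lowers each error relative to KAN and MLP. Only the six error values come from the text; the helper `relative_reduction` and the dictionary layout are hypothetical.

```python
def relative_reduction(baseline, proposed):
    """Percentage by which `proposed` is lower than `baseline`."""
    return (baseline - proposed) / baseline * 100.0


# 168-h errors in MW, as quoted in the text from Table 7.
errors_168h = {
    "SADE-KAN": {"RMSE": 483.72, "MAE": 397.16},
    "KAN": {"RMSE": 533.58, "MAE": 435.75},
    "MLP": {"RMSE": 1521.2, "MAE": 1176.2},
}

reductions = {}
for baseline in ("KAN", "MLP"):
    for metric in ("RMSE", "MAE"):
        reductions[(baseline, metric)] = relative_reduction(
            errors_168h[baseline][metric], errors_168h["SADE-KAN"][metric]
        )
        print(f"{metric} vs {baseline}: {reductions[(baseline, metric)]:.1f}% lower")
```

On these values the reduction is roughly 9% against KAN and roughly two thirds against MLP, which matches the qualitative description of a modest gain over KAN and a large gain over MLP.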

In terms of computational cost, SADE-KAN incurs higher training times than MLP but remains comparable to or slightly more efficient than the original KAN. For example, at the 96-h horizon, SADE-KAN completes training in 1819.9 s, whereas KAN requires 1862.6 s. Moreover, SADE-KAN achieves this improved performance with a slightly reduced number of trainable parameters compared to KAN, further highlighting the efficiency of the proposed model.

A significant advantage of SADE-KAN is that it combines accuracy with parameter efficiency. With only 132,000 trainable parameters for the 24-h forecasting horizon, SADE-KAN significantly reduces model complexity compared to the MLP, which requires 394,000 parameters for the same task. Across all forecasting horizons, SADE-KAN uses, on average, approximately 35% of the parameters used by the MLP, while also improving upon the parameter count of the original KAN. This substantial reduction in model size indicates that SADE-KAN can deliver superior forecasting performance with a smaller model footprint, which is a critical attribute for deployment in resource-constrained environments.
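As a quick check of the 24-h figures quoted above, the parameter ratio works out as follows (a worked calculation from the two counts in the text, not an additional result):

$$\frac{132{,}000}{394{,}000} \approx 0.335$$

That is, SADE-KAN uses about 33.5% of the MLP parameter count at the 24-h horizon, in line with the reported cross-horizon average of approximately 35%.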

In terms of accuracy, SADE-KAN consistently outperforms the MLP across all forecast horizons, achieving significantly lower MAPE, MAE, and RMSE values. Furthermore, it enhances the original KAN by optimizing its training process and improving its capacity to model complex load patterns. These enhancements result in more precise and reliable forecasts, particularly for extended horizons where traditional models often degrade in performance.

While the proposed model achieves these gains, it still requires longer training time than MLP. This remains the only area where MLP holds an advantage. However, compared to the original KAN, SADE-KAN slightly reduces the training time, offering improvement in computational efficiency. Despite the longer training duration relative to MLP, the substantial improvements in forecast accuracy and reduced error rates make SADE-KAN a much more effective model for STLF practices.

Input features sensitivity analysis

The performance of the SADE-KAN model varies significantly with the number of input features used, as illustrated in Table 8. In this table, the GCA grade determines the proportion of features retained (a higher grade retains fewer features), and its effect on model performance is analyzed across all forecasting horizons. At GCA grade 0.5, with 18 input features, the model exhibits relatively high error metrics, with an RMSE of 1296.78 MW, MAE of 989.65 MW, and MAPE of 6.9%. As the number of features is reduced, performance improves, reaching optimal accuracy at GCA grade 0.7 with 8 features. At this level, the RMSE drops to 451.22 MW, MAE to 353.69 MW, and MAPE to 3.57%, indicating that a moderate reduction in input features enhances the model's ability to forecast load with greater precision.

Further reduction in the number of features, as seen at GCA grades 0.74 and 0.9, degrades performance. The RMSE increases to 492.18 MW and 583.29 MW, respectively, while MAPE rises to 3.87% and 6.52%; the deterioration is slight at grade 0.74 and more pronounced at grade 0.9. These results suggest that while reducing input features can improve model performance up to a point, there is a threshold beyond which accuracy declines due to the loss of critical information that is necessary for effective forecasting.
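The selection of the best GCA grade can be sketched as a simple minimum over the values quoted above. Only the RMSE and MAPE figures stated in the text are used; the list name `table8_excerpt` and its field names are hypothetical, and the remaining Table 8 entries are left out rather than guessed.

```python
# RMSE (MW) and MAPE (%) per GCA grade, as quoted in the text from Table 8.
table8_excerpt = [
    {"gca_grade": 0.5, "rmse_mw": 1296.78, "mape_pct": 6.9},
    {"gca_grade": 0.7, "rmse_mw": 451.22, "mape_pct": 3.57},
    {"gca_grade": 0.74, "rmse_mw": 492.18, "mape_pct": 3.87},
    {"gca_grade": 0.9, "rmse_mw": 583.29, "mape_pct": 6.52},
]

# Pick the grade with the lowest error under each metric.
best_by_mape = min(table8_excerpt, key=lambda row: row["mape_pct"])
best_by_rmse = min(table8_excerpt, key=lambda row: row["rmse_mw"])

print(f"Lowest MAPE at GCA grade {best_by_mape['gca_grade']}")
print(f"Lowest RMSE at GCA grade {best_by_rmse['gca_grade']}")
```

Both metrics select grade 0.7, so the choice of optimum does not depend on which of the two error measures is used.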

Performance in longer forecast horizons

The forecasting capability of SADE-KAN across extended time horizons is presented in Table 9. This evaluation aims to assess its temporal generalization ability and its capacity to maintain predictive accuracy as the forecast window is stretched from 24 to 168 h.

At the 24-h horizon, SADE-KAN achieves a MAPE of 3.57%, MAE of 353.69 MW, and RMSE of 451.22 MW, establishing a strong baseline performance. As the forecasting horizon extends to 48, 96, and 168 h, the model exhibits a gradual increase in error, which is expected due to the cumulative uncertainty associated with longer temporal predictions. However, the degradation is minimal and consistent, indicating its robustness. Specifically, at 168 h, the MAPE increases only to 3.87%, with MAE and RMSE rising moderately to 397.16 MW and 483.72 MW, respectively. This marginal increase in forecasting error over a 7-day horizon is a noteworthy result. It suggests that SADE-KAN is capable of learning complex temporal dependencies and preserving stability over extended prediction intervals. Additionally, while the runtime increases with the horizon, growing from 1519.9 s for 24 h to 2307.2 s for 168 h, the computational cost remains within practical limits.
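The degradation from the 24-h to the 168-h horizon can be expressed as a relative increase per metric. The sketch below uses only the values quoted above; the helper `relative_increase` and the dictionary `horizon_values` are hypothetical names.

```python
def relative_increase(start, end):
    """Percentage increase from `start` to `end`."""
    return (end - start) / start * 100.0


# (24-h value, 168-h value) for each quantity, as quoted in the text.
horizon_values = {
    "MAPE (%)": (3.57, 3.87),
    "MAE (MW)": (353.69, 397.16),
    "RMSE (MW)": (451.22, 483.72),
    "Runtime (s)": (1519.9, 2307.2),
}

increase = {
    name: relative_increase(start, end)
    for name, (start, end) in horizon_values.items()
}

for name, pct in increase.items():
    print(f"{name}: +{pct:.1f}% from 24 h to 168 h")
```

On these figures the error metrics grow by roughly 7 to 12% while the runtime grows by roughly 52%, so extending the horizon sevenfold costs more in training time than in accuracy.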

Importantly, the model avoids the steep performance degradation typically seen in conventional architectures such as MLP when extrapolated to multi-day forecasts. These results affirm that SADE-KAN not only delivers high accuracy for immediate forecasts but also sustains performance across longer-term horizons, making it suitable for diverse STLF applications with varying planning requirements.

Performance compared to advanced models

To further establish the efficacy and competitiveness of the proposed SADE-KAN model, its forecasting performance was benchmarked against several recently published advanced machine learning and deep learning approaches. The comparison, summarized in Table 10, includes state-of-the-art models such as Bi-directional LSTM (Bi-LSTM) 50, CNN-LSTM hybrid 50, extreme gradient boosting (XGBoost) 51, light gradient boosting machine (LightGBM) 51, and an ensemble of XGBoost, gradient boosting regressor (GBR), support vector regressor (SVR) and K nearest neighbors (KNN) reported in 52.

SADE-KAN consistently outperforms all compared models across key accuracy metrics. It achieves the lowest MAPE of 3.57%, indicating superior relative error performance. In terms of absolute metrics, SADE-KAN attains an RMSE of 451.22 MW and an MAE of 353.69 MW, both of which are the lowest among all models presented. While the ensemble method reports a comparable MAPE of 3.69%, its extremely high RMSE of 2920.7 MW and MAE of 2282.1 MW expose significant absolute deviation, suggesting poor generalization despite a favorable percentage error.

Compared to Bi-LSTM and CNN-LSTM, SADE-KAN offers clear gains in accuracy. It reduces MAPE by 22.7% and 15.8% relative to Bi-LSTM and CNN-LSTM, respectively, while also improving RMSE and MAE. Notably, SADE-KAN also demonstrates a more efficient runtime profile than gradient boosting models such as XGBoost and LightGBM, which require over 3600 s for training. SADE-KAN completes training in 1519.9 s, offering a practical balance between accuracy and computational cost.

In summary, SADE-KAN surpasses the accuracy of deep learning and ensemble-based models, while maintaining computational feasibility. These results underscore its effectiveness and suitability for real-world STLF applications where both precision and efficiency are critical.