- At Meta, we use invisible watermarks for a range of content provenance use cases on our platforms.

- Invisible watermarks are useful for a variety of use cases, including detecting videos generated by AI, verifying who originally posted a video, and identifying the sources and tools used to create the video.

- We share how we overcame the challenges of scaling invisible watermarks, including how we built a CPU-based solution that improves operational efficiency while providing GPU-like performance.

Invisible watermarking is a powerful media processing technique that embeds signals in media in a way that is imperceptible to humans but detectable by software. This technology provides a robust solution for content provenance tagging (indicating the origin of content) and enables content identification and tracking to support a variety of use cases. At its core, invisible watermarking works by subtly modifying pixel values in images, waveforms in audio, or text tokens generated by large language models (LLMs) to embed small amounts of data. The watermarking system is designed to add the necessary redundancy, so the embedded identifying information persists through transcoding and editing, unlike metadata tags, which can be lost.
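To make the idea of "subtle pixel changes plus redundancy" concrete, here is a minimal sketch of a classical spread-spectrum watermark on a grayscale image stored as a flat list of pixel values. This is not the ML-based approach described in this post; the function names, the `key` parameter, and the `strength` value are all illustrative assumptions.

```python
import random

def _pattern(key, bit_index, n):
    """Pseudo-random +/-1 pattern for one payload bit, derived from a shared key."""
    rng = random.Random(key * 1000 + bit_index)
    return [rng.choice((-1, 1)) for _ in range(n)]

def embed(pixels, bits, key, strength=2):
    """Spread every payload bit over all pixels as a low-amplitude pattern.

    Spreading each bit across the whole image is the redundancy that lets
    the payload survive mild edits. `strength` is an illustrative value.
    """
    out = list(pixels)
    for i, bit in enumerate(bits):
        sign = 1 if bit else -1
        pat = _pattern(key, i, len(pixels))
        out = [p + sign * strength * s for p, s in zip(out, pat)]
    # Keep values in the valid 8-bit range.
    return [min(255, max(0, p)) for p in out]

def detect(pixels, n_bits, key):
    """Recover each bit from the sign of the correlation with its pattern."""
    mean = sum(pixels) / len(pixels)
    bits = []
    for i in range(n_bits):
        pat = _pattern(key, i, len(pixels))
        corr = sum((p - mean) * s for p, s in zip(pixels, pat))
        bits.append(1 if corr > 0 else 0)
    return bits

# Usage: a synthetic 64x64 image and an 8-bit payload (both made up).
host = [100 + ((x * 3 + y * 5) % 50) for y in range(64) for x in range(64)]
payload = [1, 0, 1, 1, 0, 0, 1, 0]
marked = embed(host, payload, key=42)
recovered = detect(marked, len(payload), key=42)
```

Each pixel changes by at most `strength` per payload bit, while the detector sums evidence over thousands of pixels. That asymmetry is what makes the signal hard to see yet recoverable by software.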

Deploying invisible watermarking solutions into large-scale production environments presents many challenges. In this blog post, we describe how we overcame the challenges of deployment environment, increased bitrate, and decreased visual quality to adapt to a real-world use case.

Useful definitions

Digital watermarking, steganography, and invisible watermarking are related concepts, but it’s important to understand the difference between them.

| Feature | Digital watermarking | Steganography | Invisible watermarking |
| --- | --- | --- | --- |
| Purpose | Content attribution, protection, and provenance | Secret communication | Content attribution, protection, and provenance |
| Visibility | Visible or invisible | Invisible | Invisible |
| Robustness to content modification | Medium to high | Usually low | High (survives edits) |
| Payload/message capacity | Medium (varies) | Varies | Medium (e.g., >64 bits) |
| Computational cost | Low (visible) to high (invisible) | Varies | High (advanced ML models) |

The need for robust content tagging

In today’s digital environment, where content is constantly being shared, remixed, and even generated by AI, important questions arise:

Who first published the video?

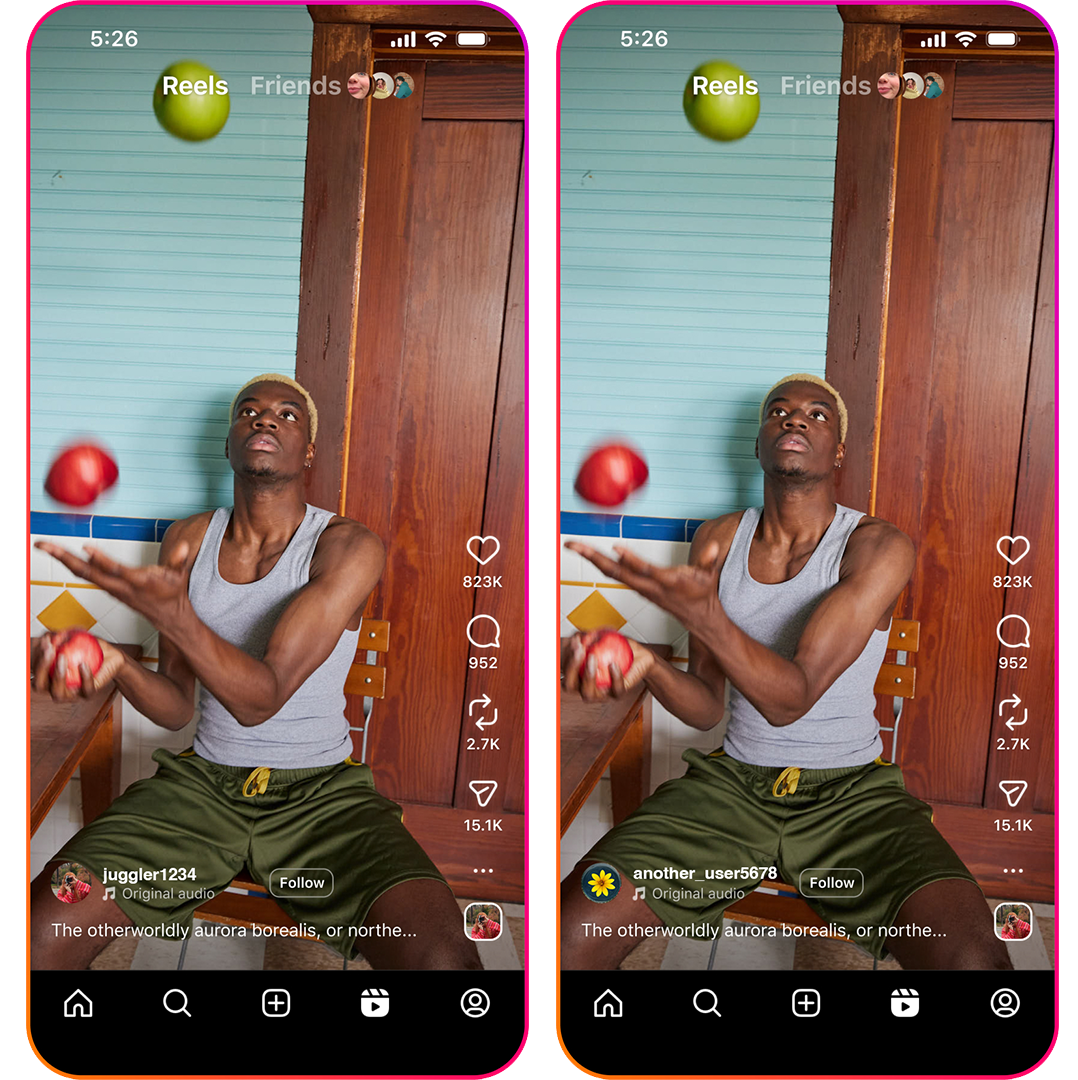

Although two different usernames are visible in Figure 1, nothing in the picture indicates who uploaded it first. An invisible watermark can help identify when a video was first uploaded.

Is it a real image?

As generative AI (GenAI) video becomes more realistic, it becomes increasingly difficult to distinguish real content from AI-generated content. Invisible watermarks can be used to infer whether content like that in Figure 2 was generated by AI.

What kind of camera was used?

When we come across a fascinating image or video like the one in Figure 3, we often wonder about the sources and tools used to create it. Invisible watermarks can convey this information directly.

Traditional methods such as visual watermarks (which can be distracting) and metadata tags (which can be lost when the video is edited or re-encoded) cannot adequately and reliably address these challenges. Invisible watermarks are a good alternative due to their persistence and low perceptibility.

A Scaling Journey: From GPU to CPU

Earlier digital watermarking research (starting in the 1990s) used digital signal processing techniques (such as DCT and DWT) to modify the spectral characteristics of images and hide imperceptible information. Although these methods proved very effective for static images and were considered a “solved problem,” they are not robust enough against the various geometric transformations and filtering found in social media and other real-world applications.

Today’s state-of-the-art solutions (such as Video Seal) use machine learning (ML) techniques to significantly improve robustness to the types of edits seen on social media. However, applying such a solution to video (i.e., frame-by-frame watermark embedding) can be prohibitively expensive computationally without the necessary inference optimizations.

GPUs may seem like the obvious choice for deploying ML-based video watermarking. However, most GPU hardware is specialized for training and inference of large-scale models (such as LLMs and diffusion models), and video transcoding (compression and decompression) is only partially supported or not supported at all. Enabling invisible watermarks on videos therefore poses unique challenges for existing video processing software (FFmpeg) and hardware stacks (GPUs without video transcoding capabilities, or other custom video processing accelerators without efficient ML model inference capabilities).

Attempting GPU optimization and moving to CPU

Our embedding architecture uses FFmpeg and custom filters to compute and apply an invisible watermark mask to the video. Filters act as reusable blocks that can be easily added to existing video processing pipelines. Moving to a more optimal inference service for warmed-up models would mean sacrificing the flexibility of the FFmpeg filter, so that wasn’t an option for our application.

When profiling the invisible watermark filter, we found low GPU usage. We implemented frame batching and threading in our filters, but these efforts did not significantly improve latency or utilization. GPUs with hardware video encoders and decoders can more easily reach high throughput, but the GPUs available in the service do not have video encoders and must send frames back to the CPU for encoding. Here, software video encoders can become a major bottleneck for pipelines that use low-complexity ML models on GPUs.

Specifically, we encountered three main bottlenecks:

- Data transfer overhead: Passing high-resolution input video frames back and forth between the CPU and multiple GPUs created thread and memory optimization challenges, resulting in suboptimal GPU utilization.

- Inference latency: Processing multiple invisible watermark requests in parallel across multiple GPUs on the same host significantly increased inference latency.

- Model loading time: Despite the small size of the model, loading it consumed a significant portion of the total processing time. Because we rely on FFmpeg, we cannot keep a warmed-up, preloaded model on the GPU.

Recognizing these limitations, we began investigating CPU-only inference. The embedder’s neural network architecture is better suited to GPUs, and initial benchmarks showed end-to-end (E2E) processing to be more than twice as slow on the CPU. We made significant improvements by tuning the threading parameters of the encoder, decoder, and PyTorch, and by optimizing the sampling parameters used in the invisible watermark filter.

Ultimately, with properly tuned threading and embedding parameters, the E2E latency of invisible watermarking in a single process on the CPU was within 5% of GPU performance. Importantly, multiple FFmpeg processes can run in parallel on a CPU without increasing latency. This breakthrough allows us to calculate the required capacity and deliver a solution that is more operationally efficient than GPU-based solutions.
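As an illustration of where such threading knobs live, the sketch below builds an FFmpeg command line with explicit thread counts for the decoder, filter graph, and encoder, and prepares an environment that caps OpenMP threads for the inference library. `-threads` and `-filter_threads` are standard FFmpeg options, but the filter name `invisible_watermark`, the choice of `libx264`, and the thread counts are assumptions made for this example, not our production settings.

```python
import os

def build_watermark_command(src, dst, decoder_threads, filter_threads,
                            encoder_threads, filter_name="invisible_watermark"):
    """Return an FFmpeg argv list with explicit per-stage thread counts.

    `filter_name` is a hypothetical custom filter used for illustration.
    """
    return [
        "ffmpeg",
        "-threads", str(decoder_threads),         # before -i: decoder threads
        "-i", src,
        "-filter_threads", str(filter_threads),   # threads for the filter graph
        "-vf", filter_name,
        "-c:v", "libx264",                        # assumed software encoder
        "-threads", str(encoder_threads),         # after -i: encoder threads
        dst,
    ]

def inference_env(inference_threads):
    """Copy the current environment and cap OpenMP threads for inference.

    OMP_NUM_THREADS limits intra-op parallelism in OpenMP-based builds of
    PyTorch; calling torch.set_num_threads() in-process is an alternative.
    """
    env = dict(os.environ)
    env["OMP_NUM_THREADS"] = str(inference_threads)
    return env

# Usage with made-up thread counts; pass both to subprocess.run(cmd, env=env).
cmd = build_watermark_command("in.mp4", "out.mp4", 2, 4, 8)
env = inference_env(4)
```

Keeping the three thread pools explicit makes it possible to sweep them independently and to size each process so that several can share one host without oversubscribing cores.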

We conducted comprehensive load tests to verify the scalability of our CPU solution in a distributed system. Given the pool of CPU workers, we generated test traffic by increasing the request rate to identify the peak performance point before the per-request latency started to rise. For comparison, we used the same parameters for GPU inference on a pool of GPU workers with similar capabilities. The results confirm that our CPU solution can perform at scale comparable to our local test results. This achievement allows us to provision the required capacity with greater operational efficiency compared to GPU-based approaches.
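The load-test procedure above can be sketched as follows: ramp the request rate, record per-request latency, take the last rate before latency rises above a baseline, and size the fleet from that figure. The 10% tolerance, the 25% headroom, and all sample numbers are hypothetical and only illustrate the calculation.

```python
import math

def peak_sustainable_rate(samples, tolerance=0.10):
    """Highest request rate reached before latency starts to rise.

    `samples` is a list of (requests_per_second, latency_seconds) pairs.
    The latency at the lowest rate is used as the baseline; the ramp stops
    at the first sample exceeding baseline * (1 + tolerance).
    """
    ordered = sorted(samples)
    baseline = ordered[0][1]
    peak = ordered[0][0]
    for rate, latency in ordered:
        if latency > baseline * (1 + tolerance):
            break
        peak = rate
    return peak

def workers_needed(expected_rps, peak_rps_per_pool, pool_size, headroom=0.25):
    """Workers required for `expected_rps`, keeping some spare capacity."""
    per_worker = peak_rps_per_pool / pool_size
    return math.ceil(expected_rps * (1 + headroom) / per_worker)

# Usage with made-up measurements from a 10-worker test pool.
samples = [(10, 2.00), (20, 2.05), (30, 2.10), (40, 2.60), (50, 4.00)]
peak = peak_sustainable_rate(samples)
fleet = workers_needed(expected_rps=300, peak_rps_per_pool=peak, pool_size=10)
```

Running the same calculation on CPU and GPU pools with identical parameters gives a like-for-like capacity comparison.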

Optimization considerations and tradeoffs

Deploying invisible watermarks at scale has presented several optimization challenges, primarily related to trade-offs between four metrics:

- Latency: How quickly watermarking completes

- Watermark detection bit accuracy: How accurately the embedded watermark bits are detected

- Visual quality: How imperceptible the embedded watermark is to the human eye

- Compression efficiency (measured as BD rate): How much the embedded watermark increases bitrate

Optimizing for one metric can negatively impact the others. For example, strengthening the watermark to increase bit accuracy can introduce visible artifacts and increase bitrate. No single solution is optimal for all four metrics at once.
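One simple reading of detection bit accuracy is the fraction of payload bits that the detector recovers correctly. The sketch below assumes that definition; the function name and the 64-bit example payload are illustrative.

```python
def bit_accuracy(embedded, detected):
    """Fraction of payload bits recovered correctly (1.0 means a perfect match)."""
    if not embedded or len(embedded) != len(detected):
        raise ValueError("payloads must be non-empty and of equal length")
    matches = sum(1 for a, b in zip(embedded, detected) if a == b)
    return matches / len(embedded)

# Usage: a made-up 64-bit payload where the detector flips two bits.
embedded = [i % 2 for i in range(64)]
detected = list(embedded)
detected[3] ^= 1
detected[40] ^= 1
accuracy = bit_accuracy(embedded, detected)  # 62 of 64 bits match: 0.96875
```

Tracking this number for every embedding configuration makes the tradeoff explicit: a weaker watermark improves visual quality and bitrate but lowers bit accuracy after edits and re-encoding.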

Managing the impact on BD rates

Invisible watermarks, although imperceptible, add entropy to the video, which can lead to higher bitrates from video encoders. Our initial implementation showed a BD rate regression of approximately 20%, meaning that users would need more bandwidth to watch watermarked videos at the same quality. To alleviate this, we devised a new frame selection method for watermark embedding that significantly reduces the BD rate impact while improving visual quality and minimizing the impact on watermark detection bit accuracy.
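For readers unfamiliar with the metric, BD rate (Bjøntegaard delta rate) is the average bitrate difference between two encodes at equal quality. The sketch below is a simplified version: the standard method fits a cubic polynomial to log-bitrate versus quality, whereas this example uses piecewise-linear interpolation to stay short. The function names and the sample rate-quality points are assumptions for illustration.

```python
import math

def _interp(x, xs, ys):
    """Piecewise-linear interpolation over strictly increasing xs."""
    for i in range(len(xs) - 1):
        if xs[i] <= x <= xs[i + 1]:
            t = (x - xs[i]) / (xs[i + 1] - xs[i])
            return ys[i] + t * (ys[i + 1] - ys[i])
    raise ValueError("x is outside the curve range")

def bd_rate(reference, test, steps=200):
    """Average bitrate change of `test` vs `reference` at equal quality.

    Both arguments are lists of (bitrate, quality) points with distinct
    quality values. Returns a fraction, e.g. 0.2 for a 20% increase.
    """
    def curve(points):
        pts = sorted(points, key=lambda p: p[1])
        return [q for _, q in pts], [math.log10(r) for r, _ in pts]

    q_ref, log_r_ref = curve(reference)
    q_test, log_r_test = curve(test)
    lo = max(q_ref[0], q_test[0])    # overlapping quality interval
    hi = min(q_ref[-1], q_test[-1])
    if hi <= lo:
        raise ValueError("curves do not overlap in quality")
    total = 0.0
    for i in range(steps + 1):
        q = min(hi, lo + (hi - lo) * i / steps)
        total += _interp(q, q_test, log_r_test) - _interp(q, q_ref, log_r_ref)
    mean_log_diff = total / (steps + 1)
    return 10 ** mean_log_diff - 1

# Usage: made-up points where the watermarked encode needs 1.2x the bitrate.
baseline = [(1000, 30.0), (2000, 35.0), (4000, 40.0), (8000, 45.0)]
watermarked = [(1.2 * r, q) for r, q in baseline]
regression = bd_rate(baseline, watermarked)
```

Because the difference is averaged in the log-bitrate domain, a uniform 1.2x bitrate increase at every quality level yields a BD rate of about 20%, the same order as the regression described above.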

Dealing with poor visual quality

We need to ensure that the “invisible” watermark truly remains invisible. Initially, we observed significant visual artifacts despite high scores on quality metrics (VMAF and SSIM).

We addressed visual quality by implementing custom post-processing techniques and iterating over different embedding settings, with crowdsourced manual inspection evaluating each one. This subjective assessment was critical to unblocking our progress, as traditional visual quality metrics proved insufficient to detect the types of artifacts caused by invisible watermarks. While tuning the algorithm to be invisible to the human eye, we carefully monitored the impact on bit accuracy to achieve the best balance between visual quality and detection accuracy.

What we learned and the way forward

Our efforts to implement a scalable and invisible watermarking solution have provided us with valuable insights.

When properly optimized, CPU-only pipelines can achieve performance comparable to GPU pipelines at a much lower cost in certain use cases. Contrary to our initial expectations, a properly optimized CPU pipeline provided a more operationally efficient and scalable solution for our invisible watermarking system. Although GPUs are still faster for invisible watermark model inference, optimizations allowed us to lower the overall compute and latency of our CPU fleet.

Traditional video quality scores are insufficient for invisible watermarking. We found that metrics such as VMAF and SSIM cannot fully capture the perceptual quality issues caused by invisible watermarks and require manual inspection. Further research is needed to develop metrics to programmatically detect visual quality degradation caused by invisible watermarks.

Quality standards for production use are high. Watermarking techniques from the literature affect BD rate and downstream video compression, so they may not be directly applicable to real-world use cases. To maintain good detection bit accuracy while keeping the impact on BD rate low, we had to go beyond what is described in the literature.

We have successfully shipped a scalable watermarking solution with strong latency, visual quality, and detection bit accuracy, and with minimal impact on BD rate.

Our north-star goal is to continue improving accuracy and copy-detection recall with invisible watermark detection. This includes further tuning of model parameters, pre- and post-processing steps, and video encoder settings. Ultimately, we envision invisible watermarks as lightweight “filter blocks” that can be seamlessly integrated into a wide range of video use cases without product-specific adjustments, minimizing impact on user experience while providing robust content provenance.