Data source and preprocessing

This study used data from the Canada Vigilance Adverse Reaction (CVAR) online database, part of MedEffect Canada13. The program is designed to manage data on health products and their adverse reactions, primarily for the benefit of consumers. Health Canada maintains the database, which contains reports of suspected ADRs, including voluntary reports from consumers and health professionals and mandatory reports from manufacturers and distributors. The data covers the period from 1965 through April 30, 2024. The CVAR database contains general information, prescribed medications and dosages, and adverse effects for approximately 1.1 million individual reports. The raw data was extracted in ASCII format as 13 files forming a relational database.

Data preprocessing is an essential step for effective ML and is particularly important when working with complex, high-dimensional healthcare datasets. Because the ML models we investigated required numerical inputs, several preprocessing and transformation steps were needed before model training and evaluation. These included automated validation, standardization, and quality-control measures to ensure the consistency and reliability of the reports.

Extracting the CVAR database produced multiple files covering different aspects of the reports (such as general information, prescription details, and adverse effect status). These were merged with Python scripts using a common identifier, the “Adverse Reaction Report (AER) Number”. The CVAR database contains columns in both English and French; the French-language columns were removed. All categorical variables were one-hot encoded into binary format, while continuous variables were left unchanged. Features with more than 50% missing values were dropped. Once the data was prepared, the ML workflow was applied as shown in Fig. 2.
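The merge, language filtering, missing-value filter, and one-hot encoding described above could look roughly like the following pandas sketch. The key column `AER_NO`, the `_FR` suffix for French columns, and the order of operations are assumptions for illustration, not the actual CVAR schema or the authors' scripts. Here the missing-value filter is applied to raw columns before encoding, `pd.get_dummies` stands in for the scikit-learn encoder mentioned in the text, and one-to-many tables (for example, several reactions per report) are collapsed to one row per report with `max()`.

```python
import pandas as pd


def prepare_reports(tables, key="AER_NO", max_missing=0.5):
    """Merge CVAR-style tables into one row per report.

    The key name and the "_FR" suffix are hypothetical placeholders.
    """
    merged, dummy_cols = None, []
    for table in tables:
        # Drop French-language columns (assumed to end in "_FR").
        table = table.loc[:, ~table.columns.str.endswith("_FR")]
        # Drop features with more than `max_missing` missing values.
        table = table.loc[:, table.isna().mean() <= max_missing]
        # One-hot encode categorical columns; numeric columns are unchanged.
        categorical = [c for c in table.select_dtypes(include=["object", "category"]).columns
                       if c != key]
        if categorical:
            encoded = pd.get_dummies(table, columns=categorical, dtype=int)
            dummy_cols += [c for c in encoded.columns if c not in table.columns]
        else:
            encoded = table
        # Collapse to one row per report; max() keeps a 1 if any row had the category.
        encoded = encoded.groupby(key, as_index=False).max()
        merged = encoded if merged is None else merged.merge(encoded, on=key, how="left")
    # Reports absent from a child table get 0 (not NaN) for that table's dummies.
    merged[dummy_cols] = merged[dummy_cols].fillna(0).astype(int)
    return merged


reports = pd.DataFrame({
    "AER_NO": [1, 2, 3],
    "SEX_ENG": ["F", "M", "F"],
    "SEX_FR": ["F", "H", "F"],
    "AGE_Y": [34.0, None, 51.0],
    "NOTES": [None, None, "x"],
})
reactions = pd.DataFrame({
    "AER_NO": [1, 1, 3],
    "PT_NAME_ENG": ["Agranulocytosis", "Fever", "Fever"],
})
out = prepare_reports([reports, reactions])
```

Applying `max()` to continuous columns of a one-to-many table (such as dose) keeps the largest value per report, which is only one of several reasonable aggregation choices.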

Data Processing and Modeling Pipeline for Prediction of Clozapine ADRs.

Study population

The database was filtered with an SQL query to include only records in which the active drug ingredient was clozapine. Clozapine is marketed under many brand names, such as Clozaril®, Leponex®, and Versacloz®. A total of 9935 ADR reports pertaining to clozapine were extracted from the CVAR database. Among these reports, 337 instances of agranulocytosis were identified, corresponding to an occurrence rate of 3.39%. To prepare the data for analysis, one-hot encoding was applied to the drug and adverse reaction columns using the scikit-learn Python library, converting categorical variables into binary values. Body Mass Index (BMI) was calculated as weight in kilograms divided by the square of height in meters, yielding a single feature that combines height and weight. Features were then scaled with scikit-learn so that they shared a comparable range and initially carried equal weight in subsequent analyses. The completed dataset comprised 9395 records and 387 feature variables, restricted to clozapine-prescribed records in a structured, tabular format.
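A minimal sketch of the clozapine filter and the BMI feature, using an in-memory SQLite database with a hypothetical two-table schema (`reports`, `ingredients`) and toy values. The choice of `StandardScaler` is an assumption, since the text does not name the scikit-learn scaler that was used.

```python
import sqlite3

import numpy as np
from sklearn.preprocessing import StandardScaler

# Hypothetical schema: one row per report, one row per (report, ingredient).
conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE reports (aer_no INTEGER PRIMARY KEY, weight_kg REAL, height_m REAL);
    CREATE TABLE ingredients (aer_no INTEGER, ingredient TEXT);
    INSERT INTO reports VALUES (1, 70.0, 1.75), (2, 90.0, 1.80), (3, 55.0, 1.60);
    INSERT INTO ingredients VALUES (1, 'CLOZAPINE'), (2, 'OLANZAPINE'),
                                   (3, 'CLOZAPINE'), (3, 'LITHIUM');
""")

# Keep only reports that list clozapine as an active ingredient.
rows = conn.execute("""
    SELECT r.aer_no, r.weight_kg, r.height_m
    FROM reports AS r
    WHERE r.aer_no IN (SELECT aer_no FROM ingredients
                       WHERE UPPER(ingredient) = 'CLOZAPINE')
    ORDER BY r.aer_no
""").fetchall()

weight = np.array([r[1] for r in rows])
height = np.array([r[2] for r in rows])
bmi = weight / height ** 2  # BMI = weight (kg) / height (m)^2

# Scale each feature to zero mean and unit variance (assumed scaler choice).
features = np.column_stack([weight, height, bmi])
scaled = StandardScaler().fit_transform(features)
```

In a leakage-free pipeline, the scaler would normally be fit on the training split only and then applied to the test split.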

Outcome definition

The target outcome of the study was agranulocytosis. In the preprocessed data, agranulocytosis was represented as a binary variable, where 0 indicated non-occurrence (negative case) and 1 indicated occurrence (positive case).
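One way to derive the binary target from a long-format reaction table is sketched below with pandas. The table layout, column names, and exact term match on "agranulocytosis" are assumptions; real CVAR reaction terms follow MedDRA coding, and related terms such as neutropenia are deliberately not counted in this sketch.

```python
import pandas as pd

# Hypothetical long-format table: one row per (report, reaction term).
reactions = pd.DataFrame({
    "aer_no": [101, 101, 102, 103, 103],
    "reaction": ["Agranulocytosis", "Pyrexia", "Sedation", "Neutropenia", "agranulocytosis"],
})

# 1 if any reaction on the report is agranulocytosis (case-insensitive), else 0.
is_agran = reactions["reaction"].str.strip().str.lower().eq("agranulocytosis")
target = is_agran.groupby(reactions["aer_no"]).any().astype(int).rename("agranulocytosis")
```

The agranulocytosis indicator itself would then be kept out of the feature matrix so the model cannot read the label directly.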

Of all ADRs reported with clozapine, 99.29% listed clozapine as a ‘suspect’ drug, while a minority (0.71%) listed it as concomitant. A ‘suspect’ designation indicates that the reporter considered clozapine the primary cause of the ADR, whereas ‘concomitant’ suggests the presence of alternative contributing factors.

Predictors and feature selection

At baseline, the data contained 9395 individual reports, each described by 387 feature variables. All 387 variables were included in model training, covering general report information (sex, age, height, weight), clozapine dosage, co-occurring ADRs, outcomes, and seriousness.

ML Methodologies

Data split

The preprocessed data was partitioned using a stratified random split (80% training, 20% testing) that maintained the original class distribution (Fig. 2). Within the training set, fivefold stratified cross-validation with nested optimization was used to mitigate overfitting. Stratification ensures that each fold has the same class proportions as the entire dataset, which is particularly important for imbalanced data because it keeps the class distribution consistent across folds. The test set was held out until final model evaluation to prevent data leakage and provide an unbiased performance estimate. The random seed was set to 42 for reproducibility.
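The split and nested cross-validation can be sketched with scikit-learn as follows. The synthetic data from `make_classification`, the logistic regression model, the hyperparameter grid, and the inner and outer scoring choices are placeholders; only the 80/20 stratified split, five stratified folds, and seed 42 come from the text.

```python
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import (GridSearchCV, StratifiedKFold,
                                     cross_val_score, train_test_split)

SEED = 42  # seed stated in the text

# Synthetic stand-in (about 10% positives); not the CVAR data.
X, y = make_classification(n_samples=1000, n_features=20, weights=[0.9],
                           random_state=SEED)

# 80/20 stratified hold-out split; the test set is left untouched until the end.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, stratify=y, random_state=SEED)

# Nested CV: inner folds tune hyperparameters, outer folds estimate the
# performance of the whole tuning procedure.
inner = StratifiedKFold(n_splits=5, shuffle=True, random_state=SEED)
outer = StratifiedKFold(n_splits=5, shuffle=True, random_state=SEED)
search = GridSearchCV(LogisticRegression(max_iter=1000),
                      param_grid={"C": [0.1, 1.0, 10.0]},
                      scoring="average_precision", cv=inner)
nested_recall = cross_val_score(search, X_train, y_train, scoring="recall", cv=outer)

# Final tuning on the full training set, evaluated once on the held-out test set
# (score() uses the search's scoring, here average precision).
search.fit(X_train, y_train)
test_ap = search.score(X_test, y_test)
```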

Resampling techniques

Agranulocytosis classification in the CVAR database involved highly imbalanced classes: 9058 agranulocytosis-negative versus 337 agranulocytosis-positive cases. Under severe class imbalance, common evaluation metrics such as accuracy and the area under the receiver operating characteristic curve (AUC-ROC) can be inflated, giving an overly optimistic picture of performance. Before turning to alternative evaluation metrics, we first addressed the class imbalance through resampling, which aims to sharpen the decision boundary around the minority class and thereby strengthen the model’s predictive capability. In a clinical context, maximizing true positives at the cost of additional false positives is favoured because missing a diagnosis carries serious consequences14.
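A quick check with the reported class counts shows why accuracy is misleading here: a trivial model that labels every report negative scores high accuracy while detecting no cases. The all-negative predictor is a hypothetical baseline, not one of the study's models.

```python
import numpy as np
from sklearn.metrics import accuracy_score, recall_score

# Class counts reported for the clozapine subset.
n_neg, n_pos = 9058, 337
y_true = np.array([0] * n_neg + [1] * n_pos)

# A trivial "classifier" that labels every report agranulocytosis-negative.
y_pred = np.zeros_like(y_true)

accuracy = accuracy_score(y_true, y_pred)  # 9058 / 9395 ≈ 0.964
recall = recall_score(y_true, y_pred)      # 0.0: every positive case is missed
```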

Increasing a classifier's sensitivity to the minority class is a recognized challenge in detecting rare diseases. Because the minority class has a low incidence in the dataset, traditional ML algorithms tend to be biased towards the majority class and miss crucial minority-class positives.

Two resampling approaches were considered: under-sampling the majority (agranulocytosis-negative) class and synthetically over-sampling the minority (agranulocytosis-positive) class. Specifically, we used random under-sampling and the Synthetic Minority Over-sampling Technique (SMOTE). Although combined methods are available, we evaluated each technique separately to isolate its individual impact on model performance.

First, models were trained and tested on the original data split, which consisted of 9058 agranulocytosis-negative and 337 agranulocytosis-positive cases, to establish baseline performance. Given the stark class imbalance and the importance of positive-class predictions, evaluating the models on accuracy or AUC-ROC would misrepresent their true performance.

Random under-sampling reduces the number of majority-class entries by randomly selecting a subset of them, sized according to a specified ratio relative to the minority class. We applied under-sampling at a 2:1 ratio, resulting in 674 agranulocytosis-negative and 337 agranulocytosis-positive cases.
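A minimal NumPy sketch of 2:1 random under-sampling that reproduces the 674:337 counts. The function name and seed handling are illustrative; the text does not say which implementation was used.

```python
import numpy as np


def random_undersample(X, y, ratio=2.0, seed=42):
    """Keep every positive and `ratio` times as many randomly chosen negatives."""
    rng = np.random.default_rng(seed)
    pos = np.flatnonzero(y == 1)
    neg = np.flatnonzero(y == 0)
    n_keep = min(len(neg), int(round(ratio * len(pos))))
    keep_neg = rng.choice(neg, size=n_keep, replace=False)  # sample without replacement
    idx = np.sort(np.concatenate([pos, keep_neg]))
    return X[idx], y[idx]


# Usage with the reported class counts (features are placeholders).
y = np.array([0] * 9058 + [1] * 337)
X = np.zeros((len(y), 3))
X_res, y_res = random_undersample(X, y)
```

In imbalanced-learn, `RandomUnderSampler(sampling_strategy=0.5)` targets the same minority-to-majority ratio of 1:2.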

SMOTE, first introduced in 2002, creates synthetic instances of the minority class15, which in our case was the agranulocytosis-positive class. For a given minority-class example, SMOTE selects one of its k nearest minority-class neighbours in feature space and generates a synthetic sample at a random point on the line segment between the two; this is repeated until the specified class ratio is reached. Reducing the class imbalance in this way presents ML models with more minority-class examples and is intended to increase the classifier's sensitivity. Default SMOTE parameters were used (k = 5 nearest neighbours and sampling strategy = auto, which over-samples the minority class to match the size of the majority class). SMOTE was applied only to the training data within each cross-validation fold, ensuring that no synthetic samples leaked into the test sets.
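The interpolation at the heart of SMOTE can be sketched in NumPy as below. This is a simplified illustration, not the imbalanced-learn implementation the study may have used. The `smote` function is hypothetical, computes all pairwise distances (acceptable for a few hundred minority samples), and draws `n_new` synthetic points so that, as with `sampling_strategy="auto"`, the minority class can be brought up to the size of the majority class.

```python
import numpy as np


def smote(X_min, n_new, k=5, seed=42):
    """Generate n_new synthetic minority samples by SMOTE-style interpolation."""
    rng = np.random.default_rng(seed)
    k = min(k, len(X_min) - 1)
    # Pairwise Euclidean distances among minority samples only.
    d = np.linalg.norm(X_min[:, None, :] - X_min[None, :, :], axis=-1)
    np.fill_diagonal(d, np.inf)                 # a point is not its own neighbour
    neighbours = np.argsort(d, axis=1)[:, :k]   # k nearest minority neighbours
    base = rng.integers(0, len(X_min), size=n_new)
    nbr = neighbours[base, rng.integers(0, k, size=n_new)]
    gap = rng.random((n_new, 1))                # uniform position along the segment
    return X_min[base] + gap * (X_min[nbr] - X_min[base])


# Toy data: balance 20 minority points against 200 majority points.
rng = np.random.default_rng(0)
X_maj = rng.normal(0.0, 1.0, size=(200, 2))
X_min = rng.normal(3.0, 0.5, size=(20, 2))
synthetic = smote(X_min, n_new=len(X_maj) - len(X_min))
```

In practice, imbalanced-learn's `SMOTE(k_neighbors=5)` placed inside an `imblearn.pipeline.Pipeline` ahead of the classifier ensures that resampling is performed only on the training portion of each cross-validation fold.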

Model development

Multiple algorithms were integrated into our ML pipeline to predict agranulocytosis in individuals treated with clozapine (Table 1). Keeping the processed data, feature variables, and target variable consistent across experiments, we trialed different combinations of algorithms and resampling techniques to identify the best combinations for agranulocytosis prediction, an approach that could be applied to other diseases. All models were implemented using the scikit-learn (version 1.5) and XGBoost (version 2.1.1) libraries16,17.
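A sketch of comparing several classifiers under the same cross-validation scheme and the three metrics used in this study. The three models shown are hypothetical stand-ins for the algorithms in Table 1, and the data are synthetic. Resampling could be added by wrapping each model in an imbalanced-learn pipeline, and XGBoost's `XGBClassifier` could be added to the dictionary in the same way.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import GradientBoostingClassifier, RandomForestClassifier
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import make_scorer, matthews_corrcoef
from sklearn.model_selection import StratifiedKFold, cross_validate

SEED = 42
X, y = make_classification(n_samples=1000, n_features=20, weights=[0.9],
                           random_state=SEED)

models = {
    "logistic_regression": LogisticRegression(max_iter=1000),
    "random_forest": RandomForestClassifier(n_estimators=100, random_state=SEED),
    "gradient_boosting": GradientBoostingClassifier(random_state=SEED),
}
# Recall, AUC-PR (as average precision), and MCC.
scoring = {
    "recall": "recall",
    "auc_pr": "average_precision",
    "mcc": make_scorer(matthews_corrcoef),
}
cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=SEED)

results = {}
for name, model in models.items():
    scores = cross_validate(model, X, y, scoring=scoring, cv=cv)
    # Mean of each metric across the five folds.
    results[name] = {m: scores[f"test_{m}"].mean() for m in scoring}
```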

Evaluation metrics

Traditional ML evaluation metrics such as accuracy and AUC-ROC can be misleading when applied to severely imbalanced datasets, often yielding artificially high values that do not reflect true performance, especially in rare-condition prediction tasks. We propose using three evaluation metrics for imbalanced classification: recall (also known as true positive rate or sensitivity), the area under the precision-recall curve (AUC-PR), and the Matthews Correlation Coefficient (MCC). Evaluation metrics should be chosen with the application context in mind. In this study, recall measures the proportion of agranulocytosis-positive cases that are correctly identified, which is paramount given the serious nature of agranulocytosis.

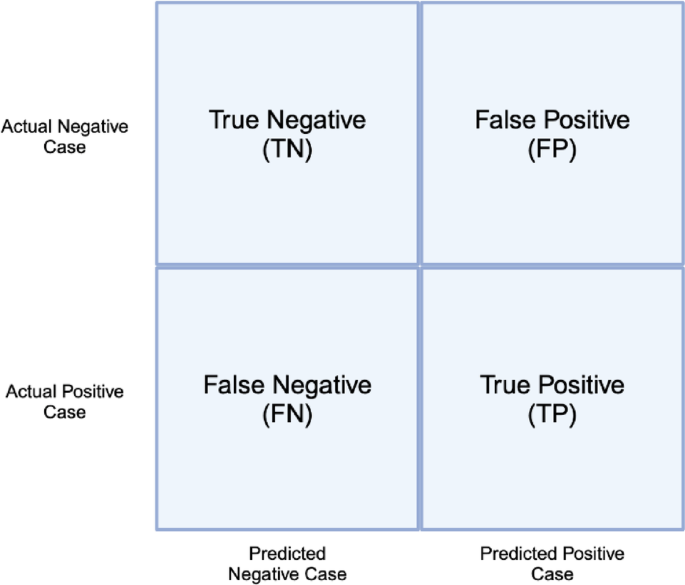

AUC-PR and MCC were chosen because they remain informative under severe class imbalance: AUC-PR summarizes performance on the positive class, while MCC reflects performance in all four quadrants of the 2 × 2 confusion matrix. An example of a confusion matrix and its quadrants is shown in Fig. 3.

Confusion matrix for binary classification.

Recall, also known as sensitivity or true positive rate (TPR), measures the proportion of actual positive cases that were correctly identified. High recall is critical in clinical contexts because of the high cost of missed diagnoses.

$$\text{Recall} = \frac{TP}{TP + FN} \in [0,1]$$

AUC-PR is similar to AUC-ROC in that the metric is the area under a curve; the difference lies in the curves themselves. The receiver operating characteristic (ROC) curve plots the true positive rate as a function of the false positive rate; an AUC-ROC of 0.5 corresponds to random guessing and 1.0 to perfect classification. The extremely imbalanced datasets common in healthcare research can produce inflated AUC-ROC scores that misrepresent a classifier's effectiveness. AUC-PR, by contrast, is the area under the curve of precision plotted as a function of recall, with 1.0 representing perfect classification. This curve shows the trade-off between precision (the fraction of positive predictions that are correct) and recall (the fraction of actual positive cases that are detected). Its baseline, the score expected from a random classifier, equals the proportion of positive cases in the dataset, so it is 0.5 only when the classes are balanced. In our case, the baseline is 337/9395 = 0.0359, or 3.59%.
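The following sketch illustrates the AUC-PR baseline with the study's class counts: scores that carry no information about the label yield an average precision close to the positive-class proportion rather than 0.5. Note that scikit-learn's `average_precision_score` uses a step-wise summation, whereas `auc` applied to the precision-recall curve uses the trapezoidal rule; the text does not state which AUC-PR estimator was used.

```python
import numpy as np
from sklearn.metrics import auc, average_precision_score, precision_recall_curve

rng = np.random.default_rng(42)
y_true = np.array([0] * 9058 + [1] * 337)
baseline = y_true.mean()  # 337 / 9395 ≈ 0.0359

# Scores that carry no information about the label (a "random classifier").
random_scores = rng.random(len(y_true))
ap_random = average_precision_score(y_true, random_scores)

# Trapezoidal area under the precision-recall curve, for comparison.
precision, recall, _ = precision_recall_curve(y_true, random_scores)
auc_pr_trapz = auc(recall, precision)
```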

MCC has been proposed as a better alternative to traditional evaluation metrics (accuracy, AUC-ROC) for evaluating models trained on imbalanced data. First introduced in 1975, MCC ranges from -1.0 to 1.0 and returns a high score (Table 2) only when the classifier performs well in all four quadrants of the confusion matrix (Fig. 3)18. The formula for MCC is given below:

$$\text{MCC}=\frac{TP \cdot TN - FP \cdot FN}{\sqrt{(TP+FP)(TP+FN)(TN+FP)(TN+FN)}}\in [-1.0, 1.0]$$
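Recall and MCC can be computed directly from the four confusion-matrix counts and checked against scikit-learn. The labels below are a hypothetical toy example.

```python
import math

from sklearn.metrics import confusion_matrix, matthews_corrcoef, recall_score

# Toy labels and predictions (hypothetical, for illustration only).
y_true = [1, 1, 1, 0, 0, 0, 0, 0, 0, 0]
y_pred = [1, 1, 0, 1, 0, 0, 0, 0, 0, 0]

# For binary labels {0, 1}, ravel() yields TN, FP, FN, TP in that order.
tn, fp, fn, tp = confusion_matrix(y_true, y_pred).ravel()

recall = tp / (tp + fn)  # 2 / 3
mcc = (tp * tn - fp * fn) / math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))  # 11/21 ≈ 0.524
```

If any of the four sums in the MCC denominator is zero, the formula is undefined; scikit-learn's `matthews_corrcoef` returns 0.0 in that case.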

In summary, because of the imbalanced nature of our data, we chose to evaluate model performance using recall, AUC-PR, and MCC. Together, these metrics capture performance across the confusion matrix, with recall representing the proportion of positive cases predicted correctly, which is especially valuable for identifying rare events such as drug-induced agranulocytosis.

Shapley feature values

Multiple models were compared to identify the best model by performance metrics while maximizing explainability through SHapley Additive exPlanations (SHAP) values. First introduced by Lundberg and Lee, SHAP offers a game-theoretic explanation of ML model outputs19. Because the variables of interest are the predictors of agranulocytosis, we used this feature importance measure to describe the variables underlying the prediction of agranulocytosis-positive cases across all models. SHAP is model-agnostic, meaning that it can be applied to any model regardless of its architecture. SHAP values quantify how much each variable contributes to a prediction. In our study, they were calculated for the Gradient Boosting (GB) model with SMOTE sampling, using the 80% training split and the 20% test split.

SHAP decision plots show how each feature contributes, step by step, to an individual prediction. Decision plots were created using the SHAP Python package, with the x-axis representing the model output and the y-axis listing the features.
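A SHAP decision plot draws each prediction as a line that starts at the explainer's base value and is shifted by one feature's SHAP value at a time, ending at the model output. The hypothetical helper below computes that path for a single prediction from made-up SHAP values, ordering features from least to most important (the plot's default places the most important feature at the top).

```python
import numpy as np


def decision_path(base_value, contributions, feature_names):
    """Cumulative model output as features are added, least to most important."""
    order = np.argsort(np.abs(contributions))       # least important first
    steps = base_value + np.cumsum(contributions[order])
    return [feature_names[i] for i in order], steps


# Made-up SHAP values for one prediction (log-odds units, hypothetical features).
names = ["age", "bmi", "dose_mg", "sex_F"]
phi = np.array([0.10, -0.40, 1.20, 0.05])
ordered, path = decision_path(-3.0, phi, names)
# The last point equals base value + sum of SHAP values, i.e. the model output.
```

With the SHAP package itself, `shap.decision_plot(explainer.expected_value, shap_values, feature_names=...)` draws these paths for many predictions at once.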