High-end graphics processors running in modern data centers look efficient on paper. Top-of-the-line products may require as little as 700 watts to run large language models. But in the real world, the same chip can require nearly 1,700 watts of power as electricity passes through the conversion, resistive, and thermal layers.

This hidden inefficiency has become one of the less talked-about crises of artificial intelligence. As data centers expand to accommodate larger and larger models, the energy wasted in delivering power to the chip could rival the energy used in the computation itself.

Peng Zou thinks that's ridiculous. And he believes there is a solution.

At the startup PowerLattice, Zou and his team have built something small enough to hide under a processor package, but ambitious enough to promise a dramatic rethinking of how AI hardware consumes power. Their idea is based on the simple principle of moving power conversion as close to the processor as physics allows.

AI’s invisible energy tax

Modern data centers run on alternating current from the electrical grid. AI chips, however, can only handle low-voltage direct current, around 1 volt. Moving from one to the other requires multiple conversion steps.

And each step involves sacrifice.

When the voltage drops, the current must rise to deliver the same power. This large current must pass through the circuit board and interconnects. And where current flows, heat is generated. Energy losses are proportional to the square of the current, so small inefficiencies can quickly add up.
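The arithmetic behind that squared relationship can be sketched in a few lines of Python. The 700-watt load and 1-volt rail come from the figures above; the 48-volt upstream bus, the 0.2-milliohm path resistance, and the tenfold shorter path are illustrative assumptions, not measurements from PowerLattice or any real board.

```python
def conduction_loss(power_w, voltage_v, resistance_ohm):
    """Return (current_a, loss_w) for delivering power_w at voltage_v
    through a path with the given resistance."""
    current_a = power_w / voltage_v           # I = P / V
    loss_w = current_a ** 2 * resistance_ohm  # P_loss = I^2 * R
    return current_a, loss_w


LOAD_W = 700.0                # chip power quoted in the article
PATH_RESISTANCE_OHM = 0.0002  # assumed 0.2 milliohm path, for illustration only

# Same load and same path, delivered at an assumed 48 V bus versus the ~1 V rail.
i_bus, loss_bus = conduction_loss(LOAD_W, 48.0, PATH_RESISTANCE_OHM)
i_core, loss_core = conduction_loss(LOAD_W, 1.0, PATH_RESISTANCE_OHM)

# Resistance scales with path length, so a path one-tenth as long
# (an assumed ratio) loses one-tenth as much at the same current.
_, loss_core_short = conduction_loss(LOAD_W, 1.0, PATH_RESISTANCE_OHM / 10)

print(f"48 V bus: {i_bus:.1f} A, {loss_bus:.3f} W lost")
print(f"1 V rail: {i_core:.1f} A, {loss_core:.1f} W lost")
print(f"1 V rail, 10x shorter path: {loss_core_short:.1f} W lost")
```

Under these assumptions the 1-volt rail carries 700 amps and dissipates about 98 watts in the path, while the same power at 48 volts loses a few hundredths of a watt. The ratio between the two is the square of the voltage ratio, which is why the last low-voltage stretch matters so much.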

“This exchange occurs close to the processor, but even in low-voltage conditions the current can travel a significant distance,” explains Hanh-Phuc Le, a power electronics researcher at the University of California, San Diego. He is not affiliated with PowerLattice. “The closer you get to the processor, the less distance large currents have to travel, which reduces power loss,” he says.

For years, engineers have been chipping away at this problem. But the advent of AI has put a new and uncomfortable spotlight on it.

“This has almost become a critical issue today,” Zou told IEEE Spectrum, pointing to the explosive growth in power demand in data centers.

Power conversion at millimeter scale

Traditional systems perform the final voltage drop several centimeters away from the processor. PowerLattice reduces that distance down to millimeters.

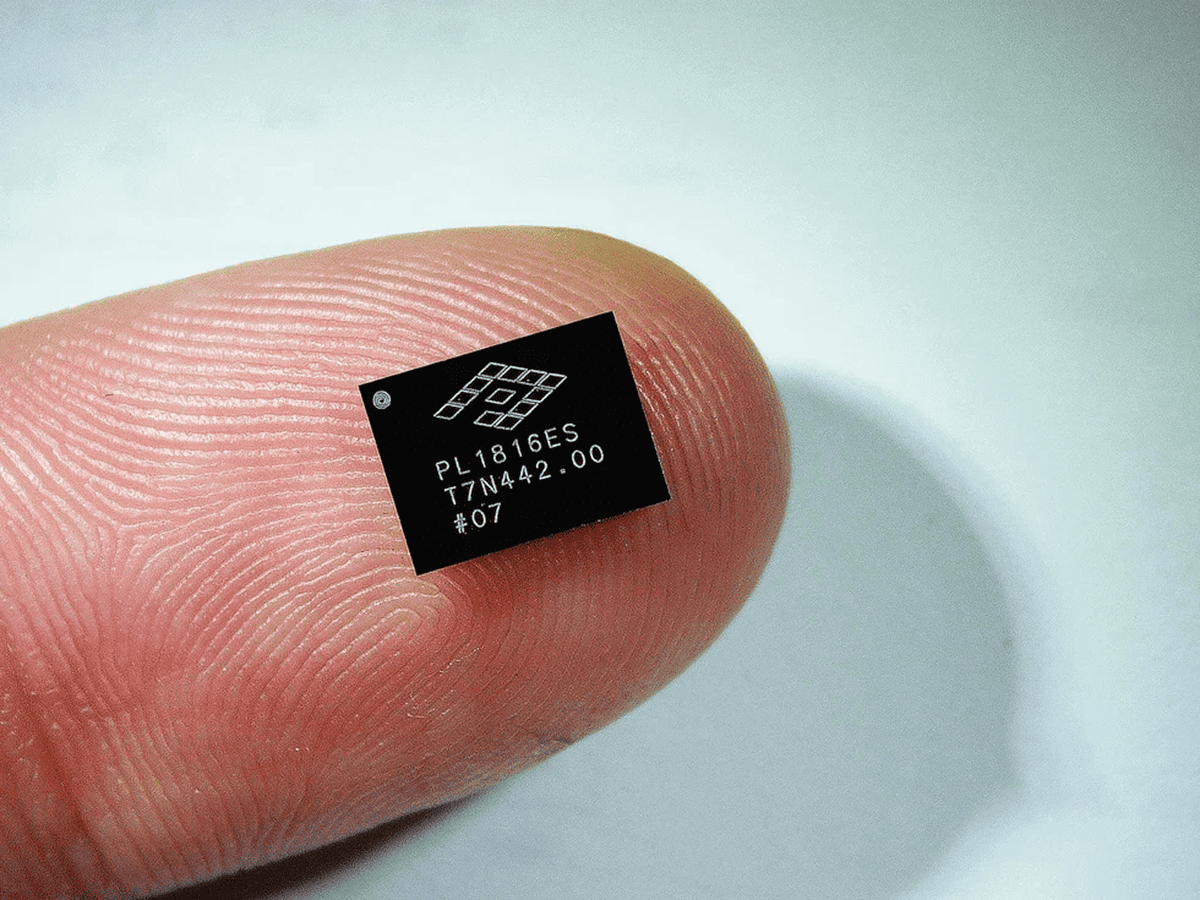

The company has designed a small power delivery chiplet that is placed under the processor package itself. It converts power at the last possible moment, minimizing the distance over which high currents flow.

Each chiplet contains an inductor, voltage control circuitry, and software-programmable logic. The entire device is about twice the size of a pencil eraser and only 100 micrometers thick, or about the width of a human hair.

This extreme thinness allows the chiplet to sit below the processor without taking space from other critical components. When it comes to data center hardware, real estate is everything.

Zou argues that this proximity fundamentally changes the calculation of power losses. Shorter distance means less heat. Less heat means less energy wasted.

However, the miniaturization of power electronics comes with its own set of challenges.

The inductor problem

Inductors are the unsung heroes of voltage regulation. They store energy for a short period of time and then release it smoothly to stabilize the power supply.

They are also stubbornly physical.

Engineers have long known that the physical size of an inductor directly affects the amount of energy it can manage. Making it smaller usually results in worse performance.

PowerLattice used materials science to address this constraint. Zou said the team built the inductor from a special magnetic alloy, which “allows us to operate the inductor very efficiently at high frequencies.” He added, “We can now operate at frequencies 100 times higher than traditional solutions.”

At higher frequencies, the circuit can rely on inductors with much lower inductance. Lower inductance means less material, and less material means smaller components.
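A rough sketch of why frequency and inductance trade off this way, using the textbook relation for an ideal buck (step-down) converter in continuous conduction. The article does not say which converter topology PowerLattice uses, so the buck model, the 12-volt input, the 10-amp ripple target, and the 1-megahertz baseline are all assumptions chosen for illustration.

```python
def buck_inductance(v_in, v_out, freq_hz, ripple_a):
    """Inductance (henries) an ideal buck converter needs to hold the
    peak-to-peak inductor ripple current at ripple_a.

    Derived from ripple = v_out * (1 - v_out / v_in) / (freq_hz * L).
    """
    duty = v_out / v_in
    return v_out * (1.0 - duty) / (freq_hz * ripple_a)


V_IN = 12.0         # assumed input voltage
V_OUT = 1.0         # roughly the processor voltage cited in the article
RIPPLE_A = 10.0     # assumed allowable ripple current
BASE_FREQ_HZ = 1e6  # assumed baseline switching frequency

l_base = buck_inductance(V_IN, V_OUT, BASE_FREQ_HZ, RIPPLE_A)
l_fast = buck_inductance(V_IN, V_OUT, 100 * BASE_FREQ_HZ, RIPPLE_A)

print(f"baseline frequency: {l_base * 1e9:.2f} nH")
print(f"100x frequency:     {l_fast * 1e9:.2f} nH")
```

In this idealized model the required inductance is inversely proportional to switching frequency, so a hundredfold increase in frequency cuts the inductance needed by the same factor. Real designs also have to account for switching and core losses, which this sketch ignores.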

Many magnetic materials lose their desirable properties when subjected to such extreme conditions. PowerLattice's alloy maintains its performance, allowing the entire regulator to be scaled down without that performance collapsing.

As a result, the voltage regulator occupies less than one-twentieth the area of today's solutions, Zou says.

Big claims, real questions

PowerLattice claims its chiplets can reduce power consumption by up to 50%, effectively doubling performance per watt.
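The link between the two figures in that claim is simple arithmetic: if the same work is done with a fraction of the power, performance per watt rises by the reciprocal of what remains. The helper below is a hypothetical illustration of that relationship, not a PowerLattice calculation.

```python
def perf_per_watt_gain(power_saving_fraction):
    """Factor by which performance per watt improves when the same
    workload runs with the given fraction of power saved."""
    if not 0.0 <= power_saving_fraction < 1.0:
        raise ValueError("saving must be in the range [0, 1)")
    return 1.0 / (1.0 - power_saving_fraction)


for saving in (0.10, 0.25, 0.50):
    gain = perf_per_watt_gain(saving)
    print(f"{saving:.0%} less power: {gain:.2f}x performance per watt")
```

The relationship is not linear: a 25% saving yields roughly a 1.33x gain, while the full 50% is needed to reach 2x.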

This number naturally raises eyebrows.

Speaking to IEEE Spectrum, Le doesn't dismiss the claim outright, but he is cautious. He says that 50% savings “may be achievable, but it means that PowerLattice would have to directly control the load, which also includes the processor.”

In other words, the most dramatic benefits are usually achieved when the power system dynamically adjusts voltage and frequency based on what the processor is doing in real time. This technique, known as dynamic voltage and frequency scaling, requires deep integration with the chip itself.
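To see why this kind of control can produce large savings, consider the standard first-order model of switching power in digital logic, in which dynamic power scales with the square of the supply voltage times the clock frequency. The sketch below uses that model only; it ignores leakage and other static power, and the 20% scaling figure is an assumption for illustration.

```python
def relative_dynamic_power(voltage_scale, frequency_scale):
    """Dynamic power relative to baseline under the first-order model
    P ~ C * V^2 * f, with capacitance and activity held constant."""
    return voltage_scale ** 2 * frequency_scale


# Assumed example: voltage and frequency both lowered by 20% during light load.
scaled = relative_dynamic_power(0.8, 0.8)
saving = 1.0 - scaled

print(f"relative dynamic power: {scaled:.3f}")
print(f"dynamic power saved:    {saving:.1%}")
```

In this idealized model, trimming both voltage and frequency by a fifth nearly halves dynamic power, which shows how savings on the order of 50% become plausible once the regulator and the processor are coordinated.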

PowerLattice does not control the processor. Without that control, Le says the savings may still be meaningful, but may not be as miraculous as advertised.

Zou counters that flexibility is also part of the design. Chiplets are “highly configurable and scalable,” he says. Customers can deploy many or just a few chiplets depending on their architecture and needs.

Zou argues that “this is one of the key differentiators” of the company's approach.

A crowded and changing market

Even if the technology works as promised, PowerLattice faces a challenge familiar to startups: major companies are working on the same problem.

Major chip manufacturers, including Intel, are developing their own fully integrated voltage regulators. These solutions aim to solve similar inefficiencies by building power management directly into the processor design.

Zou is not worried. He believes Intel's technology will remain in-house. “We are quite different from a market position standpoint,” he says.

A decade ago, that difference might have sealed PowerLattice's fate. Le recalls a time when processor vendors had tight control over the entire ecosystem. Customers who purchased a chip often had to purchase the accompanying power management hardware as well, or risk losing reliability guarantees.

“For example, Qualcomm can sell its own processor chips, but the majority of its customers must also buy its own Qualcomm power management chips,” Le said.

This lock-in left little room for independent power innovators. However, today's hardware landscape is different.

“There is a trend called chiplet implementation, which is heterogeneous integration,” Le says. System designers are now combining components from multiple companies to achieve the highest possible performance and efficiency.

Small startups building processors and AI infrastructure are just as power-hungry as their larger rivals, but they may not be able to take advantage of the bespoke power solutions offered by leading companies. For them, third-party innovations like PowerLattice's chiplets may be attractive.

“That's the way markets are,” Le said. “We have startups working with startups that do things that actually rival or compete with some of the larger companies.”

Why this matters beyond the data center

AI’s energy footprint is not just an engineering issue, but a social issue. As governments and companies pledge to reduce emissions, data centers are placing an increasing strain on the power grid. Improving power efficiency doesn't just save money. It reduces the environmental cost of computation and frees up energy for other uses.

Efficiency work in AI has mainly focused on algorithms: training tricks, pruning techniques, or smarter architectures. These approaches work. Meanwhile, the hardware has been burning through electricity behind the scenes.

PowerLattice's work is part of a broader recognition that the future of AI is not just about smarts, it's about plumbing.

Sometimes the biggest problems can be solved by moving critical components just a few millimeters closer to where the work will actually be done.