Training strategies for cross-domain generalization

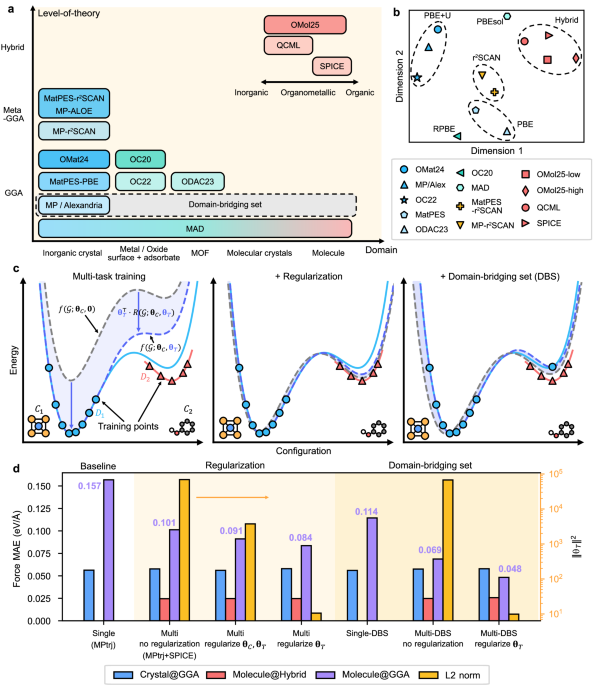

In this study, we address the training of MLIPs using multiple ab initio databases that differ both in the types of atomic configurations (e.g., inorganic versus organic systems) and in the underlying computational approaches (e.g., semilocal versus hybrid functionals). To construct a single MLIP capable of accommodating databases generated under heterogeneous computational settings, we employ a multi-task MLIP framework, where each task corresponds to a different database. (In our original paper, we referred to this approach as multi-fidelity43. Since the present datasets often share the same level of fidelity, such as Perdew-Burke-Ernzerhof (PBE)44 and revised PBE (RPBE)45 within the generalized gradient approximation (GGA), we now use the term multi-task for the same methodological approach.) Within this framework, the model parameters are divided into two categories: (i) shared parameters (θC) that are universally applied across all databases, and (ii) task-specific parameters (θT) that are optimized exclusively for the database corresponding to task T. Formally,

$${{{{\rm{DFT}}}}}_{T}({{{\mathcal{G}}}})\approx f({{{\mathcal{G}}}};{{{{\boldsymbol{\theta }}}}}_{C},{{{{\boldsymbol{\theta }}}}}_{T}),$$

(1)

where DFTT denotes the reference label obtained within DFT corresponding to task T, f represents the MLIP model, and \({{{\mathcal{G}}}}\) indicates atomic configuration. Through the shared parameter set θC, knowledge gained from one database is transferred to the others.

For the continuity of inferred force from MLIP models, one usually avoids non-smooth activation functions such as Rectified Linear Unit (ReLU)46. This guarantees model become at least C1 function for parameter space. Therefore, one can apply Taylor’s theorem to Eq. (1) with respect to θT:

$$f({{{\mathcal{G}}}};{{{{\boldsymbol{\theta }}}}}_{C},{{{{\boldsymbol{\theta }}}}}_{T})= f({{{\mathcal{G}}}};{{{{\boldsymbol{\theta }}}}}_{C},{{{\bf{0}}}})+{{{{\boldsymbol{\theta }}}}}_{T}^{\top }\cdot {\nabla }_{{{{{\boldsymbol{\theta }}}}}_{T}}f({{{\mathcal{G}}}};{{{{\boldsymbol{\theta }}}}}_{C},{{{\bf{0}}}})\\ +{{{{\boldsymbol{\theta }}}}}_{T}^{\top }\cdot {R}_{1}({{{\mathcal{G}}}};{{{{\boldsymbol{\theta }}}}}_{C},{{{{\boldsymbol{\theta }}}}}_{T})\\= f({{{\mathcal{G}}}};{{{{\boldsymbol{\theta }}}}}_{C},{{{\bf{0}}}})+{{{{\boldsymbol{\theta }}}}}_{T}^{\top }\cdot R({{{\mathcal{G}}}};{{{{\boldsymbol{\theta }}}}}_{C},{{{{\boldsymbol{\theta }}}}}_{T}),$$

(2)

where

$$R({{{\mathcal{G}}}};{{{{\boldsymbol{\theta }}}}}_{C},{{{{\boldsymbol{\theta }}}}}_{T}):={\int }_{0}^{1}{\nabla }_{{{{{\boldsymbol{\theta }}}}}_{T}}f({{{\mathcal{G}}}};{{{{\boldsymbol{\theta }}}}}_{C},t\,{{{{\boldsymbol{\theta }}}}}_{T})\,dt$$

(3)

can be obtained by integration since \({\nabla }_{{{{{\boldsymbol{\theta }}}}}_{T}}f({{{\mathcal{G}}}};{{{{\boldsymbol{\theta }}}}}_{C},t\,{{{{\boldsymbol{\theta }}}}}_{T})\) is continuous thus Riemann integrable. The right-hand side of Eq. (2) thus separates the contributions that do and do not depend on θT. Namely, \(f({{{\mathcal{G}}}};{{{{\boldsymbol{\theta }}}}}_{C},{{{\bf{0}}}})\) depends solely on the shared parameters θC and is therefore referred to as the common PES. The second term represents the task-specific contribution.

The left panel of Fig. 1c schematically illustrates the training outcomes and the role of each term in Eq. (2) when two databases (D1 and D2) with distinct atomic structures (C1 and C2) and computational methods are employed. The blue and red solid curves represent the ground-truth PESs of the respective ab initio methods, with markers along each curve indicating the training data points. We focus on the task T associated with D1. The blue dashed curve corresponds to the MLIP prediction incorporating θT, fitted to the blue data points. The gray dashed curve denotes the common PES [\(f({{{\mathcal{G}}}};{{{{\boldsymbol{\theta }}}}}_{C},{{{\bf{0}}}})\) in Eq. (2)], while the shaded blue region highlights the task-specific contribution.

Since the resulting MLIP PES is expressed as the sum of the common PES and the task-specific contribution, multiple combinations of θC and θT can yield comparable training losses. As depicted in the left panel of Fig. 1c, when the task-specific contribution dominates, the MLIP prediction (blue dashed curve) deviates significantly from the ground truth (blue solid curve) at C2, which exists only in D2. This suboptimal transfer occurs because the common PES is inaccurate, while the task-specific contribution is overfitted to a single domain (C1) and therefore performs poorly in out-of-distribution regions.

To address this issue, we introduce regularization on θT. Motivated by Eq. (2), the task-specific contribution term can be suppressed by regularizing the size of θT, since the absolute value of the remainder in Eq. (3) has an upper bound. By penalizing large values of θT, regularization encourages the model to rely more heavily on the common PES, ensuring that the shared representation captures the essential bonding characteristics across both databases. This is illustrated schematically in the central panel of Fig. 1c, where the common PES now remains close to the ground-truth PESs of both tasks, thereby enhancing knowledge transfer between the two databases.

While the regularization of θT is effective in improving transfer learning, it tends to fit the PES to the ground truth of D2 in the C2 domain. This limitation arises from the complete absence of D1 at C2. To address this, we introduce a domain-bridging set (DBS), which augments D1 by evaluating a subset of D2 with the ab initio method used for D1 (see the right panel of Fig. 1c). Incorporating a DBS requires only marginal computational effort (see below) yet yields substantial improvements in the accuracy of task T at C2.

To demonstrate the effectiveness of the proposed approach, we employ the MPtrj7 and SPICE27 databases for MLIP training. The MPtrj database, representative of inorganic bulk systems, is generated using the PBE functional, whereas the SPICE database, curated for organic molecules, is based on hybrid functional of ωB97M47. For multi-task modeling, we adopt the SevenNet-MF architecture43 and evaluate model performance by computing the force mean absolute error (MAE) relative to ab initio reference results.

The evaluation results are presented in Fig. 1d. All evaluations are performed using the validation sets that are not utilized for training. As a baseline, we trained a single-task model using only the MPtrj database (left region), which exhibits large errors for molecular structures evaluated at the PBE level (denoted as Molecule@GGA). We then examined the impact of different regularization strategies in SevenNet-MF training, comparing a multi-task MLIP without regularization, a model trained with regularization applied to both θC and θT, and a model trained with regularization applied only to θT. As shown in the middle region of Fig. 1d, all models achieve comparable force accuracy within their respective training domains and computational settings (Crystal@GGA and Molecule@Hybrid), regardless of the regularization scheme. However, the force MAE differs significantly when evaluated on out-of-domain organic molecular configurations at the PBE level (Molecule@GGA), while all multi-task MLIPs outperform the single-task baseline. In particular, selective regularization of θT yields greater improvements than conventional regularization applied to all model parameters, underscoring its advantage for optimizing cross-database transferability. The right axis shows that model accuracy systematically improves as the L2 norm of θT decreases, indicating that effective knowledge transfer is facilitated by suppressing task-specific contributions.

Next, we extend our evaluation to scenarios where a DBS is available (right region of Fig. 1d). For the DBS, we randomly subsample 0.1% of atomic configurations from the SPICE database and recompute them using the same computational settings as those used for MPtrj. As shown in Single-DBS, accuracy remains insufficient when training with only MPtrj and DBS. In contrast, supplementing with the full SPICE database through multi-task training (Multi-DBS) yields a substantial performance improvement. Furthermore, combining DBS with selective task regularization produces a synergistic effect, achieving the best overall accuracy among all training strategies.

We further examine the effects of regularization and DBS on each term in Eq. (2) by analyzing the PES associated with the binding of two water molecules using the multi-task models shown in Fig. 1d. For each model, two task-specific PES are obtained by selecting θT = θMPtrj and θT = θSPICE, where θMPtrj and θSPICE denote parameters trained on the MPtrj and SPICE databases, respectively. In addition, the common PES is evaluated by explicitly setting θT = 0 during inference.

The resulting PES for each model evaluated on molecular input structures are shown in Supplementary Fig. 2. The PES inferred using θSPICE exhibits good agreement with the ωB97M reference across all training methods. In contrast, the agreement between PBE and the PES obtained with θMPtrj varies substantially, consistent with the trends shown in Fig. 1d. Comparison of Supplementary Fig. 2a, b indicates that regularization renders the common PES more physically meaningful, yielding a more generalizable representation of θC and improved agreement with PBE. By contrast, comparison of Supplementary Fig. 2a, c shows that DBS enhances accuracy by improving the task-specific contribution associated with θMPtrj, while the common PES remains suboptimal. Together, these results demonstrate that the proposed training strategies operate as intended and clarify the synergistic effect of regularization and DBS, as illustrated in Supplementary Fig. 2d.

Building on these observations, we developed a uMLIP, 7net-Omni, by concurrently training the SevenNet-MF architecture on 15 publicly available databases (covering 13 different computational protocols) with selective task regularization and DBS. The training set spans a diverse collection of ab initio databases encompassing molecular, crystalline, metal–organic framework (MOF), and surface systems, as schematically illustrated in Fig. 1a. The specific databases, along with their chemical domains and XC functionals, are listed in Table 1. The present uMLIP concerns the charge neural system, so charged structures in SPICE, OMol25, and QCML are excluded. Each database is assigned to a distinct task, but databases with identical computational protocols are grouped under the same task. We also use the term channel to refer to task-specific inference.

To construct DBS, we adopted the computational settings used in the MPtrj database and performed single-point calculations on approximately 0.1% of structures from six representative databases (see Methods for details of the sampling criterion). The numbers of sampled structures from each database are summarized in Supplementary Table 1.

To efficiently train on heterogeneous datasets, we adopted a curriculum learning strategy. Specifically, the model was first trained on crystal databases such as MPtrj, sAlex, and OMat24 to establish a foundation of chemical knowledge. The training was then extended to molecular systems by introducing OMol25 into the dataset. Finally, the model was trained on the whole database listed in Table 1. This curriculum-based training process enables the model to effectively capture complex chemical environments, such as adsorbate-slab systems, by utilizing prior knowledge established for crystalline and molecular systems. When a new database is introduced as an additional task, the corresponding PES is adapted by initializing the energy-shift parameters via linear regression and making them trainable.

This approach resulted in a more stable learning process compared to joint training on all databases from scratch, which we found to suffer from unstable optimization and poor initial convergence in a highly heterogeneous distribution of the training set. Similar training procedures have previously been adopted to integrate heterogeneous databases into a single MLIP model41. During this curriculum learning, the predictive accuracy on crystalline databases showed no degradation (Supplementary Fig. 3), indicating that progressively expanding the training dataset did not induce noticeable catastrophic forgetting on previously trained domains. We note that the specific ordering of the curriculum was not systematically optimized, leaving room for further improvement in the choice of curriculum sequence. Further details of dataset composition, sampling ratios, and training procedures are provided in the Methods section.

To examine whether the model encodes computational methods in an explainable way, we analyzed the trained parameters of 7net-Omni associated with task embeddings. Since the base architecture, SevenNet-MF, employs one-hot encoding of each task in each self-interaction layer43, the corresponding θT parameters in each self-interaction layer themselves serve as task-embedding vectors. Specifically, we used the θT from the first self-interaction layer of SevenNet-Omni. The latent vectors were visualized using principal component analysis (PCA) in Fig. 1b. We find that the task-dependent weights form distinct clusters that primarily correlate with the choice of XC functional and the application of a Hubbard U correction48. For example, molecular databases employing hybrid functionals group together in a similar region. This indicates that the model captures both similarities and discrepancies across different levels of theory, thereby enhancing the generalizability of the multi-task framework. Comparable analyses have been reported in the context of multi-task property prediction using the multiXC-QM9 dataset49,50. However, unlike the present observation, it produced mixed results; for instance, embeddings generated with the same XC functional but different basis sets exhibited low similarity. This difference may stem from the use of selective task regularization in 7net-Omni, which allows the model to better recognize cross-theory similarities.

Frontier uMLIPs

Throughout this paper, we compare the performance of 7net-Omni with other top-performing multi-task uMLIPs, namely UMA (UMA-m-1p1)8 and DPA (DPA-3.1-OpenLAM)9, as well as single-task uMLIPs: eSEN (eSEN-30M-OAM, eSEN-30M-OMAT)12, ORB (orb-v3-conservative-inf-mpa, orb-v3-conservative-inf-omat)13, 7net-ompa (SevenNet-MF-ompa), NequIP (Nequip-OAM-L)14,51, GRACE (GRACE-2L-OAM-L, GRACE-2L-OMAT-L)15, and MACE (MACE-mpa-0-medium, MACE-omat-0-medium)52,53. The specific model names tested in this work are indicated in parentheses and these correspond to the most accurate variants within each model family, as far as we can determine. We note that all the models are equivariant graph neural networks except for DPA-3.1 which utilizes invariant features. The architectural categorization of ORB remains ambiguous. While ORB employs interatomic displacement vectors as equivariant input features54, its use of multi-layer perceptrons in the model architecture means that strict roto-equivariance and exact energy invariance are not enforced13. Instead, ORB adopts the equigrad loss, which penalizes large energy discrepancies between rotated configurations rather than guaranteeing strict equivariance by construction13. All the models are conservative, producing atomic forces as gradients of the energies.

We classify UMA and DPA as multi-task uMLIPs, as they are trained concurrently on databases spanning diverse material classes, including inorganic bulk, surfaces, and organic molecules. UMA is trained on OMat24, OC20, ODAC2531, OMC2532, and OMol25, whereas DPA is trained on OpenLAM-v1, which aggregates 31 databases, including large-scale datasets such as OMat24, MPtrj, OC20, and SPICE, as well as several domain-specific databases. Architecturally, UMA incorporates additional embedding layers that encode information about the DFT task, charge, and spin, which are appended to the node features at each graph convolution layer. By contrast, DPA employs a one-hot encoding scheme at the final multilayer perceptron stage to distinguish training databases when predicting atomic energies. In terms of regularization, UMA uses the AdamW optimizer with a small weight decay applied to all model parameters, while DPA adopts the Adam optimizer without regularization. Thus, neither UMA nor DPA introduced the task-specific regularization proposed in this work.

Single-task uMLIP models, including eSEN, ORB, NequIP, GRACE, and MACE, typically have two variants depending on the choice of database: OMat24 or MPtrj/Alexandria, which were generated using slightly different pseudopotential sets21. In most cases, the models are first pretrained on the OMat24 database to capture broad, high-energy configurations (e.g., eSEN-30M-OMAT). These OMat24-pretrained uMLIPs are then fine-tuned on the MPtrj and Alexandria databases (e.g., eSEN-30M-OAM).

Since the present work focuses mainly on PBE-based benchmarks, we concentrate on the tasks for each uMLIP in the OMat24 and MPtrj/Alexandria databases. For 7net-Omni, because the DBS is constructed using the computational setup of MPtrj, the mpa channel is generally the most accurate, although this can vary slightly depending on the examples considered, as discussed in later sections. We also consider the matpes channel of 7net-Omni, since its exclusion of the Hubbard U term proves useful for applications including transition metals (see Cross-domain or cross-functional scenarios and Metallic surfaces sections). Although 7net-ompa was trained in a multi-task architecture (concurrently on MPtrj, sAlex, and OMat24), we classify it as a single-task model because its training data consist solely of inorganic crystals. Since 7net-ompa share the same architecture and hyperparameters with 7net-Omni, the performance gain of 7net-Omni over 7net-ompa can be attributed to the additional databases and the multi-domain training strategy proposed here.

For notational simplicity, we henceforth denote multi-task models in the form model.task (e.g., UMA.omc, DPA.spice) and single-task models in the form model[dataset] (e.g., eSEN[oam] for eSEN-30M-OAM, MACE[omat] for MACE-omat-0-medium).

We emphasize that in the following benchmark tests, all physical quantities are computed self-consistently within the given uMLIP or ab initio method, independent of results from other approaches. For example, when estimating reaction energies between two states, each state is obtained by fully relaxing the structure within the model itself. For benchmarks employing van der Waals interactions at the D3 level, we add the D3 correction separately if the task was not trained with D3 included (e.g., 7net-Omni.omat24). If the database already incorporates van der Waals interactions, we use the task results without further modification (e.g., 7net-Omni.omol25 and UMA.omc). We add a cautionary remark that the performance improvements reported in this work primarily reflect better agreement with the PBE functional, which does not necessarily imply closer correspondence to physical reality due to the inherent approximations of GGA functionals and DFT. When comparisons are made with higher levels of theory, such as the meta-GGA r2SCAN functional, or experimental data, we explicitly note the reference methods.

Single-domain applications

Before conducting extensive multi-domain benchmarking, we first examine in-domain cases where both the material type and XC functional are represented in the training set. One such benchmark is Matbench Discovery42, which reports F1, κSRME, and RMSD, each probing a different facet of reliability. The F1 score (harmonic mean of precision and recall) measures the accuracy of crystalline energy ranking; κSRME is the symmetric relative mean error (SRME) for lattice thermal conductivity (κ), reflecting the fidelity of curvature at the PES minimum; and RMSD quantifies structural deviations after relaxation relative to DFT. A combined performance score (CPS) summarizes these metrics. 7net-Omni.mpa achieves F1 = 0.889, κSRME = 0.265, and RMSD = 0.0639, yielding CPS = 0.849, which is slightly higher than 7net-ompa.mpa (0.845). Notably, κSRME for 7net-Omni.omat24 and 7net-Omni.matpes are 0.253 and 0.243, respectively, lower than for 7net-Omni.mpa, which can be attributed to the inclusion of high-energy configurations in OMat24 and MatPES.

To extend beyond tests on pure crystals, we compute grain boundary (GB) energies for 327 configurations across 58 elemental metals55, covering a range of misorientation angles as well as tilt and twist boundaries. We exclude 30 GBs with non-orthogonal planes relative to the z-direction15. The GB energy (γGB) is computed following the definition in ref. 55, and performance is quantified as the SRME of γGB. Supplementary Fig. 4a summarizes the performance of 7net-Omni compared with other leading uMLIPs. Parity plots for GB energy benchmark are also illustrated in Supplementary Fig. 5. All models exhibit comparable performance, but 7net-Omni.mpa performs slightly better.

As another non-crystalline benchmark, we compute defect binding energies in steels. Previous DFT studies have examined interactions among carbon interstitials and vacancies, as well as interactions between transition-metal solute atoms56,57, which have been adopted as a benchmark for uMLIPs58. In this benchmark, we compute binding energies between carbon and vacancy, as well as between transition-metal solutes (Ti, V, Cr, Mn, Co, Ni, Cu, Nb, and Mo). For all relaxations of defective structures, both atomic positions and lattice parameters are allowed to vary, while the cell shape is constrained to remain orthogonal. Supplementary Fig. 4b, c compare the accuracy of uMLIPs for defect binding energies, as well as their parity plots can be found in Supplementary Figs. 6, 7. Overall, all models achieve reasonable accuracy, with no single model showing a clear advantage.

While PBE functionals remain the standard approach in inorganic solid-state simulations, hybrid functionals are more widely adopted in molecular modeling because they provide more accurate descriptions of reaction barrier heights, a task where semilocal functionals often struggle39. To evaluate the hybrid-functional fidelity of multi-task models, we consider small-molecule torsion barriers as a benchmark for conformational PES descriptions. Two pharmaceutically relevant datasets, the biaryl set and TorsionNet50059,60, were recently recomputed at the ωB97M-D3 level of theory47,61, yielding torsion barriers. From these, we select all 88 molecules in the biaryl set and 100 molecules sampled from TorsionNet500. Torsional barrier heights are defined as the energy difference between the minimum and maximum points along the torsional PES59.

In Supplementary Fig. 4d, we benchmark several uMLIPs, including 7net-Omni.spice, 7net-Omni.omol25, DPA.spice, and UMA.omol25 as multi-task models, alongside single-task models for organic molecules, such as MACE-OFF24-medium trained on the SPICE dataset27,33 (denoted MACE[spice]) and eSEN-sm-conserving trained on the OMol25 dataset26 (denoted eSEN[omol]). (See Supplementary Fig. 8 for parity plots.) Although the dispersion corrections differ between the functionals used in SPICE (ωB97M-D3) and OMol25 (ωB97M-V62), the results are compared on equal footing. As shown in Supplementary Fig. 4d, all models achieve high accuracy for torsional barriers, with MAEs well below the chemical accuracy threshold of 1 kcal/mol (gray dashed line in Supplementary Fig. 4d).

Cross-domain or cross-functional scenarios

The main motivation of this work is to develop a uMLIP that can simulate complex material systems in which distinct classes of materials interact. One example is semiconductor processing, such as ALD, where precursor molecules adsorb onto silicon-based substrates. In such applications, semilocal functionals, most commonly the PBE functional, are preferred to describe both molecular and solid-state systems simultaneously63,64. Within the current multi-task training framework, this requires effective knowledge transfer from PESs learned with hybrid functionals to those learned with the PBE functional for molecules. To examine this more closely, we first focus on molecular systems, either isolated or crystalline, computed at the PBE level, as shown in Fig. 2a–c. (For parity plots of each benchmark, see Supplementary Figs. 9–11).

a MAE in predicting torsional energy barriers. The y-axis lists the uMLIP models. For multi-task uMLIPs, the inference channel is indicated to the right of each bar; for single-task uMLIPs, the corresponding training set is shown. White bullets mark the accuracy of the hybrid-functional channel (parentheses) in reference to the ωB97M-D3 results. b MAE of reaction energy predictions for organometallic complexes. c MAE of cohesive energy predictions for organic crystals. d MAE of formation energy predictions for hybrid organic-inorganic perovskites. e MAE of adsorption energy predictions for molecular inhibitors, computed by energy changes from physisorbed to chemisorbed states. f Error distributions of four benchmark tasks for metal–organic frameworks, represented as box plots. The box and red horizontal line in the box plot show the quartiles and median of the error distribution, respectively, while whiskers represent 1.5 times the inter-quartile range. Individual data points are randomly jittered along the horizontal axis for visual clarity. In (a–f), all reference DFT data are obtained with PBE-D3. Solid bars in (a–e) represent the best-performing channel or training database. Individual parity plots are provided in Supplementary Figs. 9–11,14,15. Source data are provided as a Source Data file.

First, small-molecule torsion barriers studied in the previous section are selected as a single-molecule benchmark for evaluating the accuracy of conformational PESs in complex molecules. The molecules are reoptimized at the PBE-D3 level to directly compare energy barriers with PBE-fidelity channels. The MAE in torsion barriers is summarized in Fig. 2a, which shows that 7net-Omni achieves significantly higher accuracy than 7net-ompa, benefiting from the incorporation of molecular databases. Notably, 7net-Omni.mpa attains the hybrid-functional accuracy of 7net-Omni.spice reported in the previous section (see the white bullet). This demonstrates successful knowledge transfer across both chemical domains and fidelity. UMA.omc and DPA.mp show similar trends; however, they exhibit large variations in accuracy among channels, whereas 7net-Omni maintains more uniform error levels, attributable to the selective task regularization.

In Supplementary Fig. 9, parity plots for torsion barriers are shown for all models. As the MAE increases, the data points become more scattered, indicating that the errors are more random rather than systematic. This increased randomness makes the predictions less reliable. This trend is consistently observed throughout this work: larger MAE values generally correspond to weaker correlations.

In Fig. 2a, multi-task models on accurate channels outperform most single-task models, underscoring the role of training data for molecules. The exception is eSEN[oam], which achieves accuracy comparable to that of 7net-Omni. The relatively high accuracy of eSEN[oam] on systems involving small molecules is consistently observed throughout this work. The unexpected performance of eSEN[oam] outside its training domain has also been reported in other studies65,66, although it remains unexplained at present.

Next, we compute the reaction energy (Erxn) for organometallic complexes. A total of 53 reactions involving 97 organometallic complexes were collected from the literature and reoptimized at the PBE-D3 level of theory67,68. Figure 2b shows the MAE of Erxn over the benchmark set, indicating that 7net-Omni outperforms all other models. Both 7net-Omni and UMA include organometallic complexes in their training sets (OMol25), yet their cross-functional performances differ substantially. Interestingly, 7net-Omni.matpes is more accurate than 7net-Omni.mpa, despite the latter channel incorporating some organometallic complexes through DBS. Further analysis reveals that reactions with large errors in 7net-Omni.mpa predominantly involves Cr, Fe, Co, and Ni centers (see Supplementary Fig. 10), suggesting that this underperformance is related to the application of PBE+U in the MPtrj/sAlex database for partially filled 3d transition metals. A more detailed discussion is provided in Metallic surfaces section.

To assess the description of intermolecular interactions, which are critical in organic semiconductors and molecular liquids, we investigate molecular crystals. A total of 86 molecular crystals are considered, comprising 23 from the X23 set69,70 and 67 from the BMCOS1 benchmark set71, spanning systems from simple crystals to optoelectronic materials. Four crystals that overlap with the BMCOS1 set are excluded from the X23 subset to avoid redundancy. We compute the cohesive energy (Ecoh), defined as the energy required to separate the crystal into its constituent molecules. The MAE of Ecoh in Fig. 2c shows that 7net-Omni.mpa outperforms other models, including UMA.omc, which is explicitly trained on molecular crystals. (For parity plots, see Supplementary Fig. 11). Supplementary Figs. 12, 13 further compare errors in the equilibrium volumes of molecular crystals, showing trends consistent with those in Fig. 2c.

Next, we consider the ABX3-type hybrid organic-inorganic perovskites as an example of multi-domain applications, which have attracted considerable attention in photovoltaics and optoelectronics72,73. Although some hybrid perovskites are included in databases such as MPtrj, diverse combinations of A, B, and X remain under-represented. Kim et al.74 constructed a comprehensive database of hybrid organic-inorganic perovskites, including one of the 16 molecular cations at the A site and a metal of the IV group at the B site. We randomly select 100 hybrid perovskite structures from the database and calculate the theoretical formation energy (Efor), which represents the relative energy difference between the perovskite and its components (e.g., metal and molecule) at the PBE-D3 level:

$${E}_{{{{\rm{for}}}}}={E}^{{{{{\rm{ABX}}}}}_{3}}-{E}^{{{{{\rm{A}}}}}^{{\prime} }}-{E}^{{{{\rm{B}}}}}-\frac{3}{2}{E}^{{{{{\rm{X}}}}}_{2}}-\frac{1}{2}{E}^{{{{{\rm{H}}}}}_{2}},$$

(4)

where Eα is the energy of α. In Eq. (4), \({{{{\rm{A}}}}}^{{\prime} }\) indicates the neutral organic molecule corresponding to the cation A+ (e.g., NH3 for \({{{{{\rm{NH}}}}}_{4}}^{+}\)), and EB denotes the total energy of the elemental metal B in its stable crystalline phase.

The benchmark results are shown in Fig. 2d, as well as corresponding parity plots are represented in Supplementary Fig. 14. Across all tested models, 7net-Omni shows the best agreement with DFT. Notably, both UMA.omc and UMA.omat exhibit relatively large errors: the omat channel suffers from inaccurate molecular energies, suggesting limited transferability from the OMol25 database, while the omc channel struggles to identify stable structures of inorganic crystals. In contrast to eSEN[oam], eSEN[omat] shows large errors for certain molecules (e.g., F2, HF, NO, O2), which results in low accuracy in Fig. 2d. This issue is further analyzed later in this section.

In Fig. 2e, we extend our analysis to surface reactions involving the adsorption of organic molecules on dielectric substrates, motivated by thin-film deposition in semiconductor processing. Specifically, we consider Si-centered inhibitor molecules adsorbing on SiO2 and Si3N4 substrates, which are relevant to area-selective deposition (ASD)75. We adopt 78 PBE-D3 reference results for physisorption and chemisorption from ref. 76. For the adsorbates, we examine (N,N-dimethylamino) trimethylsilane (DMATMS) and ethyltrichlorosilane (ETS), while SiO2 and Si3N4 serve as substrates. The reaction energy (Erxn) is defined as the energy difference between the chemisorbed and physisorbed states,

$${E}_{{{{\rm{rxn}}}}}={E}^{{{{\rm{chem}}}}}-{E}^{{{{\rm{phys}}}}}.$$

(5)

Figure 2e summarizes the model accuracy, showing that 7net-Omni achieves substantial improvements over 7net-ompa. Among the single-task models, eSEN and ORB also perform competitively. (For parity plots, see Supplementary Fig. 15).

Metal–organic frameworks (MOFs), as hybrid organic-inorganic materials, offer vast structural tunability through the nearly limitless combinations of linkers and metal nodes77. However, their immense chemical space and large atomic numbers often make DFT-based screening impractical. Recent advances in uMLIPs provide a scalable alternative78. To assess the accuracy of 7net-Omni in MOF applications, we conducted four benchmark tasks covering both homogeneous properties (heat capacity) and heterogeneous cases (MOF-guest interactions).

Full data in Supplementary Fig. 16 show that all models performed well in predicting heat capacities79,80, producing MAEs less than 0.03 J/K/g, but accuracy in MOF-guest interactions varied widely depending on channels and training databases for some models (UMA, DPA, and eSEN). Therefore, throughout Fig. 2f, we display only accurate variants: 7net-Omni.mpa, UMA.omc, DPA.mp, 7net-ompa.mpa, eSEN[oam], ORB[omat], NequIP, GRACE[omat], and MACE[mpa]. Except for UMA.omc, all models include D3 corrections separately.

Next, we evaluated adsorption of single CO2 and H2O molecules using the test set from the GoldDAC dataset81, following established protocols (see upper-right section in Fig. 2f). The adsorption energy, Eads, of a molecule X is defined as

$${E}_{{{{\rm{ads}}}}}={E}^{{{{\rm{MOF+X}}}}}-{E}^{{{{\rm{MOF}}}}}-{E}^{{{{\rm{X}}}}},$$

(6)

where EX is the energy of the isolated molecule X (X=CO2, H2O). In this task, 7net-Omni.mpa achieved accuracy comparable to eSEN[oam] and close to UMA.odac, which was specifically designed for this type of evaluation (Supplementary Fig. 16b). Similar trends were observed for predicting experimental CO2 Henry coefficients (KH) from the ODAC25 study31 (see lower-right section in Fig. 2f), reflecting the general proportionality between adsorption energies (Eads) and KH.

In Supplementary Fig. 16b, 7net-Omni.odac23 underperforms in Eads without deformation in the repulsion region, even though this task is directly trained with the database related to MOF-gas interactions. We suspect that this behavior arises from the relatively loose DFT settings used in the ODAC23 dataset, which may have introduced noise25. In preliminary tests, replacing ODAC23 with ODAC25 (without updating DBS) significantly reduced the error especially in the repulsive region, as illustrated in Supplementary Fig. 17.

In the lower-left region of Fig. 2f, we further evaluated adsorption with framework deformation using a DFT dataset from prior work82, again focusing on single-molecule CO2 and H2O adsorption. In this scenario, 7net-Omni.mpa delivered the highest accuracy, surpassing the practical 0.1 eV MAE threshold for screening (gray dashed line in Supplementary Fig. 16d)82. Parity plots for the MOF benchmarks are illustrated in Supplementary Figs. 18–22.

In the above benchmark tests, particularly in Fig. 2c,d,f, we find that the accuracy of isolated molecules plays a crucial role in determining overall model performance. Specifically, for the MOF systems discussed above, the error analysis of Eads in Supplementary Fig. 16e indicates that the deviations mainly originate from energy errors of the adsorbates (CO2 and H2O). This is generalized in Fig. 3a, which presents the distribution of energy discrepancy between uMLIPs and DFT for 26 molecules sampled from the examples in this section. It can be seen that single-task models tend to overestimate molecular energies, except for eSEN[oam]. (The differences in DFT settings between MPtrj and OMat24 change the molecular energies by less than 2 meV/atom). The relatively weaker performance of UMA.omat can be attributed to the small fraction of molecule-containing structures in the OMat24 database itself than MPtrj and Alex (see Supplementary Table 2), as well as insufficient knowledge transfer from molecular databases trained for other inference tasks. Similar limitations of multi-task models on low-dimensional configurations have also been reported in a previous study66. Since the whole systems exhibit closer agreement with DFT, the isolated molecules become effectively destabilized in many models. As an illustrative example, we investigate the origin of errors in hybrid perovskite systems (Fig. 2d). The total energy errors of bulk phases (\({E}^{{{{\rm{ABX}}_3}}}\) and EB) are consistently smaller than those of molecular species (\({E}^{{{{{\rm{A}}}}}^{{\prime} }}\) and \({E}^{{{{\rm{X}}_2}}}\)), which are commonly overestimated by most uMLIPs, as shown in Supplementary Fig. 23a, b. Consequently, errors associated with molecular systems exhibit stronger correlations and contribute more significantly to the Efor, consistent with the trend observed in Supplementary Fig. 16e (see Supplementary Fig. 23c).

a Error distributions of molecular energies across uMLIP models, represented as violin and box plots. The inference tasks of multi-task models and the datasets used for single-task models are indicated at the top of the plot. The box and red horizontal line in the box plot show the quartiles and median of the error distribution, respectively, while whiskers represent 1.5 times the inter-quartile range. Individual data points are randomly jittered along the horizontal axis for visual clarity. b PES for CO2 adsorption on Mg-MOF74 computed with DFT (PBE-D3) and uMLIPs. c Comparison of Eads without deformation for CO2 and H2O between DFT and 7net models. Linear regression was performed for data points with \({E}_{{{{\rm{ads}}}}}^{{{{\rm{DFT}}}}}\) less than 0.5 eV. The line equations are shown in the figure insets. Source data are provided as a Source Data file.

The spurious destabilization of molecules also significantly distorts the PESs. This is illustrated in Fig. 3b, which plots the PES as a CO2 molecule approaches the corner of a MOF. The destabilization causes molecular overbinding and a stiffening of the PES near equilibrium, manifested as increased curvature. The stiffening is further corroborated in Fig. 3c, which presents parity plots of the Eads values without deformation from Fig. 2f. Predictions from two 7net models, 7net-Omni and 7net-ompa, are compared with DFT results; the slope for 7net-ompa is substantially greater than unity, indicating pronounced PES stiffening. Supplementary Fig. 24 compiles the corresponding distributions for other models and shows that PES stiffening is consistently observed across models, albeit to varying degrees. These position-dependent shifts in the PES imply that simply replacing molecular energies with their corresponding DFT values cannot eliminate the spurious stiffening. By contrast, in the ALD scenario in Fig. 2e, the molecular energy errors cancel out when taking the difference between chemisorbed and physisorbed states, yielding a less pronounced performance gap between models.

Metallic surfaces

Heterogeneous catalysis is another area where computational materials science plays a crucial role. Reactions such as the hydrogen evolution reaction (HER), oxygen evolution reaction (OER), and carbon dioxide reduction reaction (CO2RR) are of major industrial importance, underpinning technologies for solar fuel production and electrocatalytic CO2 conversion83,84. Computational studies have provided deep mechanistic insights into these processes85, while large-scale ab initio datasets, such as those compiled by the Open Catalyst Project23,24, have enabled the development of catalyst-specific foundation MLIPs. We benchmark our model on a range of metal-surface adsorption tasks, spanning canonical reactions such as HER, OER, and CO2RR, as well as adsorption of organic molecules on noble-metal surfaces relevant to heterogeneous hydrogenation and oxidation of volatile organic compounds.

We first investigate the adsorption of simple adsorbates (*H, *O, *OH, *CO) on noble-metal surfaces (Au, Ag, Cu, Pd, Pt). These systems are included in the OC20 dataset, computed with the RPBE functional, and are also sparsely sampled in the DBS (see Supplementary Table 1). For each metal, we consider both the (100) and (111) surfaces, with three symmetric adsorption sites: bridge, hollow, and top for (100) surfaces, and fcc, hcp, and top for (111) surfaces. The adsorption energy of adsorbate X is computed using Eq. (6), where EX for H, O, and OH is determined from the equilibrium of H2 and H2O molecules, while for CO the molecular energy is used directly. In total, 120 adsorption energies were computed.

As shown in Fig. 4a, 7net-Omni significantly outperforms other models, achieving a low MAE of approximately 0.06 eV in Eads. (See Supplementary Fig. 25 for parity plots). The white bullets on the multi-task models indicate the MAE of the oc20 channel relative to RPBE reference values, showing that the PBE performance of 7net-Omni exceeds that of 7net-Omni.oc20. This may be due to the much larger number of structures available at the PBE level compared with RPBE, which biases the model weights toward PBE fidelity. A similar trend is observed for DPA.omat. By contrast, UMA.omat shows much larger errors than UMA.oc20, indicating inefficient knowledge transfer. (Among various channels of UMA, only UMA.omat was able to produce reasonable results for tests in this section). Single-task models, with the exception of eSEN[oam], fail to achieve comparable accuracy. This is primarily because of their limited ability to describe reference molecular energies, as discussed in the previous section, which leads to systematic shifts in the reaction energies of identical adsorbates. The large errors in eSEN[omat] in comparison with eSEN[oam] are attributed to the inaccurate energies for some molecules, as shown in Fig. 3a.

a MAE for adsorption energies of *H, *O, *OH, and *CO adsorbates on five noble metals, Cu, Pd, Pt, Ag, and Au. The y-axis lists the uMLIP models. For multi-task uMLIPs, the inference channel is indicated to the right of each bar; for single-task uMLIPs, the corresponding training set is shown. White bullets mark the performance of the RPBE-fidelity channel (parentheses), compared with RPBE results. b MAE of physisorption energy predictions for ADS41 dataset. c MAE of chemisorption energy predictions for ADS41 dataset, excluding Co and Ni surfaces. d MAE of chemisorption energy predictions for adsorptions on Co and Ni surfaces in ADS41 dataset. e Potential energy curves of uMLIPs along the distance between the oxygen atom and the Co or Cu metal surface. Reference DFT data are calculated within PBE (a) or PBE-D3 (b–d). Solid bars in (a–d) represent the best-performing channel or training database. Individual parity plots are presented in Supplementary Figs. 25, 26. Source data are provided as a Source Data file.

To extend the evaluation to more diverse metals and adsorbates, we consider the ADS41 dataset86,87. ADS41 comprises 15 physisorption systems (primarily organic molecules on noble-metal surfaces) and 26 chemisorption systems. Reference adsorption energies were computed at the PBE-D3 level of theory87. The benchmark results are shown in Fig. 4b–d. Figure 4b displays MAEs for physisorption energies, while Fig. 4c presents chemisorption energies excluding Co and Ni. The anomalous Co and Ni systems are displayed separately in Fig. 4d. Compared with Fig. 4a,c includes additional noble metals (Ir, Rh, Ru) as well as adsorbates (CCH3, I, NO). Overall, Fig. 4b,c reveal consistent trends across uMLIPs, with 7net-Omni and eSEN[oam] performing best, in agreement with Fig. 4a. The parity plots for benchmarking ADS41 dataset are provided in Supplementary Fig. 26.

In the previous section, we showed that destabilization of molecular energies significantly affected the PES. To examine such an effect in catalytic reactions, we investigate the CO2RR process on Pt and Pd surfaces. Specifically, we consider COOH formation pathways: CO2 + *H → *COOH shown in ref. 88 on Pt(111) and Pd(111) surface, where CO2 is initially physisorbed. Nudged elastic band (NEB) calculations are performed to identify the minimum-energy paths. The resulting PES profiles and error analysis in Supplementary Fig. 27 show that the forward and backward reaction barriers usually differ by 0.1–0.2 eV between DFT and uMLIPs except for 7net-Omni and eSEN[oam], indicating potential inaccuracies in the turn-over frequency of CO2RR reactions.

Figure 4d for Co and Ni shows that most models catastrophically fail to predict Eads. This stems from the computational settings used in databases that employ PBE+U (e.g., MPtrj and OMat24). To account for the correlated nature of 3d transition-metal oxides, these databases apply a Hubbard U correction whenever partially filled 3d metals such as Co and Ni coexist with oxygen atoms. (For general conditions for application of PBE+U, we refer to ref. 89). As a result, uMLIPs trained heavily on these databases inherit PESs that include U corrections whenever oxygen atoms interact with Co or Ni.

We illustrate this in Fig. 4e by moving an oxygen atom above Co and Cu(111) surfaces. Most models exhibit anomalous PESs for Co, whereas predictions for Cu remain physically sound. Consequently, the adsorption energies of oxygen-containing molecules on Co and Ni surfaces are widely mispredicted. Notably, the OC20 database includes oxygen-containing adsorbates on partially filled 3d metals but excludes U corrections. However, the weight parameters for mpa and omat channels of 7net-Omni appear more heavily tuned to larger datasets such as MPtrj, sAlex, and OMat24.

Interestingly, this erratic behavior is not observed for 7net-Omni.matpes, which was trained on databases generated without U22 and achieves the best overall performance. In Fig. 4e, 7net-Omni.matpes also produces physically sound PESs. Notably, UMA and DPA maintain robust accuracy despite being trained on MPtrj or OMat24. It remains to be investigated whether the difference arises from the encoding strategy (e.g., DPA encodes task embedding only at the final layer) or from auxiliary mechanisms (e.g., mixtures of linear experts in UMA).

The above discussion is relevant whenever transition metals and oxygen atoms are within the cutoff radius. For example, this occurs in CO binding to single-atom metal centers in organometallic complexes (see Cross-domain or cross-functional scenarios section) and in OH/CHO adsorption on metal nitrides (Supplementary Fig. 28)90. These results underscore the need for caution when applying uMLIPs to adsorption on 3d transition-metals.

r2SCAN fidelity

Recently, the meta-GGA regularized-restored Strongly Constrained and Appropriately Normed (r2SCAN) functional has attracted considerable attention, as it provides improved agreement with experimental results compared to conventional PBE calculations, particularly for properties such as thermal stability, phonons, and lattice volumes91,92. However, r2SCAN computations are several times more expensive than with the PBE functional, and only recently large databases based on r2SCAN become available22,30,93. In training 7net-Omni, we included three databases at the r2SCAN level (Table 1). For MP-r2SCAN, data were collected directly from the Materials Project database, while MatPES-r2SCAN and MP-ALOE are directly available in the public domain. The total number of r2SCAN entries (1,283,036) corresponds to only 0.8% of those at the PBE level.

There are two other open models, MACE22 and VMD50, that provide r2SCAN energies. MACE is a single-fidelity model trained on MatPES-r2SCAN and MP-ALOE, whereas VMD adopts the multi-fidelity architecture of M3GNet6,94 to simultaneously train on PBE and r2SCAN data from MatPES. For 7net-Omni, we employ the matpes_r2scan channel instead of mp_r2scan, as the former is trained with larger databases. We also computed the same properties using 7net-Omni.mpa as a baseline. In other words, any performance below 7net-Omni.mpa is regarded as equivalent to having no benefit from training at the r2SCAN level.

The benchmark results for r2SCAN-based uMLIPs are presented in Table 2. We first compute energies of crystals whose SCAN values are provided in the Alexandria database95. The crystal structures are fixed and Efor with respect to elemental phases is compared. For cross-domain applications involving surface and molecular configurations, we consider the ADS41 and BMCOS1 benchmark sets used in Cross-domain or cross-functional scenarios section, which also provide r2SCAN data. We further evaluate κ for binary compounds with high symmetry42,96 in comparison with theoretical values at the r2SCAN level (see Methods) as well as experimental data97,98,99. Given the growing interest in meta-GGA functionals for predicting ionic conductivity in solid-state electrolytes, we assess model performance on force prediction along the molecular dynamics (MD) trajectories of argyrodite Li6PS5Cl reported in ref. 43.

As shown in Table 2, 7net-Omni exhibits the best overall accuracy except for the energy estimation of BMCOS1 dataset. The improved agreement with experimental κ for matpes_r2scan channel in comparison with mpa implies that the r2SCAN channel of 7net-Omni can be utilized to obtain thermal properties of materials more accurately than with the conventional PBE functional. Considering the high computational cost of r2SCAN calculations, this represents a significant advance in theoretical approaches. Parity plots in benchmarking r2SCAN can be found in Supplementary Figs. 29–33.

In Table 2, 7net-Omni.mpa yields more accurate Ecoh values for molecular crystals than other r2SCAN models. This may be due to the complete absence of molecular structures in the r2SCAN training data. Adding appropriate DBS at the r2SCAN level may improve these results. Likewise, the MAE for Eads is 0.159 eV on the ADS41 dataset, whereas the corresponding MAE in the PBE channel is less than 0.1 eV (see Fig. 4c). Additional DBS in OC20 and OC22 at the r2SCAN level may further improve accuracy.

We also note in Table 2 that 7net-Omni.matpes_r2scan performs substantially better than MACE, despite both models being trained on largely the same r2SCAN databases. The additional MP-r2SCAN set for 7net-Omni contributes only near-equilibrium configurations with limited data size (see Table 1), so the performance gain can be attributed to effective transfer learning from the abundant PBE-level data with similar PES characteristics. This is also consistent with the data-efficient multi-fidelity framework43.

Inference speed

We compare the inference speed of various uMLIPs on an NVIDIA H100 GPU (94 GB) using MD simulations of diamond Si structures. The performance results are summarized in Fig. 5, where the x-axis denotes the number of atoms and the y-axis represents the simulation throughput in nanoseconds per day when the time step is 1 femtosecond. For libraries accelerating tensor-product operations, 7net-Omni was tested with FlashTP100 (Omni-flash) as well as cuEquivariance (v0.7.0)101 (Omni-cueq), MACE with cuEquivariance (v0.7.0), and NequIP with OpenEquivariance (v0.4.1)102. For models supporting LAMMPS103 execution, the measurements were performed within LAMMPS, whereas those without LAMMPS support (eSEN, UMA, ORB) were evaluated using ASE MD104. Although DPA supports LAMMPS, only the ASE results are reported here due to installation difficulties.

The inference performance of each uMLIP is evaluated using MD simulations of diamond Si. The speed is reported in units of nanoseconds per day (ns/day), measured on a H100 GPU card. Source data are provided as a Source Data file.

The original SevenNet interface integrated with LAMMPS was used for the FlashTP library10, while coupling with the cuEquivariance library was achieved through the ML-IAP package within LAMMPS105. The lowest precision settings were adopted for ORB (float32-high), UMA (tf32), MACE (float32), and NequIP (float32). For the UMA model, additional acceleration was achieved by applying torch.compile and pre-merging linear experts, while activation checkpointing was enabled for systems containing more than 1000 atoms to fit the model into GPU memory8. For the ORB model, torch.compile and cuML-accelerated graph construction106 were applied for further acceleration. All models were tested with increasing system sizes until the GPU memory limit was reached.

In Fig. 5, it is observed that 7net-Omni, when accelerated with cuEquivariance, achieves a competitive throughput of approximately 0.36 ns/day for a system containing 10,000 Si atoms, although this is roughly one-third of the speed of MACE, the fastest model in the present benchmark. In contrast, eSEN, which attains accuracy comparable to that of 7net-Omni in many cases, requires significantly more memory and demonstrates substantially slower performance. While cuEquivariance provides superior inference performance for 7net-Omni compared to FlashTP acceleration in larger systems ( > 3000 atoms), its throughput decreases for smaller systems. This decline is likely due to GPU underutilization or additional auxiliary overhead, indicating potential for further optimization. Notably, GRACE maintains high efficiency even for small system sizes.