- Our series on transformer alternatives begins by exploring the resurgence of RNNs.

- Learn more about DeepSeek V4 and GPT-5.5.

- In the Opinion section, we dig into an interesting topic: the CLI for everything.

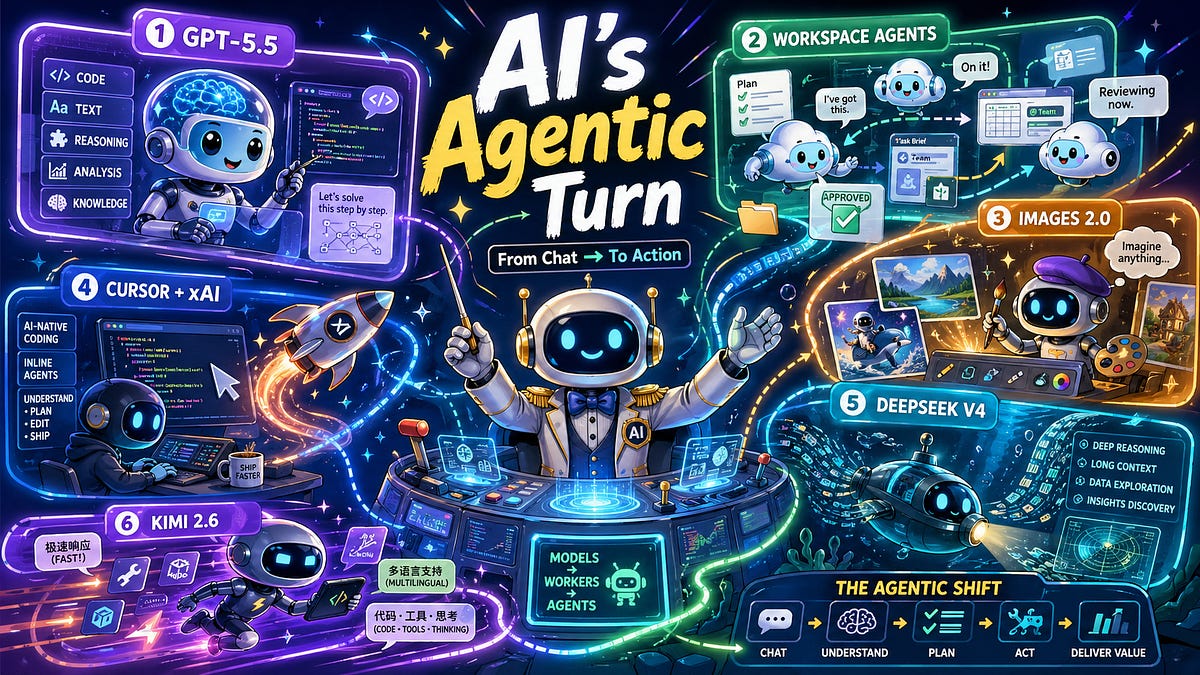

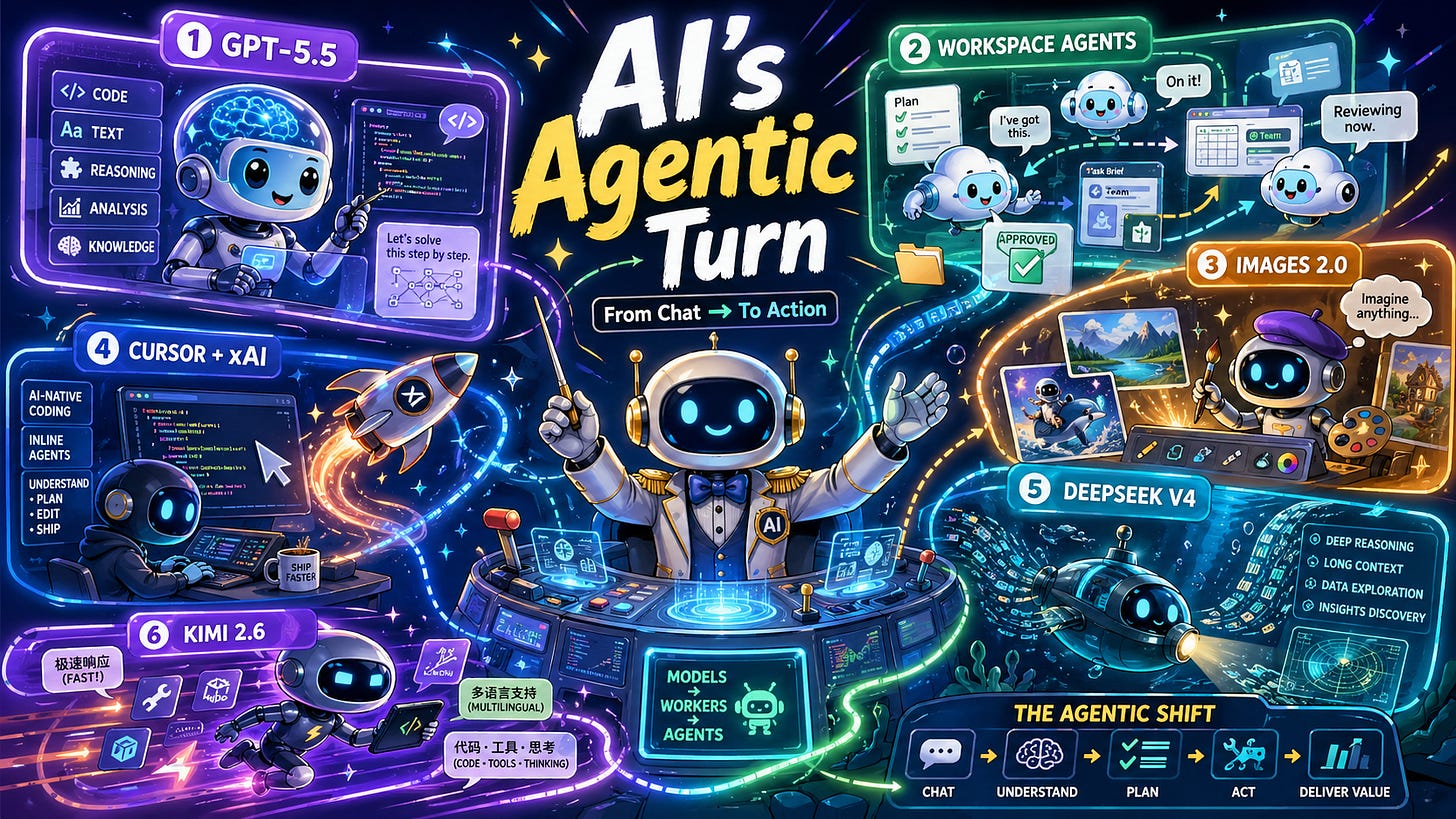

This week in AI felt less like a new model release cycle and more like a change in the foundations of software itself. The important story is not just that new models are more capable; that has been the default trajectory for the past few years. The more interesting development is that models are becoming increasingly intertwined with the systems where work actually gets done: code editors, enterprise workflows, cloud environments, collaboration tools, and agent interfaces.

OpenAI’s GPT-5.5 release is clearly the center of gravity. It represents a continued expansion of frontier-model capabilities across reasoning, coding, tool use, long-context work, and specialized tasks. But the benchmark story is becoming almost secondary. The frontier model is no longer just a model. It is a runtime: the intelligence layer inside coding environments, research workflows, enterprise assistants, and autonomous systems. The model is becoming less like a smarter chatbot and more like a computational engine that can coordinate actions.

OpenAI’s other releases make that point even clearer. Workspace agents push ChatGPT from a personal productivity tool toward shared organizational infrastructure. Codex-powered agents can reside on-premises, run in the cloud, work across tools like ChatGPT and Slack, follow permissions, remember context, and execute long-running workflows. This isn’t just “custom GPTs with enterprise packaging”. It is the beginning of AI as a reusable organizational process. At the same time, ChatGPT Images 2.0 extends AI work from language and code to visual production, with strong text rendering, multilingual support, visual reasoning, and “images with thought,” which lets the model spend more time planning and adjusting before it generates. Taken together, these releases show that OpenAI is making ChatGPT less of an app and more of a multimodal work environment, a place where text, code, images, tools, memory, permissions, and agents come together.

The relationship between xAI and Cursor fits this larger pattern. Cursor has become one of the clearest examples of AI-native software development moving from novelty to infrastructure. Code is an ideal environment for agents because it is explicit, testable, configurable, and economically valuable. Coding agents can suggest, edit, run, debug, and verify. They can work in a loop and measure their progress. Whoever owns that loop will own one of the most important surfaces in the future of AI.

Meanwhile, DeepSeek V4 and Kimi 2.6 demonstrate how the ecosystem of open and semi-open models is rapidly compressing the frontier from below. The new contest isn’t just about chat quality or leaderboard theater. It is about long context, coding performance, tool use, latency, cost, and agent reliability. In other words, the battlefield is moving from intelligence as conversation to intelligence as action.

This is the real theme of the week. AI is becoming a reality. A model is no longer a product in itself. A product is a model plus harnesses, tools, memory, permissions, environment, and feedback loops. We are moving from models that answer questions to systems that perform work.

AI Lab: Google DeepMind, Google Research

summary: This paper introduces Decoupled DiLoCo, an evolution of the DiLoCo framework designed to improve the resiliency of large language model pretraining to hardware failures and network issues. By separating computation between independent, asynchronously communicating “learners,” the framework achieves significant increases in training efficiency (goodput) while maintaining competitive model performance, even in highly fault-prone environments simulated by chaos engineering.

AI Lab: Inclusion AI, Ant Group

summary: This paper presents LLaDA2.0-Uni, a unified discrete diffusion large language model that integrates multimodal understanding and generation within a single framework. By discretizing visual input into semantic tokens and employing block-level masked diffusion, the model performs on par with specialized vision-language models while supporting interleaved generation and inference.

AI Lab: Carnegie Mellon University, Amazon AGI

summary: The authors introduce SkillLearnBench, the first benchmark designed to evaluate continual learning methods for agent skill generation across 20 real-world tasks. Their evaluation shows that while continual learning methods improve performance over a no-skill baseline, they still fall short of human-authored skills and struggle with open-ended tasks.

AI Lab: Meta Superintelligence Labs, University of Washington, New York University, Google DeepMind, Carnegie Mellon University, Princeton University

summary: This paper proposes a test-time scaling framework for long-horizon coding agents that converts noisy rollout trajectories into compact, structured summaries. This representation-centric approach, which uses recursive tournament voting (RTV) for parallel scaling and parallel distillation refinement (PDR) for sequential scaling, significantly improves the performance of frontier models on difficult agentic benchmarks.

AI Lab: Stanford University

summary: SWE-chat introduces the first large-scale dataset of real-world coding agent sessions, capturing over 6,000 interactions, 63,000 user prompts, and 355,000 tool calls from open source developers. Analysis of this data reveals that while “vibe coding” is growing in popularity, it remains costly, introduces more security vulnerabilities, and frequently requires users to interrupt and fix agents.

AI Lab: Microsoft

summary: AutoAdapt is an end-to-end automated framework designed to optimize complex domain adaptation processes for large language models under severe resource constraints. The framework achieves a 25% relative accuracy improvement compared to state-of-the-art automatic baselines by employing a multi-agent debate system to navigate best practices and LLM-based surrogates for efficient hyperparameter tuning.

A new version of DeepSeek is here, with a 1M context length and strong agentic capabilities.

Kimi 2.6 has been released, with agentic coding as its marquee feature.

OpenAI released greatly enhanced image generation capabilities in ChatGPT.

OpenAI announced Workspace Agents, a new experience for creating agents that can handle complex workflows within ChatGPT.

Hugging Face open-sourced ML Intern, an agent that researches and writes ML-related code.

- SpaceX pre-empted Cursor’s nearly closed $2 billion funding round at a $50 billion valuation by offering a $60 billion post-IPO acquisition option. As SpaceX scrambles to establish itself as an AI company following the xAI merger, it will pay an interim $10 billion in “cooperation” fees.

- Infosys and OpenAI announced a strategic partnership that combines Infosys Topaz Fabric with OpenAI’s Codex and frontier models to power enterprise software engineering, legacy modernization, and DevOps automation at scale.

- NeoCognition, an AI agent lab founded by Professor Yu Su of The Ohio State University with co-founders Xiang Deng and Yu Gu, emerged from stealth with a $40 million seed round co-led by Cambium Capital and Walden Catalyst Ventures. The lab is working on self-learning agents that develop a model of the world for a given work environment.

- Anthropic received an additional $5 billion from Amazon (bringing Amazon’s total investment to $13 billion) in exchange for a 10-year AWS commitment of more than $100 billion, covering up to 5GW of Trainium2 through Trainium4 capacity for training and serving Claude.

- Microsoft pledged A$25 billion (approximately US$18 billion) to Australia by the end of 2029. The company will expand Azure AI supercomputing capacity by more than 140%, strengthen its cyber defense efforts with ASD, and provide work-ready AI training to 3 million Australians by 2028.

- Project Prometheus, Jeff Bezos and Vik Bajaj’s physics AI lab, closed a $10 billion round at an approximately $38 billion valuation. JPMorgan and BlackRock are among the participants, and the holding company is considering up to $100 billion to buy an industrial business that would feed business data into the lab’s models.

- Bret Taylor and Clay Bavor’s Sierra acquired Paris-based, YC-backed Fragment. The company’s third acquisition in 2026, after Opera Tech and Receptive AI, brings co-founders Olivier Moindrot and Guillaume Genthial to the team and strengthens Sierra’s agent development efforts in France.

- ComfyUI raised $30 million at a $500 million valuation. The round, led by Craft with Pace Capital, Chemistry, and TruArrow, builds on an open source community of 4 million users and more than 60,000 nodes, with the aim of making node-based workflows the de facto control layer for production-grade generative media.

- Google committed up to $40 billion to Anthropic: $10 billion at the current valuation of $350 billion, with another $30 billion tied to performance milestones. In addition, the Google Cloud deal will be extended to provide 5GW of TPU-based compute over the next five years.

- Meta signed a multi-year, multi-billion dollar deal to deploy tens of millions of AWS Graviton5 cores in its compute portfolio for agentic AI inference workloads, becoming one of AWS’s largest Graviton customers and validating the hypothesis that agentic AI is driving demand back to CPUs.