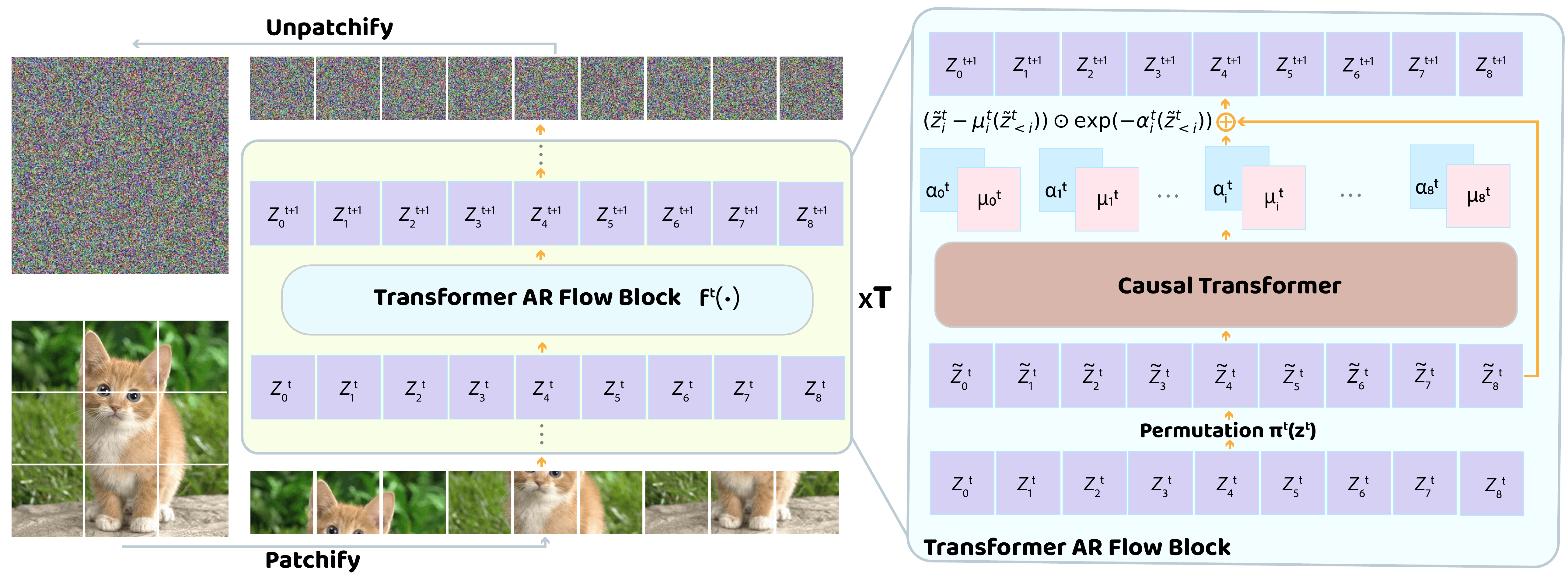

Normalizing Flows (NFs) are likelihood-based models for continuous inputs. They have shown promising results on both density estimation and generative modeling tasks, but have received relatively little attention in recent years. This work shows that NFs are more powerful than previously believed. We present TarFlow, a simple and scalable architecture that enables highly performant NF models. TarFlow can be thought of as a Transformer-based variant of Masked Autoregressive Flows (MAFs): it consists of a stack of autoregressive Transformer blocks operating on image patches, with the autoregression direction alternating between layers. TarFlow is straightforward to train end-to-end and can model and generate pixels directly. We also propose three key techniques for improving sample quality: Gaussian noise augmentation during training, a post-training denoising procedure, and an effective guidance method for both class-conditional and unconditional settings. Putting these together, TarFlow sets new state-of-the-art results on image likelihood estimation, beating the previous best methods by a large margin, and, for the first time with a standalone NF model, generates samples whose quality and diversity are comparable to those of diffusion models.
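To make the MAF-style structure concrete, here is a minimal NumPy sketch of an affine autoregressive flow over a sequence of patches, with the autoregression direction flipped on every other layer. It is an illustration under stated assumptions, not the authors' implementation: the conditioner is a strictly causal masked linear map standing in for a causal Transformer block, the tanh-bounded log-scale is an arbitrary stabilizing choice, and the class names `CausalAffineLayer` and `TinyAutoregressiveFlow` are hypothetical. It shows the key asymmetry of such flows: the data-to-noise direction (used for likelihood training) runs in one parallel pass, while sampling inverts each layer one token at a time.

```python
import numpy as np


class CausalAffineLayer:
    """One MAF-style affine autoregressive flow step over a patch sequence.

    Assumption: the conditioner is a strictly causal masked linear map,
    a hypothetical stand-in for a causal Transformer block.
    """

    def __init__(self, seq_len, dim, rng, reverse=False):
        self.reverse = reverse  # flip the autoregression direction
        # Strictly lower-triangular mask: token t only sees tokens s < t.
        self.mask = np.tril(np.ones((seq_len, seq_len)), k=-1)
        self.w_mu = rng.normal(scale=0.1, size=(seq_len, seq_len))
        self.w_alpha = rng.normal(scale=0.1, size=(seq_len, seq_len))
        self.proj = rng.normal(scale=0.1, size=(dim, dim))

    def _params(self, x):
        # Shift mu and log-scale alpha for every token, from earlier tokens only.
        ctx = x @ self.proj
        mu = (self.mask * self.w_mu) @ ctx
        alpha = np.tanh((self.mask * self.w_alpha) @ ctx)  # bounded log-scale
        return mu, alpha

    def forward(self, x):
        """Data -> noise in one parallel pass; returns (z, log|det J|)."""
        if self.reverse:
            x = x[::-1]
        mu, alpha = self._params(x)
        z = (x - mu) * np.exp(-alpha)
        if self.reverse:
            z = z[::-1]  # a permutation does not change |det J|
        return z, -alpha.sum()

    def inverse(self, z):
        """Noise -> data, one token at a time (autoregressive sampling)."""
        if self.reverse:
            z = z[::-1]
        x = np.zeros_like(z)
        for t in range(z.shape[0]):
            mu, alpha = self._params(x)  # row t depends only on x[:t]
            x[t] = z[t] * np.exp(alpha[t]) + mu[t]
        if self.reverse:
            x = x[::-1]
        return x


class TinyAutoregressiveFlow:
    """Stack of layers that alternate the autoregression direction."""

    def __init__(self, seq_len, dim, n_layers, seed=0):
        rng = np.random.default_rng(seed)
        self.shape = (seq_len, dim)
        self.layers = [CausalAffineLayer(seq_len, dim, rng, reverse=(i % 2 == 1))
                       for i in range(n_layers)]

    def forward(self, x):
        logdet = 0.0
        for layer in self.layers:
            x, ld = layer.forward(x)
            logdet += ld
        return x, logdet

    def log_prob(self, x):
        # Change of variables with a standard Gaussian prior on z.
        z, logdet = self.forward(x)
        log_pz = -0.5 * np.sum(z ** 2) - 0.5 * z.size * np.log(2 * np.pi)
        return log_pz + logdet

    def inverse(self, z):
        for layer in reversed(self.layers):
            z = layer.inverse(z)
        return z

    def sample(self, rng):
        return self.inverse(rng.standard_normal(self.shape))


if __name__ == "__main__":
    flow = TinyAutoregressiveFlow(seq_len=4, dim=3, n_layers=4)
    rng = np.random.default_rng(1)
    x = rng.normal(size=(4, 3))
    # Training-time Gaussian noise augmentation; sigma = 0.05 is arbitrary here.
    x_noisy = x + 0.05 * rng.normal(size=x.shape)
    print(flow.log_prob(x_noisy))
    z, _ = flow.forward(x_noisy)
    print(np.allclose(flow.inverse(z), x_noisy))
```

Because each layer's Jacobian is block-triangular with diagonal entries `exp(-alpha)`, the log-determinant is just `-alpha.sum()`, which is what makes exact likelihood training cheap. Alternating the direction lets tokens early in one layer's ordering condition on tokens that came later in the previous layer's ordering.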

Figure 1: Samples generated by TarFlow at various resolutions.

Figure 2: TarFlow model architecture.