This section reviews literature on methods for optimizing inventory systems, including work that incorporates the reduction of carbon emissions. Optimization in inventory management uses mathematical models, metaheuristics, and machine learning algorithms to pursue goals such as minimizing costs and maximizing efficiency.

Mathematical and heuristic models with environmental considerations

Mathematical inventory models date back to the economic order quantity (EOQ) model introduced by Ford W. Harris in 1913 and have since been extended to solve inventory management problems of varying complexity. The emergence of non-linear, multi-objective, and large-scale problems motivated the development of heuristic and metaheuristic methods, which yield satisfactory approximate solutions to such problems.

Ahmadini et al. (2021) proposed a multi-objective inventory and production model with backordering that integrated green investment decisions to mitigate environmental impact. The model aimed to maximize the profit relative to total backordered quantity, minimize holding costs, minimize total waste generated in each production cycle, and minimize the penalty costs related to green investment. They conducted a Pareto-based analysis to study the tradeoffs among the objectives and found that higher levels of green investment reduced waste and emissions while improving profits when backordering was significant. Their results showed that scenarios with greater environmental investment produced lower waste and emissions but had higher investment penalties, whereas scenarios with limited investment reduced costs but increased waste5.

Karampour et al. (2022) modeled a bi-objective green vendor-managed inventory problem that maximizes profit and minimizes transportation carbon emissions, solving it with the non-dominated sorting genetic algorithm II (NSGA-II), the multi-objective Keshtel algorithm (MOKA), and the multi-objective red deer algorithm (MORDA). Their results showed that MORDA outperformed both MOKA and NSGA-II in terms of solution quality. Their sensitivity analysis also showed that allowing some backorders can reduce shipping frequency without eroding profitability6.

Pilati et al. (2024) proposed a bi-objective mathematical model that minimizes both the costs and the carbon emissions of an inventory system with perishable goods. They used the Pareto front to find the best tradeoff between cost and sustainability goals. The two goals define the anchor points of the front: one solution focused on reducing costs and the other on reducing emissions. Their findings showed an 8% reduction in carbon emissions with an increase of 3% in costs when moving away from the anchor points7.
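To make the notions of a Pareto front and its anchor points concrete, the following is a minimal sketch of non-dominated filtering for two minimized objectives. It is a generic illustration, not the model of Pilati et al.; the function name `pareto_front` and the (cost, emissions) values are hypothetical.

```python
from typing import List, Tuple

# (total cost, carbon emissions); both objectives are minimized.
Solution = Tuple[float, float]


def pareto_front(solutions: List[Solution]) -> List[Solution]:
    """Return the non-dominated solutions, sorted by increasing cost."""
    front = []
    for cand in solutions:
        # cand is dominated if a different solution is no worse on both objectives.
        dominated = any(
            other[0] <= cand[0] and other[1] <= cand[1] and other != cand
            for other in solutions
        )
        if not dominated:
            front.append(cand)
    return sorted(set(front))


# Hypothetical (cost, emissions) pairs, for illustration only.
candidates = [(100.0, 50.0), (103.0, 46.0), (110.0, 45.0), (108.0, 48.0), (120.0, 47.0)]
front = pareto_front(candidates)

# Anchor points: the best solution for each objective taken alone.
cost_anchor = min(front, key=lambda s: s[0])
emission_anchor = min(front, key=lambda s: s[1])
```

In this toy data, moving from the cost anchor to the neighboring point on the front trades a small cost increase for a larger emission reduction, which is the kind of tradeoff a Pareto analysis is meant to expose.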

Ghosh and Mahapatra (2024) used a bi-objective optimization model that simultaneously minimizes total expected costs and emissions within an integrated supply chain with random demand, backorders, and lost sales. The authors' model optimized variables such as order quantity, re-order level, production rate, shipment number, and vehicle velocity. The methodology employed the NSGA-II evolutionary algorithm, with a Taguchi experiment for parameter tuning, to identify a Pareto front of non-dominated solutions. The study concluded that increasing vehicle velocity reduced the total expected cost, whereas total emissions initially decreased and then rose after a threshold point8.

Inventory models under carbon emission policies

Zakeri et al. (2015) examined the tactical and operational planning implications of two regulatory schemes: carbon tax and cap-and-trade. The authors used a mixed-integer linear programming (MILP) model to analyze actual data from an Australian dining furniture company. Their results showed that a 34% emissions reduction goal resulted in a 26.72% increase in supply chain costs. The authors concluded that while carbon trading was superior for achieving emissions reductions and maintaining service levels, carbon taxes may be preferable from an uncertainty perspective because trading costs were subject to volatile market conditions9.
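The difference between the two schemes can be illustrated with the simplified cost expressions commonly used in the carbon-policy inventory literature. The sketch below is not the MILP of Zakeri et al.; the function names, parameters, and numbers are hypothetical, and a single allowance price for buying and selling is assumed.

```python
def cost_under_carbon_tax(operating_cost: float, emissions: float, tax_rate: float) -> float:
    """Every unit of emissions is charged at a fixed tax rate."""
    return operating_cost + tax_rate * emissions


def cost_under_cap_and_trade(
    operating_cost: float, emissions: float, cap: float, allowance_price: float
) -> float:
    """Emissions above the cap require buying allowances; unused allowances are sold.

    Assumes one market price for both buying and selling (a simplification).
    """
    return operating_cost + allowance_price * (emissions - cap)


# Hypothetical figures, for illustration only.
tax_cost = cost_under_carbon_tax(1000.0, emissions=120.0, tax_rate=2.0)
trade_cost_over_cap = cost_under_cap_and_trade(1000.0, emissions=120.0, cap=100.0, allowance_price=2.0)
trade_cost_under_cap = cost_under_cap_and_trade(1000.0, emissions=80.0, cap=100.0, allowance_price=2.0)
```

Under a tax, the rate is fixed in advance, whereas under cap-and-trade the allowance price is set by the market, which is the source of the cost uncertainty noted by the authors.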

Purohit et al. (2016) investigated the inventory lot-sizing problem within a carbon cap-and-trade system using a mixed-integer linear programming (MILP) model. They aimed to determine optimal replenishment timings and stock levels under non-stationary stochastic demand and cycle service level constraints. Their results showed that increasing the carbon price reduced the average total cost, total inventory, and total emissions. They concluded that cycle service levels and demand coefficients of variation had a significant impact on performance measures regardless of demand variability10.

Huang et al. (2020) proposed a mathematical model using the Lagrange multiplier method to analyze how carbon emission policies affected inventory decisions, including order quantity, delivery schedules, shipment frequency, and green investment levels. The model integrated logistics decisions with policy constraints and aimed to minimize total costs subject to emission reduction constraints. They showed that policy selection depended on the efficiency of a firm’s emission reduction technology and on the rate at which the benefits from green investment diminished over time. The study found that strict emission policies worked best when companies could reduce emissions efficiently3.

Mishra et al. (2021) proposed a sustainable economic order quantity (SEOQ) model with controllable carbon emissions to secure a satisfactory profit for greenhouse farms under multiple backorder and deterioration cases. The authors showed that the case combining carbon tax and cap with partial backlogging yielded a higher profit, with the highest cycle time, the lowest value of the fraction length period, and the lowest green technology investment cost11.

Yadav and Khanna (2021) proposed a sustainable inventory model for perishable products that integrated expiration effects, price-sensitive demand, and a carbon-tax policy to determine the optimal selling price and replenishment cycle for maximizing total profit while minimizing carbon emissions. Their results indicated that the carbon-tax policy achieved a better trade-off between profit and emissions than operating without it. The comparative analysis demonstrated that carbon-tax policies can reduce emissions by roughly 50% per month while decreasing profits by 7–8%12.

Ghosh (2022) explored the optimization of a two-echelon supply chain, considering the impact of random defect rates and random demand under a strict carbon cap policy. The researcher formulated a mixed-integer non-linear programming (MINLP) problem to determine the optimal production-inventory policy, where production emissions depended on the production rate and transportation emissions depended on vehicle velocity. Using Lingo optimization software, the study established a base case with an optimized production rate of 1509.12 units and a vehicle velocity of 100 km/hour, resulting in a total cost of $41,164 under a carbon limit of 600 tons/year. The study concluded that organizations typically exhaust their allotted carbon limit to minimize costs, and that reductions in lead times and defect rates were the most effective strategies for lowering total supply chain costs13.

Reinforcement learning (RL) in inventory management

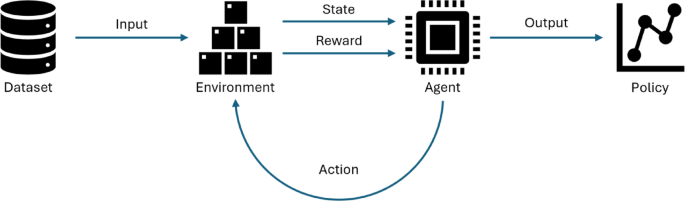

Recent studies have applied machine learning techniques to inventory management problems, leveraging predictive models and optimization algorithms to improve decision-making under uncertainty. However, traditional machine learning approaches often rely on static datasets and predefined rules, which limits their ability to adapt to changing environments. To overcome these limitations, researchers have turned to reinforcement learning (RL), in which an agent learns a decision policy from experience by interacting with its environment. RL has been employed to tackle inventory management challenges such as multi-echelon inventory optimization, multiproduct Economic Order Quantity (EOQ), multiple perishable goods, and inventory management under carbon-trading policy constraints.

Kara and Dogan (2018) used the Q-learning and SARSA algorithms to solve an inventory management problem for perishable products with random demand and deterministic lead time, with the aim of minimizing total cost. The problem was solved under two replenishment policies: one based on product age and one based on stock quantity. They assumed a single perishable product and a supplier with infinite capacity. Their results showed that replenishment policies that considered age information, learned using Q-learning and SARSA, outperformed traditional stock-level-dependent approaches in terms of cost. These algorithms provided better results when demand had high variation and products had short lifespans14.
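For readers less familiar with the two algorithms, the sketch below contrasts the standard tabular Q-learning and SARSA update rules. It is a textbook illustration, not the implementation of Kara and Dogan; the state and action encoding (stock level, order quantity), the reward, and the step-size and discount values are hypothetical.

```python
from collections import defaultdict
from typing import Dict, Hashable, Iterable, Tuple

QTable = Dict[Tuple[Hashable, Hashable], float]


def q_learning_update(Q: QTable, s, a, r: float, s_next, actions: Iterable,
                      alpha: float = 0.1, gamma: float = 0.95) -> None:
    """Off-policy update: bootstrap from the best action in the next state."""
    best_next = max(Q[(s_next, b)] for b in actions)
    Q[(s, a)] += alpha * (r + gamma * best_next - Q[(s, a)])


def sarsa_update(Q: QTable, s, a, r: float, s_next, a_next,
                 alpha: float = 0.1, gamma: float = 0.95) -> None:
    """On-policy update: bootstrap from the action actually taken next."""
    Q[(s, a)] += alpha * (r + gamma * Q[(s_next, a_next)] - Q[(s, a)])


# Hypothetical example: state = stock level, action = order quantity,
# reward = negative cost incurred in the period.
actions = [0, 1]
Q_ql: QTable = defaultdict(float)
Q_sarsa: QTable = defaultdict(float)
for table in (Q_ql, Q_sarsa):
    table[(1, 0)] = 2.0
    table[(1, 1)] = 4.0

q_learning_update(Q_ql, s=0, a=1, r=-1.0, s_next=1, actions=actions, alpha=0.5, gamma=0.5)
sarsa_update(Q_sarsa, s=0, a=1, r=-1.0, s_next=1, a_next=0, alpha=0.5, gamma=0.5)
```

The only difference is the bootstrap term: Q-learning uses the greedy value of the next state, while SARSA uses the value of the next action chosen by the current (exploring) policy.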

Wang, Q. et al. (2022) solved a lost sales inventory system as well as a multi-echelon inventory system using the double deep Q-network (DDQN) algorithm. The authors compared the results in terms of demand fulfillment and cost reduction with standard policies such as (s, S), the capped base-stock policy, and the constant-order policy. Their results showed that the DDQN algorithm reached better solutions than the benchmark policies, with cost reductions of up to 4%15.

Selukar et al. (2022) investigated the use of single-agent RL algorithms, namely Advantage Actor Critic (A2C) and Deep Deterministic Policy Gradient (DDPG), in reducing costs for the inventory management system of multiple perishable products with multiple lifetimes and inventory limitation factors. They also used models of real-world parameters such as overdue cost, shortage cost, lead time, and corruption costs. Their research showed that the single-agent RL algorithms could reduce inventory cost and spoilage rate of the goods when the products’ lead times and lifecycles were known, along with the demand distribution16.

Kaynov et al. (2024) used the proximal policy optimization (PPO) algorithm to solve a one-warehouse multi-retailer inventory system. The problem was solved under a lost sales case and a backordering case. Compared with general-purpose rationing/allocation policies, their approach improved total expected costs by 1–3% in the lost sales case and by 12–20% in the backordering case17.

Zhou et al. (2024) proposed a multi-agent reinforcement learning model to identify cost-effective inventory policies using a modified twin delayed deep deterministic policy gradient (TD3). They introduced value decomposition and a modified experience buffer to the algorithm, calling it EM-VDTD3, to avoid the poor performance that results from the random action exploration usually done in the learning process. Their results showed that the proposed method outperformed the benchmark methods in scalability and cost efficiency18.

Tian et al. (2024) proposed a deep reinforcement learning–based replenishment model called IACPPO (Integrated Advantage Actor-Critic and PPO), which combined the Advantage Actor–Critic (A2C) and Proximal Policy Optimization (PPO) algorithms for multi-item warehouse inventory replenishment. Their approach used a continuous action space to make replenishment decisions for all items simultaneously, based on historical demand, backlog and shortage costs, and inventory states. Experiments on two real-world datasets (e-commerce warehouse data and pharmaceutical sales records) showed that IACPPO achieved lower cumulative inventory costs and better average cost growth rates than forecasting models (e.g., XGBoost, GRU, LSTM, DARNN, Prophet) and several DRL baselines (DDPG, SAC, TD3, PPO, A2C, LSTM-DDPG)19.

RL in inventory management with environmental considerations

Fallahi et al. (2022) integrated Q-learning with two metaheuristics, particle swarm optimization and differential evolution, to solve an EOQ problem with multiple reusable items, which reduced the environmental impact of the inventory system. In their approach, the Q-learning algorithm controlled the parameter values of the metaheuristic algorithms. Their study demonstrated that integrating Q-learning significantly enhanced the parameter tuning of both metaheuristics, thereby improving overall optimization performance20.

Wang, Q. and Yang, Y. (2024) proposed a deep reinforcement learning (DRL) algorithm based on PPO Lagrange. Their algorithm controlled both the inventory order quantity and the ordering of carbon allowances under a carbon trading policy. It addressed the challenge of coordinating these ordering decisions to minimize total costs while meeting emission constraints. Their results showed that the proposed method performed better than traditional inventory policies in terms of costs and resulting emissions, and highlighted the importance of carbon pricing in contracts2.

Wang, J. et al. (2025) proposed a proximal policy optimization (PPO) model to reduce both total costs and carbon emissions under a carbon tax policy for a multi-echelon closed-loop supply chain of waste electrical and electronic equipment. Their results showed that, compared to a traditional (s, Q) inventory policy, the PPO-based policy achieved a cost improvement of 9.1% and an emission reduction of 46.7%. The authors conducted sensitivity analysis using different carbon tax rates across scenarios with varying recycling quantities and stochastic product demand. Their results indicated that increasing the carbon tax rate generally led to lower emissions but higher operational costs, while lower tax rates reduced cost pressure but did not provide enough incentive for emission reduction21.

Identified research gap

Previous studies have applied machine learning methods to inventory management to address complex and multi-objective decision problems, often reporting reduced computational time compared to traditional mathematical and metaheuristic approaches. Reinforcement learning, a subset of machine learning, enables sequential decision-making under uncertainty. Despite this potential, its application to inventory management remains limited, particularly in studies that jointly consider economic and environmental objectives. Existing reinforcement learning applications have examined inventory settings such as multi-echelon systems, perishable products, and carbon trading mechanisms operating under regulatory constraints. Commonly adopted algorithms include Proximal Policy Optimization (PPO), Advantage Actor Critic (A2C), Double Deep Q Network (DDQN), and hybrid reinforcement learning models, with a primary focus on cost and service-level optimization.

The literature further indicates that studies treating carbon emissions as an independent objective in inventory management have largely relied on mathematical formulations and metaheuristic techniques. In contrast, reinforcement learning-based approaches typically incorporate carbon emissions through external regulatory mechanisms, such as emission trading or taxation schemes. An analysis of reward structures in existing reinforcement learning studies shows that carbon emissions are commonly embedded within cost-related objectives, reflecting regulatory compliance. Consequently, there is limited research on reinforcement learning-based inventory optimization that models carbon emissions as a separate objective in unconstrained decision settings.

Motivated by this gap, the present study develops replenishment policies for an inventory system within an unconstrained multi-objective framework that maximizes profitability while minimizing carbon emissions. Carbon emissions are incorporated as a distinct component of the reward function, allowing direct examination of the trade-off between the two objectives without relying on emission policies to control emissions. The inventory problem is formulated as a Markov Decision Process, enabling reinforcement learning agents to learn adaptive ordering policies under uncertainty. Four reinforcement learning algorithms are evaluated: PPO, A2C, DDQN, and Phasic Policy Gradient (PPG). While PPO, A2C, and DDQN have previously been applied to inventory management problems, PPG is, to the authors' knowledge, examined in this context for the first time. The study aims to compare the performance of these algorithms and assess their suitability for bi-objective inventory optimization.
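As a rough illustration of what it means for emissions to enter the reward as a separate term rather than as a cost, the sketch below shows one transition of a toy inventory Markov Decision Process. It is not the formulation developed in this paper: the weighted-sum form of the reward, the zero lead time, the lost-sales assumption, and every name and parameter value (`step`, `w_profit`, `w_emission`, prices, emission factors) are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class StepResult:
    next_inventory: int
    profit: float
    emissions: float
    reward: float


def step(inventory: int, order_qty: int, demand: int,
         w_profit: float = 0.5, w_emission: float = 0.5,
         price: float = 10.0, unit_cost: float = 6.0, holding_cost: float = 1.0,
         order_emission: float = 0.5, holding_emission: float = 0.25) -> StepResult:
    """One period: order arrives immediately, unmet demand is lost (assumptions)."""
    available = inventory + order_qty
    sales = min(available, demand)
    next_inventory = available - sales

    # Economic objective: revenue minus purchasing and holding costs.
    profit = price * sales - unit_cost * order_qty - holding_cost * next_inventory
    # Environmental objective: emissions from ordering and from holding stock.
    emissions = order_emission * order_qty + holding_emission * next_inventory

    # Emissions are weighted directly, not converted to money via a tax or permit price.
    reward = w_profit * profit - w_emission * emissions
    return StepResult(next_inventory, profit, emissions, reward)


# Hypothetical transition, for illustration only.
result = step(inventory=5, order_qty=10, demand=12)
```

Because the two terms are kept apart, changing the weights shifts the learned policy along the profit-emissions trade-off without introducing any regulatory mechanism.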

The remainder of the paper is structured as follows. Section 3 presents the problem formulation. Section 4 describes the reinforcement learning algorithms. Section 5 reports and discusses the results. Section 6 examines the impact of reward weight selection. Section 7 highlights the managerial implications of the results. Section 8 concludes the paper.