Photo-Illustration: Intelligencer; Photo: Chris Unger/Getty Images

Meta.ai is a new AI and social app meant to compete with ChatGPT and others; it launched a few months ago. The app, which is advertised on Meta's other platforms, lets users chat by text or voice, generate images, and, more recently, restyle videos. It also has a sharing feature and a discovery feed, designed in a way that led countless users to unwittingly post extremely personal information to a public feed aimed at strangers.

The issue was flagged in May by, among others, Katie Notopoulos at Business Insider, who found people seeking help with premiums, private health issues, and legal advice after layoffs. Over the next few weeks, Meta's experiment in AI-driven user confusion produced strange and distressing examples of people who didn't know they were sharing their AI interactions publicly. Incarcerated people chatted about possible cooperation with authorities. Users with identifiable handles chatted about “red bulges on the inner thighs.” Things got darker from there.

It appears that Meta has recently adjusted its sharing flow, or has at least somewhat cleaned up the discovery page on Meta.ai, but the public posts are still odd and frequently troubling. This week, people testing the new “Restyle” feature were posting videos containing faces, crying young children, and themselves in their trucks, in among strange images generated by prompts such as “Images of P Diddy at Young Girls' Birthday Party” and “22,000-square-foot Dream House in Milton, Georgia.” The overall awkwardness of the design here is made more galling by its lack of purpose. Who is this feed for? Does Meta imagine that a feed of non-sequitur slop provides a solid foundation for a new social network?

Privacy violations like these, whether accidental or, in Meta's case, the product of careless design, are rare and unsettling, but they are almost always illuminating. In 2006, AOL released a trove of inadequately anonymized search histories for research purposes, offering a glimpse into the intimate and incriminating data that people were beginning to share with their search boxes: medical questions; relationship questions; questions about how to commit murder or other crimes. A search for home surveillance equipment was soon followed by questions about how to make your partner fall in love. Much of the search material was mundane, but it still shouldn't have been released. Other logs, like one that skipped from “married but in love with another person” to “used me for sex” to “someone can get hepatitis from sexual contact,” gave a dizzying sense of what companies like these would soon know about basically anyone.

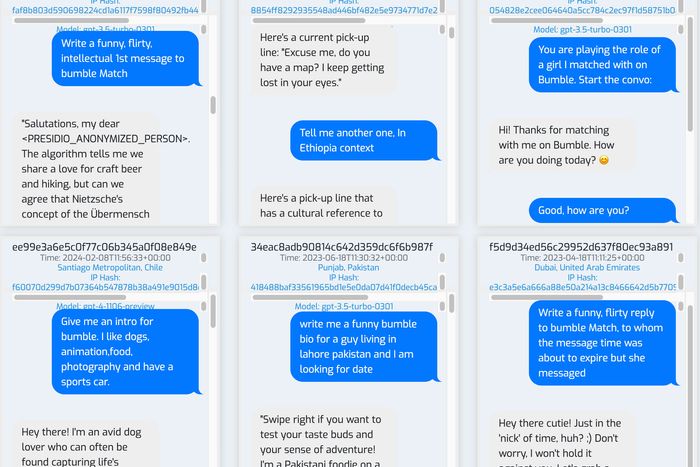

By design, social media platforms provide a public window into users' personal lives. Chatbots, on the other hand, are more like search engines: spaces in which users assume they have privacy. We have seen a few small caches of similar data that were made public. These revealed the extent to which people turn to services like ChatGPT for homework help and sexual material, but the gap between what AI companies know about how people use their products and what they share remains wide. That kind of use is not, for example, part of OpenAI's pitch to investors and customers, but it is a rather common use case.

Photo-Illustration: Intelligencer; Photo: WildChat/Allen Institute for AI

Meta's awful product design closes this gap a little more, for better or worse. Setting aside the most shocking accidental shares, and ignoring the infinite supply of repetitive images and stylized video clips, there is some illuminating material here. Voice chats in particular (for weeks, users were sharing recordings of their conversations with Meta's AI, which Meta was promoting) tell complex stories about how people are engaging with chatbots for the first time.

Many people are seeking help with tasks that are otherwise annoying or difficult. I heard one man talk Meta AI through writing a job listing for an assistant at a small-town dental clinic, which ultimately left him happy. In another conversation promoted by Meta, a woman co-wrote an obituary for her husband with Meta.ai, adding details as she went. There was obvious homework “help” for people with young-sounding voices. Other conversations went on longer. A considerable number followed the same up-and-down trajectory, underscored by changes in the speaker's tone of voice. The user writing the dental job listing started out stiffly and loosened up as he got what he wanted. When he asked Meta AI to share it, however, he grew frustrated: it couldn't post the listing to other Meta platforms. A woman seeking to help a friend who had been accused of theft by a retailer's surveillance system was relieved to have an audience and got plenty of generally helpful advice. When it came to practical steps, however, Meta.ai became vaguer and the user became more annoyed. Many conversations resemble satisfying customer service interactions until, at the end, a twist leaves users feeling stupid for thinking things were going well in the first place. Meta.ai had fooled them. That's not the best first impression.

But far more common than these transactional conversations were audio recordings of people seeking something like therapy, some of whom were clearly in distress. These are users who, presented with an ad for a free AI chatbot, began confiding in it as if they were talking to a trusted professional or a close friend. One man, in tears and missing his former son-in-law, asked Meta.ai to “tell him that I love him,” and was grateful when the conversation ended. Over the course of a much longer conversation, a woman gradually calmed down as she asked for help coming out of a panic attack. In a short chat, a man suggested he was thinking about divorce, then concluded that he had, in fact, decided on divorce. Some users chatted to pass the time. Many recordings contained clear evidence of a mental health crisis, with incoherent, delusional exchanges about religion, surveillance, addiction, and philosophy. These users often left their chats satisfied, in contrast to those seeking help with tasks and productivity. Perhaps they were indulged or affirmed (chatbots are nothing if not clever), but one gets the sense that they felt, more or less, listened to.

Such conversations make for strange and unsettling listening, especially in the context of Mark Zuckerberg's recent suggestion that chatbots might help resolve the “loneliness epidemic” (which his platforms definitely had nothing to do with creating. Why do you ask?). Here we get a glimpse of what he and other AI leaders can see very clearly in their much larger troves of data, but can speak about only in the oblique terms of “personalization” and “memory.” For some users, chatbots are software tools with conversational interfaces, to be judged useful, useless, fun, or boring. For others, the illusion is the whole point.