Most big tech companies have placed age restrictions on their powerful chatbots, but that hasn't stopped some toy companies from claiming to use OpenAI's and Google's AI models to power their products.

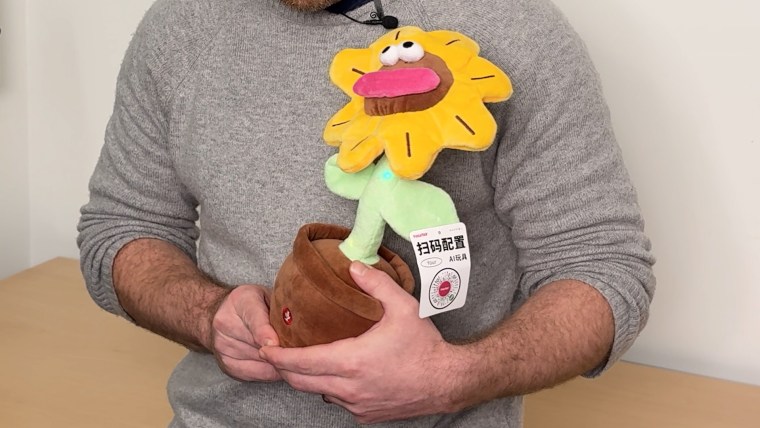

A report released Tuesday by a consumer watchdog group found that more than 20 toys advertised online were marketed as containing leading AI models, despite company rules that bar children from using those models.

Toy companies appear to have found gaps in AI companies' age-restriction policies, according to a report from the Public Interest Research Group Educational Fund (PIRG). Although young people are barred from using such models and their chatbots, developers (people and companies that build on AI models) often do not face similar restrictions.

PIRG said it was able to sign up for developer access to AI models from Google, OpenAI and xAI with "no substantive review" of whether it intended to target its service at children. Anthropic, by contrast, asked PIRG whether it planned to develop products for minors.

On developer platforms from Google, Anthropic, and OpenAI, PIRG was able to build a system designed to behave like an AI-powered teddy bear for children.

"Some AI companies say their models are not designed for children," RJ Cross, the report's lead author and a PIRG researcher, told NBC News. "But they allow third-party developers to use them in toys, and they aren't involved in any of the safety issues."

In response to a request for comment, an OpenAI spokesperson said in a statement: “Minors are entitled to strong protections, and we have strict policies that all developers are required to abide by. We take enforcement action against developers if we determine they violate our policies. Those policies prohibit the use of our services to exploit, endanger, or sexualize anyone under the age of 18.”

“These rules apply to all developers who use our APIs, and we implement classifiers to ensure our services are not used to harm minors,” the spokesperson said, referring to the application programming interfaces (APIs) that developers use to interact with the company’s services.

An Anthropic spokesperson told NBC News that users of the company's AI system must be at least 18 years old, because young people face a higher risk of negative outcomes from conversations with chatbots. The spokesperson said developers must put age-appropriate guardrails in place and disclose to users that their products use AI, and stressed that developers must follow Anthropic's Acceptable Use Policy, which prohibits many types of dangerous or harmful behavior.

Google and xAI did not respond to requests for comment.

The AI boom has created a new market for products that incorporate leading chatbots, as technology companies compete to attract developers. AI toys flooded store shelves last holiday season, but experts have warned that they raise a range of safety concerns, as an NBC News investigation found.

Today's AI toys rely on a small number of technology companies for their interactive features. Rather than building AI into the toys themselves, most toymakers send data over the internet to AI companies, whose systems send responses back to the toys.

Concerns about the use of AI chatbots by minors have spurred action from tech companies, many of which have placed age limits on users.

OpenAI says its flagship system, ChatGPT, is “intended for people 13 and older,” and that it has also built a version for people under 18 that handles sensitive topics differently.

Google says users must be 13 or older to use its Gemini AI products. Google's terms also prohibit organizations from using its products in services or businesses that are "targeted or likely to be accessed by individuals under the age of 18."

PIRG identified more than 20 unique toys sold online that claim to use OpenAI's system, and five toys that claim to use Google's system, which appear to directly violate Google's terms of service by targeting children. However, some toys misspell OpenAI's product name or claim to use both OpenAI's and Google's systems, calling into question the accuracy of the toymakers' claims.

Cross said that, assuming the toymakers' claims are accurate, the apparent lack of oversight raises questions about the AI companies' ability to track how developers and third parties are using their systems.

“It doesn’t make a lot of sense for an AI company that hasn’t released a child-safe version of its model to allow anyone with a credit card to sign up to make a product for kids using the same technology,” Cross said. “It makes little sense for AI companies to outsource child safety to unvetted developers.”

PIRG also identified toys that claim to be powered, at least in part, by Anthropic's and xAI's AI services. Anthropic's terms of service require organizations to agree to additional terms about making their products available to users under 18, but NBC News found that those additional guidelines are never displayed if the developer identifies as an "individual" rather than an "organization" using Anthropic's services. While xAI's consumer terms prohibit users under the age of 13, the terms for corporate users, which cover the use of xAI for "business purposes," do not include the same language.

Most major AI companies monitor submissions and requests to their services, and their terms of service include provisions that allow them to ban users if they violate their policies.

Rachel Franz, director of the Young Children Thrive Offline Program at the child advocacy group Fairplay, told NBC News that looser rules for developers could undermine the basic protections that shield children from harmful AI-generated material.

"It's not surprising that AI companies and the companies that incorporate AI into children's products are going back and forth over who is responsible," Franz said in written comments. "Both have a long history of avoiding responsibility and risking harm to children for profit."

Franz continued: “To truly keep children safe, AI companies need to ensure their models are not used in children’s products and increase oversight and accountability for companies using their models.”