Overview of Architecture

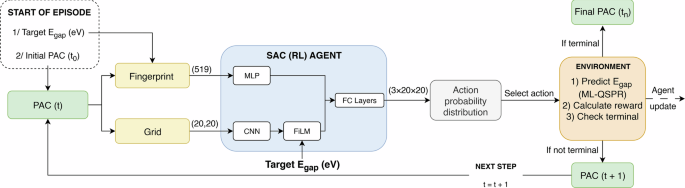

Given a target HOMO-LUMO gap, the generative goal-conditioned reinforcement learning model constructs PAC through a series of modifications to satisfy that target HOMO-LUMO gap. To guide this search, the model attempts to minimize the difference between the target and calculated HOMO-LUMO gaps of the modified PAC in addition to a penalty term that scales with the ring count. The calculated HOMO-LUMO gaps were determined using a predictive machine learning-based model that demonstrates good agreement with results from Density Functional Theory (DFT) calculations while being more computationally efficient35. Since the forward ML model’s error in the HOMO-LUMO gap is about ±0.15 eV, any structures generated within this margin are considered successful designs. As a result, the model learns a distribution that prioritizes actions leading to smaller-sized structures with properties close to the target, followed by actions producing satisfactory, though less optimal, structures, and lastly, structures that deviate from the target.

PAC Structural Representation in Machine Learning

When designing an ML workflow in cheminformatics, the choice of structural representation for molecules is crucial because it directly impacts the quality and quantity of knowledge available for learning. Some standard representations for molecules include SMILES, molecular fingerprints, graphs, and latent vectors41,42. The requirements for encodings also differ between predictive and generative tasks. While capturing relevant structural information is essential, the output of generative models necessitates two-way (or reversible) encodings. One-way encodings, like molecular fingerprints, are inappropriate because they lack the reversibility required to reconstruct the original molecule. SMILES strings, a widely used example of a two-way encoding, address this limitation and have been extensively employed in inverse design7,16,24,25,26,32,43. However, their sequential, syntax-sensitive format often leads to the generation of chemically invalid structures. This issue is particularly exacerbated when dealing with large, multi-cycle molecules, such as PACs. Graph representations, though capable of encoding molecular topology, also suffer from similar validity concerns while being more computationally expensive. Alternatively, latent vectors are typically obtained from autoencoders and represent molecules as continuous embeddings in a learned chemical space. They allow smooth interpolation and optimization but suffer from reconstruction loss for complex molecules.

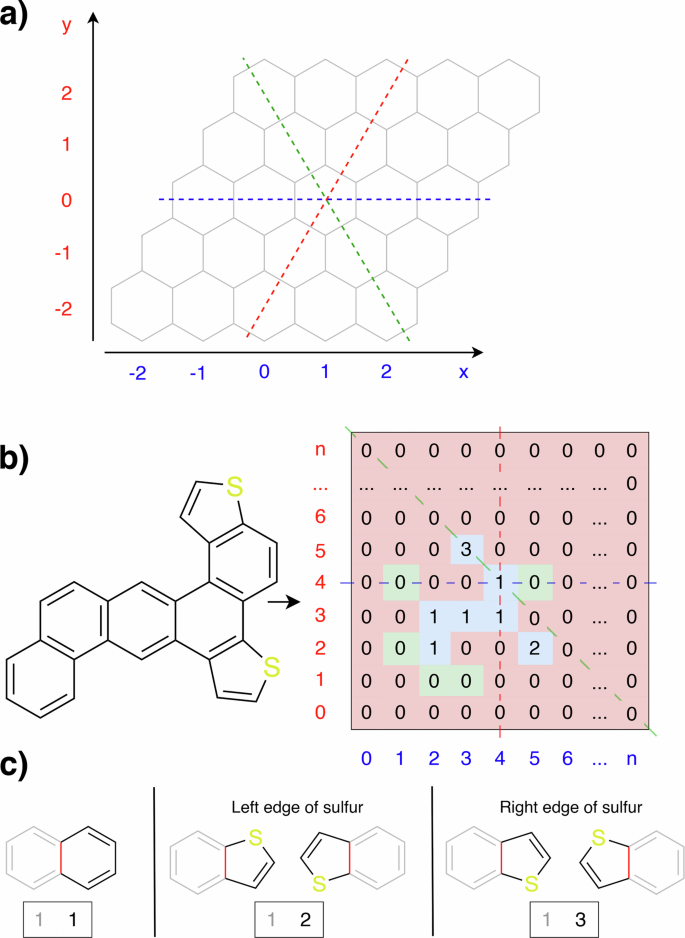

Given these limitations, we devise an alternative efficient representation for PAC that capitalizes on their unique planar structures. PACs in this study are treated as molecules composed of rows of fused rings arranged in a skewed grid-like fashion. We therefore model their chemical space within a Cartesian (x-y) coordinate system (Fig. 2a). Each point represents a benzene unit, a thiophene unit, or an empty position. Since the layers are not vertically aligned due to the staggered stacking of these rings, the y-axis shifts to the right as the layers increase. This arrangement is analogous to the axial coordinate system for a hexagonal grid with a bottom-left origin44. It is represented here as a sparse matrix, where zero entries are empty spaces and non-zero entries indicate the presence of rings. Specifically, “1” is a benzene unit, “2” is a thiophene attached at the left edge of sulfur, and “3” is a thiophene attached at the right edge of sulfur (Fig. 2c). Additional constraints are imposed on thienoacenes where each molecule may contain at most two thiophene rings, and each thiophene can fuse with only one benzene ring on either side. This limitation is set to avoid extrapolation beyond the scope of our forward model’s training dataset, which is available in the Data Availability statement. Note that the inverse step does not have such a limitation. Finally, alignment is complicated by thiophenes having five atoms compared to six in benzene. However, since each thiophene ring can have only one neighboring ring, it terminates addition along that axis and is restricted to a single axis.

a PAC modeled in the Cartesian coordinate system. b Conversion of a PAC to the grid representation. Tiles are color-coded to visualize the action space. Blue tiles are aromatic units. Green tiles are where new rings can be inserted. Red tiles are inaccessible locations. c Numeric encoding of each ring type in the grid representation.

It is important to point out that while the grid representation is a 2D representation of a molecule, such a representation does not assume the molecule to be planar. In fact, geometries of all molecules in the dataset used in this study were fully optimized at the DFT B3LYP/6-31 + G(d) level. The aspects of non-planarity for some molecules were addressed in detail in our previous works34,35. For larger systems, there are cases where diradicals can be stable in addition to their singlet states. Determining such stability requires a rather accurate level of quantum chemistry calculations, which are beyond the scope of this work and thus not considered here. In this dataset, the band gap is approximated by the HOMO-LUMO gap. According to Koopman’s theorem, the HOMO-LUMO gap provides a good estimate of the experimental band gap for an isolated molecule. This has been discussed in more detail in our earlier works34,36,37, and the term “HOMO-LUMO gap” (Egap) is used in this study.

To systematically construct PACs, we define unit transformations that represent changes in matrix element values. We limit valid actions to ring addition to simplify the action space and expedite learning. Invalid actions are also excluded by employing domain knowledge to determine where the addition of a ring results in an invalid molecule. This includes a valency check that ensures no radicals will be formed. Valid locations are adjacent to pre-existing rings, and adding to these locations must not lead to any sp3 carbon. Additionally, it must not violate the aforementioned rules for thienoacenes (Fig. 2b). Again, these restrictions exist to prevent the model from exploring the chemical space outside the types in our dataset. When the training dataset for the structure-to-property ML model increased to include more complete molecular types, these restrictions could be removed. The list of actions is also checked against each other for repeated structures, as symmetry can occur from addition at opposite ends. Because symmetry can slow down learning, we disabled it to accelerate training.

Alternatively, Weiss et al.45 also developed an efficient representation for PACs, representing each molecule as a graph in which every ring or heteroatom is treated as a node. Using this representation, they found success in the inverse designs of PAC using guided diffusion45. They covered a broader chemical space than ours, encompassing benzene rings and various five-membered aromatic rings. However, they did not consider peri-condensed PACs, perhaps due to the limitations of the training data. Note, our framework allows the generation of both cata-condensed and peri-condensed configurations by performing validity checks at every construction step.

The grid representation of PAC is an effective way to encode the spatial information of the molecule, which is a deciding criterion for inverse design. The generation of molecules is only possible if the model is fully aware of the arrangement of bonds and atoms, in addition to being able to add new information. In this regard, the grid representation enables the reversal process from the structural identifier to the structure itself. Even though this alone is sufficient to complete the model, we found that the accuracy of the model can be notably improved when the grid representation is augmented with a molecular fingerprint, specifically the Weisfeiler-Lehman (WL) graph kernel35,46. In the same work on the ML-QSPR model, the WL graph kernel demonstrated excellent capability in predicting PAC electronic properties. Compared to other molecular fingerprints, WL graph kernels have advantages in their ability to capture substructure information and repetition, a dominant feature in PACs. WL graph kernels, however, suffer from a loss of spatial information during encoding and thus cannot be used for inverse design by themselves. By combining two different input modalities, we found that the performance of our inverse design model significantly improved.

Goal-Conditioned Reinforcement Learning

Reinforcement learning is a machine learning paradigm in which an agent learns to make optimal decisions by maximizing cumulative rewards through interactions with an environment. It is a flexible approach that enables fine-tuned control over the trade-off between exploration and exploitation while also supporting multi-objective optimization through reward engineering. Reinforcement learning has been widely utilized in materials design with great success6,7,47. There are two major approaches to training an RL model: value-based methods and policy-based methods. Value-based methods learn a value function to derive an optimal policy by selecting actions that maximize expected rewards. While value-based methods are sample-efficient in suitable use cases, they can be prone to instability. Deep Q-learning, one of the most popular RL techniques, has been studied for generating molecules33,47. However, the most significant drawback of this technique is that it is deterministic at inference when using the epsilon-greedy strategy, yielding a single structure for each target. This determinism contradicts the intended goal of optimality-diversity in our approach, highlighting the need for a stochastic approach. In contrast, policy-based methods directly learn a policy π without the intermediate step of estimating a value function. These methods tend to be more stable and effective in high-dimensional action spaces.

In addition to these two approaches, it is also possible to combine both value-based and policy-based techniques, balancing the strengths and weaknesses of each48. A notable algorithm within this hybrid method category is the Soft Actor-Critic (SAC)49. Soft Actor-Critic (SAC) is an off-policy, actor-critic reinforcement learning algorithm that optimizes a stochastic policy while maximizing both cumulative rewards and entropy. Controlling exploration and exploitation is achieved using the entropy regularization coefficient α, where a lower value promotes exploitation and a higher value promotes exploration. This hybrid approach benefits from the sample efficiency of value-based methods and the stability of policy-based methods, making it particularly effective in complex, stochastic environments.

The inverse design framework is illustrated in Fig. 3. The RL agent processes two input modalities simultaneously: a one-dimensional (1D) vector and a two-dimensional (2D) grid. Each input is encoded using a network appropriate for its structure. The 1D vector is processed by a multi-layer perceptron (MLP), while the 2D grid is passed through a convolutional neural network (CNN). The outputs of these two networks are then combined using fully connected layers to produce the final action. Additionally, goal-conditioned reinforcement learning requires that the goal information be included in both input modalities. For the 1D vector, the goal vector and the molecule’s metadata are concatenated directly with the fingerprint before being passed into the MLP. For the 2D grid, the goal is incorporated using a Feature-wise Linear Modulation (FiLM) layer50, which conditions the feature maps of the CNN based on the goal after the input has passed through the CNN layers.

Numbers in parentheses indicate the dimensions of the data.

Hindsight Experience Replay (HER)

To improve the performance of GCRL models, we incorporate Hindsight Experience Replay (HER)51, which has been shown to enhance learning efficiency52. HER introduces the relabeling strategy for arbitrary value-based models that implement a replay buffer. The concept behind HER is that failed episodes do not teach RL models as effectively as successful episodes. However, by modifying the initial goal to reflect success, the model can still learn valuable information from these failed episodes. Formally, HER selects a sample \(({s}_{t}|\left|g,{a}_{t},{r}_{t},{s}_{t+1}\right|{|g})\) from the replay buffer, modifies the initial goal, and recalculates the reward, resulting in additional synthetic data as \(({s}_{t}{||}{g}^{{\prime} },{a}_{t},r{\prime} ,{s}_{t+1}{||}{g}^{{\prime} })\). This reduces training time and improves sample efficiency by providing a more meaningful learning signal.

Sampling Algorithms

Once the model is trained, specialized algorithms are used to build the list of candidate PACs. The output of SAC can be interpreted as a complex probability tree, where each trajectory τ = (s0, a0, s1, a1, …, st) represents the actions taken to reach a specific PAC. The probability of that trajectory, \({\rm{P}}({\rm{\tau }})\), is the product of the probabilities of taking each action at at state st across all time steps. Since the optimality of a PAC is correlated to its probability, the objective of the algorithm is to sample a number of trajectories with the highest P(τ). Two algorithms are used to sample from this distribution: Monte Carlo sampling and threshold-based sampling. Each algorithm has a corresponding control parameter that controls the number of candidates generated. Uniqueness is also considered for both algorithms, which refers to the fraction of generated candidates distinct from one another.

Threshold-based sampling works by continuously sampling the top-performing pathways with the highest probability while pruning all paths with a probability less than a defined threshold. This threshold-based search is deterministic; given the exact probabilities, it will always yield the same path. Consequently, it generates structures in order of their optimality while pruning pathways that are likely to fail, resulting in a uniqueness of 100%. In this method, the threshold value serves as the control parameter, where decreasing the threshold allows for generating more suboptimal structures. Optimal threshold values are model-dependent, which is caused by the difference in the magnitude of probability. This problem becomes more prominent when changing the entropy coefficient α, as a higher entropy value significantly broadens the probability distribution.

The Monte Carlo sampling method executes the model for a select number of episodes, selecting an action based on its probability. Since the search is stochastic, candidates will vary between executions, so uniqueness is less than 100%. The main shortcoming of this approach is that it is not possible to generate structures in order of their optimality. PACs with low probability can also occur, while optimal PACs may possibly be missed. Despite these limitations, Monte Carlo sampling is better suited for comparing metrics across different models because it is not affected by the absolute probability value. The control parameter is temperature, τ, which scales the logits from the probability distribution. A lower τ sharpens the distribution, making high-probability samples more dominant, thus favoring optimal candidates. Conversely, a higher τ results in a more uniform distribution, increasing the likelihood of selecting less optimal candidates but enabling the exploration of a more diverse chemical space.

Model Performance from Different Settings

To systematically evaluate the effects of different training strategies on the model performance, we designed six models with various configurations. Each model, represented by its ID (e.g., Model 1, Model 2, etc.), was sampled via Monte Carlo sampling at both low and high temperatures. Their performance is evaluated using the diversity, failure ratio, uniqueness, and optimality as shown in Table 1. The failure ratio is defined as the number of structures exceeding the allowed error limit divided by the total number of structures generated. Optimality is defined as the mean ring count of structures per HOMO-LUMO gap. Diversity is the total number of unique structures, calculated as the total count minus duplicates. This definition of diversity is different from previous publications in this area, which is related to the Tanimoto similarity factor between generated molecules within the generated set25,26,53. However, such a metric provides little useful information for PACs with repeating benzene and thiophene rings. Finally, uniqueness is defined as the fraction of unique structures (diversity) among all generated structures.

Model 1 was trained without HER and serves as the baseline, while models 2-5 were trained with HER. Model 3 differs from Model 2 in its shorter training duration to investigate how quickly the model can learn. Model 4 builds upon Model 2 by increasing the entropy coefficient α, promoting more aggressive exploration. Model 5 was trained without the WL graph kernel to demonstrate that using both input structural representations is superior to the grid-only approach. Finally, Models 1-5 were all initialized at coordinate (0, 0), which allowed the model to learn a localized strategy efficiently but limited its ability to generalize across the grid. If the initial position were changed, these models would perform poorly and generate a high number of failed designs. As an additional experiment, we introduced Model 6, which used a uniform distribution of initial states instead of a deterministic one to test whether the framework could learn a more adaptive strategy.

Across all models, raising the temperature consistently increased the failure ratio and diversity, while reducing optimality (i.e., the largest size rings). The improved performance of Model 2 over Model 1 revealed the effectiveness of the HER strategy, as introducing diverse, synthetic trajectories via HER enhanced accuracy without compromising diversity and optimality. Between Models 2 and 3, the longer-trained Model 2, as expected, outperformed Model 3 in accuracy and optimality. Nevertheless, Model 3 achieved acceptable performance, reflecting the sample efficiency of the framework. Between Models 2 and 4, Model 4, with a higher α, explored more aggressively, resulting in substantially more structures than Model 2, even in low-temperature sampling. This is attributed to its flatter action probability distributions, which produced more structures. Despite this exploration bias, Model 4 retained accuracy nearly on par with Model 2. One caveat is that the total structures under low- and high-temperature settings are close, suggesting the model performance is not too sensitive to the temperature setting. Nevertheless, increasing α remains a viable approach for increasing diversity.

Between Models 2 and 5, total structures and diversity-optimality have minimal differences. However, the failure ratio for Model 2 was lower, confirming that the addition of the fingerprint had improved accuracy. Uniqueness across all models varies around 24.2–57.9%, with higher α producing greater stochasticity. These values are comparable to traditional GAN and VAE models54,55, though lower than modern architectures such as AREA25. Uniqueness values reported here stem from Monte Carlo sampling and therefore appear lower, but it should be noted that threshold-based sampling deterministically achieves complete uniquiness. Finally, Model 6, despite our intuition that a random initialization scheme would decrease the performance, achieved accuracy and diversity-optimality comparable to the other variants. This result highlights the framework’s ability to generalize effectively in dynamic environments.

Training Metrics for Different Settings

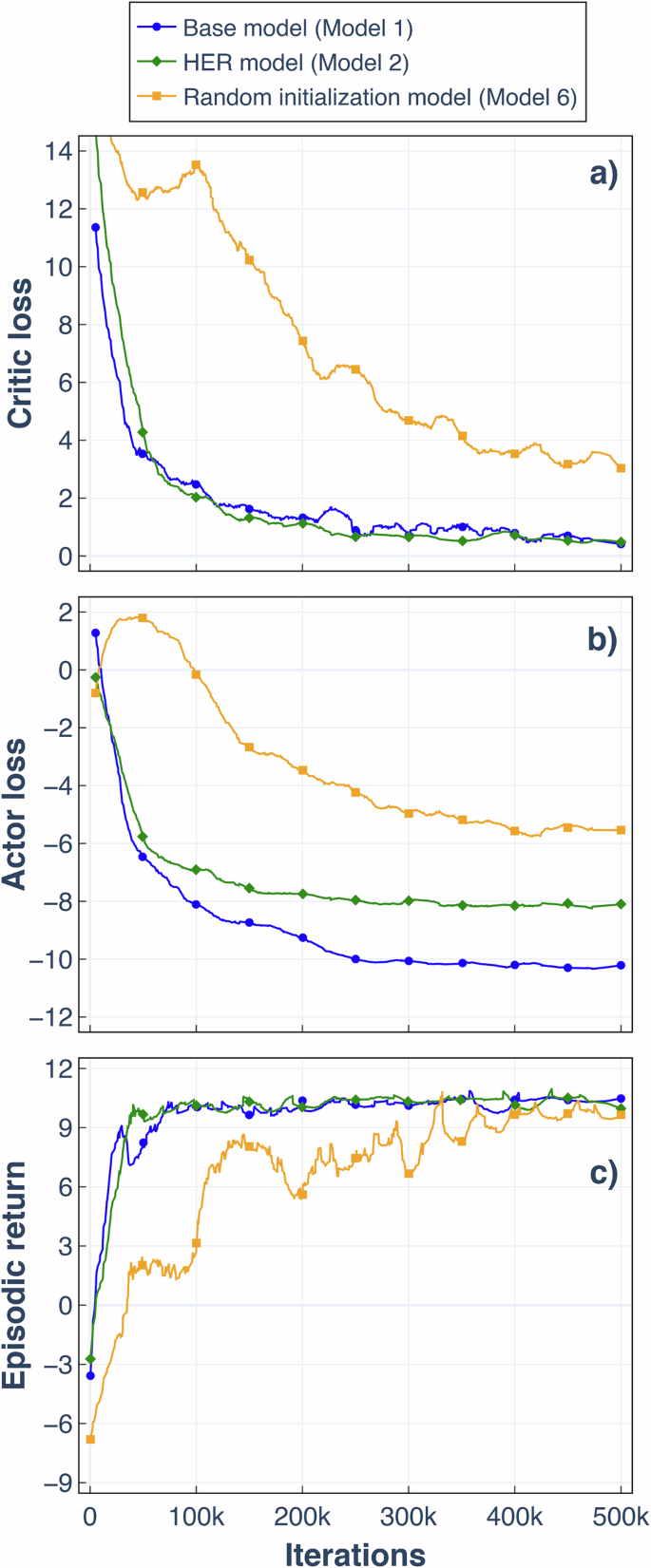

Training curves for the base model (Model 1), the HER model (Model 2), and a model with random initialization (Model 6) reveal rapid initial learning for Models 1 and 2 (Fig. 4). This is evidenced by falling critic and actor losses alongside rising episodic return. In contrast, Model 6 started with higher losses, likely due to the increased noise introduced by synthetic experience replay. Despite slower initial learning, Model 6 demonstrated strong generalization ability as episodic return gradually improved. Further training for Model 6 could yield improvements, as its losses have not converged towards the end of training.

Improvement in a critic loss, b actor loss, and c episodic return for three settings. The Savitzky-Golay filter is applied using a window length of 100 and a polynomial degree of 2 to reduce noise.