Data scientists want to do data science. It’s exactly what the title says. However, data scientists are often asked to do more than build machine learning models, such as creating data pipelines and provisioning compute resources for ML training. This is not a good way to keep data scientists happy.

To keep Netflix’s 300 data scientists happy and productive, in 2017 Savin Goyal, who headed the Netflix machine learning infrastructure team, led the development of a new framework that abstracts away some of the non-data-science activities so that data scientists can spend more of their time doing data science. The framework, called Metaflow, was released by Netflix as an open source project in 2019 and has been widely adopted since.

Goyal recently spoke with Datanami about why he created Metaflow, what it does, and what customers can expect from the enterprise version of Metaflow offered by his startup, Outerbounds.

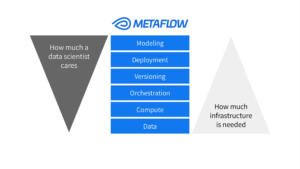

“If you want to do machine learning, there are some raw materials you need,” says Goyal. “You need a place to store and manage your data, and some way to access that data. You need a place to coordinate the compute for your machine learning models, plus experiment tracking, model deployment, and so on. Next, you need to understand how data scientists actually author their work.”

“So all these raw building blocks exist in different ways, but it’s still up to the data scientist to cross the barrier from one tool to another,” he continues. “That’s where Metaflow comes in.”

Metaflow helps by standardizing many of these processes and tasks, allowing data scientists to focus more on machine learning activities using Python, R, or other frameworks. In other words, data scientists become “full-stack,” Goyal says.

“For a company like Netflix, it’s very important to provide a common platform for all data scientists to be more productive,” he says. “One of the big problems plaguing most companies is that, if you need to deliver value with machine learning, you need people who are not just good at data science but who can also handle the complexity of your internal systems.”

With the introduction of Metaflow, Netflix’s data scientists (they call themselves machine learning engineers) no longer have to worry about how to connect to various internal data sources or how to access large compute instances, Goyal says. Metaflow automates many aspects of running training and inference pipelines at scale on Netflix’s cloud platform (AWS).

In addition to automating data access and computing, Metaflow also provides MLOps capabilities to help data scientists document their work through code snapshots and other features. According to Goyal, the ability to reproduce results is one of the major advantages provided by this framework.

“One thing machine learning has traditionally lacked is reproducibility,” he says. “Suppose you are a data scientist and you are training a model. Often no one else can reproduce your results. So we basically provide a reproducibility guarantee that allows people to share their results within their teams, which encourages collaboration.”
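
As a rough illustration of why code snapshots help with reproducibility, the sketch below fingerprints a run from its code text and its parameters. It is a toy in plain Python and does not show how Metaflow itself stores snapshots; `run_fingerprint` and `TRAIN_SOURCE` are hypothetical names invented for this example.

```python
# Toy illustration only: this is NOT how Metaflow stores code snapshots.
# run_fingerprint and TRAIN_SOURCE are hypothetical names for this sketch.
import hashlib
import json


def run_fingerprint(source_code: str, params: dict) -> str:
    """Hash the code text and parameters into a short run identifier."""
    # sort_keys makes the result independent of dictionary key order.
    payload = json.dumps({"code": source_code, "params": params}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()[:12]


# Stand-in for the training code that would be snapshotted with a run.
TRAIN_SOURCE = "def train(data, lr):\n    return sum(data) * lr\n"

run_a = run_fingerprint(TRAIN_SOURCE, {"lr": 0.1, "seed": 42})
run_b = run_fingerprint(TRAIN_SOURCE, {"seed": 42, "lr": 0.1})  # same inputs, different key order
run_c = run_fingerprint(TRAIN_SOURCE, {"lr": 0.2, "seed": 42})  # one parameter changed

print(run_a == run_b)  # identical code and parameters share a fingerprint
print(run_a == run_c)  # a changed parameter yields a different fingerprint
```

Storing a fingerprint like this next to each result would let a teammate confirm they are rerunning the same code with the same parameters before comparing outputs.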

Metaflow also helps reduce costs by allowing users to mix and match different cloud instance types within a given ML workflow, says Goyal. For example, say a data scientist wants to train an ML model using a large dataset stored in Snowflake. It’s a lot of data, he says, so a memory-intensive processing step needs to run first. After that, the data scientist might want to train the model on GPUs. Once the compute-intensive training process is done, fewer resources are required to deploy the model for inference.

“Basically, you can create different sets of workflows to run on different instance types and different resources,” says Goyal. “This further reduces the overall cost of training machine learning models. You don’t want to pay [for resources a step isn’t using].”

Metaflow allows data scientists to use their preferred development tools and frameworks. They can still run TensorFlow, PyTorch, scikit-learn, XGBoost, or any other ML framework they want. Metaflow has a GUI, but the main way to interact with the product is by including decorators in Python or R code. According to Goyal, the decorators determine at runtime how the code executes.
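
To make the decorator idea concrete, here is a minimal sketch of how a decorator can attach resource requirements to each step so that a runner can decide where the step should execute, in the spirit of the memory-heavy, GPU, and lightweight stages described earlier. This is a plain-Python toy and not Metaflow’s API: `requires`, `pick_instance`, `run_pipeline`, and the instance class names are all hypothetical.

```python
# Toy illustration only: this is NOT Metaflow's API. The names requires,
# pick_instance, run_pipeline and the instance classes are hypothetical.
from typing import Callable, Dict, List


def requires(memory_gb: int = 4, gpus: int = 0) -> Callable:
    """Attach resource requirements to a step function as metadata."""
    def decorate(func: Callable) -> Callable:
        func.requirements = {"memory_gb": memory_gb, "gpus": gpus}
        return func
    return decorate


def pick_instance(requirements: Dict[str, int]) -> str:
    """Map a step's requirements to a made-up instance class."""
    if requirements["gpus"] > 0:
        return "gpu"
    if requirements["memory_gb"] >= 64:
        return "high-memory"
    return "small"


@requires(memory_gb=128)
def prepare_data(state: dict) -> dict:
    # Stand-in for a memory-hungry pass over a large dataset.
    state["rows"] = [x * 2 for x in range(5)]
    return state


@requires(gpus=1)
def train(state: dict) -> dict:
    # Stand-in for GPU training: the "model" is just the mean of the rows.
    state["model"] = sum(state["rows"]) / len(state["rows"])
    return state


@requires()
def deploy(state: dict) -> dict:
    # Deployment needs only the default, small footprint.
    state["deployed"] = True
    return state


def run_pipeline(steps: List[Callable]) -> dict:
    """Run steps in order, recording which instance class each would use."""
    state: dict = {"placements": []}
    for step in steps:
        instance = pick_instance(step.requirements)
        state["placements"].append((step.__name__, instance))
        state = step(state)
    return state


result = run_pipeline([prepare_data, train, deploy])
print(result["placements"])
```

The step bodies stay ordinary Python, while the decorator carries the infrastructure decision. That separation is what lets a GPU be requested only for the step that needs one.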

“We basically target people who are familiar with data science,” he says. “They don’t want to be taught data science. They’re not looking for a no-code, low-code solution. That’s where Metaflow comes in.”

Since Netflix first released Metaflow as open source in 2019, it has been adopted by hundreds of companies. According to the project’s website, users include Goldman Sachs, Autodesk, Amazon, S&P Global, Dyson, Intel, Zillow, Merck, WarnerMedia, and DraftKings. Another user, CNN, has reported an 8x improvement over time in the number of models it has deployed into production, Goyal says.

Savin Goyal is the creator of Metaflow and CTO and co-founder of Outerbounds.

The open source project has 7,000 stars on GitHub, putting it in the top one or two in its field, he says. The Slack channel is very busy, he says, with about 3,000 active members. According to Goyal, Metaflow has been adapted to run on Microsoft Azure and Google Cloud as well as Kubernetes since it was first released for AWS. It is also used in Oracle and Dell hosted clouds.

In 2021, Goyal co-founded Outerbounds with former Netflix colleague Ville Tuulos and Oleg Avdeev from MLOps vendor Tecton. Goyal and his team at the San Francisco-based company continue to be the lead developers of the open source Metaflow project. Four months ago, Outerbounds released a hosted version of Metaflow that allows users to get up and running very quickly on AWS.

Because Outerbounds controls how the infrastructure is deployed, the managed product can offer stronger guarantees around security, performance, and fault tolerance than the open source version, says Goyal.

“With open source, we have to make sure our services work for everyone who wants to use us. In certain areas, it’s easier said than done,” he says. “If you are using our managed product, we can afford to have certain very specific opinions regarding your implementation.”

Reducing cloud spending is a big focus for the Outerbounds service, especially given today’s GPU scarcity and high costs. The company will eventually allow customers to use their on-premises GPUs, provided they have some connectivity to their hyperscaler of choice.

“Once you start scaling machine learning models, it starts to get expensive very quickly, but there are many mechanisms to bring that cost down significantly,” says Goyal. “For example, how do you make sure that GPUs are not starved for data? How can we ensure that data can be moved with the highest possible throughput? That’s more than what cloud providers offer.”
