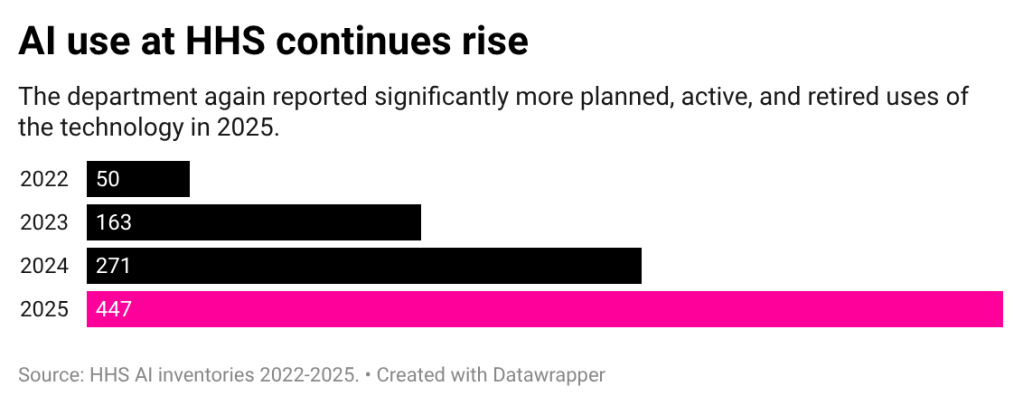

The Department of Health and Human Services’ reported use of AI grew by 65% in 2025, according to the department’s latest inventory, signaling that the technology is becoming more widespread as leadership simultaneously moves to reduce staffing.

New use cases include pilots aimed at alleviating staffing shortages, multiple disclosures of so-called “agentic” tools, and an additional AI deployment related to the department’s work with unaccompanied children. More than half of the 2025 use cases are in pre-deployment or pilot stages, meaning most are still in their infancy.

Valerie Wirtschafter, a fellow at the Brookings Institution who focuses on artificial intelligence and emerging technologies, said after reviewing the inventory that the agency has a “huge focus” on expanding its use of the technology. HHS has also been among the agencies with larger inventories of use cases in recent years.

They’re “leaning into it,” Wirtschafter said.

The growth at HHS follows several years of dramatic increases in the department’s reported use of the technology. In 2023, the department’s inventory more than tripled from the previous year, and in 2024 it grew by 66%. The increase also comes against the backdrop of major changes at the department and HHS Secretary Robert F. Kennedy Jr.’s goal of doing “more with less.”

Over the past year, the department has moved to reorganize its divisions and lay off thousands of employees, in line with the Trump administration’s call for agencies to shrink their footprint. At least two use cases specifically cite limited staffing as a reason for the tool.

The Office for Civil Rights disclosed a pilot using both ChatGPT and Outlook Copilot to address “understaffing.” ChatGPT is used to streamline investigations by “breaking down complex legal concepts into plain language and identifying patterns in court [rulings] affecting Medicaid services.” Meanwhile, Copilot will be used via Westlaw for “faster communication with the public.”

According to the inventory, both uses were classified as “law enforcement.” Neither entry explained how the generative tools specifically address the staffing shortage.

HHS and OCR did not respond to FedScoop’s requests for comment seeking further explanation of some uses and additional details not included in the publication. FedScoop submitted the request last week through an HHS email form and followed up directly with a spokesperson on Tuesday. FedScoop also submitted an inquiry through OCR’s own email form on Wednesday.

It’s difficult to know exactly which staffing issues OCR is addressing with these tools, or how, but some experts caution against using AI to replace, rather than support, employees.

“AI can be an important tool in all walks of life, and it certainly has the potential to make government better and more efficient for the public and for all people,” said Cody Venzke, senior policy counsel in the ACLU’s National Political Advocacy Department, who focuses on surveillance, privacy, and technology.

That said, Venzke added, “This doesn’t replace all human decision-making, especially when we’re dealing with an incredible downsizing of the federal government.”

Wirtschafter similarly said that the need for applications aimed at addressing staffing shortages “seems to be a bit problematic and perhaps self-inflicted.”

However, Wirtschafter praised the inventory for giving the public visibility into notable applications such as OCR’s. “Part of the benefit of providing this transparency is that there is some visibility into the problem they are trying to solve,” she said.

Agentic uses, Palantir, xAI

Among other notable use cases, the Administration for Children and Families disclosed that its Office of Refugee Resettlement plans to use an AI system to verify the identities of adults applying to care for unaccompanied minors.

Although this use case is currently in the pre-deployment stage, it is one of the department’s few “high-impact” entries, a designation that requires additional risk management practices to be followed for the use to continue operating. The vendor was not disclosed.

Other tools appeared aligned with the Trump administration’s political goals. ACF said it has introduced two tools to identify position descriptions and grants that violate the president’s executive order aimed at eliminating diversity, equity, and inclusion from the federal government.

Both use cases list Palantir as the vendor and cite “improved review efficiency and reduced administrative burden on staff” as reasons for using the tool. Palantir, a vendor that has drawn attention for its work with Immigration and Customs Enforcement under the Trump administration, appears frequently in the HHS inventory, which lists more than 15 use cases involving the technology company. Most of those use cases are within ACF.

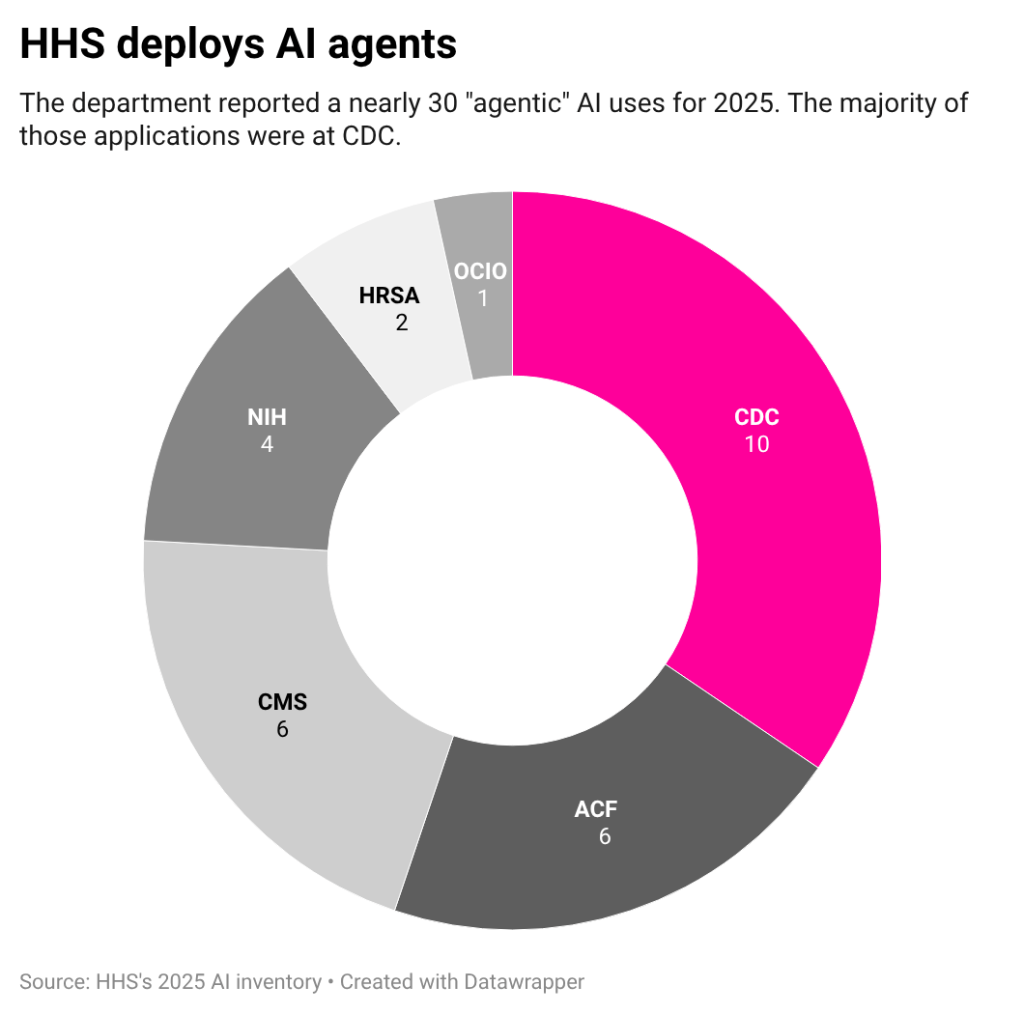

HHS also lists multiple uses of “agentic” AI for 2025.

Agentic AI, a commercially hot application of the technology, performs certain tasks autonomously or with little human intervention. These use cases still represent a small portion of HHS’s inventory, but only a handful of use cases across the entire federal government were described as AI agents last year.

One of those entries is the U.S. Centers for Disease Control and Prevention’s OpenAI Deep Research pilot. According to the disclosure, the tool is used to help officials process large amounts of research and data and produce reports. The entry cites an internal study that found “94% of prompts resulted in high-quality successful reports,” with most completed within 30 minutes.

Other uses include a Freedom of Information Act response agent at the Health Resources and Services Administration and an internal agent at the National Institutes of Health that answers staff questions about ethics policies. Both were in the pre-deployment stage.

Not included in the inventory: HHS’s use of xAI’s Grok as part of the recent rollout of the RealFood.gov website. The site encourages Americans to “eat real food” instead of processed foods and aligns with the agency’s new dietary guidance. The page directs users to AI to answer health questions and links directly to Grok.

Although the use of the chatbot from Elon Musk’s AI company is not listed in the inventory, the agency does list xAI gov as one of the services it uses for scheduling and management tasks and for initial drafts of documents, other communications, and social media posts. Both of those use cases appeared in a separate inventory of common commercial applications of the technology.

There is room for improvement

Wirtschafter said that, compared with other agencies’ inventories, the HHS publication is one of the more detailed submitted in 2025 in terms of information about each application and the problems it aims to address.

But that doesn’t mean the publication is perfect. For example, the small number of high-impact use cases in the HHS inventory has raised questions among advocates and researchers reviewing the disclosures.

Comparing inventories year over year is also difficult. Unlike last year’s catalog and many others published this year, HHS’s new publication does not include a unique identifier for each entry. These identifiers are typically alphanumeric codes that agencies assign to each use, and without them, it becomes difficult to identify new tools, especially if names or other details change.

Blank fields also leave advocates wanting more.

“Reviewing the inventory raises serious questions about how much transparency is actually being provided here, because there are so many fields that are left blank or simply marked as confidential rather than publicly available,” Venzke said.

Specifically, Venzke pointed out that public privacy impact assessments do not exist for the vast majority of use cases.

Of all the entries in HHS’s inventory, only one indicated where to find a privacy impact assessment. Although the field was included in guidance the White House Office of Management and Budget sent to agencies, some agencies omitted it entirely. HHS includes the field, but it offers little, if any, information about how the department evaluates privacy.

Government agencies are required by law to conduct privacy impact assessments (PIAs) of technologies that collect personally identifiable information to ensure that those systems are equipped to protect that information. These assessments should be publicly accessible.

However, finding public privacy impact assessments is often difficult because systems may go by different names and a single assessment may cover multiple systems, Venzke explained. “A link to the PIA alone gives us more certainty in holding the government accountable,” he said.

For the remaining entries, HHS either did not provide a link, said the PIA would be published soon, or said the PIA was not published. Venzke said this was “totally contrary to PIA’s purpose of public transparency.”

For Quinn Anex-Ries, a senior policy analyst on the Equity in Civic Technology team at the Center for Democracy and Technology, the jump in inventory size raises questions about what agencies are doing to manage that growth.

“What’s most notable is what government agencies are saying or doing to explain the fact that the total use of AI has increased so significantly,” Anex-Ries said. “If you look at things like AI compliance plans and AI strategies, it’s not very clear whether agencies have done a ton of additional groundwork to prepare them for such a significant expansion in the use of AI.”