The research roadmap involved two methods: LSTM and XGBoost. The first is designed to predict the different characteristics of storms, and the second to predict the occurrence of storms based on their characteristics.

Study site and data

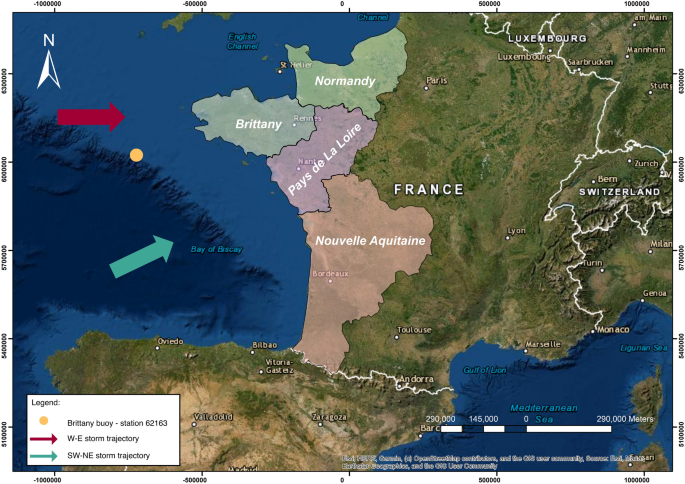

The site for this study was the western coast of France, comprising four regions: Normandy, Brittany, Pays de la Loire, and Nouvelle-Aquitaine (Fig. 1). These regions have experienced unexpected storms, resulting in many human and material losses12,14, highlighting the need for historical reconstruction of past storms. The study area has therefore been the subject of numerous studies to identify past storm dynamics, their relation to climatological mechanisms, their trajectories, and the spatialization of damage42,43. These studies have identified two main storm paths: The first estimated from west to east and impacting a restricted area of the French Atlantic coast, the second more extensive, with a southwest-to-northeast trajectory affecting a larger section of the coast42. These results concur with the classification method known as Dreveton, which proposes a classification of storms based on the origin of the associated depression, either Atlantic or Mediterranean; those associated with low-pressure systems over the Atlantic are more frequent than those from the Mediterranean44. Two classes of storm directly affect the Atlantic coast. The first relates to storms generated from depressions over the British Isles. The storm tracks, therefore, pass through Brittany then move up towards northern France, affecting either the north of France or the entire country. The other storm class relates to those generated from depressions over the Bay of Biscay. The trajectory of this second category of storms moves inland via the Pays de la Loire—Poitou—Charentes and continues towards the east of France and then Germany, affecting the northern part of France or the whole country. The studies conducted by Castelle et al.45,46 have greatly contributed to enhancing the comprehension of storm impacts and creating predictive tools for reducing coastal hazards in the study area. For example, their study of the effects of intense winter storms in 2013–2014 provides insights on the erosion patterns and the development of megacusp embayments45. This research focuses on the correlation between storm wave attributes, such as wave height, duration, and angle of incidence, and the consequent patterns of erosion45. In addition, Castelle et al.46 developed a new climate index based on sea level pressure, termed the Western Europe Pressure Anomaly (WEPA) index, which accurately accounts for the variations in winter wave height throughout the Western European coast. The WEPA index outperforms other prominent atmospheric modes in explaining winter wave variability and excels at capturing extreme wave height events, as demonstrated by its performance during the severe 2013–2014 winter storms that affected the study area46. Moreover, Castelle et al.47 conducted a study on the changes in winter-mean wave height, variability, and periodicity in the northeast Atlantic from 1949 to 2017, and examined their connections with climate indicators. Their research highlighted the growing patterns in the height, variability, and regularity of winter waves. These patterns are mainly influenced by climate indices like the North Atlantic Oscillation (NAO) and WEPA. As the positivity and variability of the WEPA and NAO increase, the occurrence of extreme winter-mean wave heights becomes more common47, which makes these indices valuable predictors in forecasting coastal hazards.

Location of the study area.

This study combines data from a storm database and offshore buoy data from 1996 to 2020. The Meteo France website provided the storm database, containing all the storm events in the study area (http://tempetes.meteo.fr/spip.php?rubrique6), and the historical measurements from the Brittany Buoy—station 62,163, with hourly records of wave height, wave period, wind speed, temperature, pressure, and humidity from January 1, 1996 to December 31, 2020 (https://donneespubliques.meteofrance.fr/?fond=produit&id_produit=95&id_rubrique=32). These hourly records were transformed into daily values by calculating the average value for each day and adding this to the storm names and occurrences extracted from the storm database. Storm occurrence is defined as days with wind speed values ranking above the 98th percentile of historical wind speed measurements from buoy data and coinciding with the days identified in the storm database as having storm events. Using this definition of storm occurrence, we were able to identify all the storm events in the study area since 1996. The occurrence of storms followed a binomial probability distribution, where the value 1 represented the occurrence of a storm, and the value 0 represented no storm. The combination of buoy data and storm database provided multivariate time series data (Table 1) representing a day-by-day record of historical weather and marine data and the precise days on which storms occurred, which could be used to predict the occurrence of storms and their different characteristics.

Prediction of storm characteristics

The dataset was fed into a multivariate LSTM model to predict the values of the six storm characteristics at once. The following steps were taken to prepare the data for implementing the algorithm: first, any missing values for each attribute were replaced with the median value for all the known values of the attribute. This approach is more reliable than mean imputation when the data includes outliers or is skewed since the median is less impacted by extreme values. The LSTM model was then constructed in the Keras framework, the input to the LSTM consisting of a time series vector x for T time steps in the series.

$$x=\left[x\left(1\right), x\left(2\right), \dots , x\left(T\right)\right]$$

Each input vector x

(1)

$$MAPE= \frac{100}{n} {\sum }_{i=1}^{n}\left|\frac{{y}_{i – {\widehat{y}}_{i}}}{{y}_{i}}\right|$$

(2)

$$RMSE = \sqrt {\frac{1}{n}\mathop \sum \limits_{i = 1}^{n } (y_{i} – \hat{y}_{i} )^{2} }$$

(3)

$${R}^{2}=1- \frac{{\sum }_{i=1}^{n}{({y}_{i – }{\widehat{y}}_{i})}^{2}}{{\sum }_{i=1}^{n}{({y}_{i -} \overline{Y })}^{2}}$$

(4)

where \(n\) is the number of samples, \({y}_{i}\) the real value, \({\widehat{y}}_{i}\) the predicted value, and \(\overline{Y }\) is the mean of the observed values. The best performance is achieved when the values of MAE, MAPE, and RMSE are close to 0 and the value of R2 is close to 1.

The Pearson correlation coefficient (r) was also used to assess the performance of the LSTM model, specifically the strength of the linear association between the observed and predicted values. The definition of this measure is as follows:

$$r= \frac{{\sum }_{i=1}^{n}({x}_{i}- \overline{x })({y}_{i}- \overline{y })}{\sqrt{{{\sum }_{i=1}^{n}({x}_{i}- \overline{x })}^{2}{\sum }_{i=1}^{n}{({y}_{i}- \overline{y })}^{2}}}$$

(5)

where \({x}_{i}\) are the observed values, \({y}_{i}\) are the predicted values, \(\overline{x }\) and \(\overline{y }\) are the means of the observed and predicted values, respectively, and \(n\) is the number of samples.

Prediction of storms

The objective of the second part was to predict the occurrence of storms based on their characteristics. To achieve this, an XGBoost binary classifier was developed using the XGBoost library. The XGBoost model used the six characteristics as input \({X}_{i}\), and storm occurrence was used as model output \({y}_{i}\), given a binary class label \({y}_{i }\in \{\mathrm{0,1}\}\) , indicating the absence or occurrence of a storm, respectively (Table 1). The median value of each attribute was used to fill in the missing values of the independent variables \({X}_{i}\). The dataset was split into training and testing sets at the ratio of 80 to 20. The 20% testing data (unseen data) corresponding to the period from January 2016 to December 2020 was set aside to avoid information leakage. The training dataset from January 1996 to December 2015 only was used for tuning the hyperparameters. The preprocessing phase of normalizing independent variables \({X}_{i}\) was carried out using the method described in the section ‘Prediction of storm characteristics’. This section applied Bayesian optimization using the Hyperopt library in Python to find the best combination of hyperparameters. Bayesian optimization using Hyperopt is an effective method for Hyperparameter optimization of the XGBoost algorithm. It performs better than other widely-used approaches such as grid search and random Search50.

The XGBoost model was trained using stratified k-fold cross-validation with K = 3. Stratified k-fold is highly appropriate for imbalanced data, as in our study, as it helps keep the class ratio in the folds the same as the training dataset51. The training dataset was divided into three subintervals—two for training and one for evaluating. Six hyperparameters were optimized, namely Learning rate (eta), Maximum Tree Depth, Gamma, Column samples by a tree, Alpha, and Lambda, and the search space defined is shown in Table 2. Fifty different combinations were tested to find the optimum set of hyperparameters. For each combination, recall was set as the evaluation metric for cross-validation. The combination at which the highest recall value was found is identified as the best set of hyperparameters (Table 3). After identifying the best hyperparameters, XGBoost was trained with the entire training dataset and tested using the unseen dataset. The Google Colab A100 GPU system was used to run the hyperparameter optimization and model training.

Due to the low frequency of storm occurrence, the following performance measures were used to evaluate the accuracy of the model: Recall, specificity, false positive rate (FPR), and false negative rate (FNR). These assessment metrics are not sensitive to imbalanced data52 and can be calculated using the following equations:

$$\mathrm{Recall }({\text{Sensitivity}})=\mathrm{ True positive rate}= \frac{TP}{TP+FN}$$

(6)

$$\mathrm{Specificity }=\mathrm{ True negative rate}= \frac{TN}{TN+FP}$$

(7)

$${\text{FPR}}= \frac{FP}{FP+TN}$$

(8)

$${\text{FNR}}= \frac{FN}{FN+TP}$$

(9)

where:

-

\(TP\): true positives, where the model predicted samples correctly as positive. In this case, the storms were classified as ‘storm’.

-

\(TN\): true negatives, where the model predicted samples correctly as negative (no storms predicted as ‘no storm’).

-

\(FP\): false positives, where the model wrongly predicted samples as positive (no storms predicted as ‘storm’).

-

\(FN\): false negatives, where the model wrongly predicted samples as negative (storms predicted as ‘no storm’).

The performance of the model was also measured using ROC (receiver operating characteristics) curve. ROC curve is a two-dimensional graph where the x-axis is the FPR and the y-axis the TPR (true positive rate), and it is generated by changing the threshold on the confidence score. The ROC curve is not sensitive to imbalanced data and can illustrate the diagnostic capability of a binary classifier52. The area under the ROC curve (AUC) metric is used to assess the performance of the XGBoost model since there is no scalar value representing the expected performance in the ROC curve. The AUC metric ranges from 0 to 1, and a perfect model will have an AUC of 1.