Image by author

Machine learning is a type of computer algorithm that enables machines to learn without the need for explicit programming.

Nowadays, machine learning applications are found everywhere, including navigation systems, movie streaming platforms, and e-commerce applications.

In fact, between the time you wake up and the time you go to sleep, you’re likely interacting with dozens of machine learning models without even realizing it.

The machine learning industry is projected to grow by over 36% between 2024 and 2030.

Considering that nearly every large organization is investing heavily in AI, there is a lot to gain from honing your machine learning skills.

If you’re a data science enthusiast, developer, or just a regular person looking to improve your data science knowledge, here are five common machine learning models you should know about.

1. Linear regression

Linear regression is the most common machine learning model used to perform quantitative tasks.

This algorithm is used to predict a continuous outcome (y) using one or more independent variables (X).

For example, if we are given the task of predicting house prices based on the size of the house, we would use linear regression.

In this case, the size of the house is the independent variable X, which is used to predict the independent variable, the price of the house.

This is done by fitting a linear equation that models the relationship between X and y, expressed as y=mX+c.

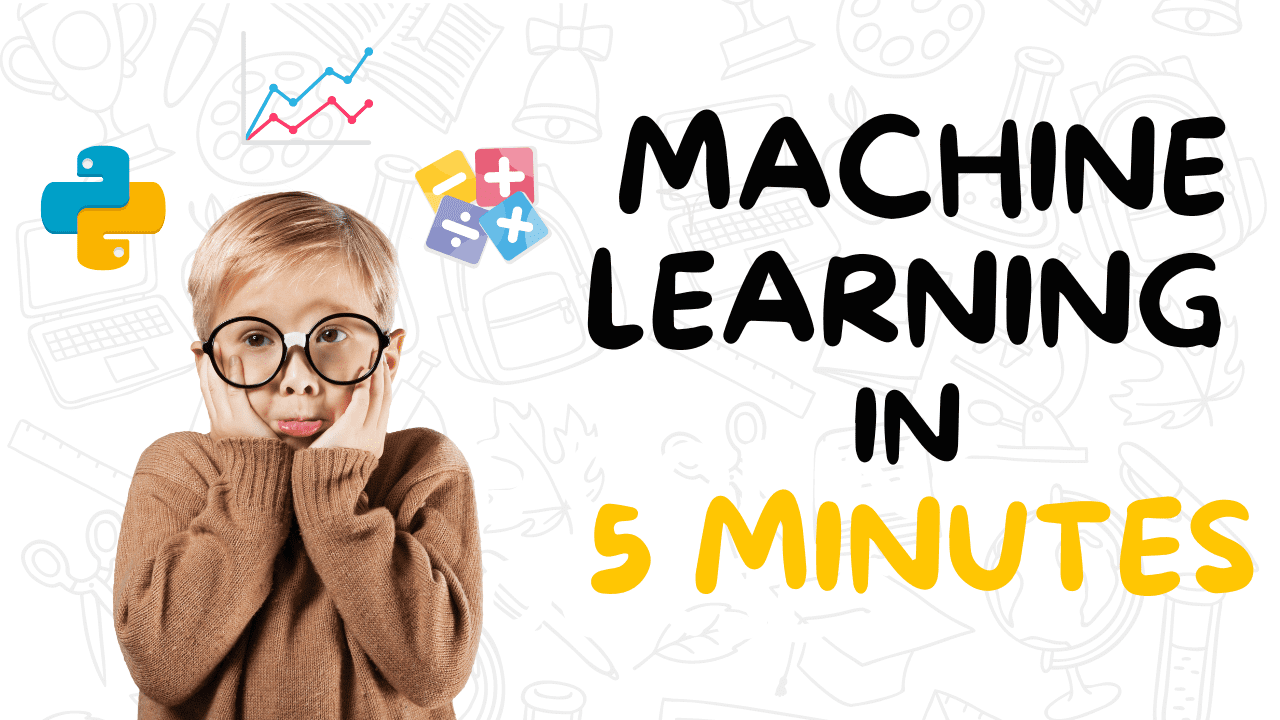

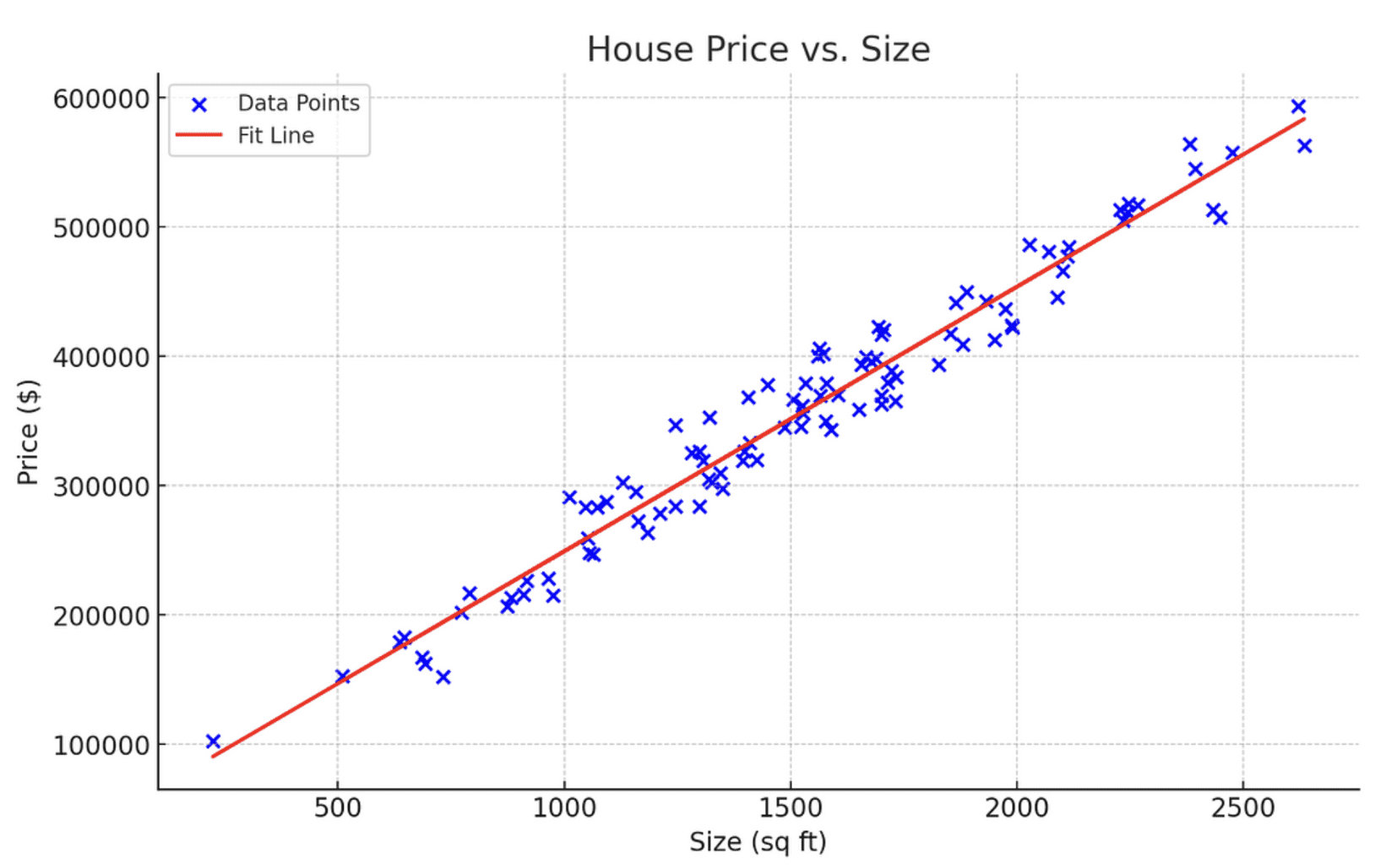

Below is a diagram that shows a linear regression modeling the relationship between home price and size.

Image by author

Learning Resources

To learn more about the intuition behind linear regression and how it works mathematically, I recommend watching Krish Naik's YouTube tutorial on the subject.

2. Logistic regression

Logistic regression is a classification model used to predict a discrete outcome based on one or more independent variables.

For example, based on the number of negative keywords in a sentence, logistic regression can be used to predict whether a particular message should be classified as legitimate or spam.

Below is a graph that shows how logistic regression works.

Image by author

Note that unlike linear regression, which represents a straight line, logistic regression is modeled as an S-shaped curve.

As shown in the curve above, as the number of excluded keywords increases, so does the likelihood that a message will be classified as spam.

The x-axis of this curve represents the number of excluded keywords, and the y-axis represents the probability that an email is spam.

Typically in logistic regression, a probability greater than or equal to 0.5 indicates a positive result, which in this context means that the message is spam.

Conversely, a probability less than 0.5 indicates a negative result, meaning the message is not spam.

Learning Resources

If you want to learn more about logistic regression, StatQuest's logistic regression tutorial is a great place to start.

3. Decision Tree

Decision trees are a popular machine learning model used for both classification and regression tasks.

They work by splitting a dataset based on its features and creating a tree-like structure to model this data.

Simply put, a decision tree allows you to continually split data based on certain parameters until a final decision is made.

Below is an example of a simple decision tree for determining whether or not you should eat ice cream on a particular day.

Image by author

- The tree starts with the weather and determines if it's suitable for eating ice cream.

- If the weather is warm, proceed to the next node, health, if not, the decision is “no” and no further division is made.

- At the next node, if the person is healthy, they can eat ice cream, if not, they should refrain from eating it.

Notice how each node in the decision tree splits up the data, breaking down the classification process into simple, manageable questions.

Similar decision trees can be drawn for any regression task with quantitative outcomes and the intuition behind the process remains the same.

Learning Resources

To learn more about decision trees, we recommend watching StatsQuest's video tutorial on the topic.

4. Random Forest

A random forest model combines predictions from multiple decision trees to return a single output.

Intuitively, this model should perform better than a single decision tree since it leverages features from multiple predictive models.

This is done using a technique called bagging or bootstrap aggregation.

Here's how bagging works:

A statistical technique called bootstrap is used to sample the data set multiple times with replacement.

Then, we train a decision tree on each example dataset, and the outputs of all trees are finally combined to render a single prediction.

For regression problems, the final output is generated by averaging the predictions made by each decision tree, while for classification problems a prediction of the majority class is made.

Learning Resources

To learn more about the theory and intuition behind the model, watch Krish Naik's Random Forest tutorial.

5. K-Means Clustering

All of the machine learning models we've discussed so far fall into the category of techniques known as supervised learning.

Supervised learning is a technique that uses labeled datasets to train algorithms to predict outcomes.

In contrast, unsupervised learning is a technique that does not work with labeled data, but instead identifies patterns in data without training on what specific outcomes to look for.

K-Means clustering is an unsupervised learning model that essentially takes in unlabeled data and assigns each data point to a cluster.

An observation belongs to the cluster with the closest mean value.

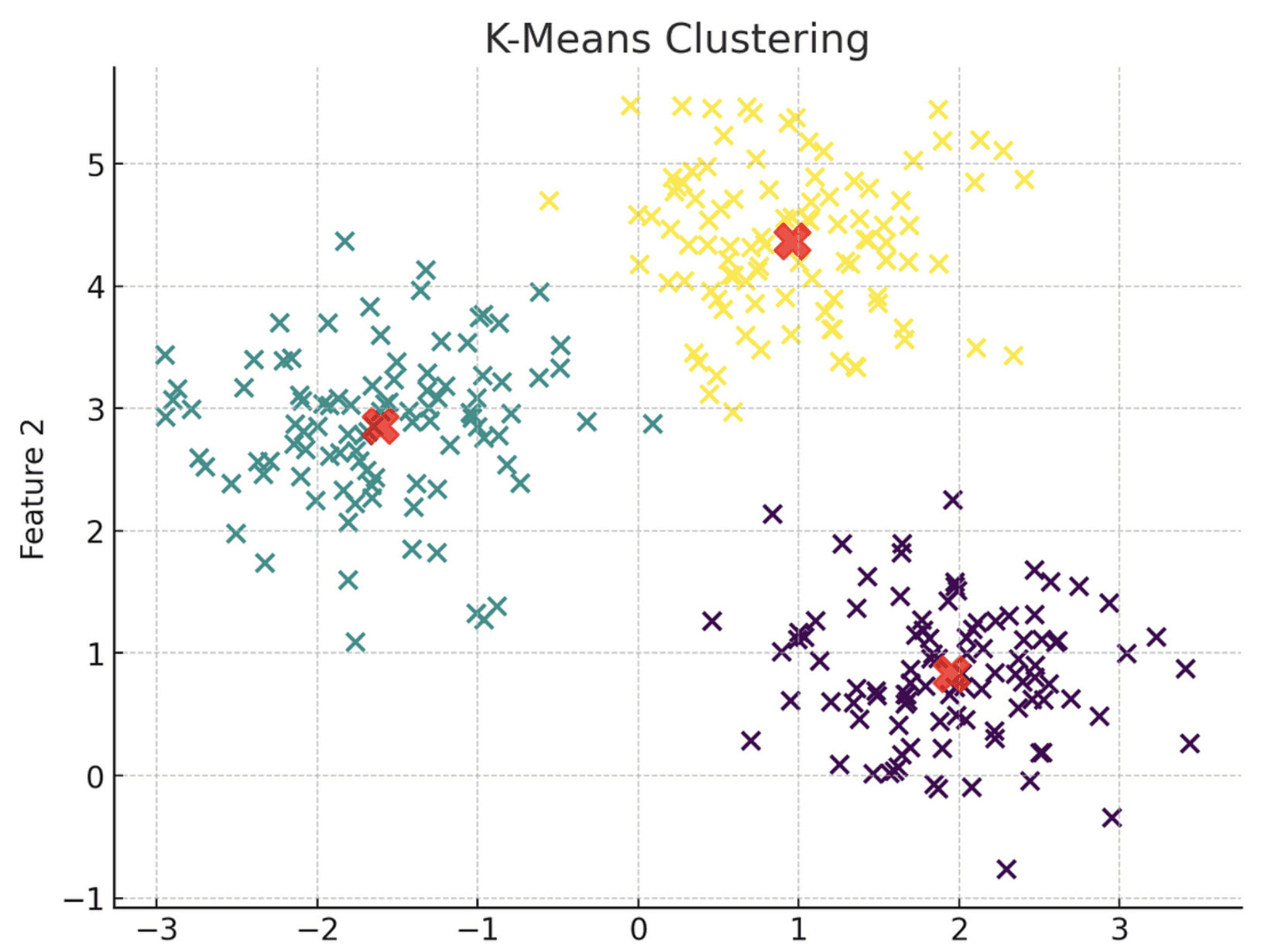

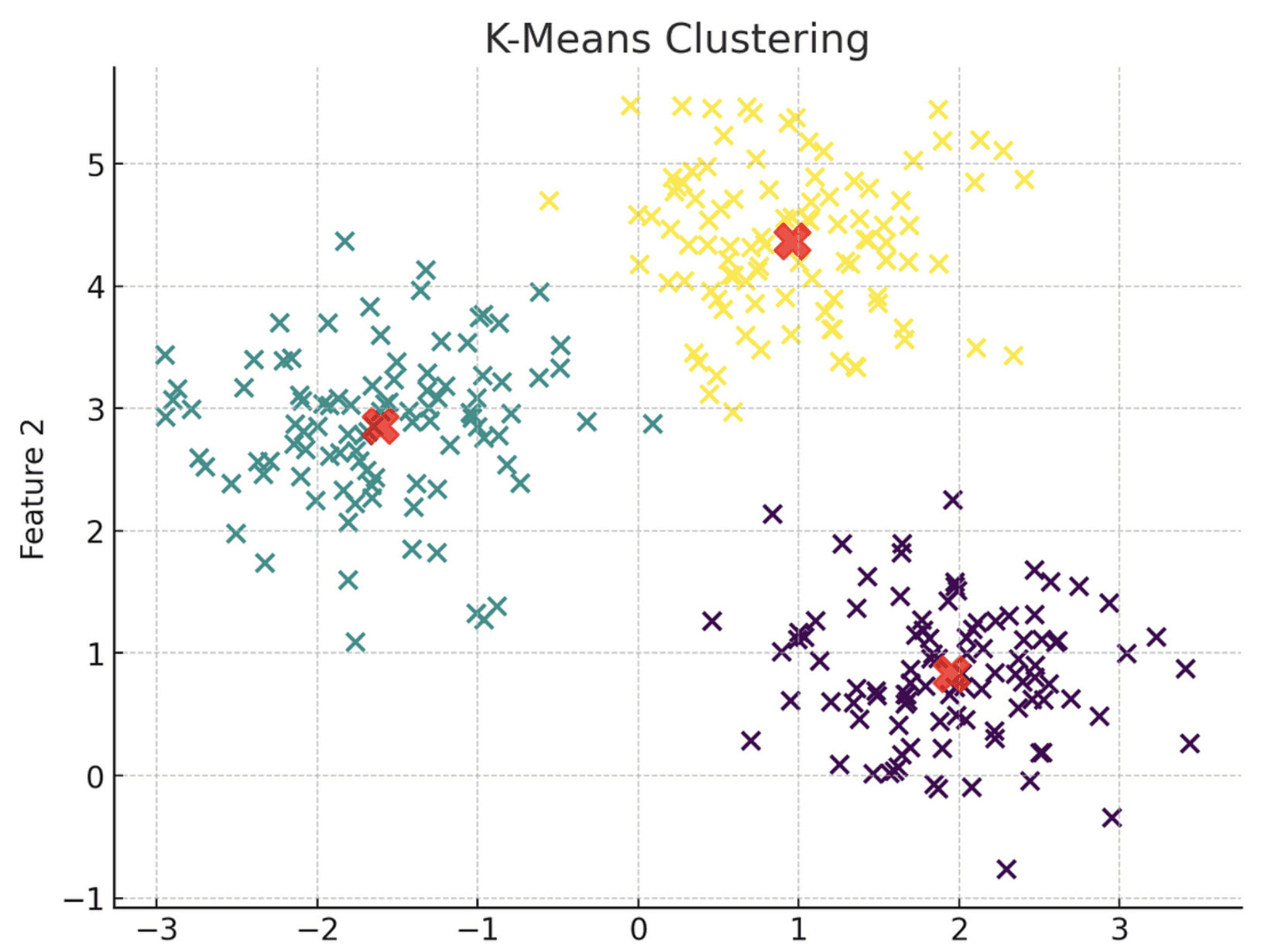

Below is a visual representation of the K-Means clustering model.

Image by author

Notice how the algorithm groups each data point into three separate clusters, each represented by a different color, based on their proximity to the centroid, indicated by the red X.

Simply put, all data points in cluster 1 share similar characteristics and therefore are grouped together. The same principle applies to clusters 2 and 3.

When building a K-Means clustering model, you must explicitly specify the number of clusters to generate.

This can be achieved using a technique called the elbow method, which involves plotting the model's error score versus the various cluster values on a line graph, and then choosing the inflection point, or “elbow,” on the curve as the optimal number of clusters.

Here's a visual representation of the Elbow Method:

Image by author

Note that the inflection point of this curve is at the 3 cluster mark, which means that the optimal number of clusters for this algorithm is 3.

Learning Resources

If you want to learn more about this topic, check out StatQuest

An 8 minute video that gives a good explanation of how K-Means clustering works.

Next steps

The machine learning algorithms discussed in this article are commonly used in applications across industries, including forecasting, spam detection, loan approval, and customer segmentation.

If you made it this far, congratulations! You now have a solid understanding of the most widely used predictive algorithms and have taken the first step towards branching out into the world of machine learning.

But the journey doesn't end here.

To better understand machine learning models and be able to apply them to real-world applications, we recommend learning a programming language such as Python or R.

Freecodecamp's Python for Beginners course

This course is a great starting point, and if you get stuck on your programming journey, there are YouTube videos that explain how to learn to code from scratch.

Once you learn to code, you can actually implement these models using libraries like Scikit-Learn and Keras.

To strengthen your data science and machine learning skills, we encourage you to use generative AI models like ChatGPT to create a learning path that works for you. Below is a more detailed roadmap to help you get started learning data science with ChatGPT.

Natasha Selvaraj Natasha is a self-taught data scientist with a passion for writing. Natasha writes about all things data science and is a true guru on all data topics. You can connect with Natasha on LinkedIn or check out her YouTube channel.