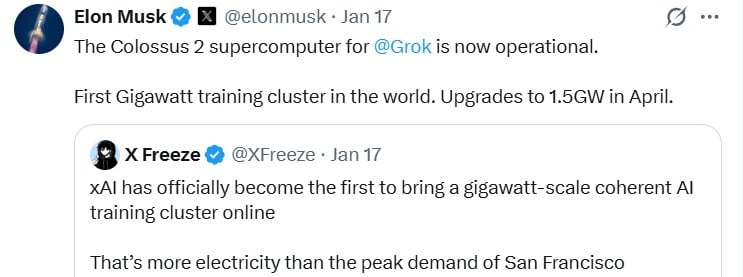

Elon Musk’s xAI announced Colossus 2 on Friday, billing it as the world’s first gigawatt-scale (GW) AI training supercluster. The move puts xAI ahead of rivals like OpenAI and Anthropic, whose comparable clusters are reportedly delayed until 2027 or later.

The main motivation behind the project is to provide the massive computational power needed to train next-generation AI models, especially Grok 4.

Built in record time and powered by on-site gas turbines and Tesla Megapacks, the cluster already draws about as much power as San Francisco’s peak electricity demand. xAI is expected to reach 1.5 gigawatts of capacity by April.

The rapid rollout has drawn praise from Nvidia’s CEO but criticism from activists over contamination in the South Memphis area.

While competitors are still drawing up their plans for 2027, xAI is already operating at the power scale of a major city.

The speed of execution is remarkable, as the timeline below shows.

- Colossus 1 – 122 days from start to full operation

- Colossus 2 – broke the 1 GW barrier and is targeting a total of 2 GW

Musk’s strategy has not fundamentally changed: it is clear, it is being executed at scale, and it is moving faster than anyone else’s. The launch of Colossus 2 marks a significant milestone in computing, setting new records for hardware density and reshaping how AI companies interact with the energy grid and the environment.