Diffusion models now generate high-quality images, and researchers are increasingly applying them to more complex prediction tasks, but significant limitations remain. In particular, these models typically rely on timesteps fixed in advance, which may not be optimal for a given task. Changgyoon Oh, Jongoh Jeong, Jegyung Cho, and Kuk-Jin Yoon of KAIST address this challenge with a system that learns to select the most useful timesteps of the diffusion process. Their work focuses on dense prediction tasks, which require detailed, pixel-level outputs. The approach adds modules for task-aware timestep selection and timestep feature integration. These modules allow models to adapt to new tasks with minimal training data and to achieve strong performance on challenging datasets. The advance could broaden the use of diffusion models for real-world problems that demand accurate, detailed predictions from limited examples.

Efficient fine-tuning of large language models

The study draws on a broad body of research in machine learning, deep learning, and parameter-efficient fine-tuning, with particular attention to vision transformers and multi-task learning. This related work includes foundational architectures such as deep residual networks and U-Net for image segmentation. It also includes methods such as BitFit and LoRA, which adapt large pretrained models while minimizing the number of trainable parameters. Adapter and prompt-tuning techniques are also covered, along with LoRA variants such as MoE-LoRA and LCM-LoRA, highlighting their role in improving model efficiency and performance. The discussion extends to multi-task and transfer learning, including work on disentangling task transfer and sharing knowledge across tasks. It also covers vision transformers such as BEiT and CrossViT and their adaptation to dense prediction, as well as large-scale image-text datasets such as LAION-5B. Related applications include medical image segmentation and skin lesion analysis, along with work on depth estimation and meta-learning.
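To illustrate how LoRA keeps the number of trainable parameters small, the sketch below freezes a weight matrix W0 and learns only a low-rank update B·A, scaled by alpha/r. This is a minimal pure-Python sketch of the general LoRA idea, not code from the paper. The layer sizes, rank, and scaling value are arbitrary assumptions. B is zero-initialized so the adapted layer starts out identical to the frozen one.

```python
import random


def matvec(m, v):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(row[j] * v[j] for j in range(len(v))) for row in m]


def lora_forward(w0, a, b, x, alpha, r):
    """h = W0 x + (alpha / r) * B (A x); W0 stays frozen, only A and B are trained."""
    base = matvec(w0, x)
    update = matvec(b, matvec(a, x))
    return [h + (alpha / r) * u for h, u in zip(base, update)]


def trainable_params(d_out, d_in, r):
    """Trainable parameter counts: full fine-tuning vs. a rank-r LoRA update."""
    return d_out * d_in, r * (d_in + d_out)


# Illustrative sizes only.
d_in, d_out, r, alpha = 8, 6, 2, 4.0
rng = random.Random(0)
w0 = [[rng.gauss(0, 1) for _ in range(d_in)] for _ in range(d_out)]  # frozen weights
a = [[rng.gauss(0, 0.01) for _ in range(d_in)] for _ in range(r)]    # r x d_in, random init
b = [[0.0] * r for _ in range(d_out)]                                # d_out x r, zero init
x = [rng.gauss(0, 1) for _ in range(d_in)]

# With B = 0 the adapted layer reproduces the frozen layer exactly.
assert lora_forward(w0, a, b, x, alpha, r) == matvec(w0, x)

print(trainable_params(768, 768, 8))  # (589824, 12288)
```

Consider a 768×768 layer with rank 8. The low-rank update trains 12,288 values instead of 589,824, about 2% of the full matrix.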

Adaptive timestep selection for diffusion models

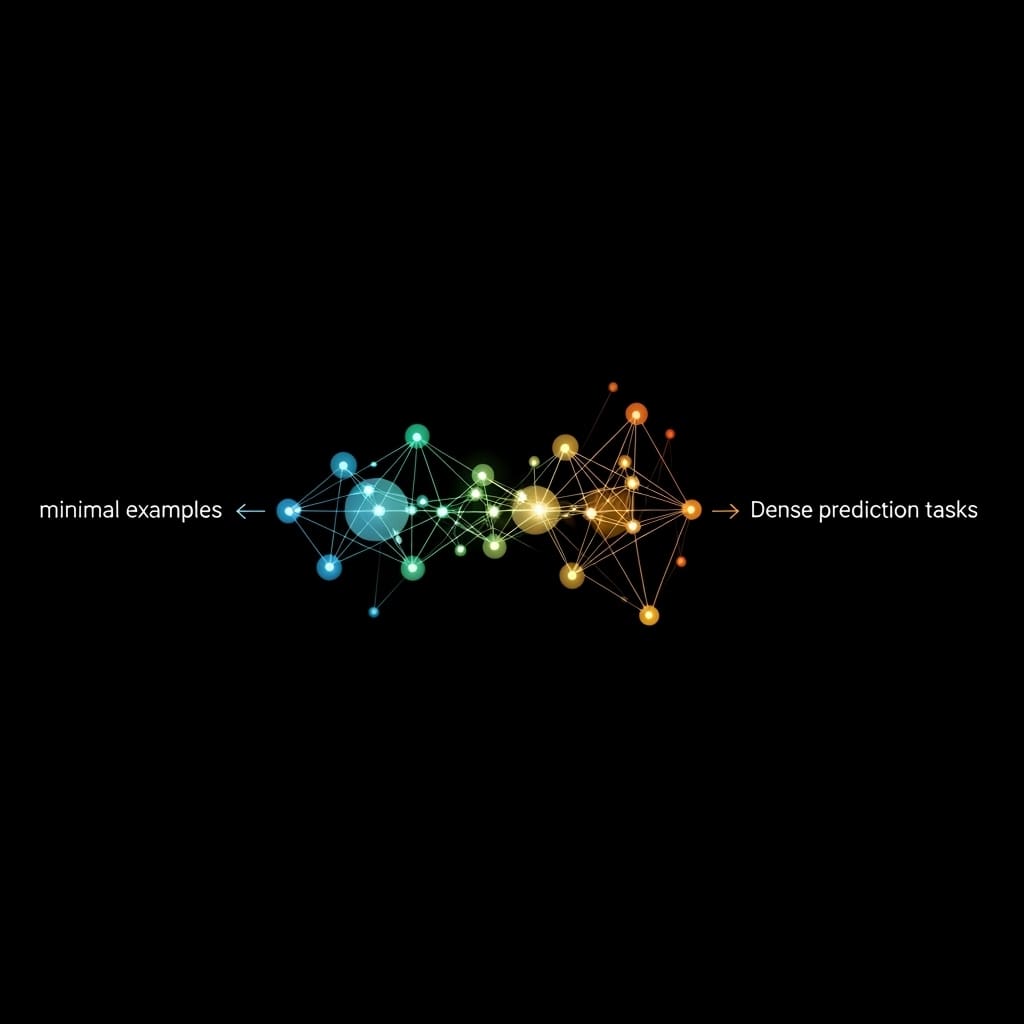

The work introduces a new framework for dense prediction tasks. Instead of relying on empirically determined choices, it adaptively selects the most suitable diffusion timesteps. The researchers combine task-aware timestep selection (TTS) and timestep feature integration (TFC) to move beyond fixed, predefined timesteps. Together, these modules dynamically identify the timesteps most relevant to accurate prediction. The TTS module iteratively evaluates timestep features using loss and similarity scores. The TFC module then integrates these features with label information from the support set to improve performance in few-shot settings. Experiments with a pre-trained latent diffusion model (LDM) on the Taskonomy dataset show strong performance in universal and few-shot learning. The model also generalizes to unseen tasks from limited labeled samples. Built around a parameter-efficient fine-tuning adapter, the approach points toward more robust and adaptive diffusion-based systems. It also introduces a way to identify suitable diffusion timesteps that generalizes across multiple tasks.
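The article does not give the exact scoring rules. The following is therefore only a hypothetical sketch of how loss- and similarity-based timestep selection, followed by feature fusion, could work. It assumes each candidate timestep has a task loss and a pooled feature vector. In each round, it adds the timestep that minimizes its loss plus a penalty for similarity to timesteps already chosen. It then fuses the chosen features with softmax weights. The function names, the penalty weight `lam`, and the toy numbers are all illustrative assumptions, not the authors' TTS or TFC formulation.

```python
import math


def cosine(u, v):
    """Cosine similarity between two feature vectors (0.0 if either is all zeros)."""
    dot = sum(a * b for a, b in zip(u, v))
    nu = math.sqrt(sum(a * a for a in u))
    nv = math.sqrt(sum(b * b for b in v))
    return dot / (nu * nv) if nu and nv else 0.0


def selection_score(t, chosen, losses, feats, lam):
    """Loss at timestep t plus lam times its highest similarity to already-chosen timesteps."""
    redundancy = max((cosine(feats[t], feats[s]) for s in chosen), default=0.0)
    return losses[t] + lam * redundancy


def select_timesteps(losses, feats, k, lam=0.5):
    """Hypothetical greedy selection: add the lowest-scoring timestep each round."""
    chosen = []
    candidates = sorted(losses)  # sorted for deterministic tie-breaking
    while candidates and len(chosen) < k:
        best = min(candidates, key=lambda t: selection_score(t, chosen, losses, feats, lam))
        chosen.append(best)
        candidates.remove(best)
    return chosen


def fuse_features(feats, chosen, logits):
    """Hypothetical fusion: softmax-weighted sum of the chosen timesteps' features.

    In a few-shot setting the logits would be learned on the support set;
    here they are passed in as fixed values.
    """
    m = max(logits)
    weights = [math.exp(z - m) for z in logits]
    total = sum(weights)
    weights = [w / total for w in weights]
    dim = len(feats[chosen[0]])
    return [sum(w * feats[t][d] for w, t in zip(weights, chosen)) for d in range(dim)]


# Toy values, invented purely for illustration.
losses = {100: 0.32, 200: 0.30, 300: 0.36, 500: 0.50}
feats = {100: [1.0, 0.0], 200: [0.9, 0.1], 300: [0.0, 1.0], 500: [0.5, 0.5]}

chosen = select_timesteps(losses, feats, k=2)
print(chosen)  # [200, 300]
print(select_timesteps(losses, feats, k=2, lam=0.0))  # [200, 100]
fused = fuse_features(feats, chosen, logits=[0.0, 0.0])
print([round(v, 2) for v in fused])  # [0.45, 0.55]
```

With the penalty switched off (`lam=0.0`), the second pick is timestep 100, whose features nearly duplicate those of timestep 200. The similarity term instead steers the selection toward the more complementary timestep 300.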

Enhanced diffusion model predictions with adaptive timesteps

The researchers have developed a system that adaptively selects the most suitable diffusion timesteps, yielding clear gains on dense prediction tasks and substantially improving few-shot performance. The study applies latent diffusion models to tasks that require detailed pixel-level predictions. It builds on the observation that timesteps early in the denoising process (high noise) emphasize global structure, whereas later timesteps (low noise) capture finer detail. To exploit these characteristics, the team introduced task-aware timestep selection (TTS) and timestep feature integration (TFC). TTS iteratively searches for the most relevant timestep features based on loss and similarity scores, and TFC integrates these features to improve prediction with limited data. Experiments on the Taskonomy dataset demonstrate the approach's effectiveness in universal and few-shot learning. In these settings, the system generalizes to unseen tasks from only a few support samples. The results show good performance with a small support set and establish a method for identifying suitable diffusion timesteps. They also extend the applicability of pre-trained diffusion models to multiple tasks.
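To make the early-versus-late distinction concrete, the sketch below computes how much of the clean input survives at each timestep of the forward noising process, x_t = sqrt(alpha_bar_t)·x_0 + sqrt(1 - alpha_bar_t)·noise. It assumes the linear beta schedule from the original DDPM paper (1e-4 to 0.02 over 1,000 steps). Latent diffusion models commonly use a different schedule, so the printed values are illustrative only. Denoising runs from large t to small t. As a result, "early" timesteps see inputs dominated by noise, where only coarse, global structure is recoverable. "Late" timesteps see nearly clean inputs and can resolve fine detail.

```python
import math


def alpha_bars(num_steps=1000, beta_start=1e-4, beta_end=0.02):
    """Return alpha_bar_t = prod_{s<=t} (1 - beta_s) for a linear beta schedule."""
    values = []
    prod = 1.0
    for i in range(num_steps):
        beta = beta_start + (beta_end - beta_start) * i / (num_steps - 1)
        prod *= 1.0 - beta
        values.append(prod)
    return values


# x_t = sqrt(alpha_bar_t) * x_0 + sqrt(1 - alpha_bar_t) * noise
abar = alpha_bars()
for t in (0, 250, 500, 750, 999):
    signal = math.sqrt(abar[t])       # weight on the clean (latent) image
    noise = math.sqrt(1.0 - abar[t])  # weight on the Gaussian noise
    print(f"t={t:4d}  signal={signal:.3f}  noise={noise:.3f}")
```

The signal weight falls monotonically with t. Features extracted at a large t therefore reflect a heavily corrupted input, while those at a small t stay close to the original image.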

Adaptive timesteps enhance few-shot predictions

This study highlights the importance of choosing diffusion timesteps carefully to achieve good few-shot performance on dense prediction tasks. The framework combines task-aware timestep selection and timestep feature integration. It adaptively identifies and refines the most useful timesteps of the diffusion process, improving prediction accuracy with limited data. Applied to large-scale datasets, the method yielded a noticeable improvement in dense prediction performance, particularly in universal and unseen-task scenarios. The proposed modules are computationally efficient, adding only a small fraction of the total computational cost and model parameters. They can also be readily applied to other diffusion-model-based applications. The current study selects timesteps based on similarity scores, and the authors acknowledge possible limits to broader generalization. They suggest that future research explore more robust methods and extend the evaluation to larger and more diverse datasets.

👉 More information

🗞 Task-oriented learnable diffusion timestep for universal few-shot learning of dense tasks

🧠 arXiv: https://arxiv.org/abs/2512.23210