Dataset and preprocessing

Experimental dataset

The data used in this paper come from the DAIC-WOZ dataset, which is part of a larger clinical interview corpus, the Distress Analysis Interview Corpus (DAIC)40. The interviews in this dataset are conducted by a virtual human interviewer. Each audio file contains the dialogue between a patient and a virtual agent named Ellie, recorded at a sampling rate of 16 kHz. The dataset provides 189 audio files, which have been divided into training, validation, and test sets. The audio IDs range from 300 to 492, with IDs 342, 394, 398, and 460 excluded for technical reasons. The dataset also provides each patient’s ID, binary label, and PHQ-8 score. Further details are given in Table 2.
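The session-ID arithmetic above can be checked with a short Python snippet. It only enumerates the stated ID range and exclusions and does not read the dataset.

```python
# Illustrative check: IDs 300-492 inclusive, minus the four excluded sessions.
EXCLUDED_IDS = {342, 394, 398, 460}
session_ids = [i for i in range(300, 493) if i not in EXCLUDED_IDS]

print(len(session_ids))  # 189
```

The range holds 193 IDs. Removing the four excluded sessions leaves the 189 audio files listed in Table 2.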

The sample distribution of PHQ-8 scores is shown in Fig. 1.

Sample distribution of PHQ-8 score.

Preprocessing

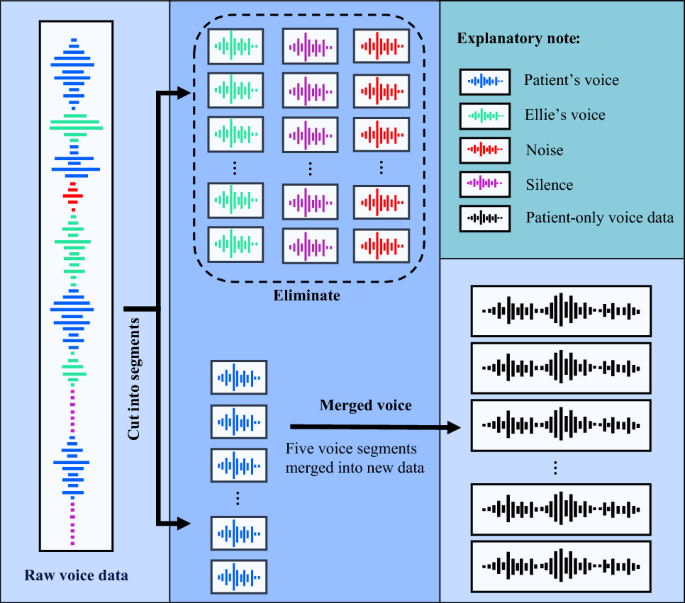

The audio files in the DAIC-WOZ dataset were preprocessed to improve data quality. Wavelet transforms and signal denoising are commonly used for this purpose41. However, because the goal of this study is to train a robust model, we adopted a different strategy: voice segmentation and merging, which extracts segments that contain only the patient’s voice.

The TRANSCRIPT files in the DAIC-WOZ dataset record the start and end times of every utterance in each conversation. There are 189 TRANSCRIPT files in total, one per participant. Excerpts from these records are shown in Table 3.

First, we used the start and end times of each patient utterance recorded in the transcript files to split the 189 raw audio files into independent segments. Each segment contains a single sentence spoken by the patient, and this step produced more than 30,000 short segments. These segments were then merged in sequential order, five at a time, which yielded 6545 new audio files. Merging was performed only within the same patient ID, so no merged file contains speech from more than one patient.

In the same step, Ellie’s voice and long periods of silence were excluded, leaving patient-only voice data. The voice data preprocessing steps are outlined in Fig. 2.

Voice data preprocessing steps.
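A minimal Python sketch of the segmentation and merging steps follows. It rests on several assumptions:

- Each TRANSCRIPT file has already been parsed into `(start_time, stop_time, speaker, text)` rows, with times in seconds.
- The patient's speaker value is `"Participant"`.
- The function names are hypothetical.
- The text does not state how a final group of fewer than five segments was handled, so this sketch keeps it.

```python
import numpy as np

SAMPLE_RATE = 16_000  # DAIC-WOZ audio is sampled at 16 kHz

def extract_participant_segments(audio, rows, sr=SAMPLE_RATE):
    """Cut one segment per patient utterance from one session's audio.

    rows: (start_time, stop_time, speaker, text) tuples in seconds (assumed format).
    Ellie's turns and the silent gaps between turns are never copied.
    """
    segments = []
    for start, stop, speaker, _text in rows:
        if speaker != "Participant":  # assumed speaker tag for the patient
            continue
        a, b = int(round(start * sr)), int(round(stop * sr))
        if b > a:
            segments.append(audio[a:b])
    return segments

def merge_in_groups(segments, group_size=5):
    """Concatenate consecutive segments in order, group_size at a time.

    A trailing group shorter than group_size is kept (an assumption).
    """
    return [np.concatenate(segments[i:i + group_size])
            for i in range(0, len(segments), group_size)]

def preprocess_session(audio, rows, group_size=5, sr=SAMPLE_RATE):
    # Called once per session, so only one patient's utterances are ever merged.
    return merge_in_groups(extract_participant_segments(audio, rows, sr), group_size)
```

Because `preprocess_session` operates on a single session at a time, the same-patient constraint holds by construction rather than through an explicit ID check.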

Redivision of dataset

Preprocessing produced a sufficient number of samples, which helped alleviate the sample imbalance problem, so data augmentation was not needed. The preprocessed dataset was then randomly divided into training, validation, and test sets in a 6:2:2 ratio. Table 4 summarizes the dataset after preprocessing and redivision.

The training and validation sets were used to fine-tune the model and evaluate its performance. The test set was used to assess how reliably the model would perform in practical applications. To keep label proportions balanced across the three subsets, the samples of the four categories (non, mild, moderate, and severe) were split separately and then combined (stratified splitting). For reproducibility, the random seed for the division was fixed at 103.
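The stratified 6:2:2 split can be sketched as follows. This is an illustration under stated assumptions, not the authors’ code:

- It uses Python’s `random.Random(103)`, which will not reproduce the exact partition in Table 4.
- It floors the per-class training and validation counts and assigns the remainder to the test set.
- The function name is hypothetical.

```python
import random
from collections import defaultdict

def stratified_split(samples, labels, ratios=(0.6, 0.2, 0.2), seed=103):
    """Shuffle and split each class separately, then recombine the pieces."""
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for sample, label in zip(samples, labels):
        by_class[label].append(sample)

    train, val, test = [], [], []
    for label in sorted(by_class):  # fixed class order keeps the split deterministic
        items = by_class[label][:]
        rng.shuffle(items)
        n_train = int(len(items) * ratios[0])
        n_val = int(len(items) * ratios[1])
        train += [(s, label) for s in items[:n_train]]
        val += [(s, label) for s in items[n_train:n_train + n_val]]
        test += [(s, label) for s in items[n_train + n_val:]]
    return train, val, test
```

Because every class is split with the same ratios, each subset keeps approximately the overall label proportions.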

Model and experimental setup

Voice recognition model

Combining pre-training with fine-tuning has proven to be an effective learning approach42. During pre-training, a model learns meaningful representations from a large unlabeled dataset in an unsupervised manner. During fine-tuning, these representations are adapted to the target task with labeled data. Because the pre-training stage supplies a wealth of prior information, it acts as a regularizer and helps prevent over-fitting to the smaller task-specific dataset.

The wav2vec 2.0 speech pre-training model has strong potential in voice processing: it learns discrete speech units and contextualized representations end to end. It evolved from earlier models such as contrastive predictive coding (CPC), wav2vec, and vq-wav2vec. As described by Baevski et al.28, it consists of a feature encoder module, a quantization module, and a transformer module, as shown in Fig. 3. The model extracts voice features automatically in the following steps.

Framework of the wav2vec 2.0 pre-trained model.

First, the feature encoder maps the raw audio \(X\) to latent voice representations \(Z:\left\{ {z_{1} ,z_{2} , \cdots ,z_{n} } \right\}\), denoted \(f:X \to Z\). Specifically, \(X\) is passed through a stack of convolutional layers, each consisting of a temporal convolution followed by a GELU activation. In the first layer, group normalization is applied to each output channel before the GELU. The output of each layer is normalized to improve robustness. An L2 penalty is applied to the activations of the last encoder layer to stabilize training. Through this stack of convolutional layers, the feature encoder extracts high-level information from the voice signal.
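To make the first encoder block concrete, here is a NumPy sketch of the forward pass: a temporal convolution, then per-channel group normalization, then GELU. It is simplified in four ways:

- The convolution has no bias.
- The learnable scale and shift of group normalization are omitted.
- GELU uses the common tanh approximation.
- The toy channel count in the usage below is smaller than the real model’s 512 channels.

The function names are hypothetical.

```python
import numpy as np

def conv1d_valid(x, w, stride):
    """Strided 1-D convolution without padding or bias.

    x: (in_channels, T); w: (out_channels, in_channels, k). Returns (out_channels, n_out).
    """
    _, _, k = w.shape
    n_out = (x.shape[1] - k) // stride + 1
    # Sample indices covered by each output position: shape (n_out, k).
    idx = stride * np.arange(n_out)[:, None] + np.arange(k)[None, :]
    windows = x[:, idx]  # (in_channels, n_out, k)
    return np.einsum("oik,ink->on", w, windows)

def group_norm_per_channel(h, eps=1e-5):
    """Group normalization with one group per channel, normalized over time."""
    mean = h.mean(axis=1, keepdims=True)
    var = h.var(axis=1, keepdims=True)
    return (h - mean) / np.sqrt(var + eps)

def gelu(h):
    """GELU activation (tanh approximation)."""
    return 0.5 * h * (1.0 + np.tanh(np.sqrt(2.0 / np.pi) * (h + 0.044715 * h ** 3)))

def first_encoder_block(waveform, w, stride=5):
    """Temporal convolution -> per-channel group norm -> GELU."""
    return gelu(group_norm_per_channel(conv1d_valid(waveform[None, :], w, stride)))
```

For example, one second of 16 kHz audio, a kernel width of 10, and a stride of 5 give (16000 - 10) // 5 + 1 = 3199 output frames per channel.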

Second, the quantization module discretizes the feature encoder output \(Z\) into a set of quantized voice features \(Q:\left\{ {q_{1} ,q_{2} , \cdots ,q_{n} } \right\}\), denoted \(h:Z \to Q\). \(Q\) serves as the target of the self-supervised objective. Quantization makes the real voice features easier to predict, because \(Z\) is mapped onto a finite set of voice representations. Selecting a discrete entry is not differentiable, which would block back-propagation, so the module uses the Gumbel softmax to make the selection differentiable.
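The Gumbel-softmax selection can be illustrated with a simplified NumPy forward pass. Two simplifications apply:

- The sketch uses a single codebook, whereas wav2vec 2.0 uses multiple codebooks whose selected entries are combined.
- The temperature value `tau` and the function name are illustrative assumptions.

During training, the forward pass uses the hard one-hot choice while gradients flow through the soft probabilities (a straight-through estimator). NumPy alone cannot show that part.

```python
import numpy as np

def gumbel_softmax_quantize(logits, codebook, tau=2.0, rng=None):
    """Choose one codebook entry per frame using Gumbel-softmax sampling.

    logits: (T, V) scores over V codewords; codebook: (V, d).
    Returns quantized vectors (T, d), soft probabilities (T, V), chosen indices (T,).
    """
    rng = np.random.default_rng() if rng is None else rng
    u = rng.uniform(low=1e-10, high=1.0, size=logits.shape)  # avoid log(0)
    gumbel = -np.log(-np.log(u))                             # Gumbel(0, 1) noise
    y = (logits + gumbel) / tau
    y = y - y.max(axis=1, keepdims=True)                     # numerical stability
    probs = np.exp(y) / np.exp(y).sum(axis=1, keepdims=True)
    idx = probs.argmax(axis=1)                               # hard choice (forward pass)
    one_hot = np.eye(logits.shape[1])[idx]
    return one_hot @ codebook, probs, idx
```

Each output row is exactly one codebook vector, so \(Q\) is drawn from a finite set, as described above.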

Third, the latent voice representations \(Z\) from the feature encoder are fed directly into the transformer module to produce context representations \(C:\left\{ {c_{1} ,c_{2}, \cdots ,c_{n} } \right\}\), denoted \(g:Z \to C\). Before \(Z\) enters the transformer, approximately half of its time steps are masked, that is, replaced by a shared learned mask vector. Training then pulls the context representation \(c_{i}\) at each masked position toward the corresponding quantized feature \(q_{i}\). Owing to the self-attention mechanism, each representation in the output sequence can contain both local and global information10. The transformer module thus captures long-distance dependencies and global context, further improving the model’s speech recognition performance.
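The masking step can be sketched as span masking over the frame axis. The values p = 0.065 and M = 10 are the defaults reported by Baevski et al. for wav2vec 2.0 and are assumptions here. With these values, roughly half of the frames end up masked, which matches the proportion stated above. The constant `mask_embedding` stands in for the learned vector, and the function names are hypothetical.

```python
import numpy as np

def compute_span_mask(T, p=0.065, M=10, rng=None):
    """Boolean mask over T frames.

    Samples round(p * T) start indices without replacement, then masks the M
    consecutive frames that follow each start. Spans may overlap.
    """
    rng = np.random.default_rng() if rng is None else rng
    num_starts = int(round(p * T))
    starts = rng.choice(T - M + 1, size=num_starts, replace=False)
    mask = np.zeros(T, dtype=bool)
    for s in starts:
        mask[s:s + M] = True
    return mask

def apply_mask(Z, mask, mask_embedding):
    """Replace the masked frames of Z (T, d) with one shared vector (d,)."""
    Z = Z.copy()
    Z[mask] = mask_embedding
    return Z
```

Overlapping spans are why the masked fraction is close to one half rather than p × M = 65%.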

Finally, the quantized voice representations \(q_{i}\) and the context representations \(c_{i}\) are used to compute the contrastive loss \(L\). For each \(c_{i}\) at a masked position, the positive example is the quantized vector \(q_{i}\) at the same position. The negative examples are \(K\) quantized vectors \(\tilde{q}\) taken from other masked positions, and the candidate set \(Q_{\text{t}}\) contains \(q_{i}\) together with these \(K\) distractors. Here \(sim( \cdot , \cdot )\) denotes cosine similarity and \(\kappa\) is a temperature. Once pre-training has converged, the high-quality voice features produced by wav2vec 2.0 can be fed into a small fine-tuning network for voice depression recognition. The loss function is defined in Eq. (1):

$$L = - \log \frac{{\exp (sim(c_{i} ,q_{i} )/\kappa )}}{{\sum\nolimits_{{\tilde{q} \in Q_{{\text{t}}} }} {\exp (sim(c_{i} ,\tilde{q})/\kappa )} }}$$

(1)
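Eq. (1) can be evaluated for a single masked position with the NumPy sketch below. It uses cosine similarity for \(sim( \cdot , \cdot )\), an assumed temperature \(\kappa = 0.1\), and a numerically stable log-sum-exp. The function names are hypothetical.

```python
import numpy as np

def cosine_sim(a, B):
    """Cosine similarity between vector a (d,) and each row of B (n, d)."""
    return (B @ a) / (np.linalg.norm(B, axis=1) * np.linalg.norm(a))

def contrastive_loss(c_i, q_i, distractors, kappa=0.1):
    """Eq. (1) for one masked position.

    The candidate set holds the true target q_i (row 0) plus K distractors (K, d).
    """
    candidates = np.vstack([q_i[None, :], distractors])  # (K + 1, d)
    logits = cosine_sim(c_i, candidates) / kappa
    m = logits.max()
    log_denominator = m + np.log(np.exp(logits - m).sum())
    return log_denominator - logits[0]  # -log(softmax probability of q_i)
```

The loss is never negative. It approaches 0 only when \(c_{i}\) is much more similar to \(q_{i}\) than to any distractor.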

wav2vec 2.0 comes in two variants, Base and Large. Both share the same feature encoder architecture but differ in the number of transformer blocks and the model dimension. The Base model is pre-trained on the LibriSpeech corpus. Its feature encoder has 7 convolutional layers, each with 512 channels, strides of (5, 2, 2, 2, 2, 2, 2), and kernel widths of (10, 3, 3, 3, 3, 2, 2), and it is followed by a 12-layer transformer. The Base model converges faster than the Large model and requires fewer computational resources. It is therefore better suited to small datasets or scenarios with limited computing power.
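The stride and kernel values above determine how many feature frames the encoder emits. The sketch below assumes unpadded convolutions and derives the receptive field and hop of one output frame. At 16 kHz, this works out to a 25 ms window every 20 ms.

```python
# Feature-encoder settings of wav2vec 2.0 Base, as listed in the text.
STRIDES = (5, 2, 2, 2, 2, 2, 2)
KERNELS = (10, 3, 3, 3, 3, 2, 2)

def num_frames(num_samples, strides=STRIDES, kernels=KERNELS):
    """Output length after the stacked convolutions (no padding assumed)."""
    n = num_samples
    for k, s in zip(kernels, strides):
        n = (n - k) // s + 1
    return n

def receptive_field_and_hop(strides=STRIDES, kernels=KERNELS):
    """Receptive field and total stride of one output frame, in samples."""
    rf, hop = 1, 1
    for k, s in zip(kernels, strides):
        rf += (k - 1) * hop  # each layer widens the view by (k - 1) input hops
        hop *= s
    return rf, hop

rf, hop = receptive_field_and_hop()
print(rf, hop)            # 400 320
print(num_frames(16000))  # 49
```

So one second of audio yields 49 latent vectors \(z_{i}\). Each vector summarizes 400 samples, and consecutive vectors are 320 samples apart.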

Because wav2vec 2.0 has been pre-trained on a large amount of voice data, it can quickly and accurately extract high-quality voice features. We fine-tuned it for depression recognition on the DAIC-WOZ dataset. All experiments used the Base model, with a small fine-tuning network connected to its output for voice depression recognition, as shown in Fig. 4.

Overall framework of voice depression recognition.

As shown in Fig. 4, the voice signal is fed into the wav2vec 2.0 Base model. The feature encoder module captures local information in the signal, and the transformer module captures global speech features, together producing high-quality voice features. These features are then pooled over time, which expands the model’s receptive field and improves both the accuracy and the robustness of recognition. Next, a dropout layer in the small fine-tuning network guards against over-fitting. Randomly dropping neurons during training reduces co-adaptation between them, so weight updates depend less on any fixed set of hidden nodes. The model was fine-tuned iteratively, and its predictions were output once the loss function reached the desired value.
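A minimal NumPy sketch of the fine-tuning head in Fig. 4 follows. The text does not specify the pooling type, so mean pooling over time is assumed. The dropout rate of 0.1, the weight shapes, and the function name are also illustrative assumptions. The input width of 768 matches the hidden size of wav2vec 2.0 Base.

```python
import numpy as np

def classification_head(hidden_states, W, b, dropout_p=0.1, training=False, rng=None):
    """Mean-pool frame features, apply dropout in training, then linear + softmax.

    hidden_states: (T, 768) frame-level wav2vec 2.0 outputs.
    W: (768, n_classes); b: (n_classes,). Returns class probabilities.
    """
    pooled = hidden_states.mean(axis=0)  # utterance-level vector (768,)
    if training and dropout_p > 0:
        rng = np.random.default_rng() if rng is None else rng
        keep = rng.random(pooled.shape) >= dropout_p
        pooled = pooled * keep / (1.0 - dropout_p)  # inverted dropout keeps the scale
    logits = pooled @ W + b
    logits = logits - logits.max()  # numerical stability
    probs = np.exp(logits)
    return probs / probs.sum()
```

With `training=False`, dropout is disabled, so inference is deterministic. The same head serves both tasks; only `n_classes` changes (2 or 4).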

Experimental tasks

Current research on depression focuses predominantly on binary classification, and multi-class classification remains little explored. This study therefore defines two experimental tasks: binary classification and multi-class classification.

In the binary classification task, the model detects whether a patient is likely to have depression. Each voice sample was labeled dep or ndep according to the binary label provided by the DAIC-WOZ dataset.

In the multi-class classification task, depression severity was divided into four levels: no, mild, moderate, and severe depression. The PHQ-8 score (range 0–24) was discretized into four intervals, [0–4], [5–9], [10–14], and [15–24], labeled non, mild, moderate, and severe, respectively.
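The four-class labeling rule can be written as a small Python function (name hypothetical), which makes the interval boundaries explicit:

```python
def phq8_to_class(score):
    """Map a PHQ-8 total score (0-24) to the four severity labels."""
    if not 0 <= score <= 24:
        raise ValueError(f"PHQ-8 score out of range: {score}")
    if score <= 4:
        return "non"
    if score <= 9:
        return "mild"
    if score <= 14:
        return "moderate"
    return "severe"
```

For instance, scores of 9 and 10 fall on opposite sides of the mild/moderate boundary.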

Parameter setting

The experimental environment was as follows. CPU: 11th Gen Intel(R) Core(TM) i7-11700 @ 2.50 GHz; GPU: NVIDIA GeForce RTX 3090; RAM: 24 GB; operating system: 64-bit Ubuntu 20.04.4 LTS; CUDA: 11.6; Python: 3.7.

During fine-tuning, the pre-trained network parameters are also updated. The pre-trained model has already been trained on a large amount of data, and its parameters are already in a good state. A small learning rate should therefore be chosen to avoid large parameter changes, because a large learning rate can alter the parameters drastically and degrade model performance. We compared the effect of different learning rates (\(1 \times 10^{-4}\), \(1 \times 10^{-5}\), \(1 \times 10^{-6}\)) on model performance and found that the model performed best with a learning rate of \(1 \times 10^{-5}\). This value was used in all subsequent experiments. In addition, computational resources were limited and memory overflow had to be avoided. The batch size was therefore set to 4 per step with two gradient accumulation steps, giving an effective batch size of 8.
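Gradient accumulation works because, for a loss averaged over the batch, two things give the same gradient:

- summing the gradients of two micro-batches of 4, each scaled by 1/2, and
- taking the gradient of one batch of 8.

The NumPy sketch below checks this on a toy mean-squared-error problem. The linear model and the function name are purely illustrative and unrelated to the actual network.

```python
import numpy as np

def mse_grad(w, X, y):
    """Gradient of (1/n) * ||X w - y||^2 with respect to w."""
    return 2.0 * X.T @ (X @ w - y) / len(y)

rng = np.random.default_rng(103)
X, y, w = rng.standard_normal((8, 3)), rng.standard_normal(8), rng.standard_normal(3)

# One step with batch size 8 ...
full_grad = mse_grad(w, X, y)

# ... versus 2 accumulation steps with batch size 4, each scaled by 1/2.
accum_steps, micro = 2, 4
accum_grad = np.zeros_like(w)
for k in range(accum_steps):
    sl = slice(k * micro, (k + 1) * micro)
    accum_grad += mse_grad(w, X[sl], y[sl]) / accum_steps

print(np.allclose(full_grad, accum_grad))  # True
```

The optimizer step is taken only after the second micro-batch, so peak memory is set by a batch of 4 while the update matches a batch of 8.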

Evaluation methods

When samples are imbalanced, a single metric may give an incomplete picture of performance. The classification performance of the model was therefore assessed with five metrics: accuracy, precision, recall, F1 score, and RMSE. Accuracy is calculated as in Eq. (2)43,44,45:

$$Accuracy = \frac{TP + TN}{{TP + TN + FP + FN}}$$

(2)

The calculation formulas for Precision, Recall, and F1 score are shown in Eqs. (3)–(5)46,47,48:

$$Precision = \frac{TP}{{TP + FP}}$$

(3)

$$Recall = \frac{TP}{{TP + FN}}$$

(4)

$$F1 = \frac{2 \times Precision \times Recall}{{Precision + Recall}} = \frac{2TP}{{2TP + FP + FN}}$$

(5)

where TP (true positives) is the number of positive samples predicted as positive; TN (true negatives) is the number of negative samples predicted as negative; FP (false positives) is the number of negative samples predicted as positive; and FN (false negatives) is the number of positive samples predicted as negative.

Precision measures the proportion of samples predicted as positive that are actually positive. Recall measures the proportion of actual positive samples that are correctly predicted. The F1 score is the harmonic mean of precision and recall and therefore accounts for both.
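Eqs. (2)–(5) can be computed from label lists as sketched below. A few assumptions apply:

- For the binary task, the positive class is dep.
- For the four-class task, per-class counts can be obtained by treating one class at a time as positive (one-vs-rest). The text does not state how per-class scores were averaged.
- Returning 0.0 for a zero denominator is an assumed convention.
- The function names are hypothetical.

```python
def confusion_counts(y_true, y_pred, positive="dep"):
    """Count TP, TN, FP, FN, treating `positive` as the positive class."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == positive and p == positive for t, p in pairs)
    tn = sum(t != positive and p != positive for t, p in pairs)
    fp = sum(t != positive and p == positive for t, p in pairs)
    fn = sum(t == positive and p != positive for t, p in pairs)
    return tp, tn, fp, fn

def classification_metrics(tp, tn, fp, fn):
    """Accuracy, precision, recall, and F1 as in Eqs. (2)-(5)."""
    total = tp + tn + fp + fn
    return {
        "accuracy": (tp + tn) / total if total else 0.0,
        "precision": tp / (tp + fp) if tp + fp else 0.0,
        "recall": tp / (tp + fn) if tp + fn else 0.0,
        "f1": 2 * tp / (2 * tp + fp + fn) if tp else 0.0,
    }
```

For example, with TP = 8, TN = 5, FP = 2, and FN = 1, the metrics are:

- accuracy = 13/16 = 0.8125
- precision = 0.8
- recall = 8/9
- F1 = 16/19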

The formula for RMSE is given in Eq. (6)33,49:

$$RMSE = \sqrt {\frac{1}{m}\sum\limits_{i = 1}^{m} {(y_{i} - \widehat{y}_{i} )}^{2} }$$

(6)

where \(y_{i}\) is the true value, \(\widehat{y}_{i}\) is the predicted value, and \(m\) is the number of samples. RMSE measures how far the predicted values deviate from the true values; the smaller the RMSE, the more accurate the model’s predictions.
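Eq. (6) translates directly into NumPy. The text does not say which values \(y_{i}\) hold in the classification tasks (for example, class indices or PHQ-8 scores), so the sketch simply treats them as numbers. The function name is hypothetical.

```python
import numpy as np

def rmse(y_true, y_pred):
    """Root-mean-square error, Eq. (6)."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    return float(np.sqrt(np.mean((y_true - y_pred) ** 2)))

print(rmse([1, 2, 3], [1, 2, 5]))  # sqrt(4/3), about 1.1547
```

Because errors are squared before averaging, a single large miss (here, 3 predicted as 5) dominates the result.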