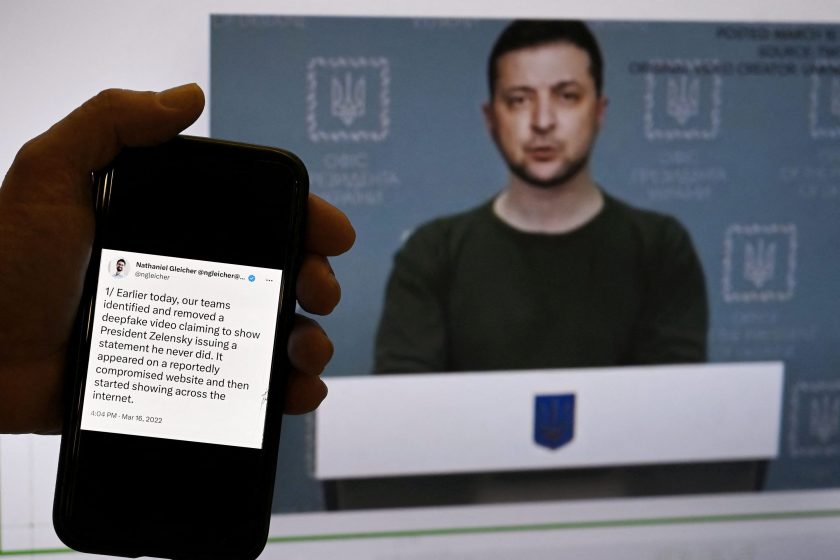

This photo illustration, taken on January 30, 2023 in Washington, D.C., shows a phone screen displaying a statement from Meta's security policy officer. In the background is a fake video of Ukrainian President Volodymyr Zelensky calling on his soldiers to lay down their weapons. (Olivier Douliery/AFP via Getty Images)

Social media is flooded with fake images of a stylish Pope Francis, Elon Musk protesting in New York, and Donald Trump resisting arrest.

Such AI-generated images and videos, or deepfakes, are becoming more accessible thanks to advances in artificial intelligence. As sophisticated image hoaxes become more prevalent, it is increasingly difficult for users to distinguish real images from fake ones.

Deepfakes get their name from the technology used to create them: deep learning neural networks. When unleashed on a dataset, these algorithms can learn patterns and replicate them in novel and compelling ways.

This technology can be used for entertainment, but it also has dark potential that raises social and ethical concerns.

Unlike simplistic stories and memes, which differ little from the propaganda techniques of Nazi Germany or the photo-editing of communist Russia, deepfakes have a high degree of realism. Their accessibility to ordinary citizens and states alike can undermine our sense of reality.

Fake news anchors

Beyond growing concerns that AI-generated art threatens human art and artists, deepfakes could be used as an unchecked mouthpiece for organizations and nations.

Leading the way, China’s state media is experimenting with an AI newscaster named Ren Xiaorong. Ren isn’t the first Chinese-developed AI news anchor, but it shows both a commitment to technology and an incremental increase in realism.

Other countries such as Kuwait and Russia have also launched AI-generated anchors.

Looking at these anchors, such as Russia's first robotic news anchor, you might counter that only the most naive viewer would mistake them for real humans. Even so, they cannot be dismissed.

Fake news

China's transparency in using AI-generated news anchors contrasts with Venezuela's fabricated news reporting. Venezuelan state media has released a favorable report on the country's progress, purportedly produced by an international English-language news outlet. However, both the story and the anchor are fabricated.

The use of these videos in Venezuela is particularly troubling because they are presented as external verification of government activity. Claiming that a video comes from outside the country makes it look like an independent source that corroborates the government's claims.

Venezuela is not alone in adopting these methods. A fabricated video was also circulated of Ukrainian President Volodymyr Zelensky discussing surrender to Russia during the ongoing Russian-Ukrainian conflict.

Falsified images and videos are just the tip of the deepfake iceberg. In 2021, Russia was accused of using deepfake image filters to impersonate opponents during interviews with international politicians. The ability to imitate political figures and interact with others in real time is a truly disturbing development.

As these technologies become available to everyone from harmless meme creators to aspiring social engineers, the lines between reality and imagination become increasingly blurred.

The proliferation of deepfakes portends a post-truth world defined by fragmented geopolitical landscapes, echo chambers of opinion, and mutual mistrust that can be exploited by governments and non-governmental organizations.

Disinformation and believable fakes

Understanding the spread of disinformation requires understanding how ideas, innovations, or actions spread within social networks, a process called social contagion.

Cognitive science is concerned with "information": anything that reduces uncertainty about the actual state of the world. Disinformation has the appearance of information, except that it reduces uncertainty at the expense of accuracy.

The observation that disinformation spreads faster probably stems from the fact that a simple message increases our trust in it.

Disinformation spreads for many reasons. To be believable, it must appear close to the "truth." When a new "fact" contradicts what we know, we tend to reject it, even if it is true. People dislike contradictions and try to resolve them. People also tend to ignore the structure and quality of an argument and focus instead on how believable its conclusion is.

Deepfakes move us beyond text-based persuasion. Images make messages far more memorable and compelling than abstract concepts alone, so their use in spreading disinformation is all the more concerning.

The structure of the environment is also important. People pay attention to the information that is available, focusing on what confirms their prior beliefs. The more often people encounter the same images, ideas, and other media, the more confident they become in their own knowledge and the stronger the illusion of agreement.

Social networks and contagion

We seek out reliable sources (experts and peers), but our memory stores information separately from its source. Over time, this source-monitoring failure leads us to retrieve information from memory without knowing where it came from.

Through product placement and algorithms that control media exposure, marketers and governments have used these techniques for generations. Most recently, social media influencers have been paid to spread disinformation.

The introduction of AI will only accelerate this process by enabling tighter control over our information environment through dark patterns of design.

Legal, social and moral issues

Creating, managing and distributing information gives people power. Truth loses its value when the information ecosystem is flooded with false information.

“Fake news” accusations have become a tactic used to discredit arguments. Deepfakes are a variation on this theme. Social media users have already falsely claimed that genuine videos of US President Joe Biden and former US President Donald Trump are fake.

Social movements such as Black Lives Matter and claims about the treatment of Uyghurs in China rely on the compelling nature of videos.

Once a belief is formed, it is difficult to refute it. The time required for verification (especially when left to the user) allows false information to spread. Private and public fact-checking websites can help. But building trust requires legitimacy.

Brazil provides a recent demonstration of such an attempt. After the government launched a verification website, critics accused it of pro-government bias. Government officials, however, have pointed out that the site is not meant to replace private initiatives.

There is no easy solution to revealing deepfakes. Rather than being passive consumers of media, we must actively challenge our own beliefs.

The only way to combat harmful forms of artificial intelligence is to cultivate human intelligence.

Jordan Richard Schoenherr is an Assistant Professor of Psychology at Concordia University.
