Opus 4.7, released by Anthropic last week, included stronger protections to prevent exploits. Unfortunately, these safeguards sometimes prevented legitimate use.

Opus 4.7 came shortly after Anthropic announced Mythos, a model considered too capable of discovering and exploiting vulnerabilities for public release. Although this may seem like an arbitrary assessment of the model’s risk, the company decided to use Opus 4.7 as a testbed for heightened guardrails.

“We are releasing Opus 4.7 with protections that automatically detect and block requests that indicate prohibited or high-risk cybersecurity uses,” the AI company said in a statement. “What we learn from the actual deployment of these safety measures will help us work toward our ultimate goal of broadly releasing the Mythos class of models.”

Anthropic could learn a lot by poring over the complaints in Claude Code’s GitHub repository. Objections to the company’s Acceptable Use Policy (AUP) classifier are proliferating, and customers are struggling to do legitimate work.

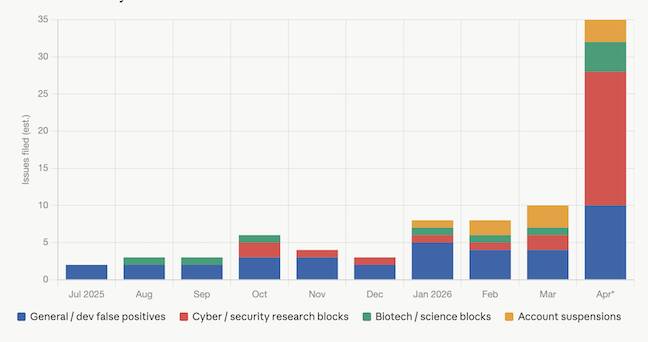

As security increases, so do false positives. Claude has become overly cautious and refuses to respond to innocuous requests. A graph of AUP refusal complaints filed against Claude Code tells the story:

Graph of Claude’s problem with model rejection

Claude Code users have been voicing concerns about unwarranted refusals in GitHub issues for several months, but the rate remained fairly stable until recently.

From July 2025 to September 2025, the repository saw approximately two or three complaints of this type per month. One such issue was #4373, “Memory authorization code from claude.ai causes API policy error.”

From October 2025 to November 2025, AUP-related complaints rose to approximately five to seven per month, with issues such as #8784, “Claude 4.5 Throws API Error: Claude Code is unavailable to respond to this request for a random, regular request.”

In December, there were only a few related complaints, likely due to the US holiday slowdown.

In January, the number of complaints rose to about eight. The developer who submitted #16129, “Repeated erroneous AUP violations in Claude Code,” said, “Conversations about technical software development should not result in AUP violations. Safety filters seem overly aggressive against harmless content.” The numbers were similar in February and March.

Then, in April, the dam burst.

In April, developers filed more than 30 reports alleging false positives involving security work, general development, and scientific tasks.

These include:

- Issue #48442, “Persistent AUP False Positives — 40+ per 4 sessions across unrelated projects (psychology books, web apps, infrastructure, bots)” deals with Claude’s refusal to handle various Russian prompts.

- Issue #49751, “Opus 4.7 flags standard computational structural biology as usage policy violation, regression from 4.6.” This describes flagged computational structural biology tasks.

- Issue #50916, “Usage Policy Issues,” by Golden G. Richard III, director of the LSU Cyber Center and Applied Cybersecurity Lab, explains how Claude refused to help with a cybersecurity lab exercise. “For $200 or more per month, I expect that basic editorial support will not be denied,” he said. “This is a lab related to my textbook, Cybersecurity in Context. I’m well aware of the potential malicious use cases for the model, but a model that refuses to be calibrated in a lab involving simple cryptographic exercises is ridiculous. If the model is so confusing that it can’t be understood by cybersecurity educators or researchers (which I am), then we can’t use them. What positive impact does this have on security?”

- Issue #48723, “Constant AUP Violation Error Occurs When Claude Code Reads Raw Data File (Example Included)” describes how Claude throws an AUP error when asked to read a PDF of a Hasbro Shrek toy ad. The developer who posted the issue then identified specific PDF content stream syntax in the file that caused Claude to refuse further work. It translates to “under the character or donkey.”

- Issue #49679, “Cyber use case exemption is granted and works in Claude chat, but using Claude Code API access continues to retrieve FP from secure systems. Approved cyber use case exemptions are not fully propagated to Claude Code’s API using Opus.” This refers to the special exemption Anthropic set up to let security researchers bypass the security guardrails. In this case, it isn’t working.

There are also many recent examples of questionable denials, including #50795, #51352, #51794, #52086, #50494, #49904, #46147, and #51248.

Part of the increase can probably be attributed to a growing user base. As Claude gains more customers, more people will report problems. However, there are clearly a large number of Claude users who are being shut out by overzealous AUP classifiers.

Given how the leaked Claude Code source uses regular expression patterns for sentiment analysis, the AUP classifier may be taking a similar shortcut by simply checking for banned words without considering context.
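To illustrate the kind of shortcut speculated about here, the following is a minimal Python sketch of a context-blind keyword filter. The term list and the function name `naive_flag` are hypothetical, invented purely for illustration, and nothing here reflects how Anthropic’s classifier actually works.

```python
import re

# Hypothetical banned-term list, invented for this example.
# It is NOT taken from any Anthropic system.
BANNED_TERMS = ["exploit", "payload", "kill", "attack"]

# One case-insensitive pattern that matches any banned term as a whole word.
BANNED_PATTERN = re.compile(
    r"\b(" + "|".join(map(re.escape, BANNED_TERMS)) + r")\b",
    re.IGNORECASE,
)


def naive_flag(text: str) -> list[str]:
    """Return the banned terms found in text, ignoring all context."""
    return sorted({m.group(1).lower() for m in BANNED_PATTERN.finditer(text)})


# Benign developer requests, written for this example.
benign_requests = [
    "Proofread this lab on simple cryptographic exercises.",
    "Write a script to kill the stale worker process.",
    "Parse the JSON payload returned by the weather API.",
]

for request in benign_requests:
    hits = naive_flag(request)
    verdict = "FLAGGED" if hits else "ok"
    print(f"{verdict:8} {hits} <- {request}")
```

In this sketch, the second and third requests are flagged on the strength of a single word each, even though both describe routine development work. That is the false-positive pattern a filter would produce if it matched words without weighing intent.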

Anthropic did not respond to a request for comment. ®