A team from ByteDance and Nanyang Technological University has developed a system that keeps AI-generated videos consistent across multiple scenes. This approach saves key frames from previously generated scenes and uses them as references for new scenes.

Current AI video models such as Sora, Kling, and Veo deliver impressive results for individual clips of a few seconds. But when you combine multiple scenes to create a coherent story, a fundamental problem becomes apparent. Characters' appearance changes from scene to scene, environments look inconsistent, and visual details change.

According to the researchers, previous solutions presented a dilemma. Processing all scenes together in one model increases computational costs rapidly. Generating each scene separately and combining them results in inconsistencies between sections.

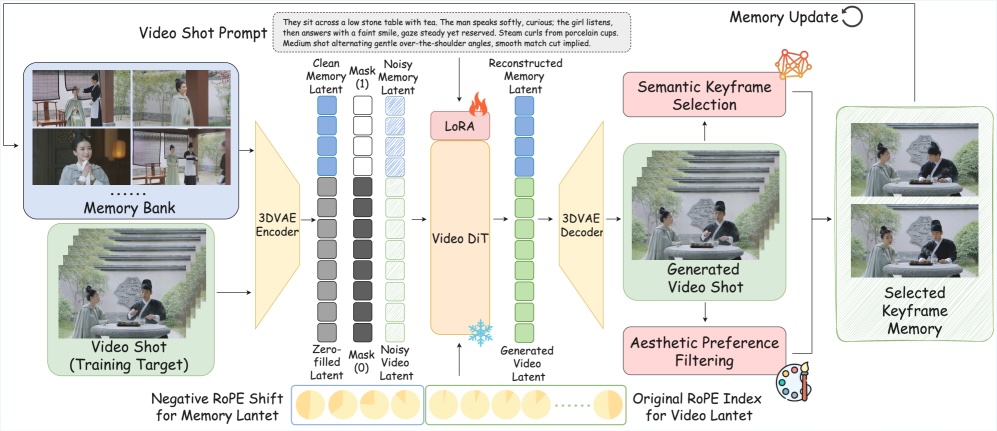

The StoryMem system takes a third approach. Save the key frames you selected during generation to a memory bank and refer to them for each new scene. This gives the model a record of what the characters and environment looked like early in the story.

Make your memory easier to manage with smart choices

Rather than storing every frame generated, the algorithm selects visually significant images by analyzing the content and identifying semantically different frames. The second filter checks the technical quality and filters out blurry or noisy images.

The memory bank uses a hybrid system. Early key images remain as long-term references, and more recent images are rotated through a sliding window. This keeps memory size down without losing important visual information from the beginning of the story.

When generating a new scene, the saved image is fed into the model along with the video being created. A special positional encoding called RoPE (Rotary Position Embedding) allows the model to interpret memory frames as preceding events. The researchers assigned a negative time index to the stored images, causing the model to treat the images as events in the past.

Adaptive keyframe extraction with semantic deduplication

The practical advantage of this approach is that it reduces training effort. Competing methods require training on long, continuous video sequences, which are rarely available in high quality. StoryMem works with the LoRA adaptation (low-rank adaptation) of Alibaba's existing open source model Wan2.2-I2V.

The team trained on 400,000 short clips, each 5 seconds long. Because we grouped clips by visual similarity, the model learned to generate consistent sequels from related images. This enhancement only adds about 700 million parameters to the 14 billion parameter model.

Benchmarks show significant consistency improvement

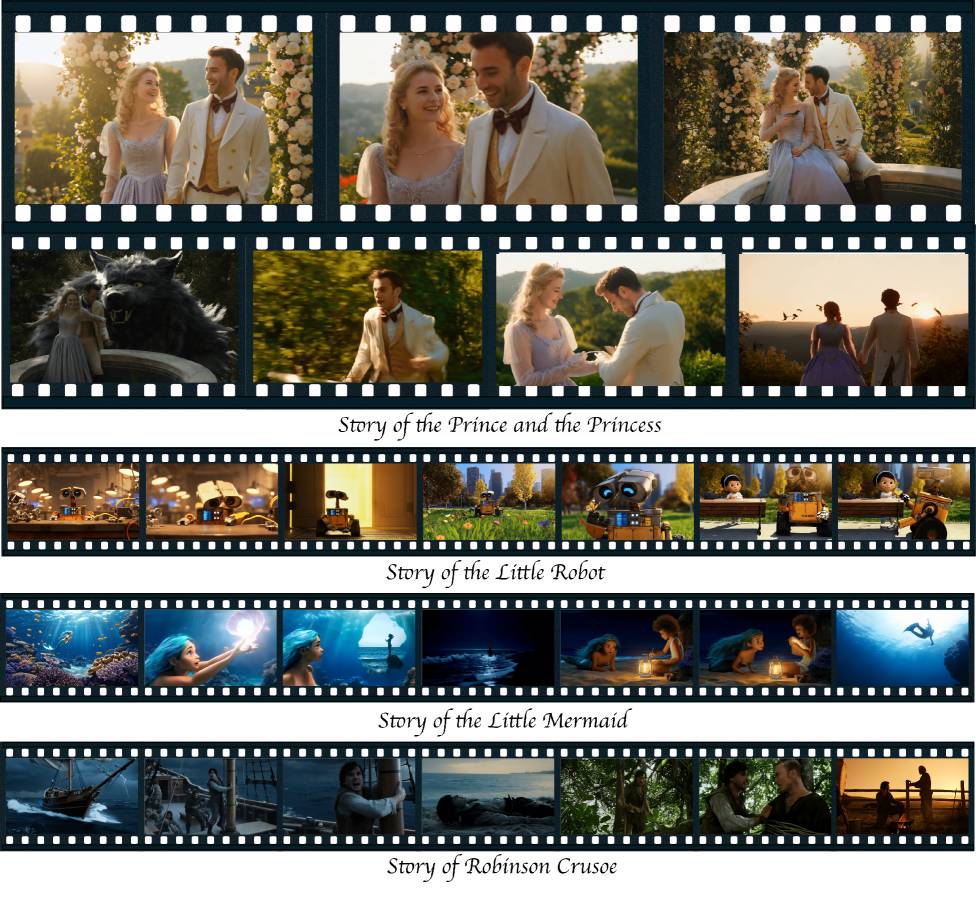

The researchers built their own benchmark called ST-Bench for evaluation. Includes 30 stories and 300 detailed scene descriptions, covering styles from realistic scenarios to fairy tales.

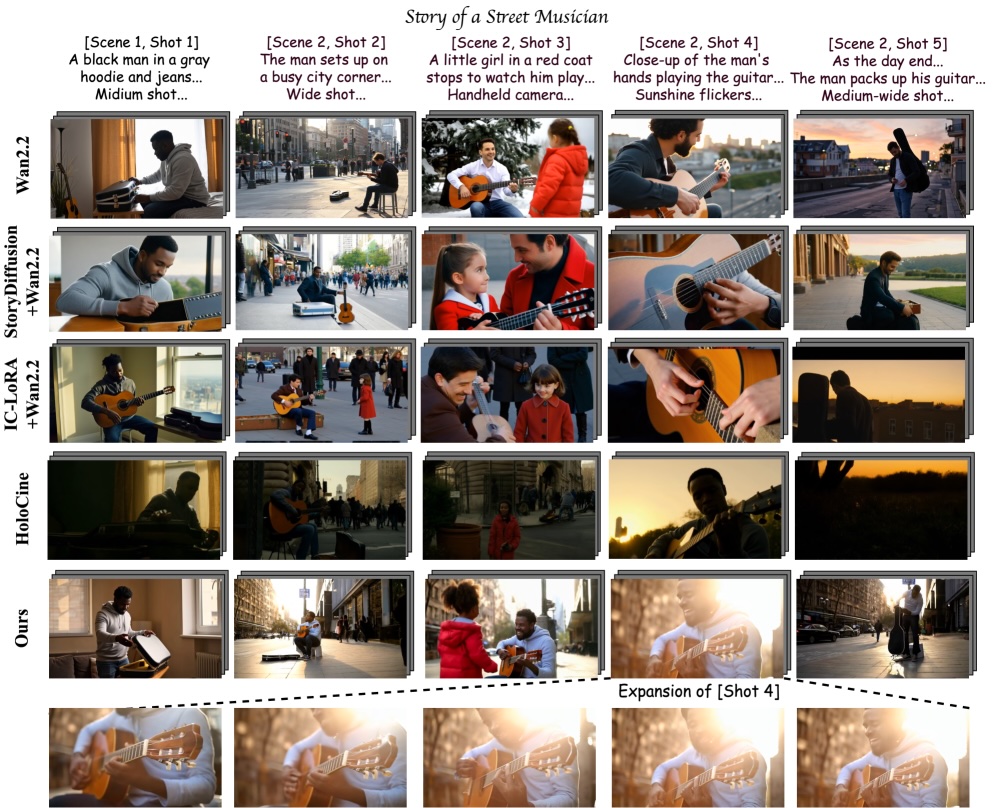

Research shows that StoryMem significantly improves scene-to-scene consistency. The model performs 28.7 percent better than the unmodified base model and 9.4 percent better than HoloCine, which the researchers describe as the previous state-of-the-art. StoryMem also achieved the highest aesthetic score among all consistency optimization methods tested.

User research supports quantitative results. Participants preferred StoryMem's output over all baselines in most evaluation categories.

| method | Appearance quality↑ | Follow prompt↑ | Cross shot stability↑ |

|---|---|---|---|

| global | Single shot | ||

| Wan2.2-T2V | 0.6452 | 0.2174 | 0.2452 |

| Story Diffusion + Wan2.2-I2V | 0.6085 | 0.2288 | 0.2349 |

| IC-LoRA + Wan2.2-I2V | 0.5704 | 0.2131 | 0.2181 |

| holocine | 0.5653 | 0.2199 | 0.2125 |

| ours | 0.6133 | 0.2289 | 0.2313 |

This framework supports two additional use cases. Users can feed their own reference images (for example, photos of people or places) as a starting point for the memory bank. The system then generates a story that features these elements throughout. Scene transitions can also be done more smoothly. Instead of a hard cut, the system can use the last frame of one scene as the first frame of the next scene.

Complex scenes still pose challenges

The researchers note several limitations. The system has a hard time with scenes that include many characters. Because the memory bank stores images without assigning them to specific characters, the model may incorrectly apply visual properties when a new character appears.

As a workaround, researchers recommend writing the letters explicitly in each prompt. Frame connections do not contain velocity information, so transitions between scenes with widely varying movement speeds can also appear unnatural.

A project page with additional examples is already live. ST-Bench will be released as a benchmark for further research. Researchers published the weight of Hugging Face.

AI News Without the Hype – Curated by Humans

as The Decoder Subscriberyou can read without ads. Weekly AI Newsletterexclusive “AI Radar” Frontier Report 6 times a yearaccess to comments, and Complete archive.

Subscribe now