Controlling wastewater and monitoring water quality in metropolitan areas are challenging tasks. Combining machine learning methods allows the development of a model that reliably forecasts urban water quality. Accordingly, several intricate wastewater engineering problems have been tackled using the Regression Tree model, SVM, and PSO. These problems include air entrainment in drop maintenance holes, pool depth, and lateral outflow in low-crested side weirs. Readings of pH, chemical concentration, and temperature from monitoring wells are used to predict water quality. The machine learning model’s parameters can be optimized with particle swarm optimization to obtain highly accurate water quality forecasts. We expect these machine learning methods to prove their worth when applied to previously investigated experimental challenges.

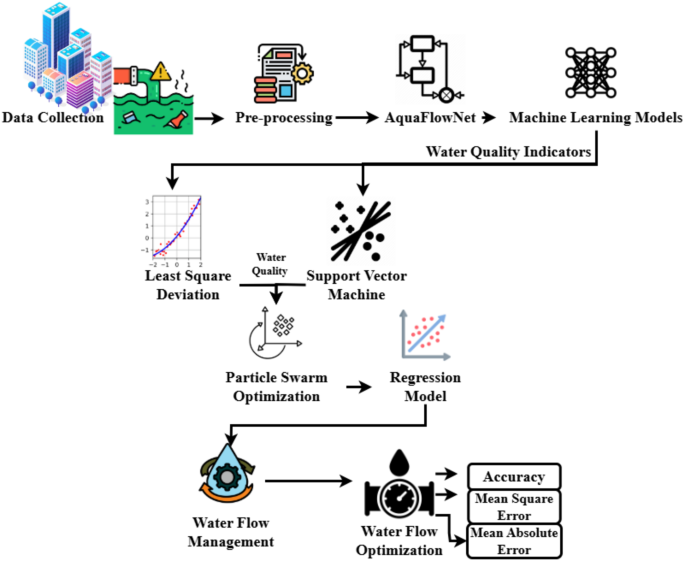

The wastewater management system begins with data collection, as depicted in Fig. 1. Flow meters and IoT devices deployed at monitoring sites gather real-time data on critical parameters such as temperature, chemical concentrations, pH levels, and wastewater flow rates. This data is sourced from treatment facilities, pipelines, and groundwater systems to ensure comprehensive monitoring.

In the preprocessing stage, the collected data undergoes noise removal, missing value imputation, and standardization to improve its quality. Techniques like outlier detection and data normalization ensure consistency, which is crucial for reliable machine learning predictions.

Once preprocessed, the data is analyzed using the Least Squares Support Vector Machine (LS-SVM) to assess groundwater quality. The LS-SVM model performs classification and regression to evaluate key metrics such as dissolved oxygen (DO), pH levels, and pollution indicators. However, due to the complex and nonlinear nature of wastewater data, Particle Swarm Optimization (PSO) is employed to fine-tune the LS-SVM model’s parameters, including kernel coefficients and feature weights. This optimization improves both accuracy and adaptability to dynamic environmental conditions.

For wastewater flow prediction, Regression Tree Models are utilized. These models incorporate historical and real-time data to provide accurate estimations of wastewater flow rates under different scenarios. This enables proactive management of wastewater systems, facilitating early detection of anomalies and improved operational control.

The model’s performance is evaluated using metrics such as Mean Square Error (MSE), Mean Absolute Error (MAE), and overall accuracy. These measures validate the effectiveness of the proposed approach compared to baseline methods. The integration of this optimized model into a decision support system allows wastewater treatment facility operators to leverage real-time monitoring and data-driven insights for efficient resource allocation and compliance with environmental standards.

Additionally, wastewater output prediction begins with building population estimation, a key factor in demand forecasting. A two-stage approach is used for greater accuracy:

1. Estimating population density based on building area, which is effective for most urban settings but challenging in densely packed slum areas.
2. Averaging population density across sub-districts to refine predictions in areas with irregular housing patterns.

By integrating machine learning models, optimization techniques, and real-time monitoring, this comprehensive system ensures accurate forecasting, adaptive parameter tuning, and practical insights for sustainable wastewater management.

Wastewater output prediction begins with determining building population. A two-stage method improves accuracy: first, population density is estimated from building areas, though this poses challenges in slums due to small, densely packed structures. To address this, the second approach averages population density across a sub-district. The final estimate combines both methods. Pre-processing, essential for wastewater quality prediction, includes data cleansing and optimization.

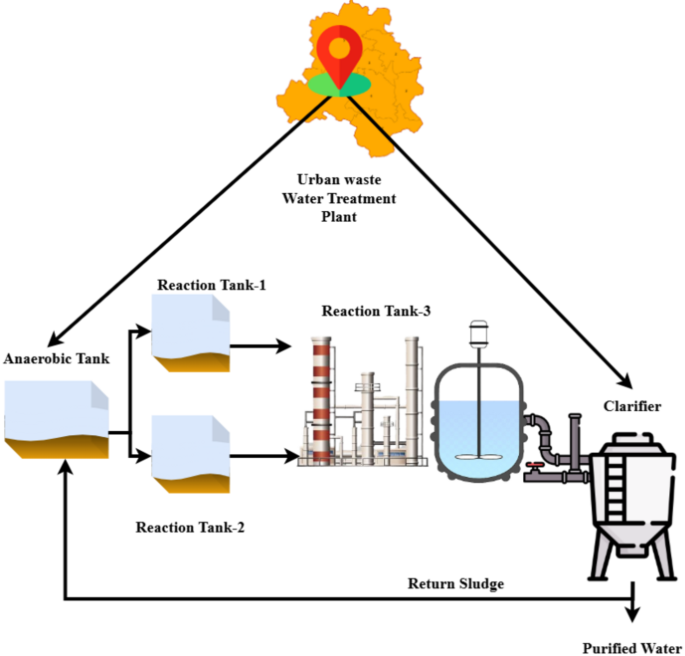

Wastewater Treatment Process.

This investigation focused on a nutrient removal data set from an urban municipal treatment plant. The wastewater treatment plant (WWTP) is designed for a daily average capacity of 22,000 tons. It comprises a grit chamber, pretreatment tanks, anaerobic/aerobic reactors, an activated sludge system, a clarifier, and a sedimentation tank (Fig. 2). After the biological treatment system, the final effluent was treated using flocculation, sedimentation, sand filtration, disinfection, and a secondary clarifier.

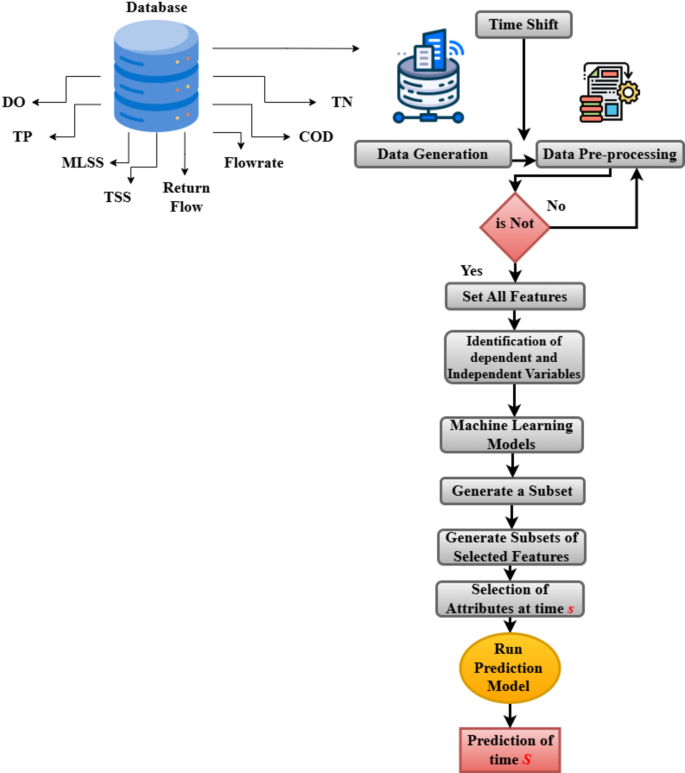

Figure 3 illustrates the feature selection. The training data set includes hourly readings of the following parameters: dissolved oxygen (DO), influent flowrate (Qin), effluent flowrate (Qeff), return flowrate (RAS), waste flowrate (WAS), mixed liquor suspended solids (MLSS), total phosphorus (TP), and total suspended solids (TSS). Influent and effluent quality statistics were obtained from the WWTP. Values of 14.08 mg/L of COD, 2793.07 mg/L of MLSS, 3.66 mg/L of TSS, 7.13 mg/L of TN, and 0.55 mg/L of TP were measured in the influent. The operation data were acquired in real time at a collection frequency of 1 h. In addition, standardizing the dataset and removing superfluous data are crucial steps in producing an adequate model. The primary objective of feature selection is to find the best sensors and operational parameters in a dataset. In practice, feature reduction is challenging and often requires extensive testing. Many methods exist in the machine learning community to determine which attributes are useful predictors of future outcomes and which are not.

Recursive feature elimination (RFE) aims to shorten training time and speed up learning by removing nonpredictive features from a model without increasing its error. Consequently, combining a regression model with a decision-tree-based classifier is essential for extracting relevant attributes and improving the detection system. The research lends credibility to the idea that regression-based feature selection may improve classifier performance and identify important characteristics of influent and effluent water quality indicators. The data are first extracted from the SCADA database and then preprocessed. Finally, water parameters are eliminated using RFE and a decision tree model. Feature selection occurs at time step \(\:S\), and the procedure continues until completion by predicting the effluent at time \(\:S\).

Equation (1a) was used to estimate the population in this study. In this context, \(\:Building\:Production\:\left(Pb\right)\) represents the building occupancy, building area represents the building size, \(\:\sum\:building\:area\) is the sum of all building areas in a region, population is the region’s population, and \(\:\sum\:building\) is the region’s total number of buildings. This approach was applied to the sub-district regions of Bandung City. The term “population estimate” refers to studies that monitor demographic characteristics such as birth and death rates, migration trends, and population growth and composition. The research also included two methods for estimating building wastewater production. The first method estimated residential and commercial wastewater output. This computation uses the rural factor (RF) variable to account for the fact that not all inhabitants work from home and produce wastewater at home. Determining the proportion of the population that works in each sub-district showed that a lower RF value indicates a larger workforce.

$$\:Building\:Production\:\left(Pb\right)=\frac{\left(\frac{building\:area}{\sum\:building\:area}\times\:population\right)+\left(\frac{population}{\sum\:building}\right)}{2}$$

(1a)

$$\:Residential\:Building = Residents \times \:\frac{{93L}}{{resident}}/c \times \:RA$$

(1b)

$$\:Commercial\:Building = Building\:Area \times \:\frac{{2.4L}}{{m^{2} }}/d$$

(1c)

The residential and commercial wastewater generation formulae are given in Eq. (1b) and (1c), where \(\:Residential\:Building\) and \(\:Commercial\:Building\) are the daily water consumption of residential and commercial buildings, respectively. Hospitals and places of worship were assigned fixed values of 1600 and 2400 L/d, respectively. The final wastewater output estimate for each building was the average of methods one and two.
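The arithmetic in Eq. (1a) to (1c) can be illustrated with a minimal sketch. The function names, the parameter name `ra` (standing for the factor written as RA in Eq. (1b)), and the three-building sub-district are hypothetical and serve only to show how the terms combine.

```python
def building_population(area, total_area, population, n_buildings):
    """Eq. (1a): mean of the area-weighted and the per-building estimate."""
    by_area = area / total_area * population   # share of population by floor area
    by_count = population / n_buildings        # sub-district average per building
    return (by_area + by_count) / 2.0


def residential_wastewater(residents, ra, litres_per_resident=93.0):
    """Eq. (1b): litres per day for a residential building."""
    return residents * litres_per_resident * ra


def commercial_wastewater(area_m2, litres_per_m2=2.4):
    """Eq. (1c): litres per day for a commercial building."""
    return area_m2 * litres_per_m2


# Hypothetical sub-district: three buildings and 120 inhabitants.
areas = [50.0, 100.0, 150.0]
occupancy = [building_population(a, sum(areas), 120, len(areas)) for a in areas]
```

Because the two estimates in Eq. (1a) each distribute the full population over the buildings, their average also sums to the sub-district population.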

Based on two possible outcomes—one with a river and the other without—this analysis calculated the possible access to an existing centralized wastewater system. Because rivers are sometimes the only means of disposing of untreated wastewater in places lacking sanitation, access to river parameters is an important consideration. In this study, we look at the possibility of future construction connections to the sewage system. The river’s proximity is important for extending the wastewater treatment infrastructure’s sewage system. Combining the index created without considering each parameter’s weight with the percentage of sub-district sanitation access yielded the final output.

$$\:Sanitation\:access={\sum\:}_{j=0}^{m}K{d}_{j}+T{J}_{j}+C{O}_{j}+C{Z}_{j}+C{R}_{j}$$

(2)

The potential sanitation access \(\:Sanitation\:access\) is given by Eq. (2). Here, \(\:m\) is the number of buildings processed in the research area, \(\:j\) is the building index, \(\:K{d}_{j}\) is the land cover parameter, \(\:T{J}_{j}\) is the slope parameter, \(\:C{O}_{j}\) is the road distance, \(\:C{Z}_{j}\) is the water source distance, and \(\:C{R}_{j}\) is the river distance. The next step in establishing new sanitary networks was ranking buildings by importance. This priority index combines wastewater production estimates with sanitation network accessibility. Buildings with high wastewater production and no sanitation access were prioritized. To treat wastewater effectively while reducing negative impacts on the ecosystem, decision-makers should consider river conditions. Based on a comprehensive assessment of these elements, it is therefore recommended that a WWTP be constructed at a considerable distance from the river. The best location for a WWTP is the one with the greatest combined value of the normalized and integrated parameters.

$$\:Suitability\:Index=\sum\:_{j=0}^{n}K{d}_{j}+T{J}_{j}+C{O}_{j}+C{Z}_{j}+C{R}_{j}$$

(3)

As stated in Eq. (3), the suitability index of WWTP candidates \(\:Suitability\:Index\) is calculated from the following parameters: the land cover \(\:K{d}_{j}\), the distance from the road \(\:C{O}_{j}\), the slope \(\:T{J}_{j}\), the water source \(\:C{Z}_{j}\), and the river \(\:C{R}_{j}\), where \(\:j\) indexes the value of each parameter. In the case of a centralized WWTP, there are m GOF objectives, and these goals are used as a criterion for each WWTP.
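The selection rule described above (choose the candidate with the greatest combined value of the normalized parameters) can be sketched as follows. This is an illustration under two assumptions that the text does not specify: each parameter is min-max normalized to [0, 1], and raw scores are oriented so that larger values are more favourable. The dictionary keys mirror the symbols of Eq. (3); the candidate values are hypothetical.

```python
def min_max(values):
    """Scale a list of numbers to [0, 1]; constant columns map to 0."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return [0.0 for _ in values]
    return [(v - lo) / (hi - lo) for v in values]


def suitability_index(candidates):
    """Sum of the normalized Kd, TJ, CO, CZ and CR scores per candidate."""
    keys = ["Kd", "TJ", "CO", "CZ", "CR"]
    normalized = {k: min_max([c[k] for c in candidates]) for k in keys}
    return [sum(normalized[k][i] for k in keys) for i in range(len(candidates))]


# Hypothetical raw scores for three candidate sites.
candidates = [
    {"Kd": 1, "TJ": 2, "CO": 3, "CZ": 4, "CR": 5.0},
    {"Kd": 2, "TJ": 2, "CO": 6, "CZ": 4, "CR": 10.0},
    {"Kd": 3, "TJ": 2, "CO": 9, "CZ": 4, "CR": 7.5},
]
scores = suitability_index(candidates)
best_site = max(range(len(scores)), key=lambda i: scores[i])
```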

Random Forest (RF) is a popular machine learning method for predicting a response variable \(\:Y\). RF builds its prediction model from a set of decision trees, each grown from independent, identically distributed random vectors. In the RF computation, \(\:{\widehat{G}}_{i}\left(y\right)\) is the prediction of the \(\:i\)-th tree for the predictors \(\:y\), \(\:x\) is a candidate class, \(\:I\) is the indicator function, and \(\:argma{x}_{x}\) selects the class that receives the most votes. Equation (4) is the commonly used rule for determining which class has the greatest predictive probability.

$$\:E\left(y\right)=argma{x}_{x}{\sum\:}_{i=1}^{i}I\left({\widehat{G}}_{i}\left(y\right)=x\right)$$

(4)
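The voting rule in Eq. (4) can be illustrated with a short sketch. It assumes each tree is available as a plain Python callable that returns a class label; the function name `forest_predict` and the toy threshold rules are hypothetical and not part of the study.

```python
from collections import Counter


def forest_predict(trees, y):
    """Eq. (4): return the class that most trees predict for the predictors y."""
    votes = Counter(tree(y) for tree in trees)
    return votes.most_common(1)[0][0]


# Three toy "trees" with hypothetical thresholds on pH and temperature.
trees = [
    lambda y: "poor" if y["pH"] < 6.5 else "good",
    lambda y: "poor" if y["temp"] > 30 else "good",
    lambda y: "poor" if y["pH"] > 8.5 else "good",
]
```

For a sample with pH 6.0 and temperature 35, two of the three toy trees vote "poor", so the forest returns "poor".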

This work uses gradient tree boosting (GBT) to enhance error-function-based classification and regression. GBT combines decision trees and other weak predictive models into a more accurate prediction model. At each stage, GBT reduces the residual left by the previous prediction model using a gradient-based step. As shown in Eq. (5), the GBT prediction model \(\:{E}_{n}\) is obtained from the previous model \(\:{E}_{n-1}\) by adding the output of the regression tree \(\:{\phi\:}_{n}\), fitted to the negative gradient of the loss function \(\:{h}_{n}\) and scaled by the \(\:learning\:rate\), with the goal of reducing residuals and overfitting.

$$\:{E}_{n}\left(y\right)={E}_{n-1}\left(y-1\right)+learning\:rate*{\phi\:}_{n}{h}_{n}\left(\vartheta\:\right)$$

(5)
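The additive update in Eq. (5) can be sketched for the squared-error loss, where the negative gradient is simply the residual. This is a simplified illustration, not the configuration used in the study: it assumes a single numeric feature with at least two distinct values and uses one-split regression stumps as the weak learners. All names are hypothetical.

```python
def fit_stump(xs, residuals):
    """Least-squares regression stump (one threshold) on a 1-D feature."""
    best = None
    for t in sorted(set(xs))[:-1]:  # every threshold that leaves both sides non-empty
        left = [r for x, r in zip(xs, residuals) if x <= t]
        right = [r for x, r in zip(xs, residuals) if x > t]
        lm, rm = sum(left) / len(left), sum(right) / len(right)
        sse = sum((r - lm) ** 2 for r in left) + sum((r - rm) ** 2 for r in right)
        if best is None or sse < best[0]:
            best = (sse, t, lm, rm)
    _, t, lm, rm = best
    return lambda x: lm if x <= t else rm


def gradient_boost(xs, ys, n_rounds=50, learning_rate=0.1):
    """Each round adds learning_rate * stump fitted to the current residuals."""
    base = sum(ys) / len(ys)  # initial model: the mean of the targets
    stumps = []
    preds = [base] * len(xs)
    for _ in range(n_rounds):
        residuals = [y - p for y, p in zip(ys, preds)]  # negative gradient of 0.5*(y-p)^2
        stump = fit_stump(xs, residuals)
        stumps.append(stump)
        preds = [p + learning_rate * stump(x) for p, x in zip(preds, xs)]
    return lambda x: base + learning_rate * sum(s(x) for s in stumps)


# Toy step-shaped data (hypothetical).
model = gradient_boost([1, 2, 3, 4, 5, 6], [1, 1, 1, 5, 5, 5])
```

A small learning rate shrinks each correction, so the residual decays gradually over the rounds instead of being fitted in a single step.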

In addition, the likelihood of a flood disaster is estimated by combining the two machine learning algorithms to mitigate overfitting. The combination is given in Eq. (6), where \(\:{J}_{ML}\) is the index of the chance of a flood disaster obtained by integrating RF and GBT.

$$\:{J}_{ML}={\sum\:}_{j=0}^{m}{E}_{n}\left(y\right)+\widehat{E}\left(y\right)$$

(6)

A correlation test was also run to assess the model. It involved building a correlation matrix of the study variables and analyzing those that affect the flood susceptibility index. After determining the sample size (\(\:m\)), we used Eq. (7), where \(\:x\) and \(\:y\) are the independent and dependent variables, respectively. The correlation coefficient (\(\:o\)) shows how strongly the two variables \(\:x\) and \(\:y\) are related to one another.

$$\:Correlation\:Coefficient=\frac{\sum\:yx-\frac{\sum\:x\sum\:y}{m}}{\sqrt{\left(\sum\:{y}^{2}-\frac{{\left(\sum\:y\right)}^{2}}{m}\right)\left(\sum\:{x}^{2}-\frac{{\left(\sum\:x\right)}^{2}}{m}\right)}}$$

(7)
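Eq. (7) translates almost term by term into code. The sketch below is illustrative only; the function name is hypothetical, and it assumes both variables have non-zero variance, since the denominator would otherwise be zero.

```python
import math


def correlation_coefficient(xs, ys):
    """Eq. (7): correlation of paired samples x and y with sample size m."""
    m = len(xs)
    sum_x, sum_y = sum(xs), sum(ys)
    sum_xy = sum(x * y for x, y in zip(xs, ys))
    sum_xx = sum(x * x for x in xs)
    sum_yy = sum(y * y for y in ys)
    numerator = sum_xy - sum_x * sum_y / m
    denominator = math.sqrt((sum_yy - sum_y ** 2 / m) * (sum_xx - sum_x ** 2 / m))
    return numerator / denominator
```

A correlation matrix of the study variables can then be obtained by applying this function to every pair of columns.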

Data are collected from a variety of water sources and compiled in many forms. The normalization procedure can only be applied to homogeneous data. Normalization starts with the min-max technique, which maps the values into the interval [−2, 2].

Here is the definition of standardization shown in Eq. (9):

$$\:H{l}_{j}=\frac{O-\delta\:}{\alpha\:}$$

(9)

The mean value \(\:\delta\:\) is given in Eq. (10):

$$\:\delta\:=\frac{1}{m}{\sum\:}_{j=1}^{m}{O}_{j}$$

(10)

The standard deviation \(\:\alpha\:\) is defined in Eq. (11):

$$\:\alpha\:=\sqrt{\frac{1}{m}{\sum\:}_{j=1}^{m}{\left({O}_{j}-\delta\:\right)}^{2}}$$

(11)
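The standardization in Eq. (9) to (11) can be checked with a small sketch. It assumes the population form of the standard deviation (division by the number of observations) and non-constant data; the function name and the sample values are hypothetical.

```python
import math


def standardize(values):
    """Subtract the mean and divide by the standard deviation of the sample."""
    m = len(values)
    delta = sum(values) / m                                        # mean
    alpha = math.sqrt(sum((o - delta) ** 2 for o in values) / m)   # standard deviation
    return [(o - delta) / alpha for o in values]


# Hypothetical readings with mean 5 and standard deviation 2.
z_scores = standardize([2, 4, 4, 4, 5, 5, 7, 9])
```

After standardization the values have zero mean and unit standard deviation, which puts inputs such as pH, concentration, and temperature on a comparable scale.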

By training a model using input variables like pH levels, chemical concentrations, and temperature, SVM with PSO can assess wastewater quality parameters.

The SVM approach for predicting wastewater quality and management was evaluated using these factors. When determining the loss function, the SVM employs the linear least-squares approach. The input dataset is \(\:\left\{{q}_{j}{,p}_{j}\right\}\) for \(\:j\) ranging from \(\:1\:to\:n\), where \(\:{q}_{j}\) is the input and \(\:{p}_{j}\) is the desired output. The linear regression function is given in Eq. (12):

$$\:P\left({q}_{j}\right)={\epsilon\:}^{S}\omega\:\left({q}_{j}\right)+a$$

(12)

The following is the expression for the quadratic optimization of linear regression in Eq. (13):

$$\:minI\left(\epsilon\:,\phi\:\right)=\frac{1}{2}{\epsilon\:}^{S}\epsilon\:+\frac{g}{2}{\sum\:}_{j=1}^{l}{\phi\:}^{2}$$

(13)

Here, \(\:\epsilon\:\) denotes the weight vector, \(\:g\) the regularization parameter, \(\:\phi\:\) the error variable, \(\:a\) the bias value, and \(\:\omega\:\left({q}_{j}\right)\) the function that maps the input to a high-dimensional space. In Eq. (12), \(\:P\left({q}_{j}\right)\) is the predicted output for the input \(\:{q}_{j}\). Eq. (13) is the cost function to be minimized: the aim is to reach the minimal value of \(\:I(\epsilon\:,\phi\:)\) by adjusting the weight vector \(\:\epsilon\:\) and the error variable \(\:\phi\:\). The first term, \(\:\frac{1}{2}{\epsilon\:}^{S}\epsilon\:\), is a regularization component that helps prevent overfitting, while the second term, \(\:\frac{g}{2}{\sum\:}_{j=1}^{l}{\phi\:}^{2}\), is the sum of squared errors. The constraint of Eq. (13) requires the desired output \(\:{p}_{j}\) to equal the weighted mapping \(\:{\epsilon\:}^{S}\omega\:\left({q}_{j}\right)\) plus the bias value \(\:a\) and the error component \(\:\phi\:\). The Lagrange method is used to find the values of \(\:\epsilon\:\), \(\:\phi\:\), and \(\:a\) that minimize the cost function \(\:I(\epsilon\:,\phi\:)\) while satisfying this constraint, which gives the Lagrangian in Eq. (14).

$$\:La\left(\epsilon\:,\phi\:,\:a,\:\sigma\:\right)=\frac{1}{2}{\epsilon\:}^{S}\epsilon\:+\frac{g}{2}{\sum\:}_{j=1}^{l}{\phi\:}_{j}^{2}-{\sum\:}_{j=1}^{l}{\sigma\:}_{j}\left({\epsilon\:}^{S}\omega\:\left({q}_{j}\right)+a+{\phi\:}_{j}-{p}_{j}\right)$$

(14)

Here, \(\:{\sigma\:}_{i}\) denotes the Lagrange multiplier and \(\:kernel\left(q,{q}_{i}\right)\) the kernel function. The SVM may now be written as Eq. (15):

$$\:P\left(q\right)=\sum\:_{i=1}^{l}{\sigma\:}_{i}kernel\left(q,{q}_{i}\right)+a$$

(15)
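For the least-squares formulation, setting the derivatives of the Lagrangian to zero reduces training to a single linear system in the bias and the multipliers, after which Eq. (15) gives the prediction. The sketch below illustrates that standard LS-SVM result under stated assumptions: an RBF kernel (the text does not name the kernel), hypothetical parameter values, and a small hand-written Gaussian elimination so the example needs no external library.

```python
import math


def rbf_kernel(u, v, gamma=1.0):
    """Assumed kernel: exp(-gamma * squared Euclidean distance)."""
    return math.exp(-gamma * sum((a - b) ** 2 for a, b in zip(u, v)))


def solve_linear(A, b):
    """Gaussian elimination with partial pivoting for a small dense system."""
    n = len(b)
    M = [row[:] + [rhs] for row, rhs in zip(A, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(col + 1, n):
            factor = M[r][col] / M[col][col]
            for c in range(col, n + 1):
                M[r][c] -= factor * M[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        x[r] = (M[r][n] - sum(M[r][c] * x[c] for c in range(r + 1, n))) / M[r][r]
    return x


def lssvm_fit(Q, p, g=10.0, gamma=1.0):
    """Solve [[0, 1^T], [1, K + I/g]] [a; sigma] = [0; p] and return the predictor."""
    l = len(Q)
    A = [[0.0] + [1.0] * l]
    for i in range(l):
        row = [1.0] + [rbf_kernel(Q[i], Q[j], gamma) for j in range(l)]
        row[i + 1] += 1.0 / g  # regularization enters on the diagonal
        A.append(row)
    solution = solve_linear(A, [0.0] + list(p))
    a, sigma = solution[0], solution[1:]
    # Eq. (15): kernel expansion over the training inputs plus the bias.
    return lambda q: sum(s * rbf_kernel(q, qi, gamma) for s, qi in zip(sigma, Q)) + a


# Toy one-dimensional data (hypothetical).
Q_train = [[0.0], [1.0], [2.0], [3.0]]
p_train = [0.0, 1.0, 2.0, 3.0]
predict = lssvm_fit(Q_train, p_train, g=1000.0, gamma=0.5)
```

The two values `g` and `gamma` are exactly the kind of parameters a search procedure can tune, since they are not determined by the linear system itself.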

Particle Swarm Optimization (PSO) can enhance a wastewater model. PSO is a population-based optimization strategy that solves an optimization problem by simulating a swarm of particles. To find the global best solution, the method updates the position and velocity of every particle in the swarm according to the best solutions found by the particle itself and by its peers. Wastewater prediction can be improved by using PSO to optimize model parameters, such as the penalty parameter of the SVM model in Eq. (15). The following steps describe how PSO enhances a water quality prediction model:

Particle swarm optimization (PSO) based waste water quality analysis

Step 1: Initialize the particle swarm.

Create a collection of particles, each representing a candidate solution to the optimization problem. Each particle has a position and a velocity; the position is represented as in Eq. (16):

$$\:Q{x}_{posj}^{j}=\left[q{x}_{poi1}^{1},\:q{x}_{poi2}^{2},\dots\:,\:q{x}_{poim}^{j}\right]$$

(16)

Each particle’s dimension is denoted by \(\:posj\), where \(\:posi\:=\:1,\:2,…,\:n\). With each iteration, the particle’s position and velocity \(\:Velocit{y}_{j}^{s}\) are altered.

Step 2: Assess the fitness.

Find the fitness of each particle, which is a measure of how accurate its optimization solution is. Given two randomly chosen particles’ locations inside the swarm, PSO’s fitness function may be easily applied. The fitness function may be assessed using (17):

$$\:Fitness\left(q,p\right)=1-\left(\frac{Sum\:square\:of\:residual\left(q\right)}{total\:sum\:of\:square\left(q\right)}\right)$$

(17)

Here, p and q are the swarm locations of two randomly chosen particles representing the expected and observed values.

Step 3: Keep the top solutions up to date.

The best solution found by each particle is stored as \(\:Qbes{t}_{posi}^{j}\). Additionally, \(\:Hbes{t}_{posi}^{j}\) denotes the best solution found by the swarm as a whole, as given in Eq. 18(a-b):

$$\:Qbes{t}_{posi}^{j}=\left[{Q}_{posj1}^{1},{Q}_{posj2}^{2},\dots\:,{Q}_{posjm}^{j}\right]$$

(18a)

$$\:Hbes{t}^{j}=min\left\{qbes{t}_{posi1}^{j},qbes{t}_{posi2}^{j},\dots\:,\:qbes{t}_{posin}^{j}\right\}$$

(18b)

Step 4: Revise the speeds and locations.

Update each particle’s location and speed according to its own best \(\:Qbes{t}_{posi}^{j}\) and the global best \(\:Hbes{t}_{posi}^{j}\). The new location is computed by adding the updated velocity to the present location. Each iteration’s location and velocity are updated using Eq. (19a) and (19b).

$$\:Velocit{y}_{j}^{s+1}=zVelocit{y}_{j}^{s}+coefficien{t}_{1}.r{d}_{1}\left(Hbes{t}_{s}-current\:positio{n}_{i}^{s}\right)+coefficien{t}_{2}.r{d}_{2}\left(Qbes{t}_{s}-current\:positio{n}_{i}^{s}\right)$$

(19a)

$$\:current\:positio{n}_{i}^{s+1}=current\:positio{n}_{i}^{s}+Velocit{y}_{j}^{s+1}$$

(19b)

These updates apply for all values of \(\:i\) from 1 to the swarm population number \(\:m\). Here, \(\:Velocit{y}_{j}^{s}\) is the velocity vector at iteration \(\:s\), \(\:current\:positio{n}_{i}^{s}\) is the present location of the \(\:i\)-th particle, \(\:Qbes{t}_{s}\) is the best location found so far by the \(\:i\)-th particle, and \(\:Hbes{t}_{s}\) is the best location found so far by the whole swarm. The coefficients \(\:coefficien{t}_{1}\) and \(\:coefficien{t}_{2}\) are the cognitive and social parameters, \(\:r{d}_{1}\) and \(\:r{d}_{2}\) are random numbers between 0 and 1, and \(\:z\) is the inertia coefficient that regulates the balance between local and global search. The position is then determined using Eq. (20):

$$\:Q{y}_{j}=\left\{\begin{array}{ll}1,&if\:r{d}_{1}<sigmoid\left(Velocit{y}_{j}^{s+1}\right)\\0,&otherwise\end{array}\right.$$

(20)

In this case, \(\:sigmoid\left(Velocit{y}_{j}^{s+1}\right)\) is the sigmoid function, which maps the velocity to a value between zero and one, and \(\:r{d}_{1}\) is a random number drawn from the interval between zero and one.

Step 5: Repeat until a predetermined stopping criterion is met or a specified number of iterations is reached.

Step 6: The optimum solution is determined as the best solution identified by the swarm after optimization.

Step 7: Apply the adjusted parameters to make the wastewater quality forecast model more accurate.
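The seven steps can be condensed into a minimal sketch. It implements the continuous update of Eq. (19a) and (19b) together with the fitness of Eq. (17), and it omits the binary mapping of Eq. (20). The parameter values, the clamping of positions to the search bounds, and the sphere test objective are assumptions made for illustration, not settings reported in the study.

```python
import random


def fitness(predicted, observed):
    """Eq. (17): 1 - residual sum of squares / total sum of squares."""
    mean_obs = sum(observed) / len(observed)
    ss_res = sum((o - p) ** 2 for o, p in zip(observed, predicted))
    ss_tot = sum((o - mean_obs) ** 2 for o in observed)
    return 1.0 - ss_res / ss_tot


def pso(objective, dim, bounds, n_particles=30, n_iter=200,
        z=0.7, c1=1.5, c2=1.5, seed=0):
    """Minimize objective over a box; returns (best position, best value)."""
    rng = random.Random(seed)
    lo, hi = bounds
    # Step 1: random positions, zero velocities.
    pos = [[rng.uniform(lo, hi) for _ in range(dim)] for _ in range(n_particles)]
    vel = [[0.0] * dim for _ in range(n_particles)]
    # Steps 2 and 3: evaluate and record personal (Qbest) and global (Hbest) bests.
    qbest = [p[:] for p in pos]
    qbest_val = [objective(p) for p in pos]
    h = min(range(n_particles), key=lambda i: qbest_val[i])
    hbest, hbest_val = qbest[h][:], qbest_val[h]
    for _ in range(n_iter):  # Step 5: fixed iteration budget
        for i in range(n_particles):
            for d in range(dim):
                rd1, rd2 = rng.random(), rng.random()
                # Step 4, Eq. (19a): inertia + pull to Hbest + pull to Qbest.
                vel[i][d] = (z * vel[i][d]
                             + c1 * rd1 * (hbest[d] - pos[i][d])
                             + c2 * rd2 * (qbest[i][d] - pos[i][d]))
                # Eq. (19b), clamped to the bounds (an added assumption).
                pos[i][d] = min(hi, max(lo, pos[i][d] + vel[i][d]))
            val = objective(pos[i])
            if val < qbest_val[i]:
                qbest[i], qbest_val[i] = pos[i][:], val
                if val < hbest_val:
                    hbest, hbest_val = pos[i][:], val
    return hbest, hbest_val  # Step 6: best solution found by the swarm


# Toy objective: the sphere function, whose minimum is 0 at the origin.
best_position, best_value = pso(lambda x: sum(v * v for v in x), dim=2, bounds=(-5.0, 5.0))
```

To tune a prediction model, the objective could return `1 - fitness(predictions, observations)` on held-out data for the model parameters encoded in the particle position, so that minimizing the objective maximizes Eq. (17).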

To provide forecasts, the Regression Tree model employs a decision tree. The root node contains all the data, the internal nodes represent tests on the input variables, and the leaves hold the values of the target variable. A regression tree is constructed by iteratively subdividing the input data domain, and predictions in each sub-domain are provided by a multivariate linear regression model. Before splitting a node in two, the iterative technique considers every possible binary split for each field. The growth process continues as the tree branches out, dividing each node into smaller parts. At each stage, the technique generates child nodes with lower “impurity” than their parent by choosing the split that minimizes the total squared deviations from the mean. The procedure ends when the impurity can no longer be reduced sufficiently or when other stopping requirements are satisfied. Common stopping criteria include a minimum impurity reduction for further subdivision, a maximum tree depth, or a minimum number of units in any single node.

The impurity of a node \(\:s\) is measured by the least-squared deviation (LSD) \(\:O\left(s\right)\), which is the within-node variance expressed in Eq. (21). Here, \(\:m\left(s\right)\) is the number of sample units in node \(\:s\), \(\:{x}_{j}\) is the value of the target variable for the \(\:j\)th unit, and \(\:{x}_{n}\left(s\right)\) is the mean value of the target variable in node \(\:s\):

$$\:O\left(s\right)=\frac{1}{m\left(s\right)}\sum\:_{j\in\:s}{\left({x}_{j}-{x}_{n}\left(s\right)\right)}^{2}$$

(21)

The following LSD function is used to determine the split criterion at each node \(\:s\) in Eq. (22):

$$\:\rho\:\left({T}_{q},s\right)=O\left(s\right)-{Q}_{k}O\left({s}_{k}\right)-{Q}_{o}O\left({s}_{o}\right)$$

(22)

The split \(\:{T}_{q}\) generates the left child node \(\:{s}_{k}\) and the right child node \(\:{s}_{o}\), and \(\:{Q}_{k}\) and \(\:{Q}_{o}\) are the proportions of units allocated to them, respectively. At last, we choose the split that maximizes \(\:\rho\:\left({T}_{q},s\right)\), which yields the most homogeneous child nodes.
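The split criterion of Eq. (21) and (22) can be sketched for a single numeric input. The function names and the toy data are hypothetical; a full regression tree would apply `best_split` to every field and recurse on the two child nodes until a stopping criterion is met.

```python
def lsd(values):
    """Eq. (21): within-node variance of the target values."""
    mean = sum(values) / len(values)
    return sum((v - mean) ** 2 for v in values) / len(values)


def best_split(xs, ys):
    """Return (threshold, gain) that maximizes Eq. (22) for one input variable."""
    parent = lsd(ys)
    best_threshold, best_gain = None, 0.0
    for t in sorted(set(xs))[:-1]:  # thresholds that leave both children non-empty
        left = [y for x, y in zip(xs, ys) if x <= t]
        right = [y for x, y in zip(xs, ys) if x > t]
        q_k, q_o = len(left) / len(ys), len(right) / len(ys)
        gain = parent - q_k * lsd(left) - q_o * lsd(right)
        if gain > best_gain:
            best_threshold, best_gain = t, gain
    return best_threshold, best_gain


# Toy flow data (hypothetical): the target jumps between x = 2 and x = 3.
threshold, gain = best_split([1, 2, 3, 4], [10, 10, 20, 20])
```

In this toy case the parent variance is 25 and both children are perfectly homogeneous after splitting at 2, so the gain equals the full parent impurity.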

Wastewater flow rate analysis.

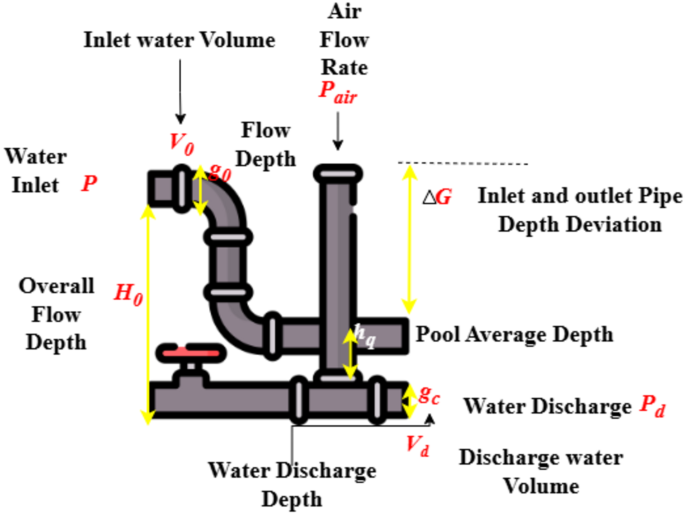

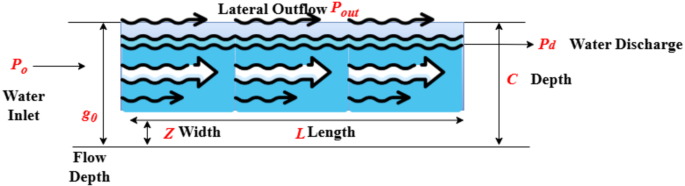

Figure 4 illustrates the wastewater flow rate analysis. The relevant flow quantities are the Froude number \(\:{E}_{0}\) and the flow depth \(\:{g}_{0}\). The apparatus consisted of a plexiglass frame into which plates with alternating filling ratios were inserted. The flow depths were recorded using piezometers and point gauges with an accuracy of ± 0.5 mm, and the water discharges were measured using an electromagnetic flowmeter with an accuracy of 0.1 L/s. A series of piezometers attached to the base of the maintenance hole was used to quantify the pool’s average depth over time, abbreviated as \(\:{g}_{q}\). To perform air demand experiments, an anemometric probe was installed within a 50 mm diameter pipe that was hermetically sealed on top of the maintenance hole. The conduit was designed to provide the air flow rate \(\:{P}_{air}\). The side weir experiment facility consists of a 300 mm diameter round plexiglass pipe with side weir measurements L and z. The recirculation system includes a supply tank, a downstream flow tank, and a lateral outflow tank to regulate the flow through the pipe.

Wastewater Flow Optimization.

Figure 5 illustrates the wastewater flow optimization. An electromagnetic flowmeter was used to measure the inflow rate. The collecting tanks were equipped with V-notch weirs to monitor the downstream flow rate and the lateral outflow \(\:{P}_{out}\). Piezoelectric instruments were installed along the pipeline to measure the flow depths, and a Pitot tube was used to determine the velocities at selected points. Regional and local factors such as hydrology, population density, and geology should be considered when deciding where to place wastewater treatment plants to provide a reliable water supply.

At the same time, considering urban forest factors, the sewage system was built to avoid cutting down trees in urban forests. The ideal sewage system network was determined using the optimal wastewater flow formula, as shown in Eq. (23)

$$\:WFMOP=\sum\:_{j=0}^{l}\sum\:_{j=0}^{i}b*{\varDelta\:}_{Residential\:Area}+a*{\varDelta\:}_{Roadside\:network}+c*{\varDelta\:}_{Railway\:network}+d*{\varDelta\:}_{slope\:Area}+e*{\varDelta\:}_{forest\:Area}$$

(23)

For the residential area parameter, \(\:b\) and \(\:{\varDelta\:}_{Residential\:Area}\) are the constant and weight values, respectively; likewise, \(\:a\) and \(\:{\varDelta\:}_{Roadside\:network}\) are the constant and weight values of the road network parameter. The variables \(\:d\) and \(\:{\varDelta\:}_{slope\:Area}\) are the constant and weight values of the slope parameter, while \(\:c\) and \(\:{\varDelta\:}_{Railway\:network}\) are those of the railway network. The variables \(\:e\) and \(\:{\varDelta\:}_{forest\:Area}\) reflect the constant and weight values of the urban forest characteristics. Also, \(\:l\) is the total number of cells required to connect the two locations, and \(\:i\) indexes the eight cells surrounding a central cell. After that, the features of the WWTP are examined with regard to each neighbourhood’s projected total wastewater volume and flood susceptibility. The overall quantity was determined as the sum of the wastewater produced by each home.
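The weighted sum in Eq. (23) can be illustrated with a simplified sketch that evaluates only the outer sum along a given path of cells; the search over the eight neighbouring cells is left out. The constants, the dictionary keys, and the cell values are hypothetical placeholders, not values from the study.

```python
def cell_cost(cell, constants):
    """Weighted sum of the five terrain terms of Eq. (23) for one raster cell."""
    return sum(constants[name] * cell[name] for name in constants)


def path_cost(path, constants):
    """Total cost of a candidate sewer route given as a list of cells."""
    return sum(cell_cost(cell, constants) for cell in path)


# Hypothetical constants (b, a, c, d, e in Eq. (23)) and two cells of a route.
constants = {"residential": 2.0, "road": 1.0, "railway": 3.0, "slope": 4.0, "forest": 5.0}
route = [
    {"residential": 1.0, "road": 1.0, "railway": 1.0, "slope": 1.0, "forest": 1.0},
    {"residential": 0.0, "road": 1.0, "railway": 0.0, "slope": 0.5, "forest": 0.0},
]
total = path_cost(route, constants)
```

Assigning a large constant to the forest term makes routes through urban forest cells expensive, which matches the stated aim of avoiding tree removal.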