Benj Edwards / Stable Diffusion

AI company Anthropic announced Thursday that it has given its ChatGPT-like Claude AI language model the ability to analyze the equivalent of an entire book in less than a minute. The new ability comes from expanding Claude’s context window to 100,000 tokens, or approximately 75,000 words.

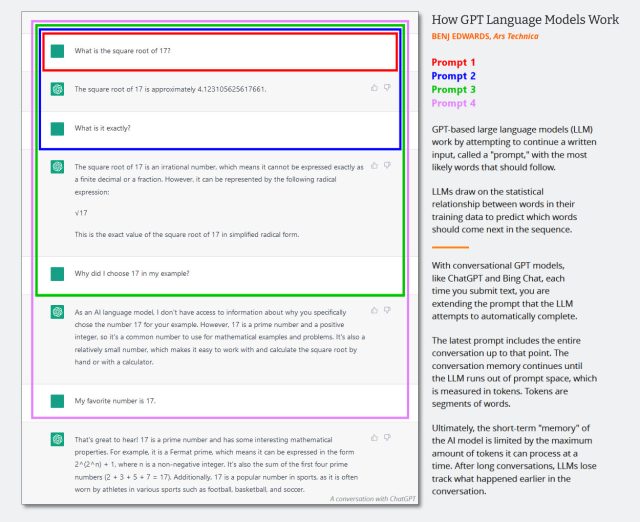

Similar to OpenAI’s GPT-4, Claude is a large language model (LLM) that works by predicting the next token in a sequence given a certain input. Tokens are fragments of words used to simplify AI data processing, and the “context window” resembles short-term memory: it is the amount of human-supplied input data an LLM can process at once.

According to Anthropic, a larger context window means an LLM can consider large works like books or engage in very long interactive conversations that can last “hours, even days.”

According to Anthropic, the average person can read 100,000 tokens of text in about five hours or more, but digesting, memorizing, and analyzing that information can take considerably longer. Claude can now do this in less than a minute. As an example, the company loaded the full text of The Great Gatsby into Claude-Instant (72K tokens) and changed one line to say that Mr. Carraway is “a software engineer working on machine learning tools at Anthropic.” When asked to point out what was different, the model gave the correct answer within 22 seconds.

Picking out changes in a text may not sound impressive (Microsoft Word can do that, but only if it has two documents to compare), but consider that after being fed the text of The Great Gatsby, Claude can interactively answer questions about it and analyze its meaning. 100,000 tokens is a big upgrade for LLMs. By comparison, OpenAI’s GPT-4 LLM offers a context window of 4,096 tokens (approximately 3,000 words) when used as part of ChatGPT, and 8,192 or 32,768 tokens via the GPT-4 API (currently available only through a waiting list).
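For a rough sense of these numbers, here is a minimal Python sketch. It assumes a ratio of about 0.75 words per token, which is what the 100,000-token and 75,000-word figures imply; real ratios depend on the tokenizer and the text. The names `approx_words` and `fits` are illustrative and not part of any vendor API.

```python
# Assumption: about 0.75 words per token, implied by "100,000 tokens,
# or approximately 75,000 words". Real ratios vary by tokenizer and text.
WORDS_PER_TOKEN = 0.75

# Context window sizes quoted in the article, in tokens.
CONTEXT_WINDOWS = {
    "GPT-4 in ChatGPT": 4_096,
    "GPT-4 API (8K)": 8_192,
    "GPT-4 API (32K)": 32_768,
    "Claude (100K)": 100_000,
}

# Size of The Great Gatsby as cited in the article ("72K tokens").
GATSBY_TOKENS = 72_000


def approx_words(tokens: int) -> int:
    """Estimate how many words a given number of tokens represents."""
    return round(tokens * WORDS_PER_TOKEN)


def fits(document_tokens: int, window_tokens: int) -> bool:
    """Check whether a document alone fits in a context window.

    This ignores the extra room needed for the question and the reply.
    """
    return document_tokens <= window_tokens


if __name__ == "__main__":
    for name, window in CONTEXT_WINDOWS.items():
        print(
            f"{name}: about {approx_words(window):,} words, "
            f"Gatsby fits: {fits(GATSBY_TOKENS, window)}"
        )
```

Under these assumptions, the novel fits only in the 100,000-token window, which is why the Gatsby demonstration would not work with the smaller windows listed above.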

To understand how a larger context window leads to longer conversations with chatbots such as ChatGPT and Claude, I made a diagram for a previous article showing how the prompt (held within the context window) grows to include the entire text of the conversation. This means that a conversation can last longer before the chatbot loses its “memory” of the conversation.

Benj Edwards / Ars Technica
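The idea that a growing conversation must fit inside a fixed window can be sketched in a few lines of Python. This is an illustration under stated assumptions, not a description of how ChatGPT or Claude actually manage context: the token estimate is a crude word count divided by 0.75, and dropping the oldest messages first is just one simple strategy. Both `estimate_tokens` and `build_prompt` are hypothetical helpers.

```python
from collections import deque

# Assumption: about 0.75 words per token, the ratio implied by the article.
WORDS_PER_TOKEN = 0.75


def estimate_tokens(text: str) -> int:
    """Crudely estimate a token count from whitespace-separated words."""
    words = len(text.split())
    return max(1, round(words / WORDS_PER_TOKEN))


def build_prompt(history: list[str], window_tokens: int) -> list[str]:
    """Return the most recent messages that fit within the window.

    Walks backward from the newest message and stops at the first one
    that would push the estimated total past the window size, so older
    messages are the ones that fall out of the chatbot's "memory".
    """
    kept: deque[str] = deque()
    used = 0
    for message in reversed(history):
        cost = estimate_tokens(message)
        if used + cost > window_tokens:
            break
        kept.appendleft(message)
        used += cost
    return list(kept)


if __name__ == "__main__":
    history = [f"message number {i}" for i in range(10)]
    # With a tiny window, only the latest messages survive.
    print(build_prompt(history, window_tokens=12))
    # With a large window, the whole conversation is kept.
    print(len(build_prompt(history, window_tokens=100_000)))
```

With a larger `window_tokens` value, more of the early conversation stays in the prompt, which matches the point that bigger context windows allow longer conversations before anything is forgotten.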

According to Anthropic, Claude’s enhanced abilities extend beyond processing books. The enlarged context window could help businesses extract key information from multiple documents through a conversational interaction. The company suggests that this approach may perform better than vector search-based methods when dealing with complex queries.

A demo from Anthropic of using Claude as a business analyst.

While not as big a name in the AI space as Microsoft or Google, Anthropic has emerged as a notable rival to OpenAI in terms of competitive LLM offerings and API access. Former OpenAI Research Vice President Dario Amodei and his sister Daniela founded Anthropic in 2021 after disagreements over OpenAI’s commercial direction. Notably, Anthropic received a $300 million investment from Google in late 2022, with Google acquiring a 10% stake in the company.

According to Anthropic, the 100,000-token context window is available now to users of the Claude API, access to which is currently limited by a waiting list.