An analysis of more than 41 million research papers from the past 40 years reveals that researchers who use artificial intelligence (AI) tools in their research publish more papers and receive more citations. However, the use of these tools can limit academic research to areas where the data are most abundant.

AI tools can help scientists identify patterns in large datasets, automate certain processes for high-throughput experiments, and write scientific papers. A team led by James Evans at the University of Chicago, along with researchers in China, has been working to better understand how the introduction of these tools will impact both individual scientists and the broader scientific community.

What do we mean when we say AI?

Artificial intelligence (AI) is an umbrella term that is often mistakenly used to encompass a variety of connected but simpler processes.

Artificial intelligence (AI) is the ability of a machine or computer program to perform tasks normally only performed by humans, such as reasoning, responding to feedback, and making decisions.

Generative AI is a newer variant of AI that analyzes and detects patterns in training datasets and generates original text, images, and videos in response to user requests. ChatGPT, Microsoft Copilot, Google Gemini, and more recently X’s Grok are all examples of chatbots that use generative AI.

A neural network is an interconnected array of artificial neurons, loosely modeled on a biological brain, that identifies, analyzes, and learns from statistical patterns in data.

Machine learning is a subset of AI that allows machines to learn from datasets and make predictions about new data without being explicitly programmed to do so. Machine learning models generally perform better the more data they receive.

Deep learning is a more advanced type of machine learning that uses neural networks with many layers to analyze complex data from very large datasets. Applications of deep learning include speech recognition, image generation, and translation.

A large language model (LLM) is a type of deep learning model that is trained on large amounts of data to understand and generate language. LLMs learn patterns in text by predicting the next word in a sequence, and these models can now write prose, analyze text from the Internet, and interact with users.

To do this, the team used a large language model to analyze more than 41 million research papers published over the past 40 years. The model identified papers that used AI tools and divided such research into three AI “eras”: traditional machine learning (1980-2014), deep learning (2015-2022), and generative AI (2023-present).

The analysis covered research across the natural sciences, including biology, medicine, chemistry, physics, materials science, and geology. Evans explained that the team excluded fields such as mathematics, computer science, and social science because papers in those fields often describe developing AI tools, rather than using them to conduct scientific research. The team then had human experts evaluate a sample of papers classified by the model; the experts agreed with the model’s choice in nearly 90% of cases.

The analysis reveals that both the proportion of papers published using AI tools and the number of researchers using them have increased rapidly over the past 40 years. This trend was consistent across all three AI eras and is likely a result of these tools becoming more easily accessible.

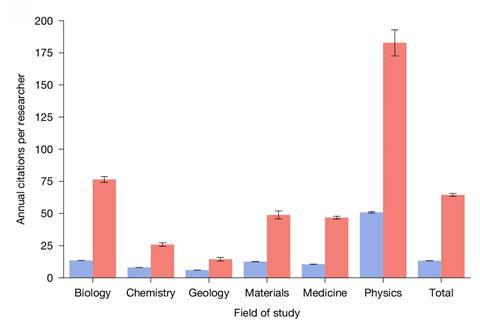

Additionally, scientists who use AI in their research publish, on average, about three times as many papers and receive just under five times as many citations as scientists who don’t employ AI.

However, Milad Abolhasani, who studies autonomous flow chemistry at North Carolina State University in the US, suggests that the model may miss certain papers if the use of AI is not clear, leading to an underestimation of research that incorporates AI.

Evans and his team also extracted the career paths of more than 2 million scientists from the dataset, classifying them as either junior scientists, who have not yet led a research project, or established scientists. Although teams that adopted AI tools were smaller and had fewer junior researchers, early career members of these groups were 13% more likely to stay in academia. Junior researchers who adopted AI also tended to become established researchers about a year and a half earlier than their peers.

However, the team was unable to fully explain why adopting AI tools would increase scientific impact. Molly Crockett, a neuroscientist at Princeton University, cautions that the researchers’ definition of “impact” is based on citation counts and on whether scientists reach the level of principal investigator. “Apart from the quality of the research, these metrics may equally reflect hype or economic incentives to use new technologies, and the data cannot disentangle these explanations,” Crockett added.

Broader scientific community

While the introduction of AI tools can help individual scientists, further analysis suggested that it can also narrow research toward niche problems within already established fields. It is easier to conduct research using AI in fields where there is a wealth of available data than in fields that explore fundamental questions, such as the origin of natural phenomena. “If automated tracking is not possible, entire fields of research may be at risk of extinction,” Crockett says.

The study also found that AI-assisted research could reduce engagement among scientists by 22%, creating “lonely crowds” in popular scientific research fields.

“We’ll bring these [artificial] intelligences into our research, but it really only applies to one part of the spectrum of knowledge intelligence,” says Evans. He believes this creates an imbalance, with researchers focusing on existing research questions rather than exploring new problems. “The problem is that there is a conflict between individual and collective incentives,” he explains.

But Abolhasani says the study’s results don’t mean scientists have to use AI to advance their careers. He added that the study “should not encourage scientists to replace critical thinking with large-scale language models.”