
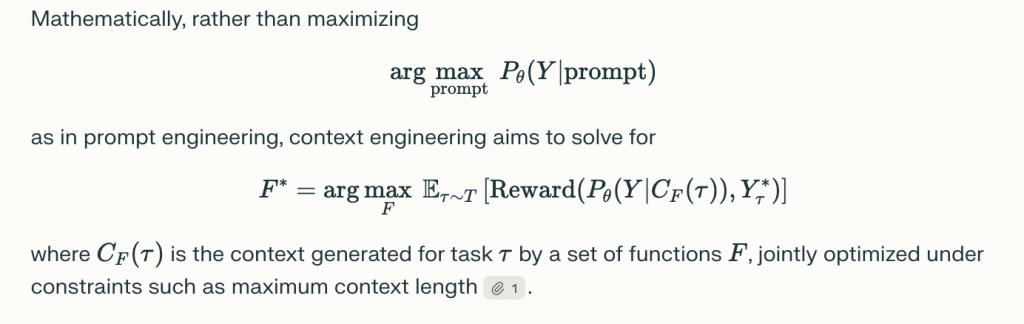

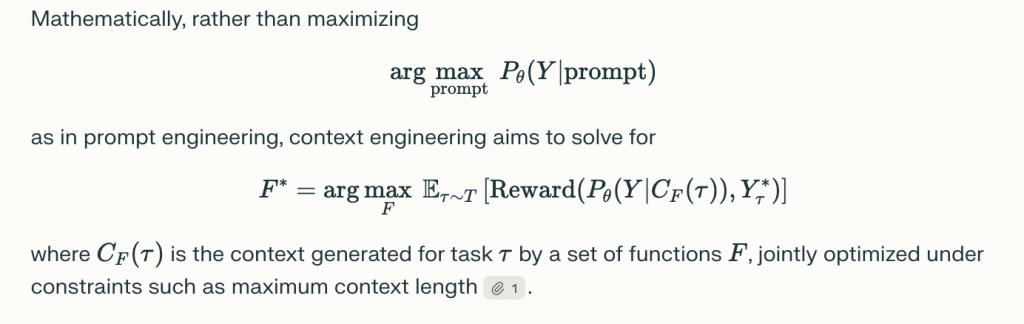

The paper "A Survey of Context Engineering for Large Language Models" establishes context engineering as a formal discipline that goes far beyond prompt engineering. It provides a unified, systematic framework for designing, optimizing, and managing the information that guides large language models (LLMs). Here is an overview of the main contributions and frameworks:

What is context engineering?

Context engineering is defined as the science and engineering of organizing, assembling, and optimizing every form of context delivered to an LLM in order to maximize comprehension, reasoning, adaptability, and overall performance in real-world applications. Rather than viewing context as a static string (the premise of prompt engineering), context engineering treats it as a dynamic, structured assembly of components.
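To make the "structured assembly" idea concrete, here is a minimal sketch. It is not taken from the paper: the `ContextComponent` class, the priority convention, and the whitespace-based `count_tokens` helper are all assumptions made for illustration. It selects the most important components that fit a token budget and emits them in their original order.

```python
from dataclasses import dataclass


@dataclass
class ContextComponent:
    """One piece of context (hypothetical structure, not from the paper)."""
    name: str
    text: str
    priority: int  # assumed convention: lower number = more important


def count_tokens(text: str) -> int:
    # Crude stand-in for a real tokenizer: counts whitespace-separated words.
    return len(text.split())


def assemble_context(components: list[ContextComponent], budget: int) -> str:
    """Greedily keep the highest-priority components that fit the budget."""
    kept = set()
    used = 0
    by_priority = sorted(range(len(components)),
                         key=lambda i: components[i].priority)
    for i in by_priority:
        cost = count_tokens(components[i].text)
        if used + cost <= budget:
            kept.add(i)
            used += cost
    # Emit survivors in their original order so the layout stays stable.
    return "\n\n".join(
        f"[{c.name}]\n{c.text}"
        for i, c in enumerate(components)
        if i in kept
    )
```

A real system would replace `count_tokens` with the model's own tokenizer and would likely use a smarter selection policy than greedy-by-priority.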

Taxonomy of Context Engineering

The paper breaks context engineering down into foundational components and system implementations:

1. Foundational components

a. Context retrieval and generation

- Encompasses prompt engineering, in-context learning (zero-shot/few-shot, chain-of-thought, tree-of-thought, graph-of-thought), external knowledge retrieval (e.g., retrieval-augmented generation, knowledge graphs), and dynamic assembly of context elements.

- Highlights techniques such as the CLEAR framework, dynamic template assembly, and modular retrieval architectures.

b. Context Processing

- Addresses long-sequence processing (with architectures such as Mamba, LongNet, and FlashAttention), context self-refinement (iterative feedback, self-evaluation), and integration of multimodal and structured information (vision, audio, graphs, tables).

- Strategies include sparse attention, memory compression, and in-context learning meta-optimization.
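The iterative-feedback idea behind context self-refinement can be sketched as a small loop. This is an illustrative assumption, not the paper's algorithm: `refine`, `toy_critique`, and `toy_revise` are hypothetical names, and the two toy functions stand in for what would be model calls in practice.

```python
from typing import Callable, Optional


def refine(
    draft: str,
    critique: Callable[[str], Optional[str]],
    revise: Callable[[str, str], str],
    max_rounds: int = 3,
) -> str:
    """Critique and revise a draft until the critic has no feedback."""
    for _ in range(max_rounds):
        feedback = critique(draft)
        if feedback is None:  # the critic is satisfied, stop early
            break
        draft = revise(draft, feedback)
    return draft


# Toy stand-ins for model calls (hypothetical, for illustration only).
def toy_critique(text: str) -> Optional[str]:
    return None if text.endswith(".") else "add a final period"


def toy_revise(text: str, feedback: str) -> str:
    return text + "."
```

The `max_rounds` cap matters in practice: without it, a critic that is never satisfied would loop indefinitely and consume the compute budget.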

c. Context Management

- Covers memory hierarchies and storage architectures (short-term context windows, long-term memory, external databases), memory paging, context compression (autoencoders, recurrent compression), and scalable management in multi-turn or multi-agent settings.
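As a rough illustration of a two-tier arrangement, the sketch below keeps the most recent turns verbatim and folds evicted turns into a running summary. The `TieredContext` class and the truncating `truncate_summary` compressor are assumptions made for this example; a real system would use a learned or model-based compressor instead.

```python
from collections import deque
from typing import Callable


def truncate_summary(summary: str, turn: str, max_words: int = 8) -> str:
    # Placeholder "compressor": keeps only the first few words of the turn.
    clipped = " ".join(turn.split()[:max_words])
    return f"{summary} | {clipped}" if summary else clipped


class TieredContext:
    """Short-term window of recent turns plus a compressed summary tier."""

    def __init__(self, window_size: int,
                 compress: Callable[[str, str], str] = truncate_summary):
        self.window = deque()
        self.window_size = window_size
        self.summary = ""
        self.compress = compress

    def add_turn(self, turn: str) -> None:
        self.window.append(turn)
        # Page the oldest turns out of the window into the summary tier.
        while len(self.window) > self.window_size:
            evicted = self.window.popleft()
            self.summary = self.compress(self.summary, evicted)

    def render(self) -> str:
        parts = []
        if self.summary:
            parts.append(f"Summary of earlier turns: {self.summary}")
        parts.extend(self.window)
        return "\n".join(parts)
```

The design choice here is that eviction and compression happen at write time, so `render` stays cheap no matter how long the conversation runs.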

2. System implementations

a. Retrieval-Augmented Generation (RAG)

- Modular, agentic, and graph-enhanced RAG architectures integrate external knowledge and support dynamic, sometimes multi-agent retrieval pipelines.

- Enables both real-time knowledge updates and complex reasoning over structured databases and graphs.
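A toy version of the retrieve-then-generate pattern is sketched below. It assumes a bag-of-words "embedding" and cosine similarity purely for illustration; the function names are hypothetical, and production RAG systems typically use dense embeddings and a vector index instead.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    # Bag-of-words stand-in for a real embedding model.
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a)
    norm = (math.sqrt(sum(v * v for v in a.values()))
            * math.sqrt(sum(v * v for v in b.values())))
    return dot / norm if norm else 0.0


def retrieve(query: str, docs: list[str], k: int = 2) -> list[str]:
    """Return the k documents most similar to the query."""
    q = embed(query)
    return sorted(docs, key=lambda d: cosine(q, embed(d)), reverse=True)[:k]


def build_prompt(query: str, docs: list[str], k: int = 2) -> str:
    """Place retrieved passages ahead of the question in the prompt."""
    passages = "\n".join(f"- {p}" for p in retrieve(query, docs, k))
    return f"Use the passages below to answer.\n{passages}\n\nQuestion: {query}"
```

The string returned by `build_prompt` would then be sent to the model; that generation step is omitted because it depends on the provider.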

b. Memory Systems

- Implement persistent, hierarchical storage, enabling longitudinal learning and knowledge recall for agents (e.g., MemGPT, MemoryBank, external vector databases).

- Key for extended multi-turn dialogs, personalized assistants, and simulation agents.
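Persistence across sessions can be illustrated with a very small fact store. This is a sketch under stated assumptions, not how MemGPT or MemoryBank work internally: the `LongTermMemory` class is hypothetical, it persists to a JSON file, and it recalls by simple word overlap where a real system would use a vector database.

```python
import json
from pathlib import Path


class LongTermMemory:
    """Tiny persistent fact store (hypothetical stand-in for a vector DB)."""

    def __init__(self, path: str):
        self.path = Path(path)
        # Reload facts written by an earlier session, if any.
        self.facts = (json.loads(self.path.read_text())
                      if self.path.exists() else [])

    def remember(self, fact: str) -> None:
        if fact not in self.facts:
            self.facts.append(fact)
            self.path.write_text(json.dumps(self.facts))

    def recall(self, query: str, k: int = 3) -> list[str]:
        """Return up to k facts sharing at least one word with the query."""
        words = set(query.lower().split())
        scored = [(len(words & set(f.lower().split())), f) for f in self.facts]
        scored = [s for s in scored if s[0] > 0]
        scored.sort(key=lambda s: s[0], reverse=True)
        return [fact for _, fact in scored[:k]]
```

Facts returned by `recall` would be inserted into the prompt at the start of a new session, which is what lets an assistant appear to remember a user over time.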

c. Tool-Integrated Reasoning

- LLMs use external tools (APIs, search engines, code execution) via function calling or environment interaction, combining language reasoning with the ability to act on the world.

- Opens up new domains (mathematics, programming, web interaction, scientific research).
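The function-calling pattern can be sketched as a dispatcher that parses a structured call emitted by the model and runs the matching tool. The JSON shape (`name`, `arguments`), the `TOOLS` registry, and the two toy tools are assumptions for illustration; actual providers define their own call formats.

```python
import json


def add(a: float, b: float) -> float:
    return a + b


def word_count(text: str) -> int:
    return len(text.split())


# Hypothetical tool registry: maps tool names to Python callables.
TOOLS = {"add": add, "word_count": word_count}


def run_tool_call(raw_call: str) -> str:
    """Parse a JSON tool call and return the outcome as a JSON string."""
    try:
        call = json.loads(raw_call)
        func = TOOLS[call["name"]]
        result = func(**call["arguments"])
        return json.dumps({"ok": True, "result": result})
    except (json.JSONDecodeError, KeyError, TypeError) as exc:
        # Report the failure so the model can correct its next call.
        return json.dumps({"ok": False,
                           "error": f"{type(exc).__name__}: {exc}"})
```

Returning errors as data rather than raising keeps the loop going: the error message is appended to the context, and the model gets a chance to retry with a valid call.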

d. Multi-Agent Systems

- Coordination among multiple LLMs (agents) via standardized protocols, orchestrators, and context sharing, which is essential for complex, collaborative problem-solving and distributed AI applications.
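A minimal sketch of orchestration with context sharing follows. It assumes a sequential pipeline in which each agent reads a shared dictionary and writes its result back under its own name; the `run_pipeline` function and the two toy agents are hypothetical and stand in for LLM-backed agents.

```python
from typing import Callable

# An agent reads the shared context and returns its contribution.
Agent = Callable[[dict], str]


def researcher(ctx: dict) -> str:
    # Toy agent: a real one would call an LLM with ctx["task"].
    return f"notes on {ctx['task']}"


def writer(ctx: dict) -> str:
    # Reads what the researcher left in the shared context.
    return f"draft based on {ctx['researcher']}"


def run_pipeline(task: str, agents: list[tuple[str, Agent]]) -> dict:
    """Run agents in order, sharing one context dictionary between them."""
    shared = {"task": task}
    for name, agent in agents:
        shared[name] = agent(shared)
    return shared
```

For example, `run_pipeline("context engineering", [("researcher", researcher), ("writer", writer))])` would be written without the stray parenthesis as `run_pipeline("context engineering", [("researcher", researcher), ("writer", writer)])`, and the writer's output then builds on the researcher's notes. Real orchestrators add routing, parallelism, and message protocols on top of this basic shared-context idea.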

Key insights and research gaps

- Comprehension-generation asymmetry: LLMs with advanced context engineering can comprehend very sophisticated, multifaceted contexts, but they still struggle to generate output that matches that complexity or length.

- Integration and modularity: The best performance comes from modular architectures that combine multiple techniques (retrieval, memory, and tool use).

- Evaluation limitations: Current evaluation metrics and benchmarks (BLEU, ROUGE, etc.) often fail to capture the compositional, multi-step, and collaborative behaviors enabled by advanced context engineering. New benchmarks and dynamic, holistic evaluation paradigms are needed.

- Open research questions: Theoretical foundations, efficient scaling (especially computational), cross-modal and structured context integration, real-world deployment, safety, alignment, and ethical concerns remain open research challenges.

Applications and Impacts

Context engineering supports robust, domain-adaptive AI across:

- Long-document question answering

- Personalized digital assistants and memory-augmented agents

- Scientific, medical, and technical problem-solving

- Multi-agent collaboration in business, education, and research

Future directions

- Unified theory: Developing mathematical and information-theoretic frameworks.

- Scaling and efficiency: Innovations in attention mechanisms and memory management.

- Multimodal integration: Seamless coordination of text, vision, audio, and structured data.

- Robust, safe, and ethical development: Ensuring reliability, transparency, and fairness in real-world systems.

In summary: Context engineering is emerging as a pivotal discipline for guiding the next generation of LLM-based intelligent systems, shifting the focus from creative prompt writing to information optimization, system design, and the rigorous science of context-driven AI.


Michal Sutter is a data science expert with a Master's degree in Data Science from the University of Padova. With a solid foundation in statistical analysis, machine learning, and data engineering, Michal excels at transforming complex datasets into actionable insights.