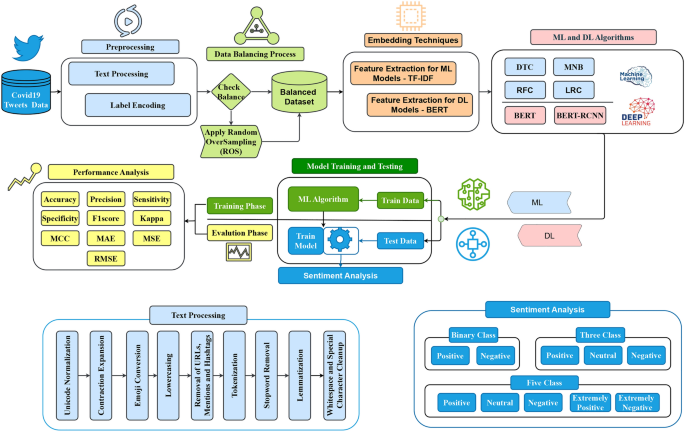

The proposed methodology for sentiment analysis of COVID-19 tweets follows a structured pipeline of preprocessing, data balancing, feature extraction, model training, and performance evaluation, as illustrated in Fig. 1. First, raw tweets undergo text preprocessing: Unicode normalization, contraction expansion, emoji conversion, lowercasing, removal of URLs, mentions, and hashtags, tokenization, stopword removal (excluding negations), lemmatization, and whitespace normalization. Label encoding then maps the sentiment labels to numerical values. To address class imbalance, the training data are balanced with Random OverSampling (ROS) so that every sentiment class is equally represented. The DL models use BERT embeddings as features, whereas the ML models use TF-IDF vectors. The dataset is split into training and test sets. The ML models (DTC, RFC, MNB, and LRC) are trained on the extracted TF-IDF features, and the DL models (BERT and BERT-LSTM) are trained on contextual BERT embeddings. Performance is evaluated using ACC, PREC, REC, F1, SPEC, KAPPA, MAE, MSE, and RMSE. Finally, the models classify sentiment into binary (positive, negative), three-class (positive, neutral, negative), and five-class (extremely positive, positive, neutral, negative, extremely negative) categories, providing a comprehensive framework for assessing public opinion on COVID-19 discussions across social networks.

The proposed hybrid (BERT-LSTM) architecture for sentiment analysis.

Dataset description

During the COVID-19 lockdown, public sentiment varied significantly, influencing social discourse across different regions. To capture these variations, we used a dataset of 44,955 tweets posted from several countries. The dataset was obtained from Kaggle, a publicly accessible data repository. The tweets reflect public emotions, opinions, and concerns during the pandemic, which makes the dataset valuable for sentiment analysis research. The dataset comprises four key attributes: Location, TweetAt, OriginalTweet, and Sentiment, which together provide the context needed to analyze the sentiment of tweets posted during the COVID-19 outbreak. Location specifies where the tweet was posted, and TweetAt records when it was posted. OriginalTweet contains the tweet text to be analyzed, and Sentiment gives its label (Extremely Positive, Positive, Neutral, Negative, or Extremely Negative), which forms the basis for the classification tasks in this research.

Sentiment analysis task

This dataset was employed in a sentiment analysis experiment, where we aimed to classify tweets into different sentiment categories. Sentiment analysis is crucial for understanding public opinion, tracking emotional trends, and assessing responses to events like the COVID-19 pandemic. The dataset was utilized in three different classification tasks:

-

Binary sentiment: Distinguishing between Positive and Negative sentiments.

-

Three-class sentiment: Identifying Positive, Neutral, and Negative Sentiments.

-

Five-class sentiment: Expanding classification to Extremely Positive, Positive, Neutral, Negative, and Extremely Negative categories for more granular sentiment detection.
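The section does not state how the three-class and binary labels are derived from the five original labels. One common convention, assumed here purely for illustration, merges each "Extremely" class into its base polarity and, for the binary task, drops Neutral tweets. The helper `map_label` and the toy tweets are hypothetical; Table 1 gives the actual per-task counts.

```python
# Hypothetical mapping from the five original labels to the coarser tasks.
# Assumption: "Extremely X" is merged into "X"; Neutral is dropped for binary.
FIVE_TO_THREE = {
    "Extremely Positive": "Positive",
    "Positive": "Positive",
    "Neutral": "Neutral",
    "Negative": "Negative",
    "Extremely Negative": "Negative",
}


def map_label(label, task):
    """Return the label for task 'five', 'three' or 'binary' (None = drop tweet)."""
    if task == "five":
        return label
    coarse = FIVE_TO_THREE[label]
    if task == "three":
        return coarse
    if task == "binary":
        return None if coarse == "Neutral" else coarse
    raise ValueError(f"unknown task: {task}")


tweets = [("tweet 1", "Extremely Positive"), ("tweet 2", "Negative"), ("tweet 3", "Neutral")]
binary = [(text, map_label(y, "binary")) for text, y in tweets if map_label(y, "binary")]
print(binary)  # [('tweet 1', 'Positive'), ('tweet 2', 'Negative')]
```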

Table 1 details the dataset distribution for each sentiment classification task, including the number of examples available for training and testing.

Challenges in dataset

The sentiment dataset poses several challenges. Class imbalance makes it difficult for the model to learn the underrepresented classes properly. The dataset may also contain noisy or mislabeled tweets, which degrade the quality of training. Variations in the text, such as inconsistent formatting, spelling, and character encoding, can introduce inconsistencies that hinder model performance. Addressing these challenges requires thorough text preprocessing, data balancing, and careful evaluation to ensure reliable and accurate results.

Text preprocessing

In this sentiment analysis classification experiment, a structured text preprocessing pipeline is applied to refine raw tweets for ML models. The steps include Unicode normalization to remove accents and special characters, contraction expansion, and emoji conversion to retain sentiment value. Text is standardized to lowercase, and social media artifacts such as URLs, user mentions, and hashtags are removed. Tokenization, stopword removal (excluding negations), and lemmatization are performed to refine linguistic representation. Finally, redundant spaces and special characters are eliminated to ensure clean formatting. This preprocessing enhances the quality of textual data, enabling effective sentiment classification.

-

1.

Unicode normalization: Ensures consistency by removing accents and special characters.

-

2.

Contraction expansion: Converts contractions (e.g., “can’t” to “cannot”) into their full forms.

-

3.

Emoji conversion: Replaces emojis with descriptive text to preserve sentiment meaning.

-

4.

Lowercasing: Standardizes text by converting all characters to lowercase.

-

5.

Removal of URLs, Mentions, and Hashtags: Eliminates social media artifacts irrelevant to sentiment analysis.

-

6.

Tokenization: This process divides the text into individual words or tokens for further analysis.

-

7.

Stopword removal: Removes common stopwords while retaining negations critical for sentiment analysis.

-

8.

Lemmatization: Reduces words to their base forms to improve generalization.

-

9.

Whitespace and special character cleanup: Eliminates redundant spaces and non-essential symbols for clean formatting.
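The nine steps above can be sketched as a single dependency-free function, applied in the listed order. This is a minimal sketch, not the authors' code: the lookup tables are tiny hypothetical stand-ins for full resources (for example the `contractions` and `emoji` packages and NLTK's stopword list and lemmatizer), and the name `preprocess` is ours. Note that step 1 here drops only combining accent marks; a stricter ASCII-only normalization would delete emojis before step 3 could convert them.

```python
import re
import unicodedata

# Tiny illustrative lookup tables (hypothetical stand-ins for real resources).
CONTRACTIONS = {"can't": "cannot", "won't": "will not", "don't": "do not", "it's": "it is"}
EMOJI_NAMES = {"\U0001F637": "face_with_medical_mask", "\U0001F60A": "smiling_face"}
NEGATIONS = {"no", "not", "nor", "never", "cannot"}
STOPWORDS = {"i", "a", "an", "the", "at", "is", "are", "to", "of", "and", "in",
             "on", "for", "it", "this", "that", "no", "not", "nor"} - NEGATIONS
LEMMAS = {"masks": "mask", "prices": "price", "buying": "buy", "shelves": "shelf"}

CONTRACTION_RE = re.compile(r"\b(" + "|".join(map(re.escape, CONTRACTIONS)) + r")\b",
                            flags=re.IGNORECASE)
NOISE_RE = re.compile(r"https?://\S+|www\.\S+|@\w+|#\w+")


def preprocess(text):
    # 1. Unicode normalization: decompose characters, then drop combining
    #    accent marks (emojis are not combining marks, so they survive).
    text = unicodedata.normalize("NFKD", text)
    text = "".join(ch for ch in text if not unicodedata.combining(ch))
    # 2. Contraction expansion (typographic apostrophes mapped to ' first).
    text = text.replace("\u2019", "'")
    text = CONTRACTION_RE.sub(lambda m: CONTRACTIONS[m.group(0).lower()], text)
    # 3. Emoji conversion: replace each known emoji with a descriptive word.
    for emo, name in EMOJI_NAMES.items():
        text = text.replace(emo, f" {name} ")
    # 4. Lowercasing.
    text = text.lower()
    # 5. Remove URLs, @mentions and #hashtags.
    text = NOISE_RE.sub(" ", text)
    # 6. Tokenization: keep runs of ASCII letters, digits and underscores.
    tokens = re.findall(r"[a-z0-9_]+", text)
    # 7. Stopword removal; negations are not in STOPWORDS, so they are kept.
    tokens = [t for t in tokens if t not in STOPWORDS]
    # 8. Lemmatization via a toy lookup (a real pipeline would use e.g. WordNet).
    tokens = [LEMMAS.get(t, t) for t in tokens]
    # 9. Whitespace cleanup: rejoin with single spaces.
    return " ".join(tokens)


print(preprocess("I can\u2019t find masks \U0001F637 at @store https://x.co #covid19"))
# cannot find mask face_with_medical_mask
```

Keeping negations such as "not" and "cannot" matters because removing them would turn "not good" into "good" and flip the sentiment.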

Label encoding

Label encoding converts the categorical sentiment labels into numerical values that the models can process. The following encoding schemes are used, depending on the sentiment granularity:

-

1.

Binary classification: The sentiment labels are encoded as shown in Table 2.

Table 2 Binary label encoding.

-

2.

Three-class classification: For three-class sentiment analysis, the labels are encoded as shown in Table 3.

Table 3 Three-class label encoding.

-

3.

Five-class classification: For finer-grained classification, the five sentiment categories are encoded as shown in Table 4.

Table 4 Five-class label encoding.

This encoding process ensures that the sentiment categories are effectively represented as numerical values, making them suitable for ML model training and evaluation.
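A minimal sketch of label encoding, assuming the classes are numbered in alphabetical order, which is the ordering scikit-learn's `LabelEncoder` uses. Whether this matches the integer values in Tables 2 to 4 is an assumption; the helper names are hypothetical.

```python
def build_label_encoder(labels):
    """Map each distinct label to an integer, in sorted (alphabetical) order."""
    return {label: idx for idx, label in enumerate(sorted(set(labels)))}


def encode(labels, mapping):
    return [mapping[label] for label in labels]


def decode(codes, mapping):
    inverse = {idx: label for label, idx in mapping.items()}
    return [inverse[c] for c in codes]


three_class = ["Positive", "Neutral", "Negative", "Positive"]
mapping = build_label_encoder(three_class)
codes = encode(three_class, mapping)
print(mapping, codes)  # {'Negative': 0, 'Neutral': 1, 'Positive': 2} [2, 1, 0, 2]
```

Keeping the inverse mapping lets predicted integers be turned back into sentiment names when reporting results.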

Data balancing

Data balancing addresses class imbalance in a dataset, which can lead to biased models. When the class distribution is highly skewed, a model may become biased toward the majority class and perform poorly on the minority classes. To mitigate this issue, we applied Random Oversampling (ROS), in which minority-class samples are randomly duplicated until each class matches the number of samples in the majority class.

Mathematically, ROS involves the following steps:

Given a dataset with classes \(C_1, C_2, \dots , C_k\) and corresponding class counts \(N(C_1), N(C_2), \dots , N(C_k)\), let \(N_{\text {majority}} = \max (N(C_1), N(C_2), \dots , N(C_k))\) be the count of the majority class. For each minority class \(C_j\), ROS creates \(N_{\text {majority}} - N(C_j)\) new instances by randomly selecting samples from \(C_j\) with replacement, so that every class ends up with \(N_{\text {majority}}\) samples.
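The ROS procedure just described can be sketched in plain Python: for each class \(C_j\) it draws \(N_{\text {majority}} - N(C_j)\) extra samples with replacement. This is an illustrative sketch with a hypothetical function name; in practice the same behavior is available as `RandomOverSampler(...).fit_resample(X, y)` in the imbalanced-learn library. As Table 5 indicates, it is meant to be applied to the training data only.

```python
import random
from collections import Counter, defaultdict


def random_oversample(samples, labels, seed=42):
    """Duplicate minority-class samples (with replacement) until every class
    has N_majority samples. Returns new (samples, labels) lists."""
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for x, y in zip(samples, labels):
        by_class[y].append(x)
    n_majority = max(len(xs) for xs in by_class.values())
    out_x, out_y = list(samples), list(labels)      # keep all original samples
    for y, xs in by_class.items():
        extra = n_majority - len(xs)                # copies this class still needs
        out_x.extend(rng.choices(xs, k=extra))      # sampling with replacement
        out_y.extend([y] * extra)
    return out_x, out_y


X = ["t1", "t2", "t3", "t4", "t5", "t6"]
y = ["Negative", "Negative", "Negative", "Negative", "Neutral", "Positive"]
X_bal, y_bal = random_oversample(X, y)
print(dict(sorted(Counter(y_bal).items())))  # {'Negative': 4, 'Neutral': 4, 'Positive': 4}
```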

The motivation for using ROS in sentiment analysis is that it allows the model to better learn from underrepresented classes, which is essential for tasks such as sentiment classification where certain sentiments (e.g., “Neutral” or “Extremely Positive”) may appear less frequently. By balancing the classes, the model can generalize better, improving its performance across all sentiment categories.

Table 5 shows the class distribution of the training data after applying ROS.

Dataset partition

The dataset is divided into a training set of 41,157 samples and a test set of 3,798 samples. To create a validation set, we used 90% of the training set for training and held out the remaining 10% for validation. Table 6 shows the resulting distribution.
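As a worked example of the 90/10 split, holding out 10% of the 41,157 training tweets and rounding the validation size up (as scikit-learn's `train_test_split` does for a float `test_size`) gives 4,116 validation and 37,041 training samples. The exact figures in Table 6 depend on the rounding rule and on whether the split was stratified, which the text does not specify. The helper below is a hypothetical sketch; applying ROS after this split keeps duplicated tweets out of the validation set.

```python
import math
import random


def train_val_split(items, val_fraction=0.1, seed=42):
    """Shuffle, then hold out ceil(val_fraction * n) items for validation."""
    rng = random.Random(seed)
    shuffled = list(items)
    rng.shuffle(shuffled)
    n_val = math.ceil(val_fraction * len(shuffled))
    return shuffled[n_val:], shuffled[:n_val]


train, val = train_val_split(range(41157))
print(len(train), len(val))  # 37041 4116
```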

Embedding techniques

In our experiment, we employ two distinct text representations, each suited to a different model family: BERT embeddings for the DL models and TF-IDF vectors for the ML models. BERT embeddings are contextualized token representations produced by the pre-trained BERT model. Because BERT processes text bidirectionally, the representation of each word depends on its surrounding context, which allows the model to handle complex linguistic structures. This is particularly useful for sentiment analysis, where context often determines polarity (for example, "not bad" versus "bad"). These embeddings are used in our DL models, such as BERT-LSTM. In contrast, TF-IDF weights each word by its frequency in a document relative to its frequency across the entire dataset, emphasizing terms that are distinctive to particular documents. The resulting sparse representation suits feature-based models such as RFC and LRC. Pairing each model family with the representation it handles best lets each approach play to its strengths.

The feature extraction procedures used for the DL and ML models are described below.

Feature extraction for DL models

In this study, we use the BERT tokenizer for feature extraction when building the BERT-LSTM model. BERT, a pre-trained Transformer model, has proven highly effective across a wide range of NLP applications. We use the BertTokenizer from the transformers library, initialized with the model identifier “bert-base-uncased”, to convert input sentences into tokenized representations that BERT can process. The tokenizer splits text into a sequence of tokens, which are then mapped to their token IDs. These token IDs are fed to the BERT model, which generates a contextualized embedding for each token in a sentence.

The tokenizer processes the sentences with truncation and padding to ensure a consistent sequence length, specified as 128 tokens. Padding is added to sentences shorter than this length, and longer sentences are truncated. The tokenizer outputs several components, including input_ids, attention_mask, and token_type_ids. The input_ids represent the tokenized words, while the attention_mask indicates which tokens are padding (0) and which are actual data (1). The token_type_ids are used to differentiate between different sentences in tasks like question-answering. These components are converted into tensors and moved to the appropriate device (GPU or CPU) for model processing.

We also define a collate_fn function, which prepares the batch for training by handling tokenization and padding for each sentence, converting the corresponding labels to tensor format, and ensuring that the output is compatible with the BERT model. A PyTorch DataLoader is used to load the dataset efficiently, with batching and shuffling enabled to improve training performance.
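The tokenizer call described above is typically written as `tokenizer(sentences, padding="max_length", truncation=True, max_length=128, return_tensors="pt")`, and the loader as `DataLoader(dataset, batch_size=64, shuffle=True, collate_fn=collate_fn)`. Because running those requires downloading the model, the sketch below reproduces only the resulting padding and truncation logic in plain Python so the three outputs can be inspected. It assumes the input is already WordPiece-tokenized into IDs (the real tokenizer also performs that step); the function names are hypothetical, and 101, 102, and 0 are the [CLS], [SEP], and [PAD] IDs in the bert-base-uncased vocabulary.

```python
CLS_ID, SEP_ID, PAD_ID = 101, 102, 0   # special-token IDs in bert-base-uncased


def pad_and_truncate(token_ids, max_length=128):
    """Mimic truncation=True, padding="max_length" for a single sentence."""
    body = token_ids[: max_length - 2]              # leave room for [CLS] and [SEP]
    input_ids = [CLS_ID] + body + [SEP_ID]
    attention_mask = [1] * len(input_ids)           # 1 = real token
    n_pad = max_length - len(input_ids)
    input_ids += [PAD_ID] * n_pad
    attention_mask += [0] * n_pad                   # 0 = padding
    token_type_ids = [0] * max_length               # single sentence: segment 0 only
    return {"input_ids": input_ids, "attention_mask": attention_mask,
            "token_type_ids": token_type_ids}


def collate(batch, max_length=128):
    """Hypothetical collate_fn: batch is a list of (token_ids, label) pairs.
    A real one would wrap each list in torch.tensor before returning it."""
    encoded = [pad_and_truncate(ids, max_length) for ids, _ in batch]
    out = {key: [e[key] for e in encoded]
           for key in ("input_ids", "attention_mask", "token_type_ids")}
    out["labels"] = [label for _, label in batch]
    return out


# Arbitrary token IDs, padded to a short length for readability.
print(pad_and_truncate([2023, 2003], max_length=8)["input_ids"])
# [101, 2023, 2003, 102, 0, 0, 0, 0]
```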

The main benefit of using BERT for feature extraction in the BERT-LSTM model is its ability to generate rich, context-dependent word embeddings. BERT processes sentences bidirectionally, so each word's representation is influenced by both the words preceding and following it. This enables the model to capture intricate linguistic patterns and improves performance on tasks where context is essential, such as sentiment analysis. The BERT-LSTM model then passes the BERT embeddings through a bidirectional LSTM layer, which models sequential dependencies across the tokenized input and strengthens the model's ability to capture both local and longer-range patterns in the text. By combining the pre-trained knowledge of BERT with the sequential modeling capacity of the LSTM, this hybrid approach is well suited to sentiment classification on complex datasets with a wide range of language expressions.

Feature extraction for ML models

Feature extraction plays a crucial role in ML by converting raw data into a structured format suitable for ML algorithms. In NLP tasks, such as sentiment analysis, one of the most commonly used techniques for extracting features from text data is TF-IDF. This method transforms textual information into numerical vectors by assessing the significance of words within a document in relation to the entire dataset.

TF-IDF is computed in two key steps:

-

1.

Term frequency (TF): This measures how often a specific word appears in a document. The higher the frequency, the more relevant the word may be to the document’s context. It is mathematically expressed as:

$$\begin{aligned} \text {TF}(w, d) = \frac{\text {Number of times word } w \text { appears in document } d}{\text {Total words in document } d} \end{aligned}$$

where \(w\) represents a word, and \(d\) denotes a document.

-

2.

Inverse document frequency (IDF): This evaluates the uniqueness of a word across multiple documents. Words that appear frequently across many documents contribute less to distinguishing content, while rarer words carry more weight. IDF is defined as:

$$\begin{aligned} \text {IDF}(w) = \log \left( \frac{N}{\text {df}(w)} \right) \end{aligned}$$

where \(N\) is the total number of documents, and \(\text {df}(w)\) represents the number of documents containing the word \(w\).

The final TF-IDF score is computed by multiplying these two values:

$$\begin{aligned} \text {TF-IDF}(w, d) = \text {TF}(w, d) \times \text {IDF}(w) \end{aligned}$$

This score highlights the relevance of a word in a specific document relative to the entire dataset.
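A small worked example of the three formulas above, written as a plain-Python sketch with a hypothetical function name. It assumes the natural logarithm for IDF, since the base is not specified. Note that scikit-learn's `TfidfVectorizer` differs by default: it uses raw counts for TF, a smoothed IDF of \(\ln\frac{1+N}{1+\text{df}(w)} + 1\), and L2-normalizes each row, so its absolute scores will not match these.

```python
import math
from collections import Counter


def tf_idf(docs):
    """TF-IDF as defined above; docs is a list of token lists (natural log)."""
    n_docs = len(docs)
    df = Counter(w for doc in docs for w in set(doc))    # documents containing w
    scores = []
    for doc in docs:
        counts = Counter(doc)
        total = len(doc)                                 # total words in the document
        scores.append({w: (c / total) * math.log(n_docs / df[w])
                       for w, c in counts.items()})
    return scores


docs = [["covid", "mask", "mask"], ["covid", "vaccine"]]
scores = tf_idf(docs)
print(round(scores[0]["mask"], 3), scores[0]["covid"])  # 0.462 0.0
```

"covid" appears in every document, so its IDF is \(\log(2/2) = 0\) and its score vanishes, while "mask" gets \(\frac{2}{3}\ln 2 \approx 0.462\).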

For our implementation, we utilize the TfidfVectorizer from the sklearn.feature_extraction.text module to transform textual data into numerical feature vectors. The TfidfVectorizer automatically calculates TF-IDF scores for words across the dataset and generates a sparse matrix, where each row corresponds to a document, and each column represents a unique term in the vocabulary. This matrix is then used as input for ML models, such as LRC or DTC.

The primary benefits of using TF-IDF for feature extraction in ML models include:

-

Weighting term importance: By emphasizing rare and informative terms while down-weighting common words, TF-IDF helps ML models focus on the most relevant features.

-

Handling high-dimensional data: TF-IDF represents the large vocabulary of text data as sparse numerical vectors, which can be stored and processed efficiently even when the vocabulary is very large.

-

Improved accuracy for text classification: TF-IDF weights each word by its importance within a document relative to the whole corpus, which helps the models focus on discriminative words and can improve performance in classification tasks such as sentiment analysis.

Using TF-IDF features for the ML models provides classical baselines that complement our DL models, allowing us to compare sparse, hand-crafted feature representations with learned contextual embeddings.

Machine and deep learning algorithms

In our experiments, we evaluated both ML and DL models to identify the best-performing approach. The traditional ML techniques were DTC, MNB, RFC, and LRC, and the DL models were BERT and BERT-LSTM; the performance of BERT-LSTM was compared with that of the standalone BERT model to assess its effectiveness. Together, these offer a comprehensive comparison between ML and DL approaches.

Decision tree classifier (DTC)

The Decision Tree classifier is a rule-based model that recursively partitions the dataset by selecting features that best differentiate the target classes. The selection process aims to reduce impurity at each node, which is typically measured using entropy or the Gini index. The mathematical formulations for these impurity measures are:

$$\begin{aligned} H(D) = -\sum _{i=1}^{k} p_i \log _2 p_i \end{aligned}$$

where \(p_i\) represents the probability of class \(i\) in dataset \(D\).

$$\begin{aligned} Gini(D) = 1 - \sum _{i=1}^{k} p_i^2 \end{aligned}$$

where \(p_i\) is the probability of class \(i\) in \(D\).

At each node, the split that yields the lowest size-weighted entropy or Gini index across the resulting child nodes (equivalently, the largest reduction in impurity) is selected. Decision Trees are widely used due to their interpretability, ability to handle various data types, and capacity to capture non-linear relationships.
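The two impurity measures and the split criterion can be checked numerically with a short sketch (function names are ours). A balanced two-class node has entropy 1 bit and Gini index 0.5, and a split that separates the classes perfectly yields a weighted child impurity of 0.

```python
import math
from collections import Counter


def entropy(labels):
    """H(D) = -sum_i p_i log2 p_i."""
    n = len(labels)
    return -sum((c / n) * math.log2(c / n) for c in Counter(labels).values())


def gini(labels):
    """Gini(D) = 1 - sum_i p_i^2."""
    n = len(labels)
    return 1.0 - sum((c / n) ** 2 for c in Counter(labels).values())


def split_impurity(left, right, measure=gini):
    """Size-weighted impurity of the two child nodes produced by a split."""
    n = len(left) + len(right)
    return len(left) / n * measure(left) + len(right) / n * measure(right)


parent = ["pos", "pos", "neg", "neg"]
print(entropy(parent), gini(parent), split_impurity(["pos", "pos"], ["neg", "neg"]))
# 1.0 0.5 0.0
```

The impurity reduction of a split is the parent's impurity minus `split_impurity`; since the parent term is fixed at a given node, minimizing the weighted child impurity and maximizing the reduction pick the same split.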

Multinomial naive bayes (MNB)

The Multinomial Naive Bayes classifier applies Bayes’ theorem to estimate the probability of a class given a set of input features. It assumes that feature occurrences are independent given the class. The probability of class \(C\) given an input \(X\) is calculated as follows:

$$\begin{aligned} P(C \mid X) = \frac{P(C) \prod _{i=1}^{n} P(x_i \mid C)}{P(X)} \end{aligned}$$

where:

-

\(C\) is a class label.

-

\(X = (x_1, x_2, \dots , x_n)\) represents the input feature vector.

-

\(P(C \mid X)\) is the posterior probability of class \(C\).

-

\(P(C)\) is the prior probability of class \(C\).

-

\(P(x_i \mid C)\) is the likelihood of feature \(x_i\) occurring in class \(C\).

-

\(P(X)\) is the normalizing constant.

Naive Bayes is computationally efficient, performs well with high-dimensional data, and is particularly effective when dealing with word frequency-based features.
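A minimal plain-Python sketch of Multinomial Naive Bayes on word counts, working in log space to avoid underflow. The denominator \(P(X)\) is identical for every class, so it is dropped when taking the argmax. Add-one (Laplace) smoothing is an assumption here, matching the default `alpha=1.0` of scikit-learn's `MultinomialNB`; the function names and toy data are hypothetical.

```python
import math
from collections import Counter, defaultdict


def train_mnb(docs, labels, alpha=1.0):
    """Estimate log P(C) and log P(w | C) with add-alpha smoothing."""
    vocab = {w for doc in docs for w in doc}
    class_counts = Counter(labels)
    word_counts = defaultdict(Counter)               # class -> word -> count
    for doc, label in zip(docs, labels):
        word_counts[label].update(doc)
    log_prior = {c: math.log(n / len(labels)) for c, n in class_counts.items()}
    log_lik = {}
    for c in class_counts:
        denom = sum(word_counts[c].values()) + alpha * len(vocab)
        log_lik[c] = {w: math.log((word_counts[c][w] + alpha) / denom) for w in vocab}
    return log_prior, log_lik


def predict_mnb(model, doc):
    """argmax_C [log P(C) + sum_i log P(x_i | C)]; unseen words are ignored."""
    log_prior, log_lik = model
    scores = {c: log_prior[c] + sum(log_lik[c][w] for w in doc if w in log_lik[c])
              for c in log_prior}
    return max(scores, key=scores.get)


docs = [["good", "great"], ["good"], ["bad", "awful"], ["bad"]]
labels = ["pos", "pos", "neg", "neg"]
model = train_mnb(docs, labels)
print(predict_mnb(model, ["good"]), predict_mnb(model, ["awful", "bad"]))  # pos neg
```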

Random forest classifier (RFC)

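Random Forest is an ensemble learning method that constructs multiple Decision Trees and aggregates their predictions to improve accuracy. It employs bootstrap aggregation (bagging): each tree is trained on a bootstrap sample drawn with replacement from the training set, and only a random subset of features is considered at each split. For classification, the final prediction is determined by majority voting over the trees:

$$\begin{aligned} {\hat{y}} = \operatorname {mode} \{ f_1(X), f_2(X), \dots , f_T(X) \} \end{aligned}$$

where:

-

\(T\) represents the total number of trees.

-

\(f_t(X)\) is the class predicted by the \(t\)-th tree for input \(X\).

The averaged form \(\frac{1}{T} \sum _{t=1}^{T} f_t(X)\) applies when the trees output regression values or class-probability estimates; in the latter case, the class with the highest mean probability is predicted.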

Random Forest reduces overfitting compared to a single Decision Tree and provides feature importance scores, which help in understanding the impact of individual variables.
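The two ingredients of a Random Forest that differ from a single tree, bootstrap sampling and vote aggregation, can be sketched in a few lines. This is not a full forest (tree induction is omitted), and the function names and tree outputs are hypothetical. scikit-learn's `RandomForestClassifier` uses the soft form, predicting the class with the highest mean probability across trees; the example shows that hard and soft voting can disagree.

```python
import random
from collections import Counter


def bootstrap_indices(n, rng):
    """Draw n row indices uniformly with replacement: one tree's training sample."""
    return [rng.randrange(n) for _ in range(n)]


def majority_vote(predictions):
    """Hard voting: the class predicted by the most trees (ties: first seen)."""
    return Counter(predictions).most_common(1)[0][0]


def soft_vote(tree_probas):
    """Soft voting: average each class's probability over trees, take the argmax."""
    classes = tree_probas[0].keys()
    mean = {c: sum(p[c] for p in tree_probas) / len(tree_probas) for c in classes}
    return max(mean, key=mean.get)


rng = random.Random(0)
sample = bootstrap_indices(10, rng)          # drawn with replacement, so rows can repeat
trees = [{"pos": 0.9, "neg": 0.1}, {"pos": 0.4, "neg": 0.6}, {"pos": 0.4, "neg": 0.6}]
hard = majority_vote([max(p, key=p.get) for p in trees])
print(hard, soft_vote(trees))  # neg pos
```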

Logistic regression classifier (LRC)

Logistic Regression is a statistical model for binary classification. It predicts the probability that an input belongs to the positive class using the logistic function:

$$\begin{aligned} P(y=1 \mid X) = \frac{1}{1 + e^{-(w^T X + b)}} \end{aligned}$$

where:

-

\(P(y=1 \mid X)\) is the probability that the input \(X\) belongs to class 1.

-

\(w\) is the weight vector.

-

\(X\) is the feature vector.

-

\(b\) is the bias term.

-

\(e\) is Euler’s number, the base of the natural logarithm.

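The model parameters (\(w\) and \(b\)) are optimized by minimizing the negative log-likelihood (log loss) using gradient descent. Logistic Regression is favored for its simplicity, interpretability, and effectiveness when the relationship between the features and the log-odds of the output is approximately linear. For the three- and five-class tasks, it extends to multiple classes either through a softmax over per-class linear scores (multinomial logistic regression) or through one-vs-rest binary classifiers.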
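A minimal sketch of binary logistic regression trained by batch gradient descent on the mean negative log-likelihood. The gradient with respect to the logit is simply \(P(y=1 \mid X) - y\). The learning rate, epoch count, toy data, and function names are hypothetical choices for illustration, not the settings used in this study.

```python
import math


def sigmoid(z):
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    ez = math.exp(z)
    return ez / (1.0 + ez)


def predict_proba(w, b, x):
    """P(y = 1 | x) = sigmoid(w^T x + b)."""
    return sigmoid(sum(wj * xj for wj, xj in zip(w, x)) + b)


def fit_logreg(X, y, lr=0.5, epochs=1000):
    """Batch gradient descent on the mean negative log-likelihood."""
    w, b, n = [0.0] * len(X[0]), 0.0, len(X)
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * len(w), 0.0
        for xi, yi in zip(X, y):
            err = predict_proba(w, b, xi) - yi       # d(loss)/d(logit) for one sample
            grad_w = [g + err * xj for g, xj in zip(grad_w, xi)]
            grad_b += err
        w = [wj - lr * g / n for wj, g in zip(w, grad_w)]
        b -= lr * grad_b / n
    return w, b


X = [[0.0], [1.0], [2.0], [3.0]]
y = [0, 0, 1, 1]
w, b = fit_logreg(X, y)
print(predict_proba(w, b, [0.0]) < 0.5, predict_proba(w, b, [3.0]) > 0.5)  # True True
```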

BERT model

BERT (Bidirectional Encoder Representations from Transformers) is a deep learning model based on the Transformer architecture. It leverages self-attention mechanisms to process text in a bidirectional manner, considering both preceding and succeeding words in a sentence. The self-attention mechanism is computed as:

$$\begin{aligned} \text {Attention}(Q, K, V) = \text {softmax}\left( \frac{Q K^T}{\sqrt{d_k}} \right) V \end{aligned}$$

where:

-

\(Q\) (query), \(K\) (key), and \(V\) (value) are matrices derived from the input embeddings.

-

\(d_k\) represents the dimension of the key vectors.

BERT undergoes pre-training through two key tasks: Masked Language Modeling (MLM), where certain words in a sentence are masked and predicted, and Next Sentence Prediction (NSP), where the model learns sentence relationships. Fine-tuning BERT on downstream tasks such as sentiment analysis enhances its ability to capture contextual meanings in text data.

By utilizing bidirectional context, BERT significantly improves performance across various NLP tasks, outperforming traditional word embedding models in terms of language understanding.
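The attention formula given earlier in this subsection can be made concrete with a single-head, plain-Python sketch on lists of row vectors. It omits the learned projections that produce \(Q\), \(K\), and \(V\) and the multi-head split (BERT-base uses 12 heads of dimension 64 each); the function names are ours.

```python
import math


def softmax(row):
    m = max(row)                                   # subtract the max for stability
    exps = [math.exp(v - m) for v in row]
    total = sum(exps)
    return [e / total for e in exps]


def attention(Q, K, V):
    """softmax(Q K^T / sqrt(d_k)) V, with matrices given as lists of rows."""
    d_k = len(K[0])
    scores = [[sum(q * k for q, k in zip(q_row, k_row)) / math.sqrt(d_k) for k_row in K]
              for q_row in Q]
    weights = [softmax(row) for row in scores]     # each row sums to 1
    return [[sum(wt * v for wt, v in zip(w_row, v_col)) for v_col in zip(*V)]
            for w_row in weights]


# A query orthogonal to both keys attends to them equally.
print(attention([[0.0, 0.0]], [[1.0, 0.0], [0.0, 1.0]], [[1.0, 0.0], [0.0, 1.0]]))
# [[0.5, 0.5]]
```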

BERT-LSTM model

The BERT-LSTM model is a hybrid deep learning approach that combines the Bidirectional Encoder Representations from Transformers (BERT) with a Long Short-Term Memory (LSTM) network to enhance sentiment analysis of COVID-19-related tweets. BERT is pre-trained on large-scale textual data and generates contextual embeddings that capture the semantic meaning of words within a sentence. However, while BERT effectively models word-level dependencies, it does not explicitly retain sequential information across longer contexts. To address this, we integrate an LSTM layer after BERT, which allows the model to capture long-term dependencies and improve sentiment classification accuracy. The bidirectional LSTM (BiLSTM) further enhances the learning process by processing text sequences in both forward and backward directions, ensuring a richer understanding of sentiment patterns in tweets.

This hybrid architecture improves upon traditional machine learning models and standalone deep learning models like BERT by leveraging both contextual embeddings and sequential modeling. Unlike conventional machine learning approaches, which require manual feature extraction, BERT-LSTM automatically learns meaningful representations from raw text. Additionally, compared to BERT-only models, the inclusion of LSTM ensures that sequential dependencies are preserved, making it particularly effective for short, informal texts like tweets. By employing this model, we achieve superior performance in sentiment classification, capturing nuanced emotions and opinions expressed on social media regarding the COVID-19 pandemic.

Mathematical explanation of BERT-LSTM model

The BERT-LSTM model combines BERT’s powerful contextual word representations with LSTM’s ability to model long-range dependencies in sequential data. Mathematically, the model can be expressed as follows:

BERT embedding representation

Given an input tweet represented as a sequence of tokens:

$$\begin{aligned} X = [x_1, x_2, \dots , x_n] \end{aligned}$$

(1)

BERT encodes each token \(x_i\) into a contextualized embedding \(h_i\) using a multi-layer Transformer-based architecture:

$$\begin{aligned} H = BERT(X) = [h_1, h_2, \dots , h_n] \end{aligned}$$

(2)

where \(H \in {\mathbb {R}}^{n \times d}\), and \(d\) is the hidden dimension (typically 768 for BERT-base). The special [CLS] token embedding, denoted as \(h_{CLS}\), is often used as a sentence representation.

LSTM for sequential modeling

The embeddings from BERT are fed into a bidirectional LSTM (BiLSTM) layer, which processes the sequence in both forward and backward directions:

$$\begin{aligned} & \overrightarrow{h_t} = LSTM_f(h_t, \overrightarrow{h_{t-1}}) \end{aligned}$$

(3)

$$\begin{aligned} & \overleftarrow{h_t} = LSTM_b(h_t, \overleftarrow{h_{t+1}}) \end{aligned}$$

(4)

The final hidden state representation at each time step is the concatenation of both directions:

$$\begin{aligned} h_t^{LSTM} = [\overrightarrow{h_t}; \overleftarrow{h_t}] \end{aligned}$$

(5)

where \(h_t^{LSTM} \in {\mathbb {R}}^{2d}\), capturing both past and future dependencies.
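Equations (3) to (5) can be traced with a small sketch that runs one cell left to right, another right to left, and concatenates the two states at each step. The running-sum cell below is a hypothetical stand-in for a real LSTM cell (which has input, forget, and output gates), chosen so the directions are easy to see. In PyTorch the whole layer is `torch.nn.LSTM(input_size=768, hidden_size=768, batch_first=True, bidirectional=True)`, whose per-step output has \(2 \times 768 = 1536\) features.

```python
def bidirectional(inputs, cell_fwd, cell_bwd, h0):
    """Eqs. (3)-(5): run cell_fwd over t = 1..n and cell_bwd over t = n..1,
    then concatenate the two hidden states at every time step."""
    forward, h = [], h0
    for x in inputs:
        h = cell_fwd(x, h)
        forward.append(h)
    backward, h = [None] * len(inputs), h0
    for t in reversed(range(len(inputs))):
        h = cell_bwd(inputs[t], h)
        backward[t] = h
    return [f + b for f, b in zip(forward, backward)]   # list concat = [h_fwd; h_bwd]


def running_sum_cell(x, h):
    """Hypothetical stand-in for an LSTM cell: the state is a running sum."""
    return [hi + xi for hi, xi in zip(h, x)]


out = bidirectional([[1.0], [2.0], [3.0]], running_sum_cell, running_sum_cell, h0=[0.0])
print(out)  # [[1.0, 6.0], [3.0, 5.0], [6.0, 3.0]]
```

Each concatenated state thus summarizes everything to its left (first half) and everything to its right (second half).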

Pooling and classification

To obtain a fixed-length representation for sentiment classification, we apply element-wise max pooling over the time steps of the LSTM hidden states:

$$\begin{aligned} h_{pool} = \max _t h_t^{LSTM} \end{aligned}$$

(6)

Then, we concatenate this pooled output with the [CLS] embedding from BERT:

$$\begin{aligned} H_{final} = [h_{CLS}; h_{pool}] \end{aligned}$$

(7)

Finally, the classification layer maps this representation to sentiment classes using a fully connected layer with softmax activation:

$$\begin{aligned} {\hat{y}} = \text {Softmax}(W H_{final} + b) \end{aligned}$$

(8)

where \(W\) and \(b\) are trainable parameters, and \({\hat{y}}\) represents the predicted sentiment probabilities (e.g., Positive, Neutral, Negative).
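Equations (6) to (8) can be sketched as a plain-Python classification head. With \(d = 768\), \(h_{pool}\) has 1,536 dimensions and \(H_{final}\) has \(768 + 1536 = 2304\), so \(W\) is a \(K \times 2304\) matrix for \(K\) = 2, 3, or 5 classes. The toy example uses \(d = 1\) to keep numbers readable; the weights and function names are hypothetical.

```python
import math


def max_pool(states):
    """Eq. (6): element-wise max over time steps of the BiLSTM outputs."""
    return [max(column) for column in zip(*states)]


def classify(h_cls, lstm_states, W, b):
    """Eqs. (7)-(8): concatenate [h_CLS; h_pool], apply W x + b, then softmax."""
    h_final = h_cls + max_pool(lstm_states)          # list concatenation
    logits = [sum(w * x for w, x in zip(row, h_final)) + bias for row, bias in zip(W, b)]
    m = max(logits)                                  # subtract max for stability
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


# Toy sizes: d = 1, so h_CLS has 1 value, each BiLSTM state 2d = 2, H_final 3d = 3.
h_cls = [1.0]
lstm_states = [[0.0, 2.0], [3.0, -1.0]]
W = [[1.0, 0.0, 0.0], [0.0, 0.0, 0.0]]               # K = 2 classes
b = [0.0, 0.0]
probs = classify(h_cls, lstm_states, W, b)
print(max_pool(lstm_states), [round(p, 3) for p in probs])  # [3.0, 2.0] [0.731, 0.269]
```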

This mathematical formulation highlights how BERT and LSTM work together to enhance the sentiment analysis of COVID-19 tweets, ensuring both deep contextual representation and sequential information retention.

Benefits of BERT-LSTM

BERT-LSTM effectively combines the strengths of BERT’s contextual word representations with LSTM’s ability to model long-term dependencies in sequential data. While BERT captures rich contextual meanings, it lacks explicit sequence modeling, which LSTM addresses by maintaining memory across the sequence, thereby improving sequential dependency modeling. This hybrid approach is particularly beneficial for sentiment analysis of tweets, as social media text is often informal and context-dependent, requiring both deep feature extraction and sequential understanding. Moreover, BERT-LSTM outperforms traditional ML models such as DTC, MNB and RFC, as well as standalone DL models such as BERT, by leveraging both powerful feature representations and sequential learning, ultimately leading to higher classification accuracy.

Fine-tuning process

We fine-tune the proposed BERT-LSTM model and the other DL models to maximize their performance on our task. Fine-tuning adapts a pre-trained model, such as BERT, to a new task by further training it on the target dataset with a low learning rate. This preserves the general knowledge acquired during large-scale pre-training while allowing the model to adjust its weights and learn domain-specific features. In the BERT-LSTM model, the pre-trained BERT model (bert-base-uncased) serves as the encoder that produces rich, context-aware embeddings of the input text. A bidirectional LSTM layer is then applied to these embeddings to capture sequential dependencies in the data. The LSTM output sequence is reduced to a fixed-length vector by max pooling, concatenated with BERT's [CLS] token output, and passed through a fully connected layer for classification. We fine-tune BERT-LSTM with the AdamW optimizer, using a learning rate of \(5 \times 10^{-5}\) and a weight decay of \(1 \times 10^{-4}\) to promote effective training while limiting overfitting. The loss is computed with the cross-entropy loss function, and the model's parameters are updated via backpropagation over 20 training epochs. After each epoch, the model's generalization is evaluated on the validation set, and we track key performance indicators, including accuracy, recall, and F1 score. The model with the highest validation accuracy is selected for final assessment.
In summary, fine-tuning the BERT-LSTM and BERT models involves adjusting the pre-trained weights to suit the specific characteristics of our target dataset, ultimately improving their performance for our text classification task.
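The epoch-selection rule described above can be sketched independently of any framework. In PyTorch, each training epoch would typically use `torch.optim.AdamW(model.parameters(), lr=5e-5, weight_decay=1e-4)` with `torch.nn.CrossEntropyLoss()`, and the saved state would be a copy of `model.state_dict()`. Here `train_one_epoch` and `evaluate` are hypothetical placeholder callables, and the accuracies in the example are simulated, not results from this study.

```python
def select_best_epoch(train_one_epoch, evaluate, epochs=20):
    """Run `epochs` training epochs and keep the one with the highest
    validation accuracy. train_one_epoch(epoch) -> model state;
    evaluate(state) -> validation accuracy (both hypothetical)."""
    best_epoch, best_acc, best_state = None, float("-inf"), None
    for epoch in range(1, epochs + 1):
        state = train_one_epoch(epoch)
        acc = evaluate(state)
        if acc > best_acc:                 # strictly better, so the earliest epoch wins ties
            best_epoch, best_acc, best_state = epoch, acc, state
    return best_epoch, best_acc, best_state


# Simulated validation accuracies standing in for real training runs.
fake_val_acc = {1: 0.71, 2: 0.83, 3: 0.80}
result = select_best_epoch(lambda e: e, lambda state: fake_val_acc[state], epochs=3)
print(result)  # (2, 0.83, 2)
```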

Hyperparameters

Hyperparameter tuning is essential for maximizing model performance. Based on previous research and practical assessment, we selected and adjusted the hyperparameters for both the ML and DL models in this work. We trained RFC with a learning rate of 0.001 and the DTC, MNB, and LRC models with a learning rate of 0.1. Each ML model was trained with the SGD optimizer for 100 epochs using a batch size of 32. For the DL model, we used the BERT-LSTM architecture, which combines BERT for contextual feature extraction with an LSTM layer for sequential learning. It was optimized with AdamW using a learning rate of \(5 \times 10^{-5}\) and a weight decay of \(1 \times 10^{-4}\). A dropout rate of 0.25 was used for regularization, the LSTM layer has 768 hidden units, and ReLU was used as the activation function. The sequence length was set to 128 tokens for consistent text processing, and a batch size of 64 was selected for stable gradient updates. To balance convergence and computational efficiency, the model was trained for 20 epochs, and the checkpoint from the best-performing epoch was saved for evaluation. These hyperparameter choices were evaluated to improve generalization across the binary, three-class, and five-class sentiment classification tasks.

Explanation of hyperparameters

-

Learning rate: The learning rate controls how much the model updates its parameters during training. For machine learning models such as Decision Tree Classifier (DTC), Multinomial Naïve Bayes (MNB), and Logistic Regression Classifier (LRC), a learning rate of 0.1 is used. In contrast, Random Forest Classifier (RFC) utilizes a learning rate of 0.001. For deep learning models, a much smaller learning rate of \(5 \times 10^{-5}\) is chosen to maintain stability during fine-tuning.

-

Batch size: The batch size defines how many samples are processed in one training iteration. The ML models use a batch size of 32, whereas BERT-LSTM uses a larger batch size of 64, which gives more stable gradient estimates per update at the cost of higher memory use.

-

Epochs: This parameter indicates how many times the entire training set is passed through the model. The ML models are trained for 100 epochs, whereas deep learning models such as BERT-LSTM are trained for 20 epochs because of their high computational cost; the best-performing epoch is selected based on validation results.

-

Optimizer: The optimizer updates the model parameters to minimize the loss. The ML models use Stochastic Gradient Descent (SGD), whereas the deep learning models use AdamW, which adapts the step size for each parameter and applies weight decay separately from the gradient update, making it well suited to fine-tuning large architectures such as BERT.

-

Weight decay: This regularization technique helps prevent overfitting by penalizing large parameter values. It is especially important for deep learning models like BERT-LSTM, where a weight decay value of \(1 \times 10^{-4}\) is applied.

-

Dropout rate: Dropout is a method used to improve generalization by randomly deactivating a portion of neurons during training. In the BERT-LSTM model, a dropout rate of 0.25 is employed to mitigate overfitting.

-

Hidden units: In deep learning, the number of hidden units determines the size of the hidden layers. The LSTM layer in the BERT-LSTM model contains 768 hidden units, aligning with the pre-trained hidden state size of BERT.

-

Activation function: The activation function determines how neuron outputs are transformed. In the BERT-LSTM model, the ReLU (Rectified Linear Unit) function is applied, introducing non-linearity into the network.

-

Sequence length: This parameter specifies the number of tokens processed in each input sequence. For BERT-LSTM, a sequence length of 128 is chosen, which is a standard setting for fine-tuning BERT in text classification tasks.

These hyperparameters have been carefully tuned to enhance the performance of both machine learning and deep learning models. The selected values, particularly for deep learning models, are based on prior research and experimental validation, ensuring a balance between computational efficiency and model effectiveness.