From monitoring and access control to smart factories and predictive maintenance, the deployment of artificial intelligence (AI) built around machine learning (ML) models is becoming widespread in industrial IoT edge processing applications. This proliferation has “democratized” building AI-enabled solutions, moving them from being the domain of data scientists to something that embedded system designers can understand.

The challenge with such democratization is that designers are not always fully equipped to define the problem to be solved and to best capture and organize the data. Unlike consumer solutions, there are few datasets for industrial AI implementations, so they often have to be created from scratch from users' data.

Into the mainstream

AI has gone mainstream, and deep learning and machine learning (DL and ML, respectively) are behind many applications we now take for granted, including natural language processing, computer vision, predictive maintenance, and data mining. Early AI implementations were cloud or server-based, requiring significant processing power, storage, and high bandwidth between the AI/ML application and the edge (endpoint). While generative AI applications such as ChatGPT, DALL-E, and Bard still require such a setup, recent years have seen the emergence of edge processing AI, where data is processed in real-time at the point of capture.

Edge processing significantly reduces dependence on the cloud, making the entire system/application faster, less power-hungry, and less costly. Many believe that security is improved as well, but it may be more accurate to say that the primary security focus shifts from protecting communications in transit between the cloud and endpoints to making edge devices more secure.

AI/ML at the edge can be implemented in traditional embedded systems, giving designers access to powerful microprocessors, graphical processing units, and plentiful memory, in other words, PC-like resources. However, there is a growing demand for IoT devices (commercial and industrial) powered by AI/ML at the edge, and these typically have limited hardware resources and are often battery-powered.

The potential for AI/ML at the edge, running on resource- and power-constrained hardware, gave rise to the term TinyML. Use cases include industry (e.g., predictive maintenance), building automation (environmental monitoring), construction (monitoring worker safety), and security.

Data flow

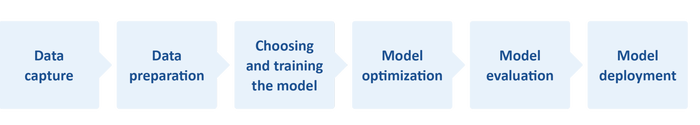

AI (and its subset ML) requires a workflow from data capture/collection to model deployment (Figure 1). When it comes to TinyML, optimization of the entire workflow is essential due to limited resources within embedded systems.

For example, typical TinyML resource requirements are a processing speed of 1-400 MHz, 2-512 KB of RAM, and 32 KB-2 MB of storage (flash). The power budget is also small, from 150 μW to 23.5 mW, and operating within it is often difficult.
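To make these budgets concrete, the short sketch below estimates how much flash a model's weights alone would occupy. The helper names, the one-million-parameter model, and the comparison of 32-bit with 8-bit weights are illustrative assumptions, not figures from any particular device or tool.

```python
# Rough flash-budget check for a model's weights (illustrative values only).

FLASH_BUDGET_BYTES = 2 * 1024 * 1024  # upper end of the 32 KB-2 MB range above


def weight_bytes(num_params: int, bits_per_weight: int) -> int:
    """Bytes needed to store the weights alone, rounded up to a whole byte."""
    return (num_params * bits_per_weight + 7) // 8


def fits_in_flash(num_params: int, bits_per_weight: int,
                  flash_bytes: int = FLASH_BUDGET_BYTES) -> bool:
    """True if the weights fit; ignores code, buffers, and other firmware."""
    return weight_bytes(num_params, bits_per_weight) <= flash_bytes


if __name__ == "__main__":
    params = 1_000_000  # hypothetical model size
    for bits in (32, 8):
        size_kb = weight_bytes(params, bits) / 1024
        print(f"{bits}-bit weights: {size_kb:.0f} KB, "
              f"fits in 2 MB: {fits_in_flash(params, bits)}")
```

A real budget would also have to leave room for application code and runtime buffers, so a check like this is only a first filter.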

Figure 1. Simplified AI workflow. Model deployment itself requires data to be fed back into the flow, and in some cases can even affect data collection. (Microchip)

A bigger consideration, or rather trade-off, arises when incorporating AI into resource-constrained embedded systems. Models are crucial to system behavior, but designers often have to compromise between model quality/accuracy, which affects system reliability and dependability, and performance, mainly operating speed and power consumption.

Another important factor is deciding what type of AI/ML to adopt. Generally, three types of algorithms are available: supervised, unsupervised, and reinforcement.

Creating a workable solution

Even designers with a strong understanding of AI and ML can struggle to optimize each stage of an AI/ML workflow and strike the perfect balance between model accuracy and system performance. So how can an embedded designer with no experience meet the challenge?

First, don't lose sight of the fact that models deployed on resource-constrained IoT devices are efficient when the models are small and the AI tasks are limited to solving simple problems.

Fortunately, the arrival of ML (particularly TinyML) in the embedded systems space has given rise to new (or improved) integrated development environments (IDEs), software tools, architectures, and models, many of which are open source. For example, TensorFlow™ Lite for Microcontrollers (TF Lite Micro) is a free, open-source software library for ML and AI. It was designed to implement ML on devices with just a few KB of memory. Programs can be written in Python, which is also open source and free.

As for IDEs, Microchip's MPLAB® X is one example. It can be used with the company's MPLAB ML, an MPLAB X plug-in developed specifically for building optimized AI IoT sensor recognition code. Powered by AutoML, MPLAB ML fully automates each step of the AI/ML workflow, eliminating the need for repetitive, tedious, and time-consuming model building. Feature extraction, training, validation, and testing ensure an optimized model that meets the memory constraints of a microcontroller or microprocessor, allowing developers to quickly create and deploy ML solutions on Microchip Arm® Cortex-based 32-bit MCUs or MPUs.

Workflow optimization

Workflow optimization tasks can be simplified by starting with ready-made datasets and models. For example, if an ML-enabled IoT device requires image recognition, it makes sense to start with an existing dataset of labeled static images and video clips for model training, testing, and evaluation. Note that supervised ML algorithms require labeled data.

Many image datasets already exist for computer vision applications. However, because they are aimed at PC, server, or cloud-based applications, they tend to be large. For example, ImageNet contains over 14 million annotated images.

Depending on the ML application, only a small subset may be needed, for instance many images of people but only a few of inanimate objects. For example, an ML-enabled camera on a construction site could immediately raise an alarm if someone not wearing a helmet comes into view. The ML model needs to be trained, but possibly with just a few images of people wearing or not wearing helmets. However, a larger dataset may be needed to cover different helmet types, with sufficient range within the dataset to account for factors such as different lighting conditions.
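Carving a task-specific subset out of a larger labeled dataset can be sketched as follows. This is a minimal illustration using only the Python standard library; the file paths, label names, and per-class counts are hypothetical and stand in for whatever a real dataset provides.

```python
import random
from collections import Counter


def select_subset(samples, wanted_labels, per_label, seed=0):
    """Return up to `per_label` (path, label) pairs for each wanted label."""
    rng = random.Random(seed)  # fixed seed keeps the selection repeatable
    by_label = {label: [] for label in wanted_labels}
    for path, label in samples:
        if label in by_label:  # drop classes the application does not need
            by_label[label].append(path)

    subset = []
    for label, paths in by_label.items():
        rng.shuffle(paths)
        subset.extend((path, label) for path in paths[:per_label])
    return subset


# Synthetic stand-in for a large labeled dataset (hypothetical paths/labels).
dataset = (
    [(f"img/helmet_{i}.jpg", "helmet") for i in range(500)]
    + [(f"img/no_helmet_{i}.jpg", "no_helmet") for i in range(300)]
    + [(f"img/vehicle_{i}.jpg", "vehicle") for i in range(2000)]
)

subset = select_subset(dataset, ["helmet", "no_helmet"], per_label=200)
counts = Counter(label for _, label in subset)
print(dict(counts))
```

Capping each class at the same count also keeps the subset balanced, which is one simple way to avoid a model that favors the more common class.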

Having the correct live (data) inputs and dataset, preparing the data, and training the model cover steps 1 through 3 in Figure 1. Model optimization (step 4) is typically a case of compression, which helps reduce memory requirements (RAM during processing and NVM for storage) and processing latency.

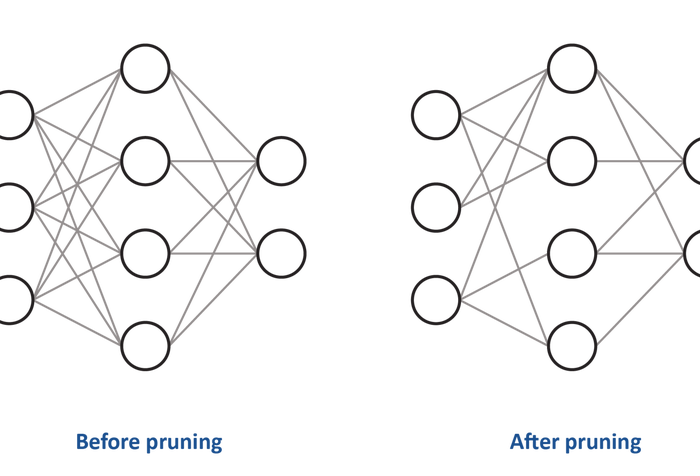

When it comes to processing, many AI algorithms, such as convolutional neural networks (CNNs), become computationally demanding as models grow more complex. A common compression technique is pruning (see Figure 2). Several types exist, including weight pruning, unit/neuron pruning, and iterative pruning.

Figure 2. Pruning reduces the density of a neural network. Above, the weights of some of the connections between neurons are set to zero. (Microchip)
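Weight pruning can be illustrated with a few lines of plain Python. The sketch below uses magnitude-based pruning, one common criterion chosen here as an assumption: it zeroes the requested fraction of weights with the smallest absolute values. The function name and the sample weight matrix are hypothetical.

```python
def prune_by_magnitude(weights, sparsity):
    """Return a copy of a 2-D weight list with the smallest-magnitude
    fraction `sparsity` of its entries set to zero."""
    if not 0.0 <= sparsity <= 1.0:
        raise ValueError("sparsity must be between 0 and 1")

    # Rank every weight position by absolute value, smallest first.
    ranked = sorted(
        (abs(w), r, c)
        for r, row in enumerate(weights)
        for c, w in enumerate(row)
    )
    to_prune = int(len(ranked) * sparsity)

    pruned = [list(row) for row in weights]
    for _, r, c in ranked[:to_prune]:
        pruned[r][c] = 0.0
    return pruned


layer = [[0.8, -0.05, 0.3],
         [0.01, -0.6, 0.2]]

pruned_layer = prune_by_magnitude(layer, sparsity=0.5)
zeros = sum(w == 0.0 for row in pruned_layer for w in row)
print(pruned_layer, f"zeroed {zeros} of 6 weights")
```

In practice, pruning is usually followed by some retraining so the remaining weights can compensate, and the zeros only save memory if the model is then stored in a sparse or compressed form.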

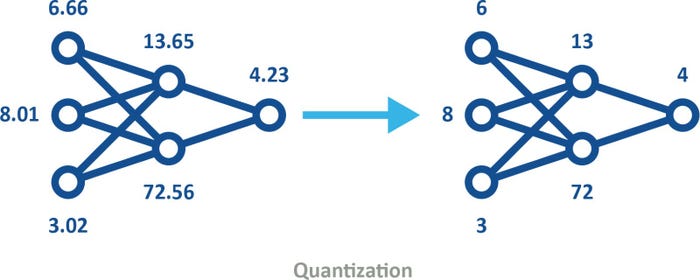

Quantization is also a common compression technique. This process converts data from a high-precision format, such as 32-bit floating point (FP32), to a lower-precision format, such as 8-bit integer (INT8). The use of quantized models (see Figure 3) can be factored into model training in one of two ways.

•Post-training quantization involves training a model in a format such as FP32 and, once training is considered complete, quantizing it for deployment. For example, standard TensorFlow can be used to train and optimize the initial model on a PC. The model can then be quantized and, through TensorFlow Lite, embedded into the IoT device.

•Quantization-aware training emulates inference-time quantization during training, creating a model that downstream tools then use to produce quantized models.

Figure 3. Quantized models use lower precision, reducing memory and storage requirements and increasing energy efficiency while maintaining the same shape. (Microchip)
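The FP32-to-INT8 conversion described above can be sketched in plain Python. This is a minimal illustration of a commonly used affine scheme (a scale factor plus a zero point), written as an assumption about how such a mapping can work rather than as the exact method of any particular toolchain. The function names and sample weights are hypothetical.

```python
def quantize_int8(values):
    """Map floats to signed 8-bit integers using a scale and zero point."""
    lo = min(min(values), 0.0)  # the representable range must include 0.0
    hi = max(max(values), 0.0)
    scale = (hi - lo) / 255
    if scale == 0.0:            # all values are zero
        scale = 1.0
    zero_point = round(-128 - lo / scale)
    quantized = [
        max(-128, min(127, round(v / scale) + zero_point)) for v in values
    ]
    return quantized, scale, zero_point


def dequantize(quantized, scale, zero_point):
    """Recover approximate float values from the 8-bit representation."""
    return [(q - zero_point) * scale for q in quantized]


weights = [-0.5, -0.25, 0.0, 0.1, 0.25, 0.75]
q, scale, zero_point = quantize_int8(weights)
restored = dequantize(q, scale, zero_point)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print("int8 values:", q)
print(f"scale={scale:.6f}, zero_point={zero_point}, max error={max_error:.6f}")
```

Each weight now needs one byte instead of four, at the cost of a rounding error of at most about half the scale step per value.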

Although quantization is useful, it should not be used excessively, as it is analogous to compressing a digital image by representing colors with fewer bits or by using fewer pixels.
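The cost of overly aggressive quantization can be seen by rounding the same values to progressively coarser grids. The sketch below is an illustration under stated assumptions: it uses a simple symmetric scheme, and the synthetic "weights" are just samples of a sine wave, not data from a real model.

```python
import math


def fake_quantize(values, bits):
    """Round values to a signed `bits`-wide grid, then map back to floats."""
    if bits < 2:
        raise ValueError("need at least 2 bits for a signed grid")
    qmax = 2 ** (bits - 1) - 1          # e.g. 127 for 8 bits
    peak = max(abs(v) for v in values)
    if peak == 0.0:
        return list(values)
    scale = peak / qmax
    return [round(v / scale) * scale for v in values]


def mean_abs_error(values, bits):
    """Average absolute difference introduced by quantizing to `bits` bits."""
    approx = fake_quantize(values, bits)
    return sum(abs(a - v) for a, v in zip(approx, values)) / len(values)


# Synthetic stand-in for a layer's weights.
weights = [math.sin(0.37 * i) for i in range(200)]

errors = {bits: mean_abs_error(weights, bits) for bits in (8, 4, 2)}
for bits, err in errors.items():
    print(f"{bits}-bit: mean absolute error {err:.4f}")
```

The error grows as the bit width shrinks, which is the numerical counterpart of the image-compression analogy.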

Summary

AI is now really taking off in the embedded systems space. However, this democratization means that design engineers who have not previously had to understand AI or ML will now face the challenge of implementing AI-based solutions into their designs.

The challenge of creating ML applications may be daunting, but it's not new, at least for experienced embedded system designers. The good news is that there is a wealth of information (and training) available within the engineering community, along with IDEs like MPLAB X, model builders like MPLAB ML, and open-source datasets and models. This ecosystem helps engineers at various levels understand AI and ML solutions that can be implemented on 16-bit and even 8-bit microcontrollers.

Yann LeFaou is an Associate Director in Microchip's Touch and Gesture Business Unit. In this role, LeFaou leads the team developing capacitive touch technology and also drives the company's machine learning (ML) initiatives for microcontrollers and microprocessors. He has held a series of technology and marketing roles at Microchip, including leading the company's global marketing efforts for capacitive touch, human-machine interfaces, and consumer electronics technologies. LeFaou holds a degree from ESME Sudria in France.