MosaicML has announced the availability of MPT-30B Base, Instruct, and Chat, the most advanced models in its MPT (MosaicML Pretrained Transformer) series of open-source large language models. Trained with an 8K-token context window, these state-of-the-art models surpass the quality of the original GPT-3 and can be used directly for inference or as a starting point for building custom models. They were trained on the MosaicML platform, in part on NVIDIA’s latest-generation H100 accelerators, which are now available to MosaicML customers. By building on MPT-30B, enterprises can harness the power of generative AI without compromising security or data privacy.

Over 3 million MPT downloads since May

The MosaicML MPT family of models is among the most powerful and popular open-source language models available for commercial use today. Since launching on May 5, 2023, the MPT-7B models (Base, Instruct, Chat, StoryWriter) have been downloaded more than 3.3 million times. The new release extends the MPT family with the larger, higher-quality MPT-30B models, which open up even more applications. As always, MosaicML’s MPT models are optimized for efficient training and inference.

MPT-30B surpasses GPT-3

As we celebrate the third anniversary of GPT-3, it’s worth noting that MPT-30B was designed to exceed the quality of this iconic model. Measured on standard academic benchmarks, MPT-30B outperforms the originally published GPT-3.

Moreover, MPT-30B achieves this quality target with roughly one-sixth the number of parameters: GPT-3 has 175 billion parameters, while MPT-30B has only 30 billion. This makes MPT-30B easier to run on local hardware and much cheaper to deploy for inference. Starting today, developers and businesses can build and deploy their own commercially viable, enterprise-grade models of GPT-3 quality. MPT-30B was also trained at a cost an order of magnitude lower than estimates for the original GPT-3, putting the ability to train custom GPT-3-quality models within the reach of enterprises.
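The parameter comparison above can be checked with a little arithmetic. The sketch below is only an illustration: the parameter counts come from the article, while the 2 bytes per parameter (16-bit weights) is an assumption made here, and the result covers the weights alone, not activations or any other runtime memory.

```python
# Back-of-the-envelope comparison of parameter counts and weight memory.
GPT3_PARAMS = 175e9    # from the article
MPT30B_PARAMS = 30e9   # from the article
BYTES_PER_PARAM = 2    # assumption: 16-bit (fp16/bf16) weights


def weight_memory_gb(num_params: float, bytes_per_param: int = BYTES_PER_PARAM) -> float:
    """Memory needed to hold the weights alone, in GB (1 GB = 1e9 bytes)."""
    return num_params * bytes_per_param / 1e9


# How many times more parameters GPT-3 has than MPT-30B.
param_ratio = GPT3_PARAMS / MPT30B_PARAMS

print(f"GPT-3 has {param_ratio:.1f}x as many parameters as MPT-30B")
print(f"MPT-30B weights: {weight_memory_gb(MPT30B_PARAMS):.0f} GB")
print(f"GPT-3 weights:   {weight_memory_gb(GPT3_PARAMS):.0f} GB")
```

Under these assumptions the ratio is a little under 6, which is where the "one-sixth" figure comes from.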

Finally, MPT-30B was trained on longer sequences (up to 8,000 tokens) than GPT-3, the popular LLaMA family of models, and the recent Falcon model (2,000 tokens each). It is designed to handle longer sequences in practice, making it well suited to data-heavy enterprise applications.

Training on H100 GPUs Now Available to MosaicML Customers

MPT-30B is the first publicly known LLM trained on NVIDIA H100 GPUs, thanks to the flexibility and reliability of the MosaicML platform. Within days of the hardware being delivered, the MosaicML team seamlessly moved the MPT-30B training run from its original A100 cluster to the new H100 cluster, increasing throughput per GPU by more than 2.4x and shortening the time to completion. MosaicML is committed to bringing the latest advances in hardware and software to all businesses, enabling them to train models faster and at a lower cost than ever before.

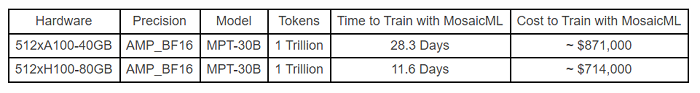

Time and cost to pre-train MPT-30B from scratch on 1 trillion tokens.

The H100 time is extrapolated from the 256xH100 system. Costs are based on the current price of $2.50/A100-40GB/hour and $5.00/H100-80GB/hour for MosaicML reserved clusters as of June 22, 2023. Costs are subject to change.
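As a rough illustration of how the quoted prices and the reported speedup interact, the sketch below computes the cost of a run as GPUs x hours x price per GPU-hour. The hourly prices and the 2.4x per-GPU throughput figure come from the article; the cluster size and the A100 duration are hypothetical placeholders (they are not the values in the figure), and the code assumes the throughput gain translates directly into a proportionally shorter run.

```python
A100_PRICE = 2.50    # USD per A100-40GB GPU-hour (from the article)
H100_PRICE = 5.00    # USD per H100-80GB GPU-hour (from the article)
H100_SPEEDUP = 2.4   # per-GPU throughput gain reported in the article


def run_cost(num_gpus: int, hours: float, price_per_gpu_hour: float) -> float:
    """Total cost of a run: GPUs x wall-clock hours x hourly price per GPU."""
    return num_gpus * hours * price_per_gpu_hour


# Hypothetical run, for illustration only.
num_gpus = 256
a100_hours = 240.0

# Assumption: the same job finishes H100_SPEEDUP times faster on H100s.
h100_hours = a100_hours / H100_SPEEDUP

a100_cost = run_cost(num_gpus, a100_hours, A100_PRICE)
h100_cost = run_cost(num_gpus, h100_hours, H100_PRICE)

print(f"A100: {a100_hours:.0f} h, ${a100_cost:,.0f}")
print(f"H100: {h100_hours:.0f} h, ${h100_cost:,.0f}")
print(f"H100 cost relative to A100: {h100_cost / a100_cost:.2f}")
```

With an hourly price twice as high and a run 2.4 times shorter, the H100 run costs about 2 / 2.4 of the A100 run under these assumptions, regardless of the placeholder cluster size and duration.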

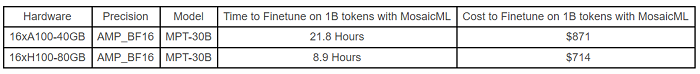

Time and cost to fine-tune MPT-30B on smaller systems.

Costs are based on the current price of $2.50/A100-40GB/hour and $5.00/H100-80GB/hour for MosaicML reserved clusters as of June 22, 2023. Costs are subject to change.

MosaicML MPT: Powering the New Generation of AI Applications

Companies have deployed MPT models for use cases such as code completion and dialog generation, and fine-tuned these models using their own data.

Replit, the world’s leading web-based IDE, was able to build a new code generation model in just three days using its own data and MosaicML’s training platform. Their custom MPT model, replit-code-v1-3b, has significantly improved the GhostWriter product’s performance in terms of speed, cost and code quality.

Scatter Lab, a cutting-edge AI startup building “social AI chatbots” that enable engaging human-like conversations, trained its own MPT model from scratch to power custom chatbots. The model is one of the first multilingual generative AI models that can understand both English and Korean, enabling a new chat experience for 1.5 million users.

Navan, a global travel and expense management software company, is building a custom LLM on top of the MPT foundation.

“At Navan, we use generative AI across our products and services to power experiences such as virtual travel agents and conversational business intelligence agents. We are excited to see how this promising technology develops,” said Ilan Twig, Navan co-founder and chief technology officer.

MPT-30B is designed to accelerate model development for companies that want to build their own language models for chat, question answering, summarization, extraction, and other language applications.

How Developers Can Use MPT-30B Today

MPT-30B is fully open source and available for download through the HuggingFace Hub. Developers can fine-tune MPT-30B on their own data or deploy the model for inference on their own infrastructure. For a faster and easier experience, developers can run inference through MosaicML’s managed endpoint for MPT-30B-Instruct, which frees them from having to secure GPU capacity and takes care of the serving infrastructure. MPT-30B-Instruct is priced at $0.005 per 1,000 tokens, which is 4 to 6 times cheaper than comparable endpoints such as OpenAI DaVinci. For technical details, see the MPT-30B blog.
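To make the endpoint pricing concrete, here is a minimal sketch of the arithmetic. The $0.005 per 1,000 tokens price and the 4x to 6x multiples come from the article; the monthly token volume is a hypothetical figure chosen only for illustration, and the "comparable endpoint" prices are simply what those multiples imply, not quoted list prices.

```python
PRICE_PER_1K_TOKENS = 0.005  # USD, MPT-30B-Instruct endpoint (from the article)


def inference_cost(num_tokens: int, price_per_1k: float = PRICE_PER_1K_TOKENS) -> float:
    """Cost in USD of processing num_tokens at a per-1,000-token price."""
    return num_tokens / 1000 * price_per_1k


# Hypothetical workload, for illustration only.
monthly_tokens = 50_000_000

mpt_cost = inference_cost(monthly_tokens)

# Prices implied by the article's "4 to 6 times cheaper" claim.
implied_low = inference_cost(monthly_tokens, PRICE_PER_1K_TOKENS * 4)
implied_high = inference_cost(monthly_tokens, PRICE_PER_1K_TOKENS * 6)

print(f"MPT-30B-Instruct endpoint: ${mpt_cost:,.2f} per month")
print(f"Implied comparable endpoint: ${implied_low:,.2f} to ${implied_high:,.2f} per month")
```

Actual bills depend on how a provider counts prompt and completion tokens, which this sketch does not model.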
