April 30, 2026


Ever since physical AI became the buzzword of the moment, I’ve been a little uncomfortable with the term.

As commonly defined, physical AI refers to the intersection of AI and the physical world, and the computational processes that allow AI to sense, control, and operate in the real world.

The decisions and actions involved are based on sensor data (read: sensor fusion) and on statistical analysis of historical data and models trained on that data (read: machine learning and AI). All of this leads to commands that are sent to the robot, control system, valves, actuators, and all the rest of the devices at the edge.
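To make that pipeline concrete, here is a minimal sketch of one pass through it: sense, fuse, decide, command. Everything in it is an assumption made for illustration. The names (`Command`, `fuse`, `decide`, `control_step`), the temperature sensors, the setpoint, and the gain are hypothetical, and a simple proportional rule stands in for whatever trained model a real system would use.

```python
from dataclasses import dataclass
from statistics import fmean


@dataclass
class Command:
    """Hypothetical actuator command: a valve opening between 0.0 and 1.0."""
    valve_opening: float


def fuse(readings: list[float]) -> float:
    """Sensor fusion, reduced here to averaging redundant temperature sensors."""
    return fmean(readings)


def decide(temperature_c: float, setpoint_c: float = 60.0, gain: float = 0.05) -> Command:
    """Stand-in for the learned model: a proportional rule clamped to [0, 1]."""
    error = temperature_c - setpoint_c
    opening = min(1.0, max(0.0, gain * error))
    return Command(valve_opening=opening)


def control_step(readings: list[float]) -> Command:
    """One pass of the sense -> fuse -> decide -> command pipeline."""
    return decide(fuse(readings))
```

Calling `control_step([69.0, 71.0])` fuses the two readings into one temperature estimate and returns the valve command that would go out to the device at the edge. Swap the proportional rule for a trained model and the shape of the loop stays the same.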

All of that gets called “physical AI,” and I think that’s fine as far as it goes, but my problem boils down to this: there is already a better term for that process and all of its underlying technology. That’s what embedded computing is, and always has been: well-designed computing structures and functionality embedded within a working physical device. What physical AI claims as its differentiator may look like a relatively recent addition to the embedded computing space, but it has been part of embedded since its inception: industrial automation.

And there’s also friction, right? Industrial automation is in a rare boom cycle. In its February 2026 report on the state of industrial automation, PricewaterhouseCoopers (PwC) predicted that “the median proportion of industrial manufacturers implementing highly automated processes is expected to more than double by 2030, rising from 18 percent to 50 percent.” That is a significant increase in a short period of time. What’s more, companies that are currently ahead may extend their lead even as competitors try to catch up.

“As technology adoption and automation accelerate, the advantage will shift from who has the tools to who can deploy them and orchestrate them the fastest. Manufacturers who are nimble, technology-enabled, and fit for the future already have an advantage. The chasm between those who are technology-enabled and those still operating patched systems will widen further,” said Ryan Hawk, global industry and services leader at PwC US.

PwC is also looking at physical AI now, though, to be fair, it doesn’t see the term the way I do. However, if you accept my premise (and I do) that physical AI is just another term for embedded computing, then the future is bright for embedded companies leading the way in automation.

In a March 2026 report, PwC predicts that the global physical AI market could reach nearly $500 billion by 2030, with initial pilots scaling up to commercial deployments in limited domains and early adopter use cases over the next three to five years. Most striking to me is that the report predicts value will be created across the entire ecosystem, so benefits and growth will likely not be limited to robotics companies and automakers, but will also extend to “semiconductor suppliers, cloud and data center operators, simulation and software platform providers, infrastructure players, and end users redesigning their processes around embodied intelligence.”

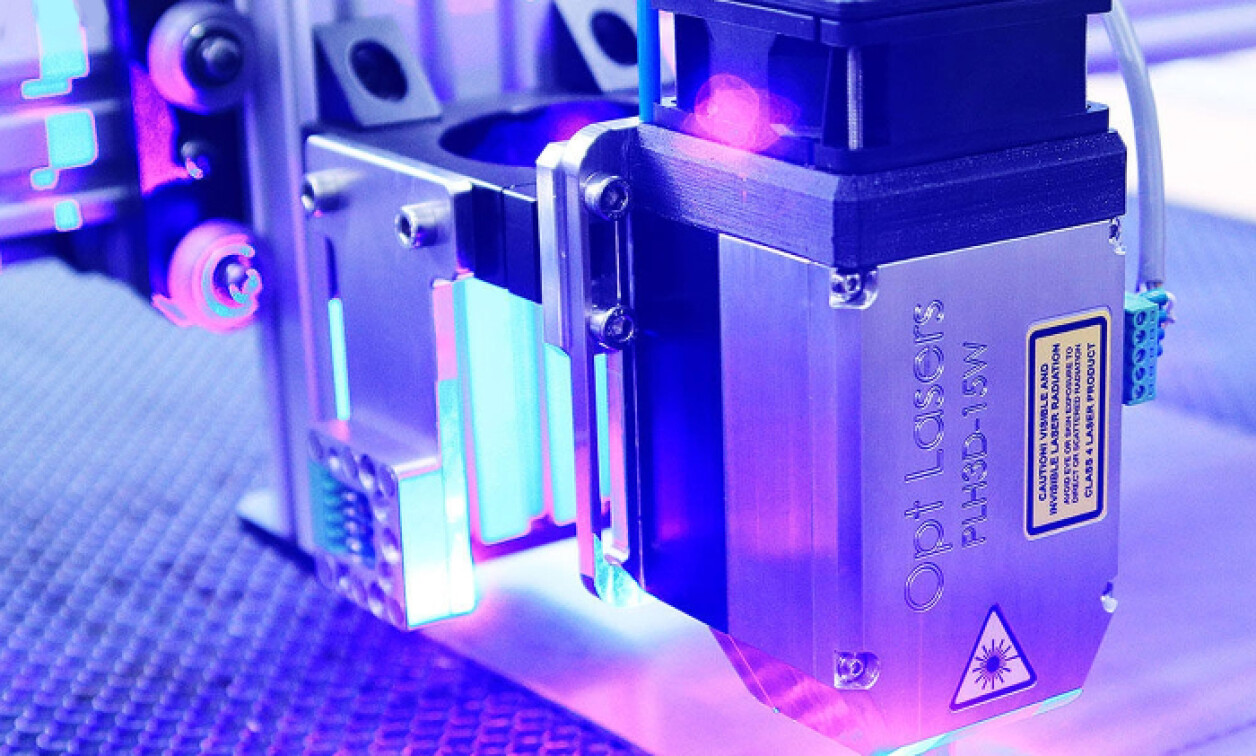

Embedded Computing Design contributing editor Rich Nass wrote about the important intersection of motor control and physical AI in a recent article. This is emblematic of the entire physical AI trend. Every part of it relies on and is deeply intertwined with innovations in embedded technology and industrial automation.

As 2026 continues, look for the intersections between physical and digital, AI and servo motors, embedded computing and machine learning. There is a lot to see.