Evolving threat landscape

Professor Renato Cuocolo

The threat these new technologies pose to healthcare institutions should not be underestimated, emphasizes Professor Renato Cuocolo, a radiologist at the University of Salerno. Reversing the damage caused by data poisoning, for example, requires significant resources. “Once a model is poisoned, you cannot simply delete the poisoned data after the fact,” he explained. “You have to retrain the model from scratch, reimplement it from scratch, and revalidate it. Obviously, the cost is orders of magnitude higher than for traditional software, which can simply be patched.”

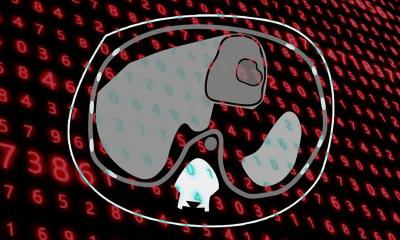

This vulnerability could also be used to escalate ransomware attacks, which are already a serious concern. Rather than encrypting the data, an attacker could corrupt a small portion of a hospital’s files, leaving the hospital with no way of knowing which records are genuine and which have been tampered with.

Another new vulnerability opened up by LLM technology is the possibility of model inversion attacks. “When using generative AI to create synthetic data for research or training purposes, you need to be aware that certain types of prompts can cause the generated data to be a little too close to the real data,” Cuocolo continued. For example, an attacker might ask the AI model to “generate a brain MRI of a 40-year-old man with glioblastoma in hospital X.” If the model has overfit, the attacker may be able to extract not only personal information but also recognizable images of the real patients whose data was used in training. “The model itself becomes an access point,” experts point out. “And it’s more easily accessible than the original data.”