Yesterday, Runway demonstrated a new real-time video AI model that can generate its first frame in less than a tenth of a second. If you feel like the world is already out of control and full of AI shit, wait until you see what’s coming.

To be honest, social media is fooling me more often these days: newsreel-style videos, Donald dancing, Michael Jordan saying things Michael Jordan never said, influencers who never existed. I pride myself on being fairly savvy, but the telltale signs that separate what is generated from what is genuine are starting to blur.

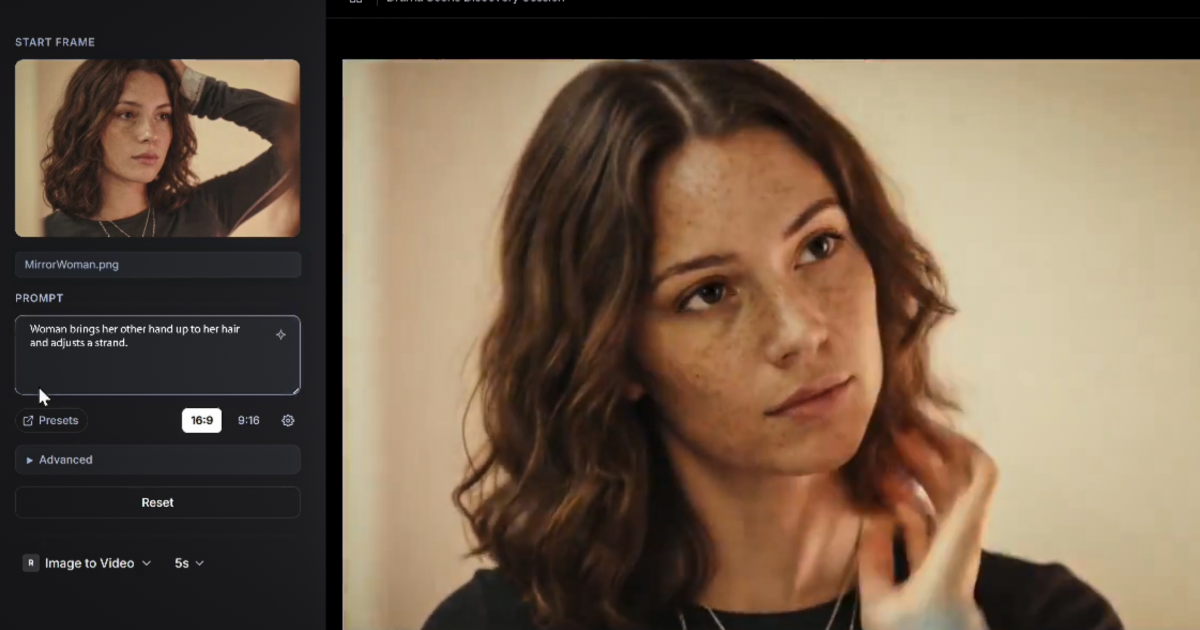

And as a reeling world struggles to come to terms with that problem, here comes the next salvo: AI-generated real-time video. Runway has teamed up with Nvidia to announce an as-yet-unnamed video model that takes less than 100 milliseconds to get from prompt to first frame. For reference, a blink takes between 100 and 400 milliseconds.


Demonstrated yesterday at Nvidia’s GPU Technology Conference (GTC) in San Jose, it’s believed to be the first AI video generation tool that can start streaming instantly at genuinely high resolution. So what does it mean?

Well, on the positive side, it’s definitely a step towards the holodeck-style convergence I’ve been talking about for years, in which we’ll be able to interact in real time with characters, situations, and worlds of our own choosing: the ultimate virtual reality gaming experience, generated frame by frame, à la carte, catering to your every whim. In fact, Runway is one of many companies tackling this very idea through playable world generation.

GWM Real-Time World — 2025 Research Demo Day | Runway

On the downside… well, everything you now recognize as AI will at some point start coming at you in real time, tailored to persuade you, generated using real images as a starting point, and potentially capable of reading and reacting to your body language as you watch. What a strange world we are building for ourselves!

In any case, that will take a minute yet. The Runway demo was run on Nvidia’s Vera Rubin. This supercomputer runs 36 Vera CPUs and 72 Rubin GPUs, with 54 terabytes of CPU memory, 20.7 terabytes of GPU memory, and more teraflops than I can comfortably poke a stick at. It can probably run Crysis.

10 Years of AI Infrastructure Innovation: From NVIDIA DGX-1 to NVIDIA Vera Rubin

That means it’s not yet in the hands of spammers and scammers, but it is absolutely within reach of governments and large businesses, and the hardware requirements are unlikely to remain a barrier for long.

Source: Runway