SK Hynix showcased advanced memory technologies for the era of AI and high-performance computing (HPC) at Supercomputing 2025 (SC25), held in St. Louis, USA, from November 16 to 21.

SC is the world’s largest HPC conference and has been held annually since 1988. Experts from industry, academia, and research institutions gather there to share the latest trends, build opportunities for collaboration, and discuss the future direction of technology. The convergence of AI and HPC emerged as a central topic at this year’s event.

Under the theme of “Memory, AI and Powering Tomorrow,” SK Hynix introduced an innovative memory lineup that leads the AI and HPC markets, and shared a new technology vision to accelerate data analysis in computing systems.

Visitors touring the SK Hynix booth

Innovative products and technologies that drive AI and HPC performance

At the event, SK Hynix showcased its key products, including HBM¹, DRAM, and eSSD² solutions. Visitors to the booth could also see product demonstrations in AI and HPC environments that highlighted the company’s technological competitiveness.

¹High Bandwidth Memory (HBM): A high-value, high-performance memory product that vertically interconnects multiple DRAM chips to increase capacity and dramatically speed up data processing. HBM has six generations: the original HBM, followed by HBM2, HBM2E, HBM3, HBM3E, and HBM4.

²Enterprise solid-state drive (eSSD): An enterprise-grade SSD used in servers and data centers.

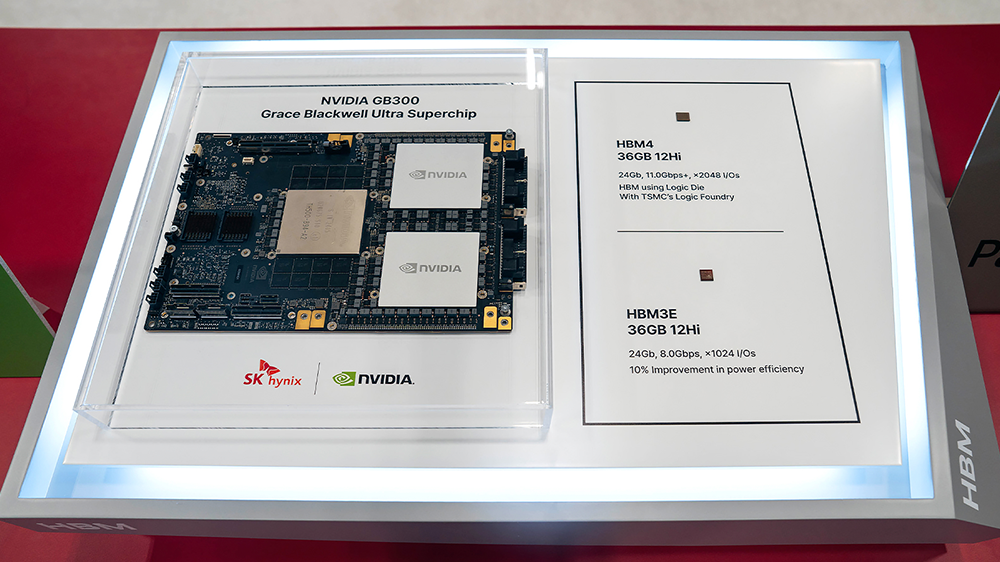

At the front of the booth, SK Hynix displayed its latest HBM products, including the world’s first 12-layer HBM4, developed in September 2025. HBM4 has 2,048 input/output (I/O) channels, twice as many as the previous generation, which significantly increases bandwidth. With more than 40% better power efficiency, HBM4 is an ideal solution for ultra-high-performance AI computing systems.
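Peak bandwidth per HBM stack is the number of I/O channels times the per-pin data rate, and capacity is the number of stacked dies times the density of each die. The sketch below works through that arithmetic. The 8 Gbps per-pin rate and the 24 Gb die density are illustrative assumptions, not figures from this article; the 1,024 I/O count for the previous generation follows from the “twice the number” statement above.

```python
# Illustrative HBM arithmetic. The pin rate and die density are ASSUMED values.

def stack_bandwidth_gbytes_per_s(io_count: int, pin_rate_gbps: float) -> float:
    """Peak bandwidth of one HBM stack in GB/s (total bits per second / 8)."""
    return io_count * pin_rate_gbps / 8

def stack_capacity_gbytes(layers: int, die_gbit: int) -> float:
    """Capacity of one stack: number of stacked DRAM dies times per-die density."""
    return layers * die_gbit / 8

ASSUMED_PIN_RATE_GBPS = 8.0   # hypothetical per-pin rate, held equal for both generations
ASSUMED_DIE_GBIT = 24         # hypothetical per-die density

prev_gen = stack_bandwidth_gbytes_per_s(1024, ASSUMED_PIN_RATE_GBPS)
hbm4 = stack_bandwidth_gbytes_per_s(2048, ASSUMED_PIN_RATE_GBPS)
capacity = stack_capacity_gbytes(12, ASSUMED_DIE_GBIT)
print(f"1,024 I/O: {prev_gen:.0f} GB/s")
print(f"2,048 I/O: {hbm4:.0f} GB/s")
print(f"12-layer stack: {capacity:.0f} GB")
```

Holding the pin rate fixed isolates the effect of the wider interface: doubling the I/O count doubles peak bandwidth (here 1,024 GB/s versus 2,048 GB/s). Real products run at different per-pin rates, so actual figures will differ.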

SK Hynix also exhibited 12-layer HBM3E, the highest-performance commercial HBM currently available on the market, alongside NVIDIA’s next-generation GB300 Grace™ Blackwell GPU.

A variety of advanced DDR5-based server DRAM modules

The DRAM section featured DDR5-based modules for the next-generation server market. These included RDIMM³ and MRDIMM⁴ products built on the 1c⁵ node, the sixth generation of 10 nm-class process technology, as well as a 256 GB 3DS⁶ DDR5 RDIMM and a 256 GB DDR5 Tall MRDIMM. These solutions deliver faster speeds and improved power efficiency in high-performance systems, supporting the stable operation of servers and data centers.

³Registered Dual In-line Memory Module (RDIMM): A server memory module that carries multiple DRAM chips.

⁴Multiplexed Rank Dual In-line Memory Module (MRDIMM): A module that increases speed by operating two ranks, the module’s basic operating units, at the same time.

⁵1c: The sixth generation of 10 nm-class process technology, which has evolved through 1x, 1y, 1z, 1a, 1b, and 1c.

⁶3D stacked memory (3DS): High-performance memory in which two or more DRAM chips are interconnected using through-silicon via (TSV) technology.
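The MRDIMM description above can be made concrete with bandwidth arithmetic. A DDR5 module moves 64 data bits (8 bytes) per transfer, not counting ECC bits. The sketch below assumes each rank runs at a hypothetical 4,400 MT/s and that the module’s buffer interleaves the two simultaneously operating ranks onto the host bus at twice that rate; both numbers are illustrative, not specifications of the products shown at SC25.

```python
# Illustrative MRDIMM bandwidth arithmetic; the per-rank transfer rate is an ASSUMPTION.

BYTES_PER_TRANSFER = 8  # 64-bit DDR5 data width, ECC bits excluded

def module_bandwidth_gbytes_per_s(rate_mts: int) -> float:
    """Peak module bandwidth in GB/s for a given transfer rate in MT/s."""
    return rate_mts * BYTES_PER_TRANSFER / 1000

ASSUMED_RANK_RATE_MTS = 4400  # hypothetical speed of each rank

single_rank = module_bandwidth_gbytes_per_s(ASSUMED_RANK_RATE_MTS)
# Two ranks operating at the same time, multiplexed onto the host bus at 2x the rate.
multiplexed = module_bandwidth_gbytes_per_s(2 * ASSUMED_RANK_RATE_MTS)
print(f"one rank: {single_rank:.1f} GB/s, multiplexed: {multiplexed:.1f} GB/s")
```

Under these assumptions, one rank alone delivers 35.2 GB/s while the multiplexed module delivers 70.4 GB/s, which is the speed gain the footnote describes.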

High-performance eSSD products that achieve large capacity

SK Hynix also exhibited a variety of high-capacity, high-performance eSSDs. Among the solutions on display were the PS1010 E3.S and PE9010 M.2 based on 176-layer 4D NAND, the PEB110 E1.S built on 238-layer NAND, the PS1012 U.2 based on QLC⁷ NAND, and the 245 TB PS1101 E3.L built on the industry’s highest-layer 321-layer QLC NAND.

⁷Quad-level cell (QLC): NAND flash is classified by how many bits of data a single cell, the smallest unit of storage, can hold: single-level cell (SLC, 1 bit), multi-level cell (MLC, 2 bits), triple-level cell (TLC, 3 bits), QLC (4 bits), and penta-level cell (PLC, 5 bits).
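Each additional bit per cell doubles the number of charge levels a cell must reliably distinguish, while capacity for the same number of cells grows only linearly. The short sketch below tabulates this; it is plain arithmetic on the cell types listed above, not a model of any specific SK Hynix product.

```python
# Bits stored per cell for each NAND cell type.
CELL_TYPES = {"SLC": 1, "MLC": 2, "TLC": 3, "QLC": 4, "PLC": 5}

for name, bits in CELL_TYPES.items():
    states = 2 ** bits                            # distinct charge levels per cell
    capacity_vs_slc = bits / CELL_TYPES["SLC"]    # same cell count, more bits per cell
    print(f"{name}: {bits} bit(s), {states} states, {capacity_vs_slc:.0f}x SLC capacity")
```

QLC must separate 16 charge levels in each cell yet stores only a third more data than TLC (4 bits versus 3) from the same cells, which is why high-capacity QLC drives depend on advanced NAND and controller technology.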

Beyond high capacity, these products support high-speed data processing through PCIe 4.0 and PCIe 5.0 interfaces. SK Hynix also presented a storage portfolio covering a wide range of server environments, including the SE5110, which uses the SATA3 interface common in entry-level servers and PCs.
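The gap between these interfaces can be estimated from their per-lane signaling rates and line encodings: 16 GT/s (PCIe 4.0) and 32 GT/s (PCIe 5.0) per lane with 128b/130b encoding, versus 6 Gb/s with 8b/10b encoding for SATA3. The sketch below assumes a x4 PCIe link, which is common for NVMe SSDs but not stated in the article, and it ignores protocol overhead, so real drive throughput is lower.

```python
# Usable link rate after line encoding. The x4 lane count is an ASSUMPTION;
# protocol overhead (packet headers, command traffic) is ignored.

def link_gbytes_per_s(gtransfers_per_s: float, lanes: int,
                      payload_bits: int, line_bits: int) -> float:
    """Link rate in GB/s after encoding overhead."""
    return gtransfers_per_s * lanes * payload_bits / line_bits / 8

links = {
    "PCIe 4.0 x4": link_gbytes_per_s(16, 4, 128, 130),  # 128b/130b encoding
    "PCIe 5.0 x4": link_gbytes_per_s(32, 4, 128, 130),
    "SATA3": link_gbytes_per_s(6, 1, 8, 10),            # 8b/10b encoding
}
for name, rate in links.items():
    print(f"{name}: {rate:.2f} GB/s")
```

This gives roughly 7.9 GB/s for PCIe 4.0 x4, 15.8 GB/s for PCIe 5.0 x4, and 0.6 GB/s for SATA3. That spread explains why SATA drives like the SE5110 target entry-level systems while PCIe drives serve data-intensive servers.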

In addition to its product exhibits, SK Hynix demonstrated next-generation solutions and highlighted future technology applications. First, together with semiconductor design company Montage Technology, it demonstrated a heterogeneous memory system that combines CXL⁸ Memory Module-DDR5 (CMM-DDR5) and MRDIMM. The system highlighted scalable memory capacity and improved overall system performance.

⁸Compute Express Link (CXL): An interface technology that efficiently connects memory, processors, and other components in a system, expanding bandwidth and capacity beyond existing limits.
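One common way to use a heterogeneous memory system like this is tiering: keep frequently accessed pages in the faster, directly attached tier and place colder pages in the larger CXL-attached tier. The sketch below shows a simple hotness-based placement policy. The tier names, capacities, and access counts are hypothetical, and this is not a description of how the SK Hynix and Montage Technology demonstration manages memory.

```python
from dataclasses import dataclass

@dataclass
class Tier:
    name: str
    capacity_pages: int

def place_pages(access_counts: dict[str, int], fast: Tier, slow: Tier) -> dict[str, str]:
    """Assign the hottest pages to the fast tier and the rest to the slow tier."""
    # Sort page IDs by access count, most frequently accessed first.
    ranked = sorted(access_counts, key=access_counts.get, reverse=True)
    if len(ranked) > fast.capacity_pages + slow.capacity_pages:
        raise ValueError("not enough total capacity for all pages")
    placement = {}
    for i, page in enumerate(ranked):
        placement[page] = fast.name if i < fast.capacity_pages else slow.name
    return placement

# Hypothetical tiers: a small fast MRDIMM tier and a larger CXL (CMM-DDR5) tier.
fast = Tier("MRDIMM", capacity_pages=2)
slow = Tier("CMM-DDR5", capacity_pages=4)
counts = {"p0": 50, "p1": 3, "p2": 120, "p3": 7, "p4": 1}
placement = place_pages(counts, fast, slow)
print(placement)
```

Real tiering systems track access frequency continuously and migrate pages as workloads shift; this static version only shows the placement decision itself.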

Another demonstration featured the CXL Memory Module Accelerator (CMM-Ax), which integrates computational functions into memory, and showed its potential when integrated with FAISS⁹, Meta’s vector search engine. The successful application of CMM-Ax to SK Telecom’s Petasus AI Cloud further highlighted its potential in future AI infrastructure.

⁹Facebook AI Similarity Search (FAISS): A vector search engine developed by Meta. Unlike traditional keyword-based search, which requires exact search terms, it uses vectors (numerical representations of data such as text and images) to capture the meaning and context of a query and return more relevant results.
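The core operation behind vector search is finding the stored vectors most similar to a query vector. The sketch below does this by exhaustive comparison using cosine similarity, the simplest form of the search that FAISS supports alongside much faster approximate indexes. It does not use the FAISS API, and the three-dimensional “embeddings” are made up. It only illustrates the memory-heavy scan that a near-memory accelerator such as CMM-Ax could, in principle, take over.

```python
import heapq
import math

def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm

def top_k(query: list[float], database: dict[str, list[float]], k: int) -> list[tuple[str, float]]:
    """Exhaustively score every stored vector and return the k most similar."""
    scored = ((cosine(query, vec), key) for key, vec in database.items())
    best = heapq.nlargest(k, scored)
    return [(key, score) for score, key in best]

# Hypothetical 3-dimensional embeddings for a few documents.
database = {
    "doc_memory": [0.9, 0.1, 0.0],
    "doc_storage": [0.7, 0.6, 0.1],
    "doc_cooking": [0.0, 0.2, 0.9],
}
result = top_k([1.0, 0.2, 0.0], database, k=2)
print(result)
```

Every query touches every stored vector, so the cost is dominated by reading data from memory rather than by arithmetic. That is the property that makes computation near memory attractive for this workload.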

SK Hynix also demonstrated a memory-centric AI machine based on CXL pooled memory¹⁰, which connects multiple servers and GPUs without a network to support distributed inference for large language models (LLMs).

¹⁰CXL pooled memory: A technology that allows multiple hosts (CPUs and GPUs) to share memory capacity and data, increasing efficiency.
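Pooling lets capacity move between hosts instead of sitting stranded in one server. The toy model below tracks one shared pool from which hosts borrow and return capacity. The class, host names, and sizes are hypothetical, and in real systems pooling is handled by CXL hardware and system software, not by application code like this.

```python
class MemoryPool:
    """Toy model of a shared memory pool that several hosts draw capacity from."""

    def __init__(self, total_gb: int) -> None:
        self.total_gb = total_gb
        self.allocations: dict[str, int] = {}

    @property
    def free_gb(self) -> int:
        return self.total_gb - sum(self.allocations.values())

    def allocate(self, host: str, size_gb: int) -> bool:
        """Grant capacity to a host if the pool has enough free space."""
        if size_gb <= 0 or size_gb > self.free_gb:
            return False
        self.allocations[host] = self.allocations.get(host, 0) + size_gb
        return True

    def release(self, host: str, size_gb: int) -> None:
        """Return capacity from a host to the shared pool."""
        held = self.allocations.get(host, 0)
        if size_gb > held:
            raise ValueError(f"{host} holds only {held} GB")
        self.allocations[host] = held - size_gb

# Hypothetical 1 TB pool shared by two GPU servers running LLM inference.
pool = MemoryPool(total_gb=1024)
pool.allocate("server_a", 768)          # server_a takes most of the pool
print(pool.allocate("server_b", 512))   # False: only 256 GB remain
pool.release("server_a", 512)           # server_a hands capacity back
print(pool.allocate("server_b", 512))   # True: the freed capacity is reused
```

Without pooling, server_b would need its own 512 GB even while server_a's memory sat idle; with a shared pool, the same capacity serves both at different times.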

SK Hynix also demonstrated OASIS (Object-based Analytics Storage for Intelligent SQL Query Offloading), a next-generation storage system based on data-aware CSD¹¹. Applied to an HPC application developed by Los Alamos National Laboratory¹², the system significantly improved data analysis performance. The Optimizer Offloading SSD, which maximizes GPU efficiency by performing optimizer¹³ computations directly in storage during AI training, also attracted attention.

¹¹Data-aware computational storage drive (data-aware CSD): A storage device that can recognize, analyze, and process data on its own.

¹²Los Alamos National Laboratory (LANL): A national research and development center under the U.S. Department of Energy that conducts research in fields including national security, nuclear fusion, and space exploration. It is especially known for its scientific work on the Manhattan Project, the Allied effort that developed the world’s first nuclear weapons during World War II.

¹³Optimizer: An algorithm used in AI model training that improves performance by adjusting the model’s parameters to minimize the loss function, which measures how far the model’s output is from the target.
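To make the optimizer definition concrete, the sketch below uses Adam, a widely used optimizer. Adam keeps two running statistics for every parameter in addition to the parameter itself, which is one reason optimizer work is memory- and data-heavy at scale. The code minimizes a one-parameter toy loss. It illustrates what an optimizer step computes; the article does not say which optimizer the Optimizer Offloading SSD runs or how it splits the work between GPU and storage.

```python
import math

def adam_minimize(grad, x0: float, lr: float = 0.05, steps: int = 2000,
                  beta1: float = 0.9, beta2: float = 0.999, eps: float = 1e-8) -> float:
    """Minimize a 1-D function with Adam, given its gradient function."""
    x, m, v = x0, 0.0, 0.0                       # parameter plus two optimizer states
    for t in range(1, steps + 1):
        g = grad(x)
        m = beta1 * m + (1 - beta1) * g          # running mean of gradients
        v = beta2 * v + (1 - beta2) * g * g      # running mean of squared gradients
        m_hat = m / (1 - beta1 ** t)             # bias correction for zero initialization
        v_hat = v / (1 - beta2 ** t)
        x -= lr * m_hat / (math.sqrt(v_hat) + eps)
    return x

# Toy loss (x - 3)^2 with gradient 2(x - 3); its minimum is at x = 3.
result = adam_minimize(lambda x: 2 * (x - 3), x0=0.0)
print(f"x after Adam: {result:.3f}")
```

For a model with billions of parameters, the states `m` and `v` alone take twice the memory of the parameters, so moving this update closer to where the data is stored can free GPU memory and compute for the rest of training.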

A vision for next-generation storage: A revolution in data analytics efficiency

Soonyeal Yang, Technical Lead of Solution SW, giving a presentation

In a presentation session, SK Hynix outlined ways to improve data analysis efficiency and drive storage innovation in HPC environments. Soonyeal Yang, Technical Lead for Solution SW, gave a presentation titled “Proposal for OASIS: Interoperable standards-based computational storage systems to accelerate data analysis in HPC.”

Yang explained that I/O inefficiencies during HPC data analysis degrade overall system performance and raise costs. He emphasized that OASIS flexibly distributes this computational load and contributes significantly to system optimization.
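The benefit of offloading query work to storage can be illustrated by counting how much data crosses the I/O channel. In the sketch below, a host-side filter must read every row from the drive, while a filter pushed down into the (simulated) storage device returns only matching rows. The row layout, 64-byte row size, and predicate are hypothetical, and the code does not reflect OASIS’s actual interfaces.

```python
from dataclasses import dataclass
from typing import Callable

@dataclass
class Row:
    sensor_id: int
    temperature: float

ROW_BYTES = 64  # hypothetical on-disk size of one row

def host_side_filter(rows: list[Row], pred: Callable[[Row], bool]) -> tuple[list[Row], int]:
    """Ship every row to the host, then filter there."""
    transferred = len(rows) * ROW_BYTES
    return [r for r in rows if pred(r)], transferred

def pushed_down_filter(rows: list[Row], pred: Callable[[Row], bool]) -> tuple[list[Row], int]:
    """Filter inside the simulated storage device and ship only the matches."""
    matches = [r for r in rows if pred(r)]
    return matches, len(matches) * ROW_BYTES

def is_hot(row: Row) -> bool:
    return row.temperature > 65.0

# Hypothetical dataset: 10,000 readings, of which only a small share pass the filter.
rows = [Row(i % 100, 20.0 + (i % 50)) for i in range(10_000)]

host_rows, host_bytes = host_side_filter(rows, is_hot)
pushed_rows, pushed_bytes = pushed_down_filter(rows, is_hot)
assert host_rows == pushed_rows  # same query result either way
print(f"host-side: {host_bytes} bytes moved, pushed down: {pushed_bytes} bytes moved")
```

Both paths return the same 800 rows, but the host-side path moves 640,000 bytes over the I/O channel while the pushed-down path moves 51,200. The more selective the query, the larger the saving.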

At SC25, SK Hynix showcased both its future-oriented solutions and its vision for the coming AI infrastructure era. We will continue to respond proactively to a rapidly changing environment and work closely with customers around the world to become the leading full-stack AI memory creator in AI and HPC.