In the video, Mrwhosetheboss tested Grok (Grok 3), Gemini (2.5 Pro), ChatGPT (GPT-4o), and Perplexity (Sonar Pro). He said throughout the video that he was impressed by Grok's performance. Grok started off really well, slipped a bit in the middle, and came back to claim second place behind ChatGPT. To be fair, ChatGPT and Gemini boosted their scores thanks to a feature the others simply lack: video generation.

To begin, Mrwhosetheboss tested the models' real-world problem-solving capabilities by giving each one this prompt: I drive a Honda Civic 2017. How many Aero Light 29″ Hard Shell (79x58x31cm) suitcases can fit in the boot? Grok's answer was the most straightforward, as it correctly answered “2”. ChatGPT and Gemini said that 3 could theoretically fit, but realistically 2.

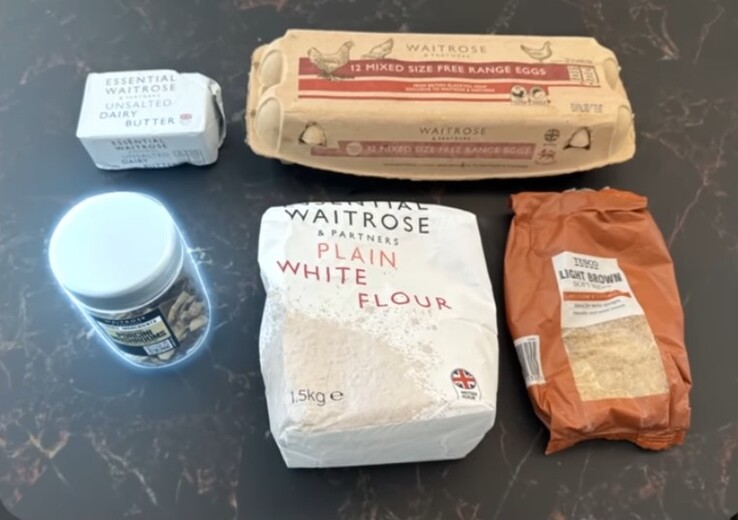

For the next question, he didn't go easy on the chatbots: he asked for advice on making a cake. Alongside the query, he uploaded an image showing five items. One of them, a jar of dried porcini mushrooms, is not used for making cakes, and the chatbots fell for the trap. ChatGPT identified it as a jar of ground mixed spice, Gemini as a jar of crispy fried onions, and Perplexity called it instant coffee, while Grok correctly identified it as a jar of dried mushrooms from Waitrose. Here's the image he uploaded:

From there, he tested them on mathematics, product recommendations, accounting, language translation, logical reasoning, and more. One thing was universal: hallucinations. Each model exhibited some degree of hallucination at some point in the video, talking confidently about things that didn't exist. Here's how each AI was ultimately ranked:

- ChatGPT (29 points)

- Grok (24 points)

- Gemini (22 points)

- Perplexity (19 points)

Artificial intelligence has helped ease the burden of most tasks, especially since the arrival of LLMs. The book Artificial Intelligence ($19.88 on Amazon) is one of the books that aim to help people use AI.

I've always been fascinated by technology and digital devices, and I've been hooked on them ever since. I have always marveled at the complexity of even the simplest digital devices and systems around us. I've been writing and publishing articles online for about six years now, and exactly a year ago I got lost in the wonders of the smartphones and laptops we use every day. I became passionate about learning about new devices and the technologies that come with them. At some point I asked myself, “Why not write tech articles?” Needless to say, I followed up on the idea. I derive endless joy from studying and discovering new information. I think there is so much to learn and such a short life to live. I am a “bookworm” when it comes to the Internet and digital devices. When I'm not writing, you will still find me on my devices, exploring and admiring the beauty of nature and creatures. I am a fast learner who adapts quickly to change and always looks forward to new adventures.