Generative AI has become nearly universal among UK students. However, new research reveals a wide gap between how students use AI and how well universities support them.

In just three years, generative AI has gone from novelty to standard practice in UK universities. According to the Higher Education Policy Institute’s (HEPI) 2026 Student Generative AI Survey, 95% of full-time undergraduate students now use at least one form of AI.

In 2024, that percentage was 66%. The survey is based on responses from 1,054 students surveyed in December 2025. The question is no longer whether students are using AI, the authors write, but how well they are using it.

AI-generated text in exams quadruples as cheating concerns grow

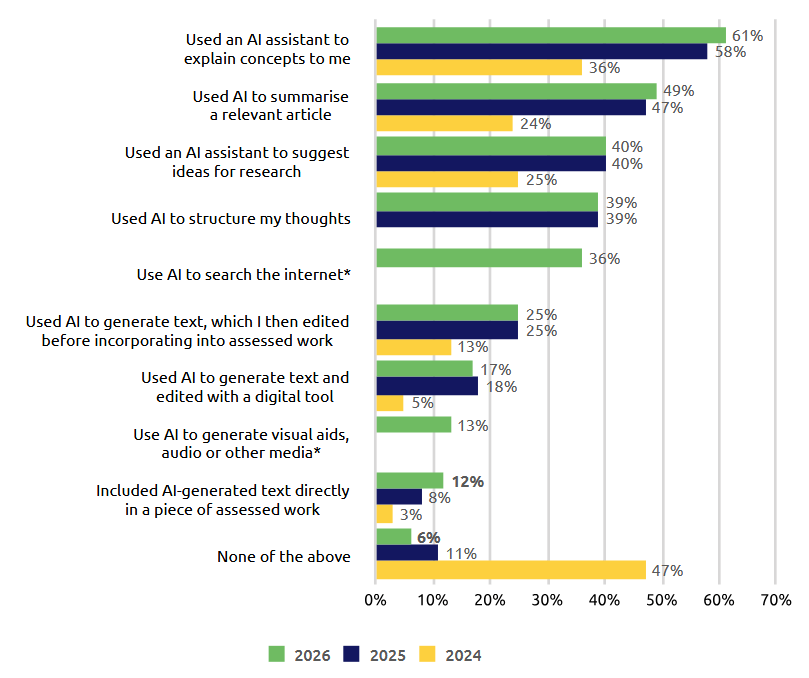

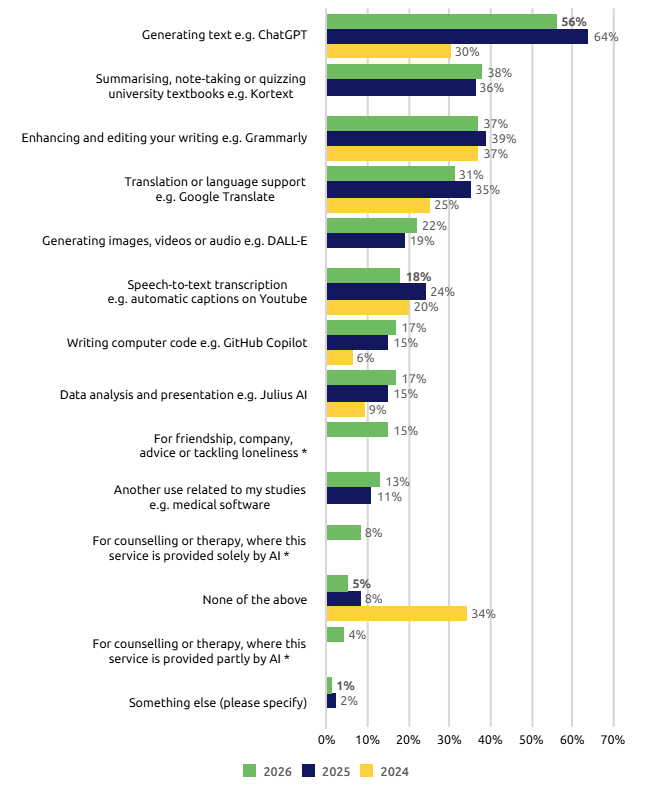

The majority of students use generative AI for assessed tasks such as explaining concepts, summarizing material, and structuring ideas. About a third also use AI as a search engine. The proportion of students inserting AI-generated text directly into assessed work has quadrupled since 2024. At the same time, use of popular tools like ChatGPT has decreased slightly, which the authors attribute to the proliferation of more specialized tools.

Nearly two-thirds of students said the exam format had changed significantly. In the free-text responses, some students expressed fear of being accused of wrongdoing. One student wrote, “Even though I have never written an assignment using AI, I am always worried that my work will be detected by AI.”

Students are split between deeper learning and intellectual dependence

The survey revealed a deeply divided student population. Just under half say AI has improved their learning experience, but some are concerned about fairness, loss of skills and what it means for the job market. Some feel that creative subjects are being neglected.

Two quotes from the study capture this disagreement. One student said AI “saves me hours of tedious work” and allows them to “focus on critical analysis and deeper understanding.” Another said bluntly: “I don’t use my brain at all.”

In terms of sources, students are divided into three roughly equal groups. One group relies on traditional sources, one on AI, and one on a combination of both. Nearly 1 in 10 students rarely consult traditional sources of information anymore.

According to the report, around 15% of students use AI for companionship, advice or to combat loneliness. Some are turning to purely AI-based therapy services. Overall, 4 in 10 students say AI has an impact on their feelings of loneliness, with positive and negative impacts roughly balanced. “Because it’s like having friends nearby,” one student wrote. Another: “Feel isolated.”

Most students think AI skills are essential, but fewer than half feel supported

More than two-thirds of students think AI skills are essential, but fewer than half feel supported by their instructors. Only about a third say their university actively encourages the use of AI.

The availability of AI tools has doubled since 2024, and significantly fewer universities now ban AI compared to the previous year. Russell Group universities, which lagged behind in 2025, are now the most likely to encourage students to use AI.

However, inequalities persist across subjects, backgrounds, and genders. Humanities students are very skeptical and feel particularly unsupported. Students from wealthier families use AI more frequently, while male students tend to have more prior experience. One-third of students enter university with no experience with AI. Environmental concerns also come into play: almost a quarter say the environmental impact of AI puts them off using it.

Medical students who used AI without supervision had the worst performance but were the most confident

A case study from Queen Mary University of London found that medical students who used only AI without human feedback during clinical training performed the worst, but were the most confident in their abilities. Professor Rakesh Patel likens this to giving a student a sports car before they have learned to drive. As a counterexample, the report highlights Aston University, which has made AI training mandatory across all programs and provided AI tools to all staff as early as 2023.

HEPI’s report recommends introducing AI to first-year students in a structured way, creating clear exam guidelines that include both non-AI and AI-supported formats, providing tools to all students, and conducting targeted research into how AI impacts loneliness and mental health.

The debate over AI in education has intensified in recent months. An Anthropic study conducted last year showed that in nearly half of the conversations analyzed with the AI assistant Claude, students delegated higher-order thinking, such as analysis and creation, to the AI.

At the same time, AI companies are expanding into higher education. Anthropic launched “Claude for Education” with a dedicated learning mode, and OpenAI has offered “ChatGPT Edu” since 2024. Both aim to engage users early and retain them as they enter the workforce.

AI pioneer Andrej Karpathy recently argued that schools need a fundamental rethink. In his view, they should assume that all work done outside the classroom was created with AI, and exams should move entirely to in-person settings.
