So far, even AI companies have struggled to develop tools that can reliably detect when a piece of writing was generated using a large language model. Now, a group of researchers has established a new method for estimating LLM usage across a large body of scientific writing by measuring which “excess words” began to appear much more frequently in the LLM era (i.e., 2023 and 2024). According to the researchers, the results “suggest that at least 10% of abstracts in 2024 were processed with LLMs.”

In a preprint paper posted earlier this month, four researchers from Germany's University of Tübingen and Northwestern University said they were inspired by a study that measured the impact of the COVID-19 pandemic by examining excess deaths compared to the recent past. The researchers similarly looked at “excess word usage” after LLM writing tools became widely available in late 2022 and found that the advent of LLMs led to a sharp increase in the frequency of certain style words, a shift they describe as unprecedented in both quality and quantity.

Digging deeper

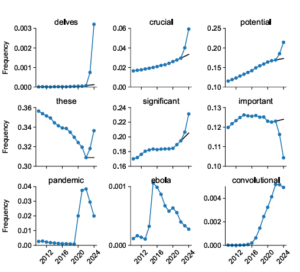

To measure this vocabulary change, the researchers analyzed 14 million article abstracts published on PubMed between 2010 and 2024, tracking the relative frequency of each word in each year. They then compared the expected frequency of those words (based on pre-2023 trend lines) with their actual frequency in abstracts from 2023 and 2024, when LLMs were in widespread use.

The results show that words that were extremely rare in these scientific abstracts before 2023 suddenly spiked in popularity after the introduction of LLMs. For example, the word “delves” appeared 25 times more often in 2024 papers than would be expected based on pre-LLM trends, and the use of words like “showcasing” and “underscores” increased nine-fold. Other, previously common words also became noticeably more common in post-LLM abstracts, with the frequency of “potential” increasing by 4.1 percentage points, “findings” by 2.7 percentage points, and “crucial” by 2.6 percentage points.
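The comparison described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the researchers' actual procedure: it fits a plain least-squares line to a word's pre-2023 yearly frequencies, extrapolates it to a later year, and reports both the percentage-point gap and the ratio between observed and expected usage. The function names, the choice of baseline years, and the toy frequencies are all invented for the example.

```python
from typing import Dict, Tuple


def linear_trend(freq_by_year: Dict[int, float]) -> Tuple[float, float]:
    """Fit an ordinary least-squares line through (year, frequency) points."""
    years = list(freq_by_year)
    n = len(years)
    mean_x = sum(years) / n
    mean_y = sum(freq_by_year[y] for y in years) / n
    sxx = sum((y - mean_x) ** 2 for y in years)
    sxy = sum((y - mean_x) * (freq_by_year[y] - mean_y) for y in years)
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


def excess_usage(
    freq_by_year: Dict[int, float],
    target_year: int,
    cutoff_year: int = 2023,  # assumption: years before this count as "pre-LLM"
) -> Tuple[float, float, float]:
    """Return (expected, gap, ratio) for a word in target_year.

    expected: frequency extrapolated from the pre-cutoff trend line
    gap:      observed minus expected (a difference in frequency)
    ratio:    observed divided by expected
    """
    baseline = {y: f for y, f in freq_by_year.items() if y < cutoff_year}
    slope, intercept = linear_trend(baseline)
    expected = max(slope * target_year + intercept, 0.0)  # frequencies cannot be negative
    observed = freq_by_year[target_year]
    gap = observed - expected
    ratio = observed / expected if expected > 0 else float("inf")
    return expected, gap, ratio


# Toy data (invented): share of abstracts containing a rare word, by year.
rare_word = {2018: 0.0004, 2019: 0.0004, 2020: 0.0004,
             2021: 0.0004, 2022: 0.0004, 2023: 0.004, 2024: 0.01}

expected, gap, ratio = excess_usage(rare_word, 2024)
print(f"expected={expected:.4f} gap={gap:.4f} ratio={ratio:.1f}")
```

The two outputs correspond to the two kinds of figures quoted above: the ratio suits words that were rare to begin with (as with “delves”), while the gap suits words that were already common (as with “potential”).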

Of course, these changes in word usage can occur independently of LLM use; the natural evolution of language means that words go in and out of fashion. But the researchers found that in the pre-LLM era, such large and sudden year-over-year increases were only seen for words associated with major global health events, such as “Ebola” in 2015, “Zika” in 2017, and words like “coronavirus,” “lockdown,” and “pandemic” in the 2020-2022 period.

In the post-LLM period, however, the researchers found a sudden and significant increase in the use of hundreds of words that had no common link to world events. Indeed, while the words that became over-represented during the COVID pandemic were overwhelmingly nouns, the words that saw a sudden increase in frequency in the post-LLM period were overwhelmingly “style words” such as verbs, adjectives, and adverbs (just to name a few: “across, additionally, comprehensive, crucial, enhancing, exhibited, insights, notably, particularly, within”).

This is not an entirely new finding; for example, the increasing prevalence of “delve” in scientific papers has been widely noted recently. However, previous studies have typically relied on comparisons to “ground truth” human writing samples, or lists of pre-defined LLM markers taken from outside the study. Here, the pre-2023 abstract set serves as its own effective control group, showing how lexical choices have changed overall in the post-LLM era.

Complex interactions

Armed with hundreds of these so-called “marker words” that became significantly more prevalent in the post-LLM era, it can be easy to spot the telltale signs of LLM use. Take this example abstract, in which the researchers highlighted the marker words: “A comprehensive grasp of the complex interactions between […] and […] is extremely important for effective treatment strategies.”

After conducting statistical measurements of the occurrence of marker words in individual papers, the researchers estimate that at least 10 percent of papers in the PubMed corpus published after 2022 were written with at least some LLM assistance. The number could be even higher, the researchers say, because their set may be missing LLM-assisted abstracts that do not contain any of the marker words they identified.

The measured percentages can vary widely across different subsets of papers. The researchers found that papers written in countries such as China, South Korea, and Taiwan used LLM marker words 15 percent of the time, for instance, suggesting that LLMs may help non-native speakers edit English text, which could justify their widespread use. On the other hand, the researchers suggest that native English speakers may simply be better at noticing and actively removing unnatural style words from LLM output, which would hide their LLM use from this kind of analysis.

Detecting the use of LLMs is important, the researchers note, because “LLMs are notorious for fabricating references, providing inaccurate summaries, and making false claims that sound authoritative and persuasive.” But as knowledge of LLMs' distinctive marker words begins to spread, human editors may get better at removing them from generated texts before they are shared with the world.

Maybe future large language models will be able to perform this kind of frequency analysis themselves, lowering the weight of marker words to make their output seem more human. Soon, we may need to call in a few Blade Runners to find the generative AI text hiding among us.