Top Deep Learning Algorithms Beginners Need to Know in 2023: Unlock Potential for AI Tasks

Deep learning algorithms have emerged as a powerful force in artificial intelligence, driving significant advances in fields as diverse as computer vision, natural language processing, and robotics. These algorithms are loosely inspired by the way the human brain processes information, learning and making predictions from vast amounts of data.

The most effective deep learning algorithms include Convolutional Neural Networks (CNNs) for image processing, Recurrent Neural Networks (RNNs) for sequential data analysis, and Generative Adversarial Networks (GANs) for creating realistic synthetic data, among others. These algorithms have revolutionized numerous industries, from healthcare and finance to self-driving cars and voice assistants. Deep learning algorithms excel at automatically learning from large datasets, revealing complex patterns that are difficult to capture with traditional machine learning algorithms. Here are the top 10 deep learning algorithms for beginners:

1. Artificial Neural Network (ANN): ANNs are the foundation of deep learning. They are made up of interconnected nodes or “neurons” that loosely mimic the structure of the human brain. ANNs are used for a variety of tasks such as image and speech recognition, natural language processing, and generative modeling.
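
To make the idea of interconnected neurons concrete, here is a minimal sketch of a forward pass through a tiny network in plain Python. The weights `W1`, `b1`, `W2`, `b2` and the helper names are hypothetical, hand-picked values for illustration; a real network would learn them from data.

```python
import math

def relu(x):
    return max(0.0, x)

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

def dense(inputs, weights, biases, activation):
    """One fully connected layer: each neuron computes activation(w . x + b)."""
    return [
        activation(sum(w * x for w, x in zip(neuron_weights, inputs)) + b)
        for neuron_weights, b in zip(weights, biases)
    ]

# Hypothetical hand-picked parameters: 2 inputs -> 2 hidden neurons -> 1 output.
W1, b1 = [[1.0, -1.0], [0.5, 0.5]], [0.0, 0.0]
W2, b2 = [[1.0, 1.0]], [0.0]

def forward(x):
    hidden = dense(x, W1, b1, relu)           # hidden layer
    return dense(hidden, W2, b2, sigmoid)[0]  # single output squashed into (0, 1)

prediction = forward([1.0, 1.0])
```

Each layer is just a weighted sum followed by a nonlinearity; stacking more `dense` calls gives a deeper network.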

2. Convolutional Neural Network (CNN): CNNs are specifically designed for image processing and computer vision tasks. They use convolutional layers to automatically extract relevant features from images and are widely used in applications such as object detection, face recognition, and autonomous driving.
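
As a rough illustration of what a convolutional layer computes, the sketch below slides a small kernel over a toy image and records the response at each position. The image, the `edge_kernel` values, and the function name `conv2d` are assumptions made for this example; in a real CNN the kernel values are learned. Like most deep learning libraries, the sketch does not flip the kernel, so strictly speaking it is a cross-correlation.

```python
def conv2d(image, kernel):
    """Slide the kernel over the image (no padding, stride 1)."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(
                image[i + di][j + dj] * kernel[di][dj]
                for di in range(kh)
                for dj in range(kw)
            )
            for j in range(out_w)
        ]
        for i in range(out_h)
    ]

# Toy image: dark on the left (0), bright on the right (1).
image = [
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
]
# Hypothetical kernel that responds where brightness increases left to right.
edge_kernel = [[-1, 1]]

feature_map = conv2d(image, edge_kernel)
```

The feature map is nonzero only in the column where the dark-to-bright edge sits, which is the sense in which a convolutional filter "extracts a feature".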

3. Recurrent Neural Network (RNN): RNNs are designed to process sequential data such as time series and natural language. Their memory component (a hidden state carried from one step to the next) allows them to retain information about previous inputs, making them suitable for tasks such as language translation, sentiment analysis, and speech recognition.
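
The memory of an RNN can be sketched with a single scalar hidden state that is updated at every step. The weights `w_x`, `w_h`, and `b` below are hypothetical fixed values chosen for illustration, not trained parameters.

```python
import math

def rnn_forward(sequence, w_x=0.5, w_h=0.8, b=0.0):
    """Run a one-unit RNN over a sequence and return the hidden state at each step."""
    h = 0.0  # initial hidden state: no memory yet
    states = []
    for x in sequence:
        # The new state mixes the current input with the previous state.
        h = math.tanh(w_x * x + w_h * h + b)
        states.append(h)
    return states

# Only the first input is nonzero, yet later states are still affected by it.
states = rnn_forward([1.0, 0.0, 0.0])
```

The later hidden states stay positive even though their inputs are zero, because each state carries a fading trace of the first input.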

4. Long Short-Term Memory (LSTM): The LSTM is an extension of the RNN that addresses the “vanishing gradient” problem that occurs when training RNNs on long sequences. LSTMs use a gating mechanism to selectively remember or forget information, making them effective in tasks involving long-term dependencies, such as speech recognition and language modeling.
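
The gating mechanism can be sketched as one step of a scalar LSTM cell. This follows the standard forget/input/output gate formulation; the parameter layout (a dictionary `p` mapping gate names to `(w_x, w_h, bias)`) and the helper `params` are hypothetical choices for this example.

```python
import math

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

def lstm_step(x, h_prev, c_prev, p):
    """One time step of a scalar LSTM cell; p maps gate name -> (w_x, w_h, bias)."""
    def pre(gate):
        w_x, w_h, b = p[gate]
        return w_x * x + w_h * h_prev + b

    f = sigmoid(pre("forget"))        # how much of the old cell state to keep
    i = sigmoid(pre("input"))         # how much new information to write
    o = sigmoid(pre("output"))        # how much of the cell state to expose
    g = math.tanh(pre("candidate"))   # candidate value to write
    c = f * c_prev + i * g            # updated cell state (long-term memory)
    h = o * math.tanh(c)              # updated hidden state (output)
    return h, c

def params(forget_bias, input_bias):
    # Hypothetical parameters: gates driven only by their biases.
    return {
        "forget": (0.0, 0.0, forget_bias),
        "input": (0.0, 0.0, input_bias),
        "output": (0.0, 0.0, 0.0),
        "candidate": (1.0, 0.0, 0.0),
    }

# Forget gate open, input gate closed: the old cell state is remembered.
_, c_keep = lstm_step(x=5.0, h_prev=0.0, c_prev=1.0, p=params(10.0, -10.0))
# Forget gate closed, input gate closed: the old cell state is forgotten.
_, c_drop = lstm_step(x=5.0, h_prev=0.0, c_prev=1.0, p=params(-10.0, -10.0))
```

With the forget gate open, `c_keep` stays close to the previous cell state of 1.0; with it closed, `c_drop` falls close to 0.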

5. Generative Adversarial Network (GAN): A GAN consists of two neural networks that compete against each other: a generator and a discriminator. The generator learns to produce realistic data such as images and text, while the discriminator learns to distinguish between real and generated data. GANs have been used to create realistic photos, synthesize videos, and generate text.
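
The competition between the two networks shows up in their loss functions. The sketch below uses the common binary cross-entropy formulation, with the non-saturating generator loss as one widely used variant; the function names are hypothetical, and `d_real` and `d_fake` stand for the discriminator's predicted probability that a real or a generated sample is real.

```python
import math

def discriminator_loss(d_real, d_fake):
    """Low when real samples score near 1 and generated samples score near 0."""
    return -(math.log(d_real) + math.log(1.0 - d_fake))

def generator_loss(d_fake):
    """Low when the discriminator is fooled into scoring generated samples near 1."""
    return -math.log(d_fake)

# A confident, correct discriminator has a lower loss than an undecided one...
confident = discriminator_loss(d_real=0.9, d_fake=0.1)
undecided = discriminator_loss(d_real=0.5, d_fake=0.5)
# ...while the generator's loss drops as its samples fool the discriminator.
fooling = generator_loss(d_fake=0.9)
caught = generator_loss(d_fake=0.1)
```

The same quantity, `d_fake`, pushes the two losses in opposite directions, which is what makes the training adversarial.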

6. Autoencoder: An autoencoder is a neural network trained to learn efficient representations of input data. It consists of an encoder network that compresses the data into a low-dimensional latent space and a decoder network that reconstructs the original input from the latent representation. Autoencoders are used for image denoising, dimensionality reduction, and anomaly detection.
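
A minimal sketch of the encoder/decoder idea, assuming 2-D data that lies on the line `x2 = 2 * x1`. The encoder and decoder weights here are hand-picked for that assumption rather than trained; a real autoencoder would learn them by minimizing the reconstruction error.

```python
def encode(x):
    """Compress a 2-D point into a 1-D latent value (hand-picked linear encoder)."""
    return (x[0] + 2.0 * x[1]) / 5.0

def decode(z):
    """Reconstruct a 2-D point from the latent value (hand-picked linear decoder)."""
    return [z, 2.0 * z]

def reconstruction_error(x):
    x_hat = decode(encode(x))
    return sum((a - b) ** 2 for a, b in zip(x, x_hat))

# A point that follows the pattern is reconstructed almost perfectly...
typical = reconstruction_error([1.5, 3.0])
# ...while a point that breaks the pattern is reconstructed poorly.
unusual = reconstruction_error([1.0, 0.0])
```

Comparing the two errors is the basic idea behind autoencoder-based anomaly detection: inputs the model cannot reconstruct well are flagged as unusual.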

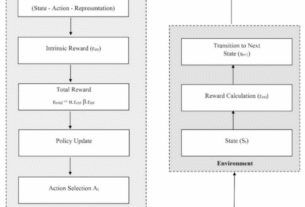

7. Deep reinforcement learning: Reinforcement learning involves training agents to make sequential decisions in an environment so as to maximize their cumulative reward. Deep reinforcement learning is a combination of deep learning techniques and reinforcement learning algorithms. It has been successfully applied to difficult tasks such as complex games (for example, AlphaGo), robot control, and autonomous navigation.

8. Deep Q Network (DQN): DQN is a deep reinforcement learning algorithm that uses a deep neural network to approximate the Q-value, which represents the expected future reward for taking a particular action in a given state. DQN has had remarkable success in playing video games and has been extended to solve more complex problems.
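
The quantity a DQN is trained toward can be sketched in a few lines. Here plain lists of numbers stand in for the network's Q-value outputs, and the function names are hypothetical; practical DQN implementations also rely on components omitted here, such as experience replay and a separate target network.

```python
def td_target(reward, next_q_values, gamma=0.99, done=False):
    """Target for Q(s, a): immediate reward plus discounted best future value."""
    if done:
        return reward  # no future reward after a terminal state
    return reward + gamma * max(next_q_values)

def greedy_action(q_values):
    """Pick the action with the highest estimated Q-value."""
    return max(range(len(q_values)), key=lambda a: q_values[a])

def squared_td_error(predicted_q, target):
    """The loss a DQN minimizes for a single transition."""
    return (predicted_q - target) ** 2

# Hypothetical Q-values that a network might output for the next state.
next_q = [0.5, 2.0]
target = td_target(reward=1.0, next_q_values=next_q, gamma=0.9)
loss = squared_td_error(predicted_q=2.0, target=target)
```

Training nudges the network's prediction for the chosen action toward `target`, so that its Q-values gradually become consistent with the rewards it observes.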

9. Transfer Learning: Transfer learning allows knowledge learned from one task to be transferred to another task. Deep learning models trained on large datasets can be fine-tuned or used as feature extractors for new tasks with limited data. Transfer learning has proven effective in computer vision, natural language processing, and other fields, reducing the need for large amounts of training data.

10. Self-supervised learning: Self-supervised learning is a technique in which a model learns to predict certain aspects of input data without explicit human annotation. Models exploit inherent structures or relationships in the data to discover useful representations. Self-supervised learning has attracted attention due to its ability to learn from large unlabeled datasets and has shown promising results in various fields.
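
One common way to get training signals without human labels is to hide part of the input and ask the model to predict it. The sketch below builds such (input, target) pairs from an unlabeled token sequence; the `[MASK]` placeholder and the function name are assumptions for this example, and no model is trained here.

```python
MASK = "[MASK]"

def make_masked_examples(tokens):
    """Turn one unlabeled sequence into (masked input, target token) pairs."""
    examples = []
    for position, target in enumerate(tokens):
        masked = list(tokens)      # copy so the original sequence is untouched
        masked[position] = MASK    # hide one token
        examples.append((masked, target))
    return examples

examples = make_masked_examples(["deep", "learning", "rocks"])
```

Every pair comes with a "label" for free, because the target is simply the token that was hidden from the input.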

These 10 deep learning algorithms provide a solid foundation for beginners in 2023. Explore more advanced techniques and architectures as you progress on your deep learning journey. With robust hardware and extensive datasets available, deep learning continues to advance, enabling breakthroughs in a variety of fields and driving the development of intelligent systems. In this era of rapidly advancing technology, understanding and harnessing the power of deep learning algorithms is essential for those looking to unleash the potential of artificial intelligence and drive innovation in today’s data-driven world. By stacking multiple layers of artificial neurons, deep learning models can extract hierarchical representations of the input data and learn complex patterns to make accurate predictions.