Ripples indicate that something happened, but we don’t know what exactly happened.

This is the central problem behind the difficult kinds of equations that scientists use when trying to work backwards from something they can measure to a cause. In weather systems, biology, and materials science, researchers often understand visible outcomes such as changing patterns, temperature fields, and cellular structures, but not the hidden laws and forces that produced them.

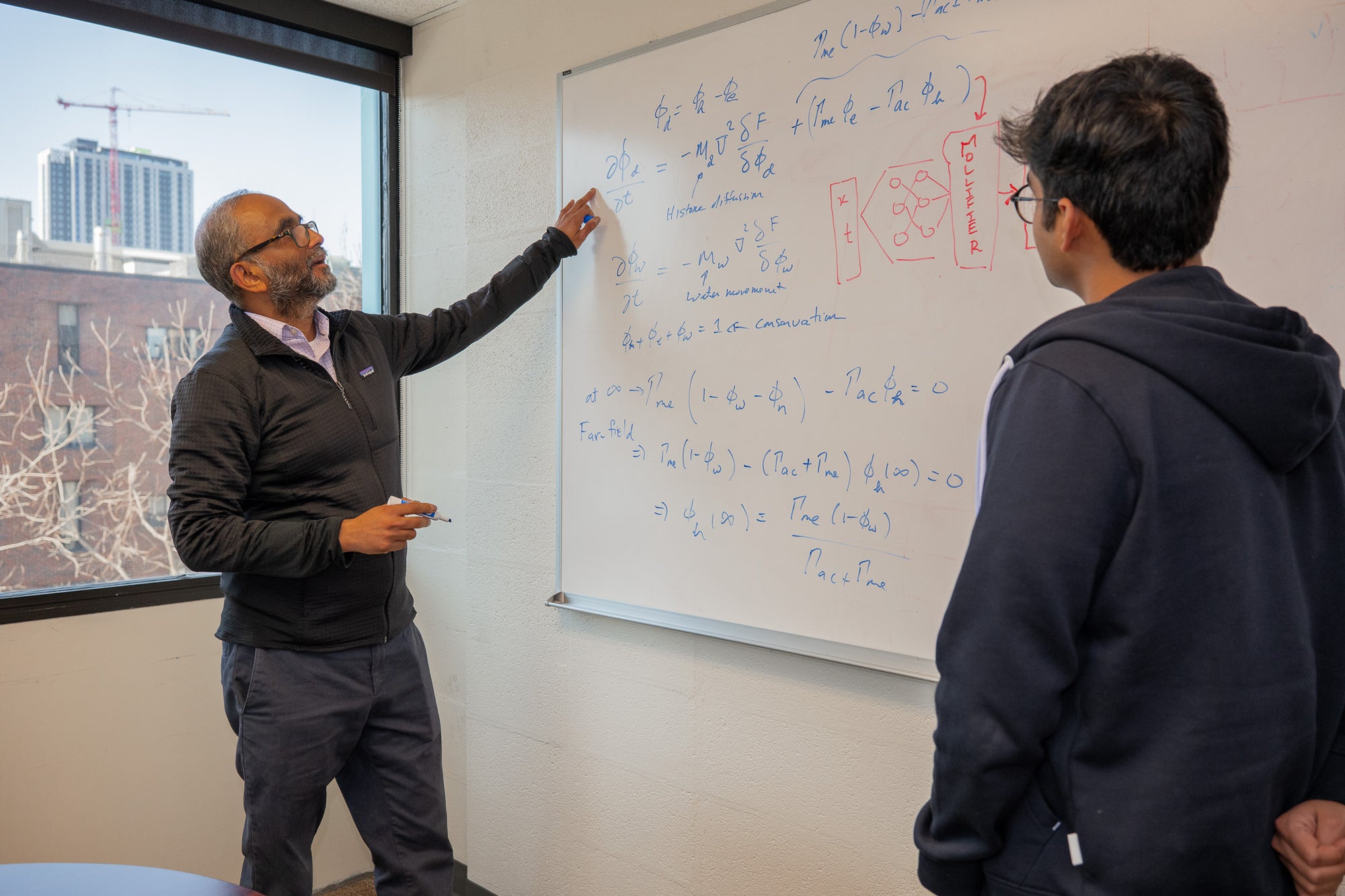

Engineers at the University of Pennsylvania say they have found a better way to address this problem using artificial intelligence. Their technique, called “Mollifier Layers,” aims to help AI systems solve inverse partial differential equations, or inverse PDEs, especially when the data is noisy and the mathematics is unstable.

The research, published in Transactions on Machine Learning Research and scheduled to be presented at NeurIPS 2026, takes a different path from much of modern AI. Instead of focusing on bigger models and more computing power, the Penn team turned to mathematical ideas that have been around for decades and reworked them for physics-based machine learning.

“Solving an inverse problem is like looking at the ripples in a pond and working backwards to figure out where the pebble landed,” said Vivek Shenoy, the Eduardo D. Glandt President’s Distinguished Professor in Materials Science and Engineering and senior author of the study. “The effects are clearly visible, but the real challenge is to deduce the hidden causes.”

Where the usual approach starts to break down

Differential equations are one of science’s fundamental tools for describing change. They can track how heat moves through matter, how populations grow, and how chemical processes unfold. Partial differential equations go further and describe how these changes occur in both space and time.

Inverse partial differential equations turn ordinary questions on their head. Rather than starting from known rules and predicting what will happen, we start from observations and ask what hidden parameters and dynamics must have existed in the first place.

It’s a much more difficult task.
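A toy example makes the contrast concrete. The sketch below is not from the study: it assumes a 1D heat equation u_t = D u_xx with a known analytic solution, and every name in it (`D_true`, `estimate_diffusivity`) is illustrative. It recovers D by least squares from finite-difference derivatives, first from clean data and then from the same data with a small amount of added noise.

```python
import numpy as np

def estimate_diffusivity(u, dx, dt):
    """Least-squares fit of D in u_t = D * u_xx from a sampled field u[x, t]."""
    u_t = np.gradient(u, dt, axis=1)
    u_xx = np.gradient(np.gradient(u, dx, axis=0), dx, axis=0)
    core = (slice(2, -2), slice(2, -2))  # drop edge points that use one-sided stencils
    return np.sum(u_t[core] * u_xx[core]) / np.sum(u_xx[core] ** 2)

# Forward problem: D is known, so the field follows directly.
D_true = 0.5  # assumed value, chosen only for this illustration
x = np.linspace(0.0, np.pi, 201)
t = np.linspace(0.0, 1.0, 201)
X, T = np.meshgrid(x, t, indexing="ij")
u_clean = np.exp(-D_true * T) * np.sin(X)  # exact solution of u_t = D u_xx

# Inverse problem: only the field is observed, and D must be inferred.
rng = np.random.default_rng(0)
u_noisy = u_clean + 1e-3 * rng.normal(size=u_clean.shape)

dx, dt = x[1] - x[0], t[1] - t[0]
D_clean = estimate_diffusivity(u_clean, dx, dt)
D_noisy = estimate_diffusivity(u_noisy, dx, dt)
print(f"clean data: D = {D_clean:.4f}")
print(f"noisy data: D = {D_noisy:.4f}")
```

With clean data the estimate lands very close to the true value. With noise at roughly 0.1% of the signal amplitude, the second derivative is dominated by noise and the estimate collapses toward zero. That sensitivity is the kind of instability that makes inverse problems hard.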

“For many years, we have used these equations to study how chromatin, the folded state of DNA in the nucleus, is organized in living cells,” Shenoy says. “But we kept running into the same problem. Although we could see the structure and model its formation, we couldn’t reliably infer the epigenetic processes that drive this system, the chemical changes that help control which genes are activated. The more we tried to optimize existing approaches, the more it became clear that the mathematics itself had to change.”

At the heart of the bottleneck is differentiation, the mathematics of measuring how something changes. AI systems that process inverse partial differential equations typically compute derivatives through recursive automatic differentiation. This is the process of repeatedly tracking how values change through a neural network.

That works up to a point.

If the equations contain high-order derivatives and the data is noisy, the process can become memory-intensive, slow, and unstable. The Penn researchers compared model behavior across several benchmark problems and found that distortion rises rapidly as the system becomes more complex. In one memory comparison using a PINN (physics-informed neural network), peak memory usage increased from 0.21 gigabytes to 2.70 gigabytes when both data loss and PDE loss were used in a fourth-order reaction-diffusion problem. Training time also grew as the network depth increased.

Speed isn’t the only issue. Accuracy also begins to wobble. The researchers reported that PINN recovered the Laplacian with a correlation to the ground truth of only 0.21 on a reaction-diffusion benchmark. This indicates that the derivative estimate has drifted severely.

A smoother way to measure change

The researchers ultimately determined that the main cause of the problem was not the neural network design itself.

“We initially thought this problem had to do with the architecture of neural networks,” said Ananyae Kumar Bartali, a graduate of Penn Engineering’s Master of Science in Scientific Computing program and a co-lead author of the study. “But after careful tuning of the network, we ultimately discovered that the bottleneck was the recursive autodifferentiation itself.”

Their solution makes use of mollifiers, a mathematical tool described by mathematician Kurt Otto Friedrichs in the 1940s. A mollifier smooths jagged or noisy functions before they are analyzed. Rather than obtaining unstable derivatives directly from the network output, the Penn system first inserts a mollifier layer that smooths the signal and then computes the derivatives through fixed convolution-based operations.

Simply put, this method separates the most difficult part of the differentiation work from the repeated gradient calculations throughout the network. The derivative is instead obtained from an analytical smoothing kernel.
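The underlying identity is that the derivative of a smoothed signal equals the signal convolved with the derivative of the smoothing kernel, so the noisy data itself is never differentiated. The NumPy sketch below illustrates that idea in one dimension. It is not the authors' implementation: the bump-function kernel, the kernel width `eps`, and all function names are assumptions chosen for illustration.

```python
import numpy as np

def bump(s):
    """Compactly supported bump profile exp(-1/(1-s^2)), zero for |s| >= 1."""
    out = np.zeros_like(s)
    inside = np.abs(s) < 1.0
    out[inside] = np.exp(-1.0 / (1.0 - s[inside] ** 2))
    return out

def bump_derivative(s):
    """Analytic derivative of bump(s) with respect to s."""
    out = np.zeros_like(s)
    inside = np.abs(s) < 1.0
    si = s[inside]
    out[inside] = np.exp(-1.0 / (1.0 - si**2)) * (-2.0 * si / (1.0 - si**2) ** 2)
    return out

def mollified_derivative(u, dx, eps):
    """Estimate du/dx by convolving u with the derivative of a width-eps kernel."""
    half = int(np.ceil(eps / dx))
    s = np.arange(-half, half + 1) * dx / eps
    norm = bump(s).sum() * dx                  # makes the smoothing kernel integrate to 1
    dkernel = bump_derivative(s) / (eps * norm)
    # mode="same" zero-pads, so values within eps of either end are unreliable.
    return np.convolve(u, dkernel, mode="same") * dx

rng = np.random.default_rng(0)
x = np.linspace(0.0, 2.0 * np.pi, 2001)
dx = x[1] - x[0]
noisy = np.sin(x) + 0.01 * rng.normal(size=x.size)  # true derivative is cos(x)

eps = 0.3  # assumed kernel width
naive = np.gradient(noisy, dx)                 # finite differences amplify the noise
smooth = mollified_derivative(noisy, dx, eps)

interior = (x > eps) & (x < 2.0 * np.pi - eps)
naive_err = np.max(np.abs(naive - np.cos(x))[interior])
smooth_err = np.max(np.abs(smooth - np.cos(x))[interior])
print(f"max error, finite differences: {naive_err:.3f}")
print(f"max error, mollified:          {smooth_err:.3f}")
```

Because the kernel and its derivative are fixed and known in closed form, the derivative estimate is a single convolution. In this toy setting it is far less sensitive to noise than differencing the raw samples.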

This change appears to do several things at once. It reduces memory strain, shortens training time, and makes derivative estimates more stable under noise. The authors describe the layer as lightweight and architecture-independent, meaning it can be added to a range of physics-informed machine learning models.

Vinayak Vinayak, a PhD candidate in materials science and engineering and co-lead author, succinctly stated the broader lesson: “Modern AI often advances by scaling up the amount of computation. But some scientific challenges require better math than just more computation.”

What happened in testing

To see if this approach was effective, the team tested it on three classes of problems: first-order 1D Langevin equations, second-order 2D heat equations, and fourth-order 2D reaction-diffusion systems.

The last of these is particularly demanding, as higher-order derivatives are often the most difficult for traditional methods.

Across these benchmarks, mollifier-based models typically performed better than standard models. For the first-order Langevin equation, the mollified PINN achieved a temporal correlation of 0.97 compared to 0.36 for the standard PINN. It also used less memory, 0.16 gigabytes vs. 0.21 gigabytes, and trained faster, 1,615 vs. 2,138 seconds.

On harder systems, the difference widened even further. For the second-order heat equation, the spatial correlation of the mollified PINN reached 0.99, while the standard PINN reached 0.21. Peak memory usage decreased from 1.20 gigabytes to 0.24 gigabytes. In the fourth-order reaction-diffusion benchmark, the mollified model reduced training time from 3,386 seconds to 335 seconds and peak memory from 2.75 gigabytes to 0.23 gigabytes. The average correlation of the estimated parameters increased from 0.44 to 0.99.

The authors say their system reduced memory usage and training time by about 6 to 10 times in their experiments.
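As a quick check, the improvement factors can be computed directly from the figures quoted above. The numbers below are the ones reported in this article. The dictionary layout and labels are only for illustration.

```python
# (standard PINN, PINN with the mollifier layer), as quoted in this article
reported = {
    "Langevin memory (GB)": (0.21, 0.16),
    "Langevin time (s)": (2138, 1615),
    "heat memory (GB)": (1.20, 0.24),
    "reaction-diffusion time (s)": (3386, 335),
    "reaction-diffusion memory (GB)": (2.75, 0.23),
}

# Ratio of the standard cost to the mollified cost for each reported pair.
ratios = {name: before / after for name, (before, after) in reported.items()}

for name, ratio in ratios.items():
    print(f"{name}: {ratio:.1f}x lower")
```

On these figures the first-order problem improves by a factor of roughly 1.3, while the higher-order benchmarks improve by about 5 to 12 times.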

These gains matter most when the hidden parameters vary over space or time, or when the signal contains noise. In these settings, the mollified model was better at tracking trends that the standard system often missed.

A closer look inside the cell nucleus

For Shenoy’s lab, the biological payoff lies in chromatin, the mixture of DNA and proteins that packages chromosomes within the nucleus.

Small chromatin domains, about 100 nanometers in size, help control access to genetic material. This is important because accessibility influences gene expression, and gene expression shapes cellular identity, function, aging, and disease.

“These domains are only 100 nanometers in size,” Shenoy says. “However, these domains play important roles in biology and health because accessibility determines gene expression, and gene expression governs cell identity, function, aging, and disease.”

The research team was already studying how epigenetic reactions and physical interactions organize chromatin structure. What they wanted was a more reliable way to infer the reaction rates behind those changes from what they could actually observe.

Doing so would let researchers move beyond snapshots of chromatin structure to models of how that structure changes over time. The work has also been extended to super-resolution microscopy, including STORM images of human cell nuclei, and the researchers say the mollifier layer allows them to infer important, spatially resolved biophysical parameters from noisy image-derived fields.

In synthetic reaction-diffusion tests related to DNA organization, mollified PINNs recovered spatially varying reaction rates with high accuracy and captured the Laplacian more faithfully than standard PINNs. The paper argues that this type of parameter extraction can link nanoscale chromatin remodeling to cell fate memory in gene regulation, cancer metastasis, development and disease.

“Tracking how these reaction rates change during aging, cancer, and development opens up new therapeutic possibilities. If reaction rates control chromatin organization and cell fate, then altering these rates could guide cells toward desired states,” Vinayak added.

Why the approach works

The appeal of inverse partial differential equations is that they are not limited to one field.

Scientists encounter them whenever they need to estimate hidden quantities, such as diffusivity, conductance, and reaction rates, from sparse or noisy measurements. This means that the Penn framework may be important in areas far beyond chromatin. The authors list materials science, fluid mechanics, genetics, and climate modeling as places where stable and efficient inference can be useful.

The authors also suggest that the same principles can be extended beyond inverse problems to forward models, operator learning, and neural ODE systems, all areas where accurate gradients are important.

Still, the work has its limits.

Performance depends on the choice of mollifier kernel, which must balance noise suppression against the risk of washing out sharp, high-frequency features. Current implementations also have weaknesses near boundaries and on anisotropic grids. The researchers say that future research should explore adaptive or learned kernels, boundary-aware formulations, and validation on adaptive meshes.
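The kernel trade-off can be seen in a small experiment. The sketch below is an illustration rather than anything from the paper: the bump-function kernel, the three widths, the peak shape, and the noise level are all assumptions. It smooths a narrow peak and a pure-noise signal separately, so the effect of kernel width on each is easy to read off.

```python
import numpy as np

def mollifier_kernel(dx, eps):
    """Discrete bump-function kernel of half-width eps, with weights summing to 1."""
    half = int(np.ceil(eps / dx))
    s = np.arange(-half, half + 1) * dx / eps
    k = np.zeros_like(s)
    inside = np.abs(s) < 1.0
    k[inside] = np.exp(-1.0 / (1.0 - s[inside] ** 2))
    return k / k.sum()

x = np.linspace(-1.0, 1.0, 2001)
dx = x[1] - x[0]
feature = np.exp(-((x / 0.02) ** 2))        # sharp peak of height 1 at x = 0
rng = np.random.default_rng(0)
noise = 0.1 * rng.normal(size=x.size)       # measurement noise, standard deviation 0.1

results = {}
for eps in (0.01, 0.05, 0.5):
    k = mollifier_kernel(dx, eps)
    peak = np.convolve(feature, k, mode="same").max()    # surviving peak height
    residual = np.convolve(noise, k, mode="same").std()  # surviving noise level
    results[eps] = (peak, residual)
    print(f"eps={eps}: peak height {peak:.2f}, residual noise {residual:.4f}")
```

A narrow kernel keeps the peak almost intact but leaves much of the noise, while a wide kernel removes most of the noise and flattens the peak along with it. Picking the width is therefore a tuning decision that depends on the scale of the features one wants to recover.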

These warnings are important because methods that look elegant in benchmarks do not necessarily transfer cleanly to complex configurations. Here, the researchers say, it’s clear that mathematical shortcuts can help, but they don’t eliminate the need for careful adjustments.

Practical implications of the research

This study shows a more efficient way for AI to extract hidden rules from difficult scientific data.

In practice, this could help researchers infer changes in chromatin reaction rates, recover spatially varying thermal properties in heat-transfer problems, or estimate unknown forcing terms in noisy dynamical systems. The method reduces memory usage and training time while improving stability for higher-order problems, potentially making some physics-based machine learning tasks more practical on real scientific datasets.

The broader value is not just speed. It is the possibility of converting observations into interpretable parameters with fewer failures when the data are noisy or the derivatives are difficult to calculate. “The ultimate goal is to take the observation of complex patterns and quantitatively reveal the rules that generate them. Understanding the rules that govern a system gives us the possibility to change it,” said Shenoy.